Summary:

Redo of https://github.com/pytorch/pytorch/issues/56373 out of stack.

---

To reviewers: **please be nitpicky**. I've read this so often that I probably missed some typos and inconsistencies.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57247

Reviewed By: albanD

Differential Revision: D28247402

Pulled By: mruberry

fbshipit-source-id: 71142678ee5c82cc8c0ecc1dad6a0b2b9236d3e6

Summary:

Pull Request resolved: https://github.com/pytorch/elastic/pull/148

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56811

Moves the Sphinx docs `*.rst` files from the torchelastic repository to torch. Note: this only moves the rst files; the next step is to link them to the main pytorch `index.rst` and write a new `examples.rst`.

Reviewed By: H-Huang

Differential Revision: D27974751

fbshipit-source-id: 8ff9f242aa32e0326c37da3916ea0633aa068fc5

Summary:

Fixes https://github.com/pytorch/pytorch/issues/54555

As discussed in https://github.com/pytorch/pytorch/issues/54555, the {h,v,d}split methods unexpectedly match the single int[] argument signature when they are expected to match the single int argument signature. The same unexpected behavior can occur in other functions/methods that accept both int[] and int? as single-argument signatures.

In this PR we solve this problem by giving higher priority to int/int? arguments over int[] while sorting signatures.

We also add the {h,v,d}split methods here, which is how we discovered this unexpected behavior.
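
The two signatures can be told apart with a small sketch. It assumes a PyTorch build that includes this change; the tensor shape and split arguments are chosen only for illustration.

```python
import torch

t = torch.arange(12.0).reshape(2, 6)

# A single int is interpreted as the number of equal sections.
by_sections = t.hsplit(3)

# A single list of ints is interpreted as the indices at which to split.
by_indices = t.hsplit([3])

section_shapes = [tuple(p.shape) for p in by_sections]
index_shapes = [tuple(p.shape) for p in by_indices]
print(section_shapes)
print(index_shapes)
```

If the int call were matched against the int[] signature, the first call would be treated as a split at index 3 rather than a split into three sections.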

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57346

Reviewed By: ezyang

Differential Revision: D28121234

Pulled By: iramazanli

fbshipit-source-id: 851cf40b370707be89298177b51ceb4527f4b2d6

Summary:

The new function has the following signature: `cholesky_ex(Tensor input, *, bool check_errors=False) -> (Tensor L, Tensor infos)`. When `check_errors=True`, an error is thrown if the decomposition fails; when `check_errors=False`, the user is responsible for checking whether the decomposition succeeded.

When `check_errors=False`, we don't have host-device memory transfers for checking the values of the `info` tensor.
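
A minimal usage sketch of the two modes follows. It assumes the function is exposed as `torch.linalg.cholesky_ex` and that a nonzero entry in the second output signals failure; the input matrix is an arbitrary non-positive-definite example.

```python
import torch

# A symmetric matrix that is not positive-definite (eigenvalues 3 and -1).
A = torch.tensor([[1.0, 2.0], [2.0, 1.0]])

# With check_errors=False no exception is raised; the caller inspects info.
L, info = torch.linalg.cholesky_ex(A, check_errors=False)
failed = bool(info.item() != 0)

# With check_errors=True the same input raises an error instead.
try:
    torch.linalg.cholesky_ex(A, check_errors=True)
    raised = False
except RuntimeError:
    raised = True

print(failed, raised)
```

Leaving the check to the caller lets a batch of decompositions run without synchronizing on `info` after every call.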

Rewrote the internal code for `torch.linalg.cholesky`. Added `cholesky_stub` dispatch. `linalg_cholesky` is implemented using calls to `linalg_cholesky_ex` now.

Resolves https://github.com/pytorch/pytorch/issues/57032.

Ref. https://github.com/pytorch/pytorch/issues/34272, https://github.com/pytorch/pytorch/issues/47608, https://github.com/pytorch/pytorch/issues/47953

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56724

Reviewed By: ngimel

Differential Revision: D27960176

Pulled By: mruberry

fbshipit-source-id: f05f3d5d9b4aa444e41c4eec48ad9a9b6fd5dfa5

Summary:

You can find the latest rendered version in the `python_doc_build` CI job below, in the artifact tab of that build on CircleCI.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55966

Reviewed By: H-Huang

Differential Revision: D28032446

Pulled By: albanD

fbshipit-source-id: 227ad37b03d39894d736c19cae3195b4d56fc62f

Summary:

This PR tries to make the docs of `torch.linalg` have/be:

- More uniform notation and structure for every function.

- More uniform use of back-quotes and the `:attr:` directive

- More readable for a non-specialised audience, through explanations of the form that factorisations take and of when it would be beneficial to use which arguments in some solvers.

- More connected among the different functions through the use of the `.. seealso::` directive.

- More information on when gradients explode, when a function silently returns a wrong result, and when things do not work in general.

I tried to follow the structure of "one short description and then the rest" to be able to format the docs like those of `torch.` or `torch.nn`. I did not do that yet, as I am waiting for the green light on this idea:

https://github.com/pytorch/pytorch/issues/54878#issuecomment-816636171

What this PR does not do:

- Clean the documentation of other functions that are not in the `linalg` module (although I started doing this for `torch.svd`, but then I realised that this PR would touch way too many functions).

Fixes https://github.com/pytorch/pytorch/issues/54878

cc mruberry IvanYashchuk

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56265

Reviewed By: H-Huang

Differential Revision: D27993986

Pulled By: mruberry

fbshipit-source-id: adde7b7383387e1213cc0a6644331f0632b7392d

Summary:

No outstanding issue; I can create one if needed.

I was looking for that resource and found that it had been moved without the documentation being updated.

Cheers

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56776

Reviewed By: heitorschueroff

Differential Revision: D27967020

Pulled By: ezyang

fbshipit-source-id: a5cd7d554da43a9c9e44966ccd0b0ad9eef2948c

Summary:

In the optimizer documentation, many of the learning rate scheduler [examples](https://pytorch.org/docs/stable/optim.html#how-to-adjust-learning-rate) follow a generic template. In this PR we provide a precise, simple use case to show how to use learning rate schedulers. Moreover, in a follow-up example we show how to chain two schedulers one after the other.
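
The following is a sketch of what chaining two schedulers can look like, not the exact example added to the docs. The parameter, learning rate, `gamma` values and milestone are arbitrary choices for illustration.

```python
import torch
from torch.optim import SGD
from torch.optim.lr_scheduler import ExponentialLR, MultiStepLR

params = [torch.nn.Parameter(torch.randn(2, 2))]
optimizer = SGD(params, lr=0.1)

# Each scheduler adjusts the learning rate left by the previous one.
scheduler1 = ExponentialLR(optimizer, gamma=0.9)
scheduler2 = MultiStepLR(optimizer, milestones=[2], gamma=0.1)

lrs = []
for epoch in range(3):
    # A real loop would run the forward pass and loss.backward() here.
    optimizer.step()
    scheduler1.step()
    scheduler2.step()
    lrs.append(optimizer.param_groups[0]["lr"])

print(lrs)
```

Calling `optimizer.step()` before the schedulers follows the ordering the optimizer documentation recommends.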

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56705

Reviewed By: ezyang

Differential Revision: D27966704

Pulled By: iramazanli

fbshipit-source-id: f32b2d70d5cad7132335a9b13a2afa3ac3315a13

Summary:

The preamble here is misformatted and hard to make sense of: https://pytorch.org/docs/master/quantization.html#prototype-fx-graph-mode-quantization

This PR is trying to make things easier to understand.

As I'm new to this please verify that my modifications remain in line with what may have been meant originally.

Thanks.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52192

Reviewed By: ailzhang

Differential Revision: D27941730

Pulled By: vkuzo

fbshipit-source-id: 6c4bbf7c87d8fb87ab5d588b690a72045752e47a

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56528

I searched internal and external usage of DataLoader; people haven't started to use `generator` for `DataLoader`.

Test Plan: Imported from OSS

Reviewed By: albanD

Differential Revision: D27908487

Pulled By: ejguan

fbshipit-source-id: 14c83ed40d4ba4dc988b121968a78c2732d8eb93

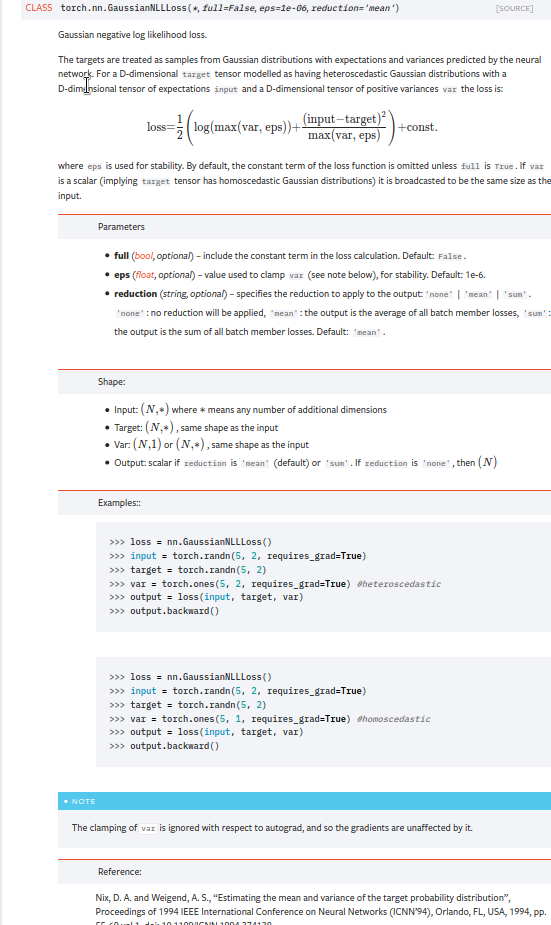

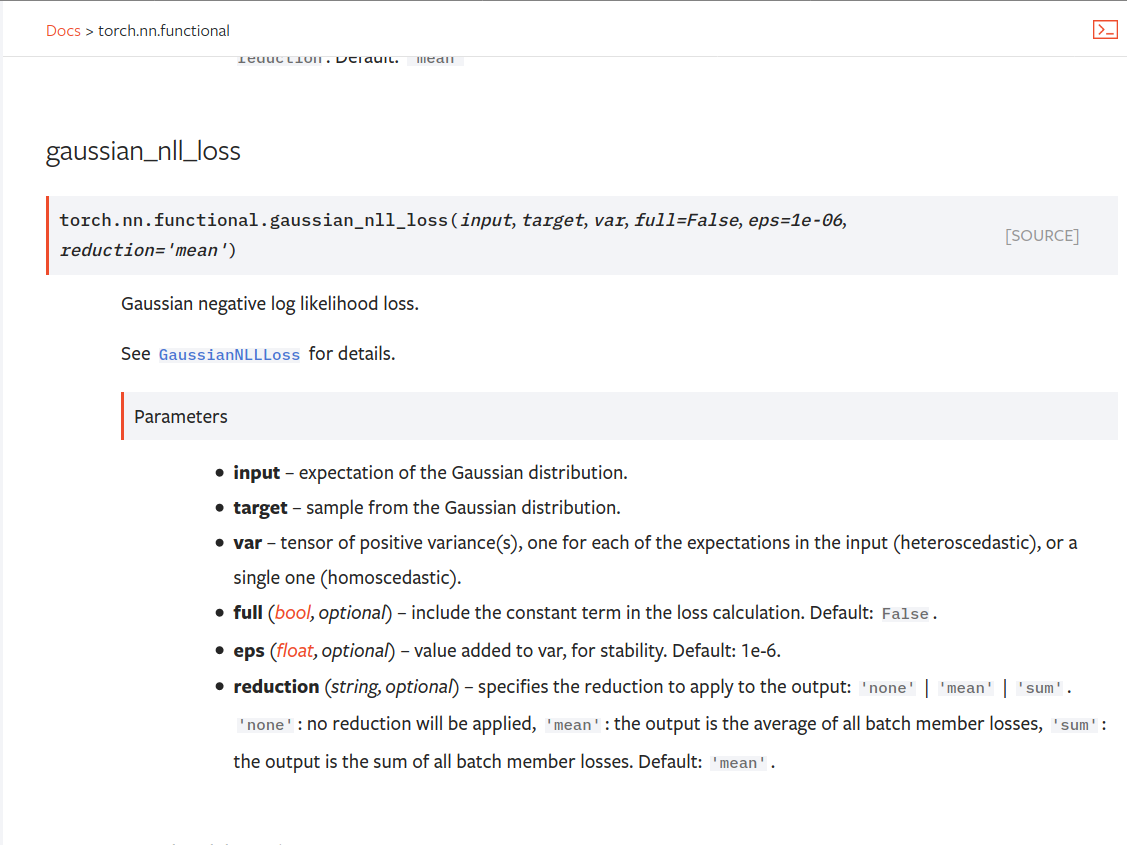

Summary:

Fixes https://github.com/pytorch/pytorch/issues/53964. cc albanD almson

## Major changes:

- Overhauled the actual loss calculation so that the shapes are now correct (in functional.py)

- added the missing doc in nn.functional.rst

## Minor changes (in functional.py):

- I removed the previous check on whether input and target were the same shape. This is to allow for broadcasting, say when you have 10 predictions that all have the same target.

- I added some comments to explain each shape check in detail. Let me know if these should be shortened/cut.
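
The broadcasting case mentioned above can be sketched as follows, assuming a PyTorch build that includes this fix. The shapes and values are illustrative only.

```python
import torch

loss_fn = torch.nn.GaussianNLLLoss(reduction='none')

# Ten predictions of a 3-dimensional quantity, all sharing one target.
pred = torch.zeros(10, 3)
target = torch.ones(1, 3)   # broadcast against the ten predictions
var = torch.ones(10, 3)

loss = loss_fn(pred, target, var)
print(loss.shape)
```

With `reduction='none'` the result keeps the broadcast shape of the predictions, one loss value per element.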

Screenshots of updated docs attached.

Let me know what you think, thanks!

## Edit: Description of change of behaviour (affecting BC):

The backwards-compatibility is only affected for the `reduction='none'` mode. This was the source of the bug. For tensors with size (N, D), the old returned loss had size (N), as incorrect summation was happening. It will now have size (N, D) as expected.

### Example

Define input tensors, all with size (2, 3).

`input = torch.tensor([[0., 1., 3.], [2., 4., 0.]], requires_grad=True)`

`target = torch.tensor([[1., 4., 2.], [-1., 2., 3.]])`

`var = 2*torch.ones(size=(2, 3), requires_grad=True)`

Initialise loss with reduction mode 'none'. We expect the returned loss to have the same size as the input tensors, (2, 3).

`loss = torch.nn.GaussianNLLLoss(reduction='none')`

Old behaviour:

`print(loss(input, target, var)) `

`# Gives tensor([3.7897, 6.5397], grad_fn=<MulBackward0>). This has size (2).`

New behaviour:

`print(loss(input, target, var)) `

`# Gives tensor([[0.5966, 2.5966, 0.5966], [2.5966, 1.3466, 2.5966]], grad_fn=<MulBackward0>)`

`# This has the expected size, (2, 3).`

To recover the old behaviour, sum along all dimensions except for the 0th:

`print(loss(input, target, var).sum(dim=1))`

`# Gives tensor([3.7897, 6.5397], grad_fn=<SumBackward1>).`

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56469

Reviewed By: jbschlosser, agolynski

Differential Revision: D27894170

Pulled By: albanD

fbshipit-source-id: 197890189c97c22109491c47f469336b5b03a23f

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56547

**Summary**

This commit tweaks the docstrings of `PackageExporter` so that they look

nicer on the docs website.

**Test Plan**

Continuous integration.

Test Plan: Imported from OSS

Reviewed By: ailzhang

Differential Revision: D27912965

Pulled By: SplitInfinity

fbshipit-source-id: 38c0a715365b8cfb9eecdd1b38ba525fa226a453

Summary:

This PR fixes the formatting issues in the new language reference

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56042

Reviewed By: gmagogsfm

Differential Revision: D27830179

Pulled By: nikithamalgifb

fbshipit-source-id: bce3397d4de3f1536a1a8f0a16f10a703e7d4406

Summary:

Reference: https://github.com/pytorch/pytorch/issues/50345

Changes:

* Add `i0e`

* Move some kernels from `UnaryOpsKernel.cu` to `UnarySpecialOpsKernel.cu` to decrease compilation time per file.
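
As a quick sanity check of the new function, `i0e` is the exponentially scaled modified Bessel function of the first kind of order zero, so it should agree with `exp(-|x|) * i0(x)`. The sketch below assumes the function is exposed as `torch.special.i0e`; the input range and tolerances are arbitrary.

```python
import torch

x = torch.linspace(-5.0, 5.0, steps=11)

scaled = torch.special.i0e(x)
reference = torch.exp(-x.abs()) * torch.i0(x)

matches = torch.allclose(scaled, reference, rtol=1e-4, atol=1e-6)
print(matches)
```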

Time taken by i0e_vs_scipy tests: around 6.33 s

<details>

<summary>Test Run Log</summary>

```

(pytorch-cuda-dev) kshiteej@qgpu1:~/Pytorch/pytorch_module_special$ pytest test/test_unary_ufuncs.py -k _i0e_vs

======================================================================= test session starts ========================================================================

platform linux -- Python 3.8.6, pytest-6.1.2, py-1.9.0, pluggy-0.13.1

rootdir: /home/kshiteej/Pytorch/pytorch_module_special, configfile: pytest.ini

plugins: hypothesis-5.38.1

collected 8843 items / 8833 deselected / 10 selected

test/test_unary_ufuncs.py ...sss.... [100%]

========================================================================= warnings summary =========================================================================

../../.conda/envs/pytorch-cuda-dev/lib/python3.8/site-packages/torch/backends/cudnn/__init__.py:73

test/test_unary_ufuncs.py::TestUnaryUfuncsCUDA::test_special_i0e_vs_scipy_cuda_bfloat16

/home/kshiteej/.conda/envs/pytorch-cuda-dev/lib/python3.8/site-packages/torch/backends/cudnn/__init__.py:73: UserWarning: PyTorch was compiled without cuDNN/MIOpen support. To use cuDNN/MIOpen, rebuild PyTorch making sure the library is visible to the build system.

warnings.warn(

-- Docs: https://docs.pytest.org/en/stable/warnings.html

===================================================================== short test summary info ======================================================================

SKIPPED [3] test/test_unary_ufuncs.py:1182: not implemented: Could not run 'aten::_copy_from' with arguments from the 'Meta' backend. This could be because the operator doesn't exist for this backend, or was omitted during the selective/custom build process (if using custom build). If you are a Facebook employee using PyTorch on mobile, please visit https://fburl.com/ptmfixes for possible resolutions. 'aten::_copy_from' is only available for these backends: [BackendSelect, Named, InplaceOrView, AutogradOther, AutogradCPU, AutogradCUDA, AutogradXLA, UNKNOWN_TENSOR_TYPE_ID, AutogradMLC, AutogradNestedTensor, AutogradPrivateUse1, AutogradPrivateUse2, AutogradPrivateUse3, Tracer, Autocast, Batched, VmapMode].

BackendSelect: fallthrough registered at ../aten/src/ATen/core/BackendSelectFallbackKernel.cpp:3 [backend fallback]

Named: registered at ../aten/src/ATen/core/NamedRegistrations.cpp:7 [backend fallback]

InplaceOrView: fallthrough registered at ../aten/src/ATen/core/VariableFallbackKernel.cpp:56 [backend fallback]

AutogradOther: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradCPU: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradCUDA: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradXLA: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

UNKNOWN_TENSOR_TYPE_ID: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradMLC: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradNestedTensor: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradPrivateUse1: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradPrivateUse2: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradPrivateUse3: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

Tracer: registered at ../torch/csrc/autograd/generated/TraceType_4.cpp:9348 [kernel]

Autocast: fallthrough registered at ../aten/src/ATen/autocast_mode.cpp:250 [backend fallback]

Batched: registered at ../aten/src/ATen/BatchingRegistrations.cpp:1016 [backend fallback]

VmapMode: fallthrough registered at ../aten/src/ATen/VmapModeRegistrations.cpp:33 [backend fallback]

==================================================== 7 passed, 3 skipped, 8833 deselected, 2 warnings in 6.33s =====================================================

```

</details>

TODO:

* [x] Check rendered docs (https://11743402-65600975-gh.circle-artifacts.com/0/docs/special.html)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54409

Reviewed By: jbschlosser

Differential Revision: D27760472

Pulled By: mruberry

fbshipit-source-id: bdfbcaa798b00c51dc9513c34626246c8fc10548

Summary:

Related to https://github.com/pytorch/pytorch/issues/52256

Use autosummary instead of autofunction to create subpages for optim and cuda functions/classes.

Also fix some minor formatting issues in optim.LBFGS and cuda.stream docstrings.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55673

Reviewed By: jbschlosser

Differential Revision: D27747741

Pulled By: zou3519

fbshipit-source-id: 070681f840cdf4433a44af75be3483f16e5acf7d

Summary:

Related to https://github.com/pytorch/pytorch/issues/52256

Use autosummary instead of autofunction to create subpages for autograd functions. I left the autoclass parts intact but manually laid out their members.

Also, the LaTeX formatting of the special page emitted a warning (solved by adding `\begin{align}...\end{align}`), and the alignment of equations was fixed (by using `&=` instead of `=`).
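
To illustrate the alignment fix, a minimal `align` environment might look like the following. The equation is a placeholder chosen for illustration, not one taken from the special page.

```latex
\begin{align}
    (a + b)^2 &= (a + b)(a + b) \\
              &= a^2 + 2ab + b^2
\end{align}
```

Placing `&` before each `=` makes the equals signs line up across rows.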

zou3519

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55672

Reviewed By: jbschlosser

Differential Revision: D27736855

Pulled By: zou3519

fbshipit-source-id: addb56f4f81c82d8537884e0ff243c1e34969a6e

Summary:

Fixes https://github.com/pytorch/pytorch/issues/35901

This change is designed to prevent fragmentation in the Caching Allocator. Permissive block splitting in the allocator allows very large blocks to be split into many pieces. Once a block is split too finely, it is unlikely that all pieces will be 'free' at the same time, so the original allocation can never be returned. Anecdotally, we've seen a model run out of memory failing to allocate a 50 MB block on a 32 GB card while the caching allocator is holding 13 GB of 'split free blocks'.

Approach:

- Large blocks above a certain size are designated "oversize". This limit is currently set one decade above "large", at 200 MB

- Oversize blocks cannot be split

- Oversize blocks must closely match the requested size (e.g. a 200 MB request will match an existing 205 MB block, but not a 300 MB block)

- In lieu of splitting oversize blocks there is a mechanism to quickly free a single oversize block (to the system allocator) to allow an appropriate size block to be allocated. This will be activated under memory pressure and will prevent _release_cached_blocks()_ from triggering
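
The matching rule in the approach above can be sketched as a toy model in Python. This is not the allocator's actual code: the function names and the 20 MB tolerance are hypothetical, as is treating a size of exactly 200 MB as oversize.

```python
MB = 1024 * 1024

# 200 MB comes from the description above; the tolerance is hypothetical.
OVERSIZE_THRESHOLD = 200 * MB
MATCH_TOLERANCE = 20 * MB


def is_oversize(size: int) -> bool:
    """Assumption: blocks at or above the threshold count as oversize."""
    return size >= OVERSIZE_THRESHOLD


def can_serve(block_size: int, request_size: int) -> bool:
    """Decide whether a cached free block may be used for a request."""
    if block_size < request_size:
        return False
    if not is_oversize(block_size):
        # Regular blocks may be split, so any sufficiently large block fits.
        return True
    # Oversize blocks are never split, so they must closely match the request.
    return block_size - request_size <= MATCH_TOLERANCE


print(can_serve(205 * MB, 200 * MB))  # True
print(can_serve(300 * MB, 200 * MB))  # False
```

Under this rule a small request can never consume part of an oversize block, which is what keeps large blocks from being fragmented.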

Initial performance tests show this is similar to or quicker than the original strategy. Additional tests are ongoing.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44742

Reviewed By: ngimel

Differential Revision: D23752058

Pulled By: ezyang

fbshipit-source-id: ccb7c13e3cf8ef2707706726ac9aaac3a5e3d5c8

Summary:

Related to https://github.com/pytorch/pytorch/issues/52256

Use autosummary instead of autofunction to create subpages for `torch.fft` and `torch.linalg` functions.

zou3519

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55748

Reviewed By: jbschlosser

Differential Revision: D27739282

Pulled By: heitorschueroff

fbshipit-source-id: 37aa06cb8959721894ffadc15ae8c3b83481a319

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55817

**Summary**

This commit makes minor edits to the docstrings of `PackageExporter` so

that they render properly in the `torch.package` API reference.

**Test Plan**

Continuous integration (especially the docs tests).

Test Plan: Imported from OSS

Reviewed By: gmagogsfm

Differential Revision: D27726817

Pulled By: SplitInfinity

fbshipit-source-id: b81276d7278f586fceded83d23cb4d0532f7c629

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55812

**Summary**

This commit creates a barebones API reference doc for `torch.package`.

The content is sourced from the docstrings in the `torch.package` source.

**Test Plan**

Continuous integration (specifically the docs tests).

Test Plan: Imported from OSS

Reviewed By: gmagogsfm

Differential Revision: D27726816

Pulled By: SplitInfinity

fbshipit-source-id: 5e9194536f80507e337b81c5ec3b5635d7121818

Summary:

Reference: https://github.com/pytorch/pytorch/issues/50345

Changes:

* Alias for sigmoid and logit

* Adds out variant for C++ API

* Updates docs to link back to `special` documentation
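
A short sketch of how the aliases can be checked against the existing functions. It assumes the sigmoid alias is exposed as `torch.special.expit` and the logit alias as `torch.special.logit`; the input values are arbitrary.

```python
import torch

x = torch.tensor([-2.0, 0.0, 2.0])
p = torch.tensor([0.1, 0.5, 0.9])

# The aliases should produce the same values as the original functions.
same_sigmoid = torch.allclose(torch.special.expit(x), torch.sigmoid(x))
same_logit = torch.allclose(torch.special.logit(p), torch.logit(p))

print(same_sigmoid, same_logit)
```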

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54759

Reviewed By: mrshenli

Differential Revision: D27615208

Pulled By: mruberry

fbshipit-source-id: 8bba908d1bea246e4aa9dbadb6951339af353556

Summary:

Related to https://github.com/pytorch/pytorch/issues/52256

Splits torch.nn.functional into a table-of-contents page and many sub-pages, one for each function

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55038

Reviewed By: gchanan

Differential Revision: D27502677

Pulled By: zou3519

fbshipit-source-id: 38e450a0fee41c901eb56f94aee8a32f4eefc807

Summary:

This PR adds `torch.linalg.eig`, and `torch.linalg.eigvals` for NumPy compatibility.

MAGMA uses a hybrid CPU-GPU algorithm and doesn't have a GPU interface for the non-symmetric eigendecomposition. This forces us to transfer inputs living in GPU memory to the CPU before calling MAGMA, and then transfer the results from MAGMA back to the GPU. That is rather slow for smaller matrices, and the MAGMA path is faster than the CPU path only for matrices larger than 3000x3000.

Unfortunately, there is no cuSOLVER function for this operation.

Autograd support for `torch.linalg.eig` will be added in a follow-up PR.
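
A minimal CPU usage sketch of the two new functions follows. The input matrix is an arbitrary example with complex eigenvalues, and the check verifies the defining relation `A V = V diag(L)`.

```python
import torch

# Rotation by 90 degrees: a real matrix whose eigenvalues are +i and -i.
A = torch.tensor([[0.0, -1.0], [1.0, 0.0]])

eigenvalues, eigenvectors = torch.linalg.eig(A)
eigvals_only = torch.linalg.eigvals(A)

# The outputs are complex even for real inputs, so cast A before comparing.
lhs = A.to(eigenvectors.dtype) @ eigenvectors
rhs = eigenvectors @ torch.diag(eigenvalues)
residual_ok = torch.allclose(lhs, rhs, atol=1e-6)

print(eigenvalues.dtype, residual_ok)
```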

Ref https://github.com/pytorch/pytorch/issues/42666

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52491

Reviewed By: anjali411

Differential Revision: D27563616

Pulled By: mruberry

fbshipit-source-id: b42bb98afcd2ed7625d30bdd71cfc74a7ea57bb5

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55253

Previously, DDP communication hooks took a tensor list as the input. Now they take only a single tensor, in preparation for retiring SPMD and providing only a single model replica to DDP communication hooks.

The next step is to limit Reducer to a single model replica.
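
The shape of a hook that works on a single tensor can be sketched as below. This is a local illustration, not the hook API as of this commit: `buffer()` is the accessor name used in recent PyTorch releases and is an assumption here, and `FakeBucket` is a hypothetical stand-in so the sketch runs without a process group.

```python
import torch


def passthrough_hook(state, bucket):
    """Return the bucket's single gradient tensor unchanged, wrapped in a Future."""
    fut = torch.futures.Future()
    fut.set_result(bucket.buffer())
    return fut


class FakeBucket:
    """Hypothetical stand-in for a gradient bucket, used only to run the sketch."""

    def __init__(self, tensor):
        self._tensor = tensor

    def buffer(self):
        return self._tensor


grads = torch.ones(4)
result = passthrough_hook(None, FakeBucket(grads)).wait()
print(result)
```

In real use, a hook of this shape would be registered with `DistributedDataParallel.register_comm_hook(state, hook)` and would typically launch a collective on the tensor instead of returning it unchanged.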

ghstack-source-id: 125677637

Test Plan: waitforbuildbot

Reviewed By: zhaojuanmao

Differential Revision: D27533898

fbshipit-source-id: 5db92549c440f33662cf4edf8e0a0fd024101eae