Mirror of https://github.com/zebrajr/pytorch.git

synced 2025-12-06 12:20:52 +01:00

d59a6864fb

68 Commits

d59a6864fb

Revert "[BE]: Update ruff to 0.285 (#107519)"

This reverts commit

88ab3e4322

[BE]: Update ruff to 0.285 (#107519)

This updates ruff to 0.285, which is faster and better, and fixes a bunch of false negatives with regard to f-strings. I also enabled RUF017, which looks for accidental quadratic list summation. Luckily, there seem to be no instances of it in our codebase, so I'm enabling it to keep it that way. :) Pull Request resolved: https://github.com/pytorch/pytorch/pull/107519 Approved by: https://github.com/ezyang
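For reference, the pattern RUF017 targets looks like the first assignment below. This is a minimal plain-Python illustration, not code from the PyTorch tree; the two alternatives are common linear-time rewrites and are not necessarily the exact fix ruff proposes.

```python
import functools
import itertools
import operator

nested = [[1, 2], [3], [4, 5, 6]]

# Pattern RUF017 flags: each `+` inside sum() copies the accumulated list,
# so total work grows quadratically with the number of sublists.
flat_quadratic = sum(nested, [])

# Linear-time alternatives: chain the sublists lazily, or extend a single
# fresh list in place (the `[]` initializer is the only list mutated).
flat_chain = list(itertools.chain.from_iterable(nested))
flat_iadd = functools.reduce(operator.iadd, nested, [])

print(flat_quadratic == flat_chain == flat_iadd)  # True
```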

4cc1745b13

[BE] f-stringify torch/ and scripts (#105538)

This PR is a follow-up to the pyupgrade series, converting more strings to f-strings using `flynt`.

- https://docs.python.org/3/reference/lexical_analysis.html#f-strings
- https://pypi.org/project/flynt/

Command used:

```
flynt torch/ -ll 120
flynt scripts/ -ll 120
flynt tools/ -ll 120
```

and excluded `collect_env.py`. Pull Request resolved: https://github.com/pytorch/pytorch/pull/105538 Approved by: https://github.com/ezyang, https://github.com/malfet
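The kind of rewrite this conversion performs can be sketched as follows. The strings are illustrative only, not taken from the PR, and flynt's real output depends on each source line; the point is that the f-string produces the same text as the older `%` and `str.format` styles.

```python
name, count, ratio = "weight", 3, 0.12345

# Older formatting styles of the kind an f-string converter rewrites...
old_percent = "%s has %d shards (%.2f)" % (name, count, ratio)
old_format = "{} has {} shards ({:.2f})".format(name, count, ratio)

# ...and the equivalent f-string, with the format spec carried over.
new = f"{name} has {count} shards ({ratio:.2f})"

assert old_percent == old_format == new
print(new)  # weight has 3 shards (0.12)
```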

79c5e33349

[BE] Enable ruff's UP rules and autoformat nn/ mps/ and torch/ (#105436)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/105436 Approved by: https://github.com/malfet, https://github.com/albanD

74dc2a53f6

Thread generator through trunc_normal_ (#100810)

This will solve @albertz's issue as described in #98200 by threading the generator argument through the trunc_normal_ function. I'm still working on #99796 (and won't let it stall out), but this fix doesn't trigger any JIT issues, so I think it would be helpful to get it merged now. Happy to iterate on this if there are any issues. Pull Request resolved: https://github.com/pytorch/pytorch/pull/100810 Approved by: https://github.com/Skylion007, https://github.com/albanD
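Threading an explicit generator through an initializer is what makes its output reproducible without touching global RNG state. A pure-Python sketch of the idea, assuming the inverse-CDF method for truncation; `trunc_normal` is a hypothetical list-based helper, not PyTorch's tensor implementation, and its `generator` parameter only mirrors the role of the argument this commit adds.

```python
import random
from statistics import NormalDist

def trunc_normal(n, mean=0.0, std=1.0, a=-2.0, b=2.0, generator=None):
    """Draw n samples from N(mean, std**2) truncated to [a, b] (hypothetical helper).

    Inverse-CDF method: draw a probability uniformly between CDF(a) and CDF(b),
    then map it back through the normal quantile function.
    """
    # Use the caller's RNG if given; otherwise fall back to the global `random` state.
    rng = generator if generator is not None else random
    dist = NormalDist(mean, std)
    lo, hi = dist.cdf(a), dist.cdf(b)
    return [dist.inv_cdf(rng.uniform(lo, hi)) for _ in range(n)]

# The same seeded generator yields the same draws, independent of global state.
s1 = trunc_normal(5, generator=random.Random(1234))
s2 = trunc_normal(5, generator=random.Random(1234))
print(s1 == s2)  # True
```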

c848a777e8

DOC: Various typo fixes (#97095)

Various typos found while browsing documentation/source code. Thank you for a wonderful deep-learning library! Pull Request resolved: https://github.com/pytorch/pytorch/pull/97095 Approved by: https://github.com/mikaylagawarecki, https://github.com/kit1980

b136f3f310

More doctest refinements. (#83317)

Follow-up to #82797. Now that the doctests themselves are in a better state, we should be able to enable xdoctest on the CI so they stay that way. @ezyang @vadimkantorov Pull Request resolved: https://github.com/pytorch/pytorch/pull/83317 Approved by: https://github.com/ezyang

4618371da5

Integrate xdoctest - Rebased (#82797)

This is a new version of #15648 based on the latest master branch. Unlike the previous PR, where I fixed a lot of the doctests in addition to integrating xdoctest, I'm going to reduce the scope here: I'm simply going to integrate xdoctest and then mark all of the failing tests as "SKIP". This will let xdoctest run on the dashboards, provide some value, and still let the dashboards pass. I'll leave fixing the doctests themselves to another PR. In my initial commit, I do the bare minimum to get something running with failing dashboards. The few tests that I marked as skip are causing segfaults. Running xdoctest results in 293 failed and 201 passed tests. The next commits will disable those tests. (Unfortunately, I don't have a tool that will insert the `#xdoctest: +SKIP` directive into every failing test, so I'm going to do this mostly manually.) Fixes https://github.com/pytorch/pytorch/issues/71105 @ezyang Pull Request resolved: https://github.com/pytorch/pytorch/pull/82797 Approved by: https://github.com/ezyang

8def154e00

Fix multiple docstring type mistakes (#82474)

### Description

* Docstrings using `(tuple of ints)` show up as `(tuple of python:ints)`, so I fixed them by making the `int` no longer plural. Example: https://pytorch.org/docs/stable/generated/torch.permute.html#torch.permute
* A docstring type in JIT had one of its types incorrectly highlighted as code. Example: https://pytorch.org/docs/stable/generated/torch.jit.script.html#torch.jit.script
* I found some docstring type usages of `string` that had not yet been converted to `str` after #82410.
* Some docstrings incorrectly listed their defaults inside the docstring types.
* I also found a docstring that was missing its type.

### Testing

No testing should be required.

---

In the developer guidelines, there should probably be standards listed for the docstring types. Pull Request resolved: https://github.com/pytorch/pytorch/pull/82474 Approved by: https://github.com/albanD

dfde877c0b

Add type hints for a few random functions/classes

Adds type hints for a few functions/classes that we use in [TorchGeo](https://github.com/microsoft/torchgeo). Pull Request resolved: https://github.com/pytorch/pytorch/pull/74171 Approved by: https://github.com/jbschlosser, https://github.com/anjali411

80fe96c860

Revert "Add type hints for a few random functions/classes"

This reverts commit

cdb40eb528

Add type hints for a few random functions/classes

Adds type hints for a few functions/classes that we use in [TorchGeo](https://github.com/microsoft/torchgeo). Pull Request resolved: https://github.com/pytorch/pytorch/pull/74171 Approved by: https://github.com/jbschlosser

b5b296c4cf

Fix: Make nn.init.orthogonal_ no-op for empty input

Fixes #73503. Pull Request resolved: https://github.com/pytorch/pytorch/pull/75553 Approved by: https://github.com/albanD
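The shape of such an early return can be sketched in pure Python. `orthogonal_init` is a hypothetical list-based helper, not `nn.init.orthogonal_`; it assumes Gram-Schmidt in place of the QR decomposition PyTorch's implementation relies on (both yield an orthonormal basis), and it returns immediately when either dimension is zero instead of attempting a factorization of an empty array.

```python
import math
import random

def orthogonal_init(rows, cols, gain=1.0, rng=None):
    """Return a rows x cols matrix (list of lists) whose rows (if rows <= cols)
    or columns (if rows > cols) are orthonormal, scaled by `gain`."""
    # Empty input: nothing to orthogonalize, so return the empty shape as-is.
    if rows == 0 or cols == 0:
        return [[] for _ in range(rows)]
    rng = rng or random.Random()
    n, m = max(rows, cols), min(rows, cols)
    basis = []
    # Gram-Schmidt on m random Gaussian vectors of length n.
    for _ in range(m):
        v = [rng.gauss(0.0, 1.0) for _ in range(n)]
        for q in basis:
            dot = sum(vi * qi for vi, qi in zip(v, q))
            v = [vi - dot * qi for vi, qi in zip(v, q)]
        norm = math.sqrt(sum(vi * vi for vi in v))
        basis.append([vi / norm for vi in v])
    if rows <= cols:
        # m == rows orthonormal vectors of length cols: use them as rows.
        return [[gain * x for x in q] for q in basis]
    # rows > cols: use the basis vectors as columns (transpose).
    return [[gain * basis[j][i] for j in range(cols)] for i in range(rows)]

print(orthogonal_init(0, 4))  # []
```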

f87f753bb9

avoiding adding some functions to the public python API before 1.11 release (#72543)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/72543

Test Plan: Imported from OSS

Reviewed By: ejguan

Differential Revision: D34085724

Pulled By: bdhirsh

fbshipit-source-id: 941d5a90a6fa5328268d623e0e2b01577e4132ca

(cherry picked from commit

8c505bbc86

Make ShardedTensor ctor more inline with torch.Tensor ctor (#72164)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/72164

The torch.Tensor ctor creates an empty tensor, and this PR makes ShardedTensor on par with that.

In particular, we remove TensorInitParams and instead always create an empty tensor and then fill it in for things like ones, zeros, full, etc. This is in line with torch.ones etc. as well, since even for those APIs we first create an empty tensor and then fill it out.
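The "create empty, then fill" construction path can be sketched with a toy container. Everything here (`ToyShardedTensor`, `full`, `ones`, `zeros`) is hypothetical and stands in for the real distributed API; it only shows how every factory funnels through one allocation step followed by an in-place fill.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class ToyShardedTensor:
    """Hypothetical 1-D 'sharded tensor': a list of per-rank shards."""
    shards: List[List[Optional[float]]] = field(default_factory=list)

    @classmethod
    def empty(cls, size: int, num_shards: int) -> "ToyShardedTensor":
        # Split `size` elements as evenly as possible; values stay uninitialized (None).
        base, extra = divmod(size, num_shards)
        sizes = [base + (1 if i < extra else 0) for i in range(num_shards)]
        return cls([[None] * s for s in sizes])

    def fill_(self, value: float) -> "ToyShardedTensor":
        # In-place fill of every shard, mirroring Tensor.fill_.
        for shard in self.shards:
            shard[:] = [value] * len(shard)
        return self

def full(size: int, num_shards: int, value: float) -> ToyShardedTensor:
    # Single construction path: allocate empty, then fill.
    return ToyShardedTensor.empty(size, num_shards).fill_(value)

def ones(size: int, num_shards: int) -> ToyShardedTensor:
    return full(size, num_shards, 1.0)

def zeros(size: int, num_shards: int) -> ToyShardedTensor:
    return full(size, num_shards, 0.0)
```

A design benefit of this shape is that new factories (say, a hypothetical `full_like`) need no separate init-parameter plumbing: they only choose the fill value.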

ghstack-source-id: 148318045

Test Plan: waitforbuildbot

Reviewed By: wanchaol

Differential Revision: D33934603

fbshipit-source-id: 5655bbd726f29e74600ebe9f33f9dc5952b528f4

(cherry picked from commit

b199e3c842

Provide functionality to write custom ShardedTensor ops. (#69874)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/69874 We have a handful of ops supported for ShardedTensor via ``__torch_function__`` dispatch. However, we currently can't cover all torch operators, and giving users a way to extend this mechanism makes it much more general. In this PR, I've introduced a custom_sharded_op decorator, which can be used to register a custom sharded op implementation. ghstack-source-id: 145841141 Test Plan: waitforbuildbot Reviewed By: wanchaol Differential Revision: D33078587 fbshipit-source-id: 5936b7ac25582e613653c19afa559219719ee54b

3596e13d45

Add torch.nn.init.normal_ and torch.nn.init.kaiming_uniform_ ops to ShardedTensor (#67057)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/67057 Extend ShardedTensor with the torch.nn.init.normal_ and torch.nn.init.kaiming_uniform_ ops. Follow-up to https://github.com/pytorch/pytorch/pull/63997. Test Plan: a) Unit test: (pytorch) ... $ python test/distributed/_sharded_tensor/ops/test_init.py TestShardedTensorNNInit --v or b) Manual run: Instructions here: https://docs.google.com/document/d/1_m1Hdo5w51-hhPlZ_F8Y6PIWrN7UgJZqiSpARYvhsaE/edit# (substitute normal_ or kaiming_uniform_ for uniform_) Imported from OSS Reviewed By: pritamdamania87 Differential Revision: D31845654 fbshipit-source-id: e7aedc0972539da59f7b84bbbf617caf6b206d52
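The arithmetic behind a Kaiming-uniform init (default `fan_in` mode, `leaky_relu` gain) can be sketched in plain Python. `kaiming_uniform_bound` and `kaiming_uniform_shard` are hypothetical helpers; the sharded variant assumes the bound must come from the full weight's fan-in, since a column-sharded weight's local shape would understate it.

```python
import math
import random

def kaiming_uniform_bound(fan_in: int, a: float = 0.0) -> float:
    """Bound b for U(-b, b) in Kaiming-uniform init, fan_in mode, leaky_relu slope `a`."""
    gain = math.sqrt(2.0 / (1 + a ** 2))   # leaky_relu gain
    std = gain / math.sqrt(fan_in)         # target standard deviation
    return math.sqrt(3.0) * std            # U(-b, b) has std b / sqrt(3)

def kaiming_uniform_shard(shard_len: int, global_fan_in: int, rng: random.Random, a: float = 0.0):
    # Assumption: use the fan_in of the full (unsharded) weight, not the shard's.
    bound = kaiming_uniform_bound(global_fan_in, a)
    return [rng.uniform(-bound, bound) for _ in range(shard_len)]
```

With `a=0` this gives `sqrt(6 / fan_in)`; with `a=sqrt(5)` it reduces to `1 / sqrt(fan_in)`.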

b6df043f1f

Add torch.nn.init.uniform_ operator to ShardedTensor. (#63997)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/63997 Use `__torch_function__` to extend torch.nn.init.uniform_. The init is done in SPMD fashion. Ideally we would aggregate the sharded tensors into a global tensor, initialize it, and reshard, but it's fine to run it SPMD since uniform is I.I.D. (independent and identically distributed). Also enable the unit test test_linear.py for OSS testing. Test Plan: a) Unit test: (pytorch) ... $ python test/distributed/_sharded_tensor/ops/test_init.py TestShardedTensorNNInit --v (pytorch) ... $ python test/distributed/_sharded_tensor/ops/test_linear.py --v (before this change, this command was a no-op) or b) Manual run: Instructions here: https://docs.google.com/document/d/1_m1Hdo5w51-hhPlZ_F8Y6PIWrN7UgJZqiSpARYvhsaE/edit# Imported from OSS Reviewed By: pritamdamania87, anjali411 Differential Revision: D30563017 fbshipit-source-id: d1859f7682235bcb44515efc69ca92bc5e34fce1
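Because every element of a uniform init is drawn independently from the same distribution, each rank can fill its own shard locally and the concatenated result has the same distribution as a global init. A pure-Python simulation of that idea; the helpers and the per-rank seeding scheme (`base_seed + rank`) are hypothetical, since the commit does not describe how ranks are seeded.

```python
import random
from typing import List

def uniform_shard(shard_len: int, a: float, b: float, rank: int, base_seed: int = 0) -> List[float]:
    # Each rank seeds its own generator (hypothetical scheme: base_seed + rank),
    # so shards are drawn independently instead of repeating the same values.
    rng = random.Random(base_seed + rank)
    return [rng.uniform(a, b) for _ in range(shard_len)]

def spmd_uniform(shard_lens: List[int], a: float = 0.0, b: float = 1.0, base_seed: int = 0) -> List[List[float]]:
    # In a real SPMD program every rank would call uniform_shard for its own
    # shard only; this loop simulates all ranks in one process.
    return [uniform_shard(n, a, b, rank, base_seed) for rank, n in enumerate(shard_lens)]
```

Seeding every rank identically would instead give each shard the same leading values, which breaks independence across shards.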

24087d07ca

Deprecate QR (#57745)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/57745 Reviewed By: bdhirsh Differential Revision: D28318164 Pulled By: mruberry fbshipit-source-id: b8e3cb9d7ab33f30c8653ec39f932a8af8bd2a50

df1dfd879e

Fix errors when initializing Linear with 0 in_features (#56505)

Summary: Fixes https://github.com/pytorch/pytorch/issues/48152 Pull Request resolved: https://github.com/pytorch/pytorch/pull/56505 Reviewed By: malfet Differential Revision: D27919590 Pulled By: jbschlosser fbshipit-source-id: 462ca280051f63c31ff588c38a9e436116c0f336
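With `in_features=0`, a bias bound of the form `1 / sqrt(fan_in)` divides by zero (`1 / math.sqrt(0)` raises `ZeroDivisionError`). A minimal sketch of one possible guard; `linear_bias_bound` and `init_bias` are hypothetical helpers, and the commit message does not show the exact change that was made.

```python
import math
import random
from typing import List

def linear_bias_bound(fan_in: int) -> float:
    # Guard the zero-fan-in case instead of dividing by sqrt(0).
    return 1 / math.sqrt(fan_in) if fan_in > 0 else 0.0

def init_bias(out_features: int, in_features: int, rng: random.Random) -> List[float]:
    # Bias drawn from U(-bound, bound); with in_features == 0 this degenerates to zeros.
    bound = linear_bias_bound(in_features)
    return [rng.uniform(-bound, bound) for _ in range(out_features)]
```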

a7c7fc96ff

Add doc warnings for default SELU gain (#54057)

Summary: Fixes https://github.com/pytorch/pytorch/issues/24991 and provides the alternative solution suggested in https://github.com/pytorch/pytorch/issues/53694. Also related to https://github.com/pytorch/pytorch/issues/54055. This is an attempt to make people aware of the difference between the paper and the implementation of the SELU gain. Pull Request resolved: https://github.com/pytorch/pytorch/pull/54057 Reviewed By: ailzhang Differential Revision: D27292060 Pulled By: jbschlosser fbshipit-source-id: e0e303595e6a7d05d11dfb68735e1839f55987a2
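The gap between paper and implementation can be made concrete. Assuming `kaiming_normal_` in its default `fan_in` mode (weight std = gain / sqrt(fan_in)), the sketch compares the weight variance under the SELU gain of 3/4 with the gain of 1 for `nonlinearity='linear'`. Only the latter gives the variance of 1/N used in the self-normalizing networks paper, which is why `calculate_gain`'s documentation points SNN users to `'linear'`.

```python
import math

def kaiming_normal_std(fan_in: int, gain: float) -> float:
    # kaiming_normal_ in fan_in mode draws weights with std = gain / sqrt(fan_in).
    return gain / math.sqrt(fan_in)

fan_in = 100
std_selu = kaiming_normal_std(fan_in, gain=0.75)   # calculate_gain("selu")
std_linear = kaiming_normal_std(fan_in, gain=1.0)  # calculate_gain("linear")

print(std_selu ** 2)    # (3/4)^2 / N: smaller than the paper's 1 / N
print(std_linear ** 2)  # 1 / N, matching the SELU paper's setup
```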

70a43425e0

Simplify init._calculate_fan_in_and_fan_out (#53522)

Summary: This uses the shape of the tensor instead of directly indexing it. This is useful when extending PyTorch's tensor class, e.g. for lazy access. Since the `init` sub-module doesn't check for `torch_function`, it is not possible to override its functions. Explicitly indexing the tensor forces a call to tensor() and reconstructs the full tensor or explicitly accesses the elements. Simply using the shape avoids that. Fixes https://github.com/pytorch/pytorch/issues/53540 Pull Request resolved: https://github.com/pytorch/pytorch/pull/53522 Reviewed By: anjali411 Differential Revision: D26947794 Pulled By: jbschlosser fbshipit-source-id: 80cd65efed16383f21363cee2eb404c9bc05971c
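Fan-in and fan-out can be computed from the shape alone, with no element access. A sketch of that shape-only calculation; `fan_in_and_fan_out` is a hypothetical stand-in for the private `init._calculate_fan_in_and_fan_out`, following the usual weight-layout convention (dim 0 = output features, dim 1 = input features, remaining dims = receptive field).

```python
import math
from typing import Sequence, Tuple

def fan_in_and_fan_out(shape: Sequence[int]) -> Tuple[int, int]:
    if len(shape) < 2:
        raise ValueError("fan in and fan out need a shape with at least 2 dimensions")
    num_input_fmaps, num_output_fmaps = shape[1], shape[0]
    # Product of the trailing (kernel) dimensions; math.prod(()) == 1 for 2-D weights.
    receptive_field_size = math.prod(shape[2:])
    return num_input_fmaps * receptive_field_size, num_output_fmaps * receptive_field_size

print(fan_in_and_fan_out((64, 3, 3, 3)))  # (27, 576)
```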

e9b369c25f

Add SELU Activation to calculate_gain (#50664)

Summary:

Fixes [#24991](https://github.com/pytorch/pytorch/issues/24991)

I used a value of 0.75 as suggested in the forums by Thomas: https://discuss.pytorch.org/t/calculate-gain-tanh/20854/6

I verified that the value keeps the gradient stable for a 100-layer network.

Code to reproduce (from [jpeg729](https://discuss.pytorch.org/t/calculate-gain-tanh/20854/4)):

```python
import torch
import torch.nn.functional as F

a = torch.randn(1000, 1000, requires_grad=True)
b = a
print(f"in: {a.std().item():.4f}")
for i in range(100):
    # 100 stacked bias-free linear layers, each followed by SELU.
    layer = torch.nn.Linear(1000, 1000, bias=False)
    torch.nn.init.xavier_normal_(layer.weight, torch.nn.init.calculate_gain("selu"))
    b = F.selu(layer(b))
    if i % 10 == 0:
        # Every 10 layers: report activation std and mean |grad| w.r.t. the input.
        print(f"out: {b.std().item():.4f}", end=" ")
        a.grad = None
        b.sum().backward(retain_graph=True)
        print(f"grad: {a.grad.abs().mean().item():.4f}")
```

Output:

```
in: 1.0008
out: 0.7968 grad: 0.6509
out: 0.3127 grad: 0.2760
out: 0.2404 grad: 0.2337
out: 0.2062 grad: 0.2039
out: 0.2056 grad: 0.1795
out: 0.2044 grad: 0.1977
out: 0.2005 grad: 0.2045
out: 0.2042 grad: 0.2273
out: 0.1944 grad: 0.2034
out: 0.2085 grad: 0.2464
```

I included the necessary documentation change, and it passes the _test_calculate_gain_nonlinear_ unittest.
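For context, the gain table this commit extends can be restated in plain Python. `calculate_gain_sketch` is a hypothetical re-statement of a subset of the cases documented for `torch.nn.init.calculate_gain`, not the library function; the SELU entry is the empirical 3/4 discussed above.

```python
import math

def calculate_gain_sketch(nonlinearity: str, param=None) -> float:
    """Hypothetical re-statement of part of torch.nn.init.calculate_gain's gain table."""
    if nonlinearity in ("linear", "conv1d", "conv2d", "conv3d", "sigmoid"):
        return 1.0
    if nonlinearity == "tanh":
        return 5.0 / 3
    if nonlinearity == "relu":
        return math.sqrt(2.0)
    if nonlinearity == "leaky_relu":
        negative_slope = 0.01 if param is None else param
        return math.sqrt(2.0 / (1 + negative_slope ** 2))
    if nonlinearity == "selu":
        # Empirical value from the forum thread cited above, not derived analytically.
        return 3.0 / 4
    raise ValueError(f"Unsupported nonlinearity {nonlinearity}")

print(calculate_gain_sketch("selu"))  # 0.75
```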

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50664

Reviewed By: mruberry

Differential Revision: D25942217

Pulled By: ngimel

fbshipit-source-id: 29ff1be25713484fa7c516df71b12fdaecfb9af8

b89827b73f

Drop unused imports (#49972)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/49972

From

```
./python/libcst/libcst codemod remove_unused_imports.RemoveUnusedImportsWithGlean --no-format caffe2/
```

Test Plan: Standard sandcastle tests Reviewed By: xush6528 Differential Revision: D25727352 fbshipit-source-id: 6b90717e161aeb1da8df30e67d586101d35d7d5f

f83d57f99e

[Don't review] Clean up type annotations in caffe2/torch/nn (#50079)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/50079 Test Plan: Sandcastle tests Reviewed By: xush6528 Differential Revision: D25718694 fbshipit-source-id: f535fb879bcd4cb4ea715adfd90bbffa3fcc1150

20ac736200

Remove py2 compatible future imports (#44735)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/44735 Reviewed By: mruberry Differential Revision: D23731306 Pulled By: ezyang fbshipit-source-id: 0ba009a99e475ddbe22981be8ac636f8a1c8b02f

847d102e93

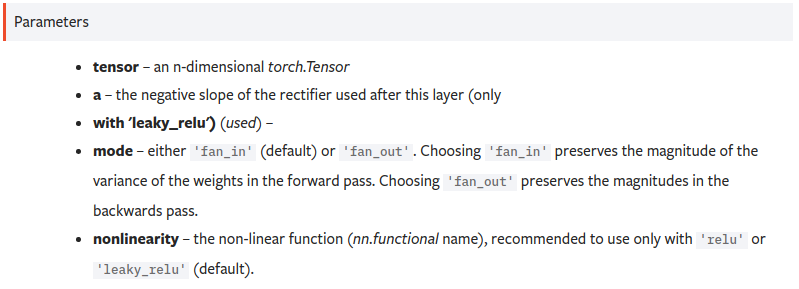

docs: Fixed docstring indentation for documentation (#37739)

Summary: Hello there, I was going through the default initialization of some layers and ended up on the `torch.nn.init` documentation. There was a slight issue with the docstrings of both `kaiming_normal_` and `kaiming_uniform_` that yielded a wrong list of function parameters. This PR fixes the indentation in the corresponding docstrings. Any feedback is welcome! Pull Request resolved: https://github.com/pytorch/pytorch/pull/37739 Differential Revision: D21393728 Pulled By: ngimel fbshipit-source-id: 64523cb328e72d2e51c2c42b20a4545c1ec5f478

78d5707041

Fix type annotations and make MyPy run on torch/ (#36584)