Summary:

Currently when cudnn_convolution_relu is passed a channels last Tensor it will return a contiguous Tensor. This PR changes this behavior and bases the output format on the input format.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62482

Reviewed By: ngimel

Differential Revision: D30049905

Pulled By: cpuhrsch

fbshipit-source-id: 98521d14ee03466e7128a1912b9f754ffe10b448

Summary:

Enable Gelu bf16/fp32 on the CPU path using the Mkldnn implementation. Users don't need to call `to_mkldnn()` explicitly. The new fp32 Gelu performs better than the original one.

Add Gelu backward for https://github.com/pytorch/pytorch/pull/53615.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58525

Reviewed By: ejguan

Differential Revision: D29940369

Pulled By: ezyang

fbshipit-source-id: df9598262ec50e5d7f6e96490562aa1b116948bf

Summary:

Fixes https://github.com/pytorch/pytorch/issues/11959

Alternative approach to creating a new `CrossEntropyLossWithSoftLabels` class. This PR simply adds support for "soft targets" AKA class probabilities to the existing `CrossEntropyLoss` and `NLLLoss` classes.

Implementation is dumb and simple right now, but future work can add higher performance kernels for this case.
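As a rough illustration of what "soft targets" mean here, the sketch below computes cross entropy against a vector of class probabilities in plain Python, outside of PyTorch. The names `log_softmax` and `soft_cross_entropy` are hypothetical and only mirror the math; they are not the kernels added by this PR.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over one list of class scores."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_sum for x in logits]


def soft_cross_entropy(logits, target_probs):
    """Cross entropy of one sample against a probability vector."""
    log_probs = log_softmax(logits)
    return -sum(p * lp for p, lp in zip(target_probs, log_probs))


logits = [2.0, 0.5, -1.0]
# A one-hot probability vector reproduces the usual class-index loss.
hard = soft_cross_entropy(logits, [1.0, 0.0, 0.0])
# A "soft" target spreads probability mass over several classes.
soft = soft_cross_entropy(logits, [0.7, 0.2, 0.1])
```

With a one-hot target the sum collapses to the negative log-probability of the labelled class, which is why a single code path can in principle serve both hard and soft labels.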

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61044

Reviewed By: zou3519

Differential Revision: D29876894

Pulled By: jbschlosser

fbshipit-source-id: 75629abd432284e10d4640173bc1b9be3c52af00

Summary:

Fixes Python part of https://github.com/pytorch/pytorch/issues/60747

Enhances the Python versions of `Transformer`, `TransformerEncoderLayer`, and `TransformerDecoderLayer` to support callables as their activation functions. The old way of specifying activation function still works as well.
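A minimal sketch of how an argument can accept either a name or a callable, in plain Python. The helper `resolve_activation` and the lookup table are hypothetical and are not taken from the PR.

```python
import math


def relu(x):
    return max(0.0, x)


def gelu(x):
    # Exact GELU expressed through the Gaussian error function.
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


_NAMED_ACTIVATIONS = {"relu": relu, "gelu": gelu}


def resolve_activation(activation):
    """Accept either a known name or any callable (hypothetical helper)."""
    if isinstance(activation, str):
        try:
            return _NAMED_ACTIVATIONS[activation]
        except KeyError:
            raise ValueError(f"unknown activation name: {activation!r}") from None
    if callable(activation):
        return activation
    raise TypeError("activation must be a string or a callable")


by_name = resolve_activation("relu")               # the old way keeps working
by_callable = resolve_activation(lambda x: x * x)  # any callable is accepted
```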

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61355

Reviewed By: bdhirsh

Differential Revision: D29967302

Pulled By: jbschlosser

fbshipit-source-id: 8ee6f20083d49dcd3ab432a18e6ad64fe1e05705

Summary:

Here is the PR to enable the softmax calculation with data type of `bfloat16` when not along the last dim.

* Use bf16 specialization for forward calculation to reduce the bf16/fp32 cast in vec template.

* Release the bf16 limitation for backward calculation.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/60371

Reviewed By: ejguan

Differential Revision: D29563109

Pulled By: cpuhrsch

fbshipit-source-id: f6b439fa3850a6c633f35db65ea3d735b747863e

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62281

Closes gh-24646, Closes gh-24647

There is no `TensorIterator` equivalent to these kernels, so this is just migrating the existing kernels over to the ATen style.

I've benchmarked for contiguous tensors with this script:

```

import torch

shape = (10, 10, 100, 100)
x = torch.randn(*shape, device='cuda')
w = torch.randn((10, 1, 5, 5), device='cuda')

# Depthwise convolution: groups equals the number of input channels
for _ in range(100):
    torch.nn.functional.conv2d(x, w, groups=10)

```

and similarly for backwards. I see these as the same to within measurement error.

| | Master (us) | This PR (us) |
|------------------:|:-------------------:|:--------------------:|
| Forward | 133.5 | 133.6 |
| Backward (input) | 1,102 | 1,119 |
| Backward (weight) | 2,220 | 2,217 |

Test Plan: Imported from OSS

Reviewed By: ejguan

Differential Revision: D29943062

Pulled By: ngimel

fbshipit-source-id: fc5d16496eb733743face7c5a14e532d7b8ee26a

Summary:

Part of the fix for https://github.com/pytorch/pytorch/issues/12013

Checks if the inputs and outputs are non-zero in order to allow the Bilinear layer to accept 0-dim batch sizes. The if-check for this checks for both input and output dim sizes since the `_trilinear` function is written to work with both forward and backward for Bilinear.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/47106

Reviewed By: ejguan

Differential Revision: D29935589

Pulled By: jbschlosser

fbshipit-source-id: 607d3352bd4f88e2528c64408f04999960be049d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62006

Closes gh-24646, gh-24647

There is no `TensorIterator` equivalent to these kernels, so this is just migrating the existing kernels over to the ATen style.

I've benchmarked for contiguous tensors with this script:

```

import torch

shape = (10, 10, 100, 100)
x = torch.randn(*shape, device='cuda')
w = torch.randn((10, 1, 5, 5), device='cuda')

# Depthwise convolution: groups equals the number of input channels
for _ in range(100):
    torch.nn.functional.conv2d(x, w, groups=10)

```

and similarly for backwards. I see these as the same to within measurement error.

| | Master (us) | This PR (us) |
|------------------:|:-------------------:|:--------------------:|
| Forward | 133.5 | 133.6 |
| Backward (input) | 1,102 | 1,119 |
| Backward (weight) | 2,220 | 2,217 |

Test Plan: Imported from OSS

Reviewed By: jbschlosser

Differential Revision: D29883676

Pulled By: ngimel

fbshipit-source-id: 9b2ac62cdd8a84e1a23ffcd66035b2b2fe2374d8

Summary:

Fixes https://github.com/pytorch/pytorch/issues/61924

The fused backward kernel was using the weight dtype to detect mixed precision usage, but the weights can be none and the `running_mean` and `running_var` can still be mixed precision. So, I update the check to look at those variables as well.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61962

Reviewed By: albanD

Differential Revision: D29825516

Pulled By: ngimel

fbshipit-source-id: d087fbf3bed1762770cac46c0dcec30c03a86fda

Summary:

Fixes https://github.com/pytorch/pytorch/issues/58816

- enhance the backward of `nn.SmoothL1Loss` to allow integral `target`

- add test cases in `test_nn.py` to check the `input.grad` between the integral input and its floating counterpart.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61112

Reviewed By: mrshenli

Differential Revision: D29775660

Pulled By: albanD

fbshipit-source-id: 544eabb6ce1ea13e1e79f8f18c70f148e92be508

Summary:

Fixes https://github.com/pytorch/pytorch/issues/61242

Previous code was wrongly checking if a tensor is a buffer in a module by comparing values; fix compares names instead.

Docs need some updating as well- current plan is to bump that to a separate PR, but I'm happy to do it here as well if preferred.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61429

Reviewed By: gchanan

Differential Revision: D29712341

Pulled By: jbschlosser

fbshipit-source-id: 41f29ab746505e60f13de42a9053a6770a3aac22

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61584

add_relu is not working with broadcasting. This registers a scalar version of add_relu in native_functions that casts to tensor before calling the regular function. TensorIterator handles broadcasting analogously to existing add.

ghstack-source-id: 133480068

Test Plan: python3 test/test_nn.py TestAddRelu

Reviewed By: kimishpatel

Differential Revision: D29641768

fbshipit-source-id: 1b0ecfdb7eaf44afed83c9e9e74160493c048cbc

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/60517

This fixes module support in LazyModuleMixin for the bug reported in issue #60132

Check the link: https://github.com/pytorch/pytorch/issues/60132

We will have to update lazy_extension given the dependency on module.py and update the unit test as well.

Test Plan:

Unit test passes

torchrec test passes

Reviewed By: albanD

Differential Revision: D29274068

fbshipit-source-id: 1c20f7f0556e08dc1941457ed20c290868346980

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59987

Similar to GroupNorm, improve the numerical stability of LayerNorm by using the Welford algorithm and pairwise summation.
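For readers unfamiliar with the Welford update, here is a minimal plain-Python sketch of the one-pass mean and variance computation. It is only an illustration of the technique, not the vectorized kernel from this PR, and `welford_mean_var` is a hypothetical name. The biased variance (dividing by the count) is an assumption made to match how layer normalization is usually defined.

```python
def welford_mean_var(values):
    """One-pass mean and biased variance using Welford's update."""
    count = 0
    mean = 0.0
    m2 = 0.0  # running sum of squared deviations from the current mean
    for x in values:
        count += 1
        delta = x - mean
        mean += delta / count
        m2 += delta * (x - mean)
    if count == 0:
        raise ValueError("need at least one value")
    return mean, m2 / count


# A large offset with a small spread is the case where computing
# E[x^2] - E[x]^2 directly tends to lose precision.
data = [1e8 + d for d in (1.0, 2.0, 3.0, 4.0)]
mean, var = welford_mean_var(data)
```

The update never subtracts two large, nearly equal quantities, which is the source of the improved stability.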

Test Plan: buck test mode/dev-nosan //caffe2/test:nn -- "LayerNorm"

Reviewed By: ngimel

Differential Revision: D29115235

fbshipit-source-id: 5183346c3c535f809ec7d98b8bdf6d8914bfe790

Summary:

Fixes https://github.com/pytorch/pytorch/issues/24610

Aten Umbrella issue https://github.com/pytorch/pytorch/issues/24507

Related to https://github.com/pytorch/pytorch/issues/59765

The performance does not change between this PR and master with the following benchmark script:

<details>

<summary>Benchmark script</summary>

```python

import torch
import torch.nn as nn
import time

torch.manual_seed(0)


def _time():
    # Wait for pending CUDA work so the timestamp reflects finished kernels
    torch.cuda.synchronize()
    MS_PER_SECOND = 1000
    return time.perf_counter() * MS_PER_SECOND


device = "cuda"
C = 30
softmax = nn.LogSoftmax(dim=1)
n_runs = 250

for reduction in ["none", "mean", "sum"]:
    for N in [100_000, 500_000, 1_000_000]:
        fwd_t = 0
        bwd_t = 0
        data = torch.randn(N, C, device=device)
        target = torch.empty(N, dtype=torch.long, device=device).random_(0, C)
        loss = nn.NLLLoss(reduction=reduction)
        input = softmax(data)
        for i in range(n_runs):
            t1 = _time()
            result = loss(input, target)
            t2 = _time()
            fwd_t = fwd_t + (t2 - t1)
        fwd_avg = fwd_t / n_runs
        print(
            f"input size({N}, {C}), reduction: {reduction} "
            f"forward time is {fwd_avg:.2f} (ms)"
        )
    print()

```

</details>

## master

```

input size(100000, 30), reduction: none forward time is 0.02 (ms)

input size(500000, 30), reduction: none forward time is 0.08 (ms)

input size(1000000, 30), reduction: none forward time is 0.15 (ms)

input size(100000, 30), reduction: mean forward time is 1.81 (ms)

input size(500000, 30), reduction: mean forward time is 8.24 (ms)

input size(1000000, 30), reduction: mean forward time is 16.46 (ms)

input size(100000, 30), reduction: sum forward time is 1.66 (ms)

input size(500000, 30), reduction: sum forward time is 8.24 (ms)

input size(1000000, 30), reduction: sum forward time is 16.46 (ms)

```

## this PR

```

input size(100000, 30), reduction: none forward time is 0.02 (ms)

input size(500000, 30), reduction: none forward time is 0.08 (ms)

input size(1000000, 30), reduction: none forward time is 0.15 (ms)

input size(100000, 30), reduction: mean forward time is 1.80 (ms)

input size(500000, 30), reduction: mean forward time is 8.24 (ms)

input size(1000000, 30), reduction: mean forward time is 16.46 (ms)

input size(100000, 30), reduction: sum forward time is 1.66 (ms)

input size(500000, 30), reduction: sum forward time is 8.24 (ms)

input size(1000000, 30), reduction: sum forward time is 16.46 (ms)

```

Pull Request resolved: https://github.com/pytorch/pytorch/pull/60097

Reviewed By: mrshenli

Differential Revision: D29303099

Pulled By: ngimel

fbshipit-source-id: fc0d636543a79ea81158d286dcfb84043bec079a

Summary:

Before this change, the implementation assumed that the number of groups, input channels and output channels are all the same, which is not always the case.

Extend the implementation to support any number of output channels as long as the number of groups equals the number of input channels (i.e. kernel.size(1) == 1)

Fixes https://github.com/pytorch/pytorch/issues/60176

Pull Request resolved: https://github.com/pytorch/pytorch/pull/60460

Reviewed By: albanD

Differential Revision: D29299693

Pulled By: malfet

fbshipit-source-id: 31130c71ce86535ccfba2f4929eee3e2e287b2f0

Summary:

Fixes https://github.com/pytorch/pytorch/issues/50192

As discussed in the issue, the RNN APIs currently do not support inputs with `seq_len=0`, and the error message does not make this clear. This PR adds a clearer error message stating that none of the RNN APIs (nn.RNN, nn.GRU and nn.LSTM) support `seq_len=0`, for either one-directional or bi-directional layers.

```

import torch

input_size = 5
hidden_size = 6
rnn = torch.nn.GRU(input_size, hidden_size)

# Sequence lengths 3, 2, 1, 0; the last iteration triggers the error
for seq_len in reversed(range(4)):
    output, h_n = rnn(torch.zeros(seq_len, 10, input_size))
    print('{}, {}'.format(output.shape, h_n.shape))

```

Previously this produced the following output:

```

torch.Size([3, 10, 6]), torch.Size([1, 10, 6])

torch.Size([2, 10, 6]), torch.Size([1, 10, 6])

torch.Size([1, 10, 6]), torch.Size([1, 10, 6])

Traceback (most recent call last):

File "test.py", line 8, in <module>

output, h_n = rnn(torch.zeros(seq_len, 10, input_size))

File "/opt/miniconda3/lib/python3.8/site-packages/torch/nn/modules/module.py", line 727, in _call_impl

result = self.forward(*input, **kwargs)

File "/opt/miniconda3/lib/python3.8/site-packages/torch/nn/modules/rnn.py", line 739, in forward

result = _VF.gru(input, hx, self._flat_weights, self.bias, self.num_layers,

RuntimeError: stack expects a non-empty TensorList

```

With this PR, the error message changes for any combination of [RNN, GRU and LSTM] x [one-directional, bi-directional].

Let's illustrate the change with the following code snippet:

```

import torch

input_size = 5

hidden_size = 6

rnn = torch.nn.LSTM(input_size, hidden_size, bidirectional=True)

output, h_n = rnn(torch.zeros(0, 10, input_size))

```

This gives the following output:

```

Traceback (most recent call last):

File "<stdin>", line 2, in <module>

File "/fsx/users/iramazanli/pytorch/torch/nn/modules/module.py", line 1054, in _call_impl

return forward_call(*input, **kwargs)

File "/fsx/users/iramazanli/pytorch/torch/nn/modules/rnn.py", line 837, in forward

result = _VF.gru(input, hx, self._flat_weights, self.bias, self.num_layers,

RuntimeError: Expected sequence length to be larger than 0 in RNN

```

***********************************

A change for packed sequences did not seem necessary, because the error message in the following code snippet is already clear about the issue:

```

import torch

import torch.nn.utils.rnn as rnn_utils

import torch.nn as nn

packed = rnn_utils.pack_sequence([])

```

returns:

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/fsx/users/iramazanli/pytorch/torch/nn/utils/rnn.py", line 398, in pack_sequence

return pack_padded_sequence(pad_sequence(sequences), lengths, enforce_sorted=enforce_sorted)

File "/fsx/users/iramazanli/pytorch/torch/nn/utils/rnn.py", line 363, in pad_sequence

return torch._C._nn.pad_sequence(sequences, batch_first, padding_value)

RuntimeError: received an empty list of sequences

```

Pull Request resolved: https://github.com/pytorch/pytorch/pull/60269

Reviewed By: mrshenli

Differential Revision: D29299914

Pulled By: iramazanli

fbshipit-source-id: 5ca98faa28d4e6a5a2f7600a30049de384a3b132

Summary:

Partially addresses https://github.com/pytorch/pytorch/issues/49825 by improving the testing

- Rename some of the old tests that had "inplace_view" in their names, but actually mean "inplace_[update_]on_view" so there is no confusion with the naming

- Adds some tests in test_view_ops that verify basic behavior

- Add tests that creation meta is properly handled for no-grad, multi-output, and custom function cases

- Add test that verifies that in the cross dtype view case, the inplace views won't be accounted in the backward graph on rebase as mentioned in the issue.

- Update inference mode tests to also check in-place

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59891

Reviewed By: albanD

Differential Revision: D29272546

Pulled By: soulitzer

fbshipit-source-id: b12acf5f0e3f788167ebe268423cdb58481b56f6

Summary:

Fixes https://github.com/pytorch/pytorch/issues/27655

This PR adds a C++ and Python version of ReflectionPad3d with structured kernels. The implementation uses lambdas extensively to better share code from the backward and forward pass.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59791

Reviewed By: gchanan

Differential Revision: D29242015

Pulled By: jbschlosser

fbshipit-source-id: 18e692d3b49b74082be09f373fc95fb7891e1b56

Summary:

Following https://github.com/pytorch/pytorch/issues/59624 I observed some straggling failing tests on Ampere due to TF32 thresholds. This PR just twiddles some more thresholds to fix the (6) failing tests I saw on A100.

CC Flamefire ptrblck ngimel

Pull Request resolved: https://github.com/pytorch/pytorch/pull/60209

Reviewed By: gchanan

Differential Revision: D29220508

Pulled By: ngimel

fbshipit-source-id: 7c83187a246e1b3a24b181334117c0ccf2baf311

Summary:

Makes it possible for the first registered parametrization to depend on a number of parameters rather than just one. Examples of these types of parametrizations are `torch.nn.utils.weight_norm` and low rank parametrizations via the multiplication of an `n x k` tensor by a `k x m` tensor with `k <= m, n`.

Follows the plan outlined in https://github.com/pytorch/pytorch/pull/33344#issuecomment-768574924. A short summary of the idea is: we call `right_inverse` when registering a parametrization to generate the tensors that we are going to save. If `right_inverse` returns a sequence of tensors, then we save them as `original0`, `original1`... If it returns a `Tensor` or a sequence of length 1, we save it as `original`.

Many-to-one parametrizations are only allowed as the first parametrization registered. Any parametrizations registered after it need to be one-to-one.
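The naming rule described above can be sketched in plain Python as follows. `FakeTensor` stands in for a real tensor and `storage_names` is a hypothetical helper; neither appears in the PR, and the real registration code does considerably more than this.

```python
from collections.abc import Sequence


class FakeTensor:
    """Placeholder for a tensor; only its identity matters in this sketch."""


def storage_names(right_inverse_output):
    """Map the output of right_inverse to the names it would be saved under."""
    out = right_inverse_output
    if isinstance(out, FakeTensor):
        return {"original": out}
    if isinstance(out, Sequence):
        if not all(isinstance(t, FakeTensor) for t in out):
            raise TypeError("every element of the sequence must be a tensor")
        if len(out) == 1:
            return {"original": out[0]}
        return {f"original{i}": t for i, t in enumerate(out)}
    raise TypeError("right_inverse must return a tensor or a sequence of tensors")


a, b = FakeTensor(), FakeTensor()
many_to_one = storage_names((a, b))  # keys: original0, original1
one_to_one = storage_names(a)        # key: original
```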

There were a number of choices in the implementation:

If `right_inverse` returns a sequence of parameters, then we unpack it in the forward. This makes it possible to write code such as:

```python

class Sum(nn.Module):
    def forward(self, X, Y):
        return X + Y

    def right_inverse(self, Z):
        # Split Z into two tensors whose sum recovers Z
        return Z, torch.zeros_like(Z)

```

rather than having to unpack manually a list or a tuple within the `forward` function.

At the moment the errors are a bit all over the place. This is to avoid having to check some properties of `forward` and `right_inverse` when they are registered. I left this like this for now, but I believe it'd be better to call these functions when they are registered to make sure the invariants hold and throw errors as soon as possible.

The invariants are the following:

1. The following code should be well-formed

```python

X = module.weight
Y = param.right_inverse(X)
assert isinstance(Y, Tensor) or isinstance(Y, collections.abc.Sequence)
Z = param(Y) if isinstance(Y, Tensor) else param(*Y)

```

in other words, if `Y` is a `Sequence` of `Tensor`s (we also check that the elements of the sequence are Tensors), then it is of the same length as the number of parameters `param.forward` accepts.

2. Always: `X.dtype == Z.dtype and X.shape == Z.shape`. This is to protect the user from shooting themselves in the foot, as it's too odd for a parametrization to change the metadata of a tensor.

3. If it's one-to-one: `X.dtype == Y.dtype`. This is to be able to do `X.set_(Y)` so that if a user first instantiates the optimiser and then puts the parametrisation, then we reuse `X` and the user does not need to add a new parameter to the optimiser. Alas, this is not possible when the parametrisation is many-to-one. The current implementation of `spectral_norm` and `weight_norm` does not seem to care about this, so this would not be a regression. I left a warning in the documentation though, as this case is a bit tricky.

I'm still missing to go over the formatting of the documentation, I'll do that tomorrow.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58488

Reviewed By: soulitzer

Differential Revision: D29100708

Pulled By: albanD

fbshipit-source-id: b9e91f439cf6b5b54d5fa210ec97c889efb9da38

Summary:

Implements a number of changes discussed with soulitzer offline.

In particular:

- Initialise `u`, `v` in `__init__` rather than in `_update_vectors`

- Initialise `u`, `v` to some reasonable vectors by doing 15 power iterations at the start

- Simplify the code of `_reshape_weight_to_matrix` (and make it faster) by using `flatten`
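To make the role of `u` and `v` concrete, the following plain-Python sketch estimates the largest singular value of a small matrix by power iteration. It assumes a deterministic all-ones starting vector for reproducibility, which is a choice made only for this example, and the function names are hypothetical rather than taken from `SpectralNorm`.

```python
import math


def matvec(W, v):
    return [sum(w_ij * v_j for w_ij, v_j in zip(row, v)) for row in W]


def matvec_t(W, u):
    # Multiply by the transpose: (W^T u)_j = sum_i W[i][j] * u[i]
    return [sum(W[i][j] * u[i] for i in range(len(W))) for j in range(len(W[0]))]


def normalize(x, eps=1e-12):
    norm = math.sqrt(sum(a * a for a in x))
    return [a / max(norm, eps) for a in x]


def spectral_norm_estimate(W, n_iters=15):
    """Estimate the largest singular value of W by power iteration."""
    u = normalize([1.0] * len(W))
    for _ in range(n_iters):
        v = normalize(matvec_t(W, u))  # right singular vector estimate
        u = normalize(matvec(W, v))    # left singular vector estimate
    # sigma = u^T W v
    return sum(u_i * y_i for u_i, y_i in zip(u, matvec(W, v)))


W = [[3.0, 0.0], [0.0, 1.0]]
sigma = spectral_norm_estimate(W)  # converges towards 3.0 for this matrix
```

Running a handful of iterations up front, as the second bullet describes, means the first real forward pass starts from vectors that are already close to the dominant singular pair.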

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59564

Reviewed By: ailzhang

Differential Revision: D29066238

Pulled By: soulitzer

fbshipit-source-id: 6a58e39ddc7f2bf989ff44fb387ab408d4a1ce3d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58950

Use tensor iterator's API to set grain size in order to parallelize gelu op.

ghstack-source-id: 130947174

Test Plan: test_gelu

Reviewed By: ezyang

Differential Revision: D28689819

fbshipit-source-id: 0a02066d47a4d9648323c5ec27d7e0e91f4c303a

Summary:

Make sure tests that are explicitly run without TF32 don't use TF32 operations

Fixes https://github.com/pytorch/pytorch/issues/52278

After the tf32 accuracy tolerance was increased to 0.05 this is the only remaining change required to fix the above issue (for TestNN.test_Conv3d_1x1x1_no_bias_cuda)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59624

Reviewed By: heitorschueroff

Differential Revision: D28996279

Pulled By: ngimel

fbshipit-source-id: 7f1b165fd52cfa0898a89190055b7a4b0985573a

Summary:

As per title. Resolves https://github.com/pytorch/pytorch/issues/56683.

`gradgradcheck` will fail once `target.requires_grad() == True` because of the limitations of the current double backward implementation.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59447

Reviewed By: agolynski

Differential Revision: D28910140

Pulled By: albanD

fbshipit-source-id: 20934880eb4d22bec34446a6d1be0a38ef95edc7

Summary:

This PR introduces a helper function named `torch.nn.utils.skip_init()` that accepts a module class object + `args` / `kwargs` and instantiates the module while skipping initialization of parameter / buffer values. See discussion at https://github.com/pytorch/pytorch/issues/29523 for more context. Example usage:

```python

import torch

m = torch.nn.utils.skip_init(torch.nn.Linear, 5, 1)

print(m.weight)

m2 = torch.nn.utils.skip_init(torch.nn.Linear, 5, 1, device='cuda')

print(m2.weight)

m3 = torch.nn.utils.skip_init(torch.nn.Linear, in_features=5, out_features=1)

print(m3.weight)

```

```

Parameter containing:

tensor([[-3.3011e+28, 4.5915e-41, -3.3009e+28, 4.5915e-41, 0.0000e+00]],

requires_grad=True)

Parameter containing:

tensor([[-2.5339e+27, 4.5915e-41, -2.5367e+27, 4.5915e-41, 0.0000e+00]],

device='cuda:0', requires_grad=True)

Parameter containing:

tensor([[1.4013e-45, 0.0000e+00, 0.0000e+00, 0.0000e+00, 0.0000e+00]],

requires_grad=True)

```

Bikeshedding on the name / namespace is welcome, as well as comments on the design itself - just wanted to get something out there for discussion.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57555

Reviewed By: zou3519

Differential Revision: D28640613

Pulled By: jbschlosser

fbshipit-source-id: 5654f2e5af5530425ab7a9e357b6ba0d807e967f

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48919

move data indexing utils

parallel inference contiguous path

parallel inference channels last path

add dim apply

optimize update stats

add channels last support for backward

Revert "add channels last support for backward"

This reverts commit cc5e29dce44395250f8e2abf9772f0b99f4bcf3a.

Revert "optimize update stats"

This reverts commit 7cc6540701448b9cfd5833e36c745b5015ae7643.

Revert "add dim apply"

This reverts commit b043786d8ef72dee5cf85b5818fcb25028896ecd.

bug fix

add batchnorm nhwc test for cpu, including C=1 and HW=1

Test Plan: Imported from OSS

Reviewed By: glaringlee

Differential Revision: D25399468

Pulled By: VitalyFedyunin

fbshipit-source-id: a4cd7a09cd4e1a8f5cdd79c7c32c696d0db386bd

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48918

enable test case on AvgPool2d channels last for CPU

Test Plan: Imported from OSS

Reviewed By: glaringlee

Differential Revision: D25399466

Pulled By: VitalyFedyunin

fbshipit-source-id: 9477b0c281c0de5ed981a97e2dcbe6072d7f0aef

Summary:

Adds a new file under `torch/nn/utils/parametrizations.py` which should contain all the parametrization implementations

For spectral_norm we add the `SpectralNorm` module which can be registered using `torch.nn.utils.parametrize.register_parametrization` or using a wrapper: `spectral_norm`, the same API the old implementation provided.

Most of the logic is borrowed from the old implementation:

- Just like the old implementation, there are cases when retrieving the weight should perform another power iteration (thus updating the weight) and cases where it shouldn't. For example, in eval mode (`self.training=False`) we do not perform power iteration.

There are also some differences/difficulties with the new implementation:

- Using the new parametrization functionality as-is, there doesn't seem to be a good way to tell whether a 'forward' call was the result of parametrizations being unregistered (with leave_parametrizations=True) or of the injected property's getter being invoked. The issue is that we want to perform power iteration in the latter case but not the former, but we don't have this control as-is. So, in this PR I modified the parametrization functionality to change the module to eval mode before triggering their forward call

- Updates the vectors based on the weight on initialization to fix https://github.com/pytorch/pytorch/issues/51800 (this avoids silently updating weights in eval mode). This also means that we perform twice as many power iterations by the first forward.

- right_inverse is just the identity for now, but maybe it should assert that the passed value already satisfies the constraints

- So far, all the old spectral_norm tests have been cloned, but maybe we don't need so much testing now that the core functionality is already well tested

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57784

Reviewed By: ejguan

Differential Revision: D28413201

Pulled By: soulitzer

fbshipit-source-id: e8f1140f7924ca43ae4244c98b152c3c554668f2

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55189

Currently EmbeddingBag and its variants support either int32 or int64 indices/offsets. We have use cases with a mix of int32 and int64 indices, which are not supported yet. To avoid introducing too many branches, we can simply cast the offsets type to the indices type when they are not the same.

Test Plan: unit tests

Reviewed By: allwu

Differential Revision: D27482738

fbshipit-source-id: deeadd391d49ff65d17d016092df1839b82806cc

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57558

Fixes #53359

If someone directly saves an nn.LSTM in PyTorch 1.7 and then loads it in PyTorch 1.8, it errors out with the following:

```

# In PyTorch 1.7
import torch

model = torch.nn.LSTM(2, 3)
torch.save(model, 'lstm17.pt')

# In PyTorch 1.8
model = torch.load('lstm17.pt')
# AttributeError: 'LSTM' object has no attribute 'proj_size'

```

Although we do not officially support this (directly saving modules via torch.save), it used to work and the fix is very simple. This PR adds an extra line to `__setstate__`: if the state we are passed does not have a `proj_size` attribute, we assume it was saved from PyTorch 1.7 and older and set `proj_size` equal to 0.
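The pattern can be sketched without PyTorch as follows. `TinyLSTM` is a hypothetical stand-in class, not `nn.LSTM`, and the state dictionary is built by hand to imitate what an older version would have saved.

```python
class TinyLSTM:
    """Stand-in for a module class; not the real nn.LSTM."""

    def __init__(self, input_size, hidden_size, proj_size=0):
        self.input_size = input_size
        self.hidden_size = hidden_size
        self.proj_size = proj_size

    def __setstate__(self, state):
        self.__dict__.update(state)
        # State saved before `proj_size` existed lacks the key, so fall
        # back to the value that means "no projection".
        if "proj_size" not in state:
            self.proj_size = 0


# State as an older version of the class would have saved it.
old_state = {"input_size": 2, "hidden_size": 3}

# Unpickling creates the object without calling __init__ and then hands
# the saved state to __setstate__; the two lines below imitate that.
restored = TinyLSTM.__new__(TinyLSTM)
restored.__setstate__(old_state)
```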

Test Plan:

I wrote a test that tests `__setstate__`. But also,

Run the following:

```

# In PyTorch 1.7
import torch

x = torch.ones(32, 5, 2)
model = torch.nn.LSTM(2, 3)
torch.save(model, 'lstm17.pt')
y17 = model(x)

# Using this PR
model = torch.load('lstm17.pt')
x = torch.ones(32, 5, 2)
y18 = model(x)

```

and finally compare y17 and y18.

Reviewed By: mrshenli

Differential Revision: D28198477

Pulled By: zou3519

fbshipit-source-id: e107d1ebdda23a195a1c3574de32a444eeb16191

Summary:

Fix a numerical issue of CUDA channels-last SyncBatchNorm

The added test is a repro for the numerical issue. Thanks for the help from jjsjann123 who identified the root cause. Since pytorch SBN channels-last code was migrated from [nvidia/apex](https://github.com/nvidia/apex), apex SBN channels-last also has this issue. We will submit a fix there soon.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57077

Reviewed By: mruberry

Differential Revision: D28107672

Pulled By: ngimel

fbshipit-source-id: 0c80e79ddb48891058414ad8a9bedd80f0f7f8df

Summary:

Fixes https://github.com/pytorch/pytorch/issues/45687

Fix changes the input size check for `InstanceNorm*d` to be more restrictive and correctly reject sizes with only a single spatial element, regardless of batch size, to avoid infinite variance.
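A minimal sketch of such a check, assuming inputs are laid out as (N, C, *spatial). `verify_spatial_size` is a hypothetical name used here for illustration, and the actual check in the PR may differ in details such as the error message.

```python
import math


def verify_spatial_size(shape):
    """Raise if an (N, C, *spatial) shape has only one spatial element."""
    spatial_elements = math.prod(shape[2:])
    if spatial_elements == 1:
        raise ValueError(
            f"expected more than 1 spatial element, got input size {tuple(shape)}"
        )
    return spatial_elements


verify_spatial_size((4, 3, 8, 8))      # fine: 64 values per channel
try:
    verify_spatial_size((4, 3, 1, 1))  # rejected even though the batch size is 4
except ValueError as err:
    message = str(err)
```

Instance normalization computes statistics per sample and per channel, so a larger batch does not add any values to the set the variance is taken over. That is why the batch size plays no role in the check.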

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56659

Reviewed By: pbelevich

Differential Revision: D27948060

Pulled By: jbschlosser

fbshipit-source-id: 21cfea391a609c0774568b89fd241efea72516bb

Summary:

Fixes https://github.com/pytorch/pytorch/issues/56380

BC-breaking note:

This changes the behavior of full backward hooks as they will now fire properly even if no input to the Module requires gradients.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56693

Reviewed By: ezyang

Differential Revision: D27947030

Pulled By: albanD

fbshipit-source-id: e8353d769ba5a2c1b6bdf3b64e2d61308cf624a2

Summary:

Fixes https://github.com/pytorch/pytorch/issues/55587

The fix converts the binary `TensorIterator` used by softplus backwards to a ternary one, adding in the original input for comparison against `beta * threshold`.
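The scalar sketch below shows why the backward needs the original input: the choice between the linear region and the smooth region depends on the input, not on the incoming gradient. It follows the documented forward definition of Softplus, where the function becomes linear once `input * beta > threshold`. The function names and default values are assumptions for this example, and this is not the `TensorIterator` kernel itself.

```python
import math


def softplus(x, beta=1.0, threshold=20.0):
    if x * beta > threshold:
        return x  # linear region, avoids overflow in exp
    return math.log1p(math.exp(beta * x)) / beta


def softplus_backward(grad_output, x, beta=1.0, threshold=20.0):
    """Gradient w.r.t. x; needs grad_output and the original input x."""
    if x * beta > threshold:
        return grad_output  # derivative of the linear region is 1
    z = math.exp(beta * x)
    return grad_output * z / (z + 1.0)  # grad_output * sigmoid(beta * x)


g_mid = softplus_backward(1.0, 0.0)     # sigmoid(0) = 0.5
g_big = softplus_backward(2.0, 100.0)   # linear region passes the gradient through
```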

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56484

Reviewed By: malfet

Differential Revision: D27908372

Pulled By: jbschlosser

fbshipit-source-id: 73323880a5672e0242879690514a17886cbc29cd

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55237

In this PR, we reenable fast-gradcheck and resolve misc issues that arise:

Before landing this PR, land #55182 so that slow tests are still being run periodically.

Bolded indicates the issue is handled in this PR, otherwise it is handled in a previous PR.

**Non-determinism issues**:

- ops that do not have deterministic implementation (as documented https://pytorch.org/docs/stable/generated/torch.use_deterministic_algorithms.html#torch.use_deterministic_algorithms)

- test_pad_cuda (replication_pad2d) (test_nn)

- interpolate (test_nn)

- cummin, cummax (scatter_add_cuda_kernel) (test_ops)

- test_fn_gradgrad_prod_cpu_float64 (test_ops)

Randomness:

- RRelu (new module tests) - we fix by using our own generator as to avoid messing with user RNG state (handled in #54480)

Numerical precision issues:

- jacobian mismatch: test_gelu (test_nn, float32, not able to replicate locally) - we fixed this by disabling for float32 (handled in previous PR)

- cholesky_solve (test_linalg): #56235 handled in previous PR

- **cumprod** (test_ops) - #56275 disabled fast gradcheck

Not yet replicated:

- test_relaxed_one_hot_categorical_2d (test_distributions)

Test Plan: Imported from OSS

Reviewed By: albanD

Differential Revision: D27920906

fbshipit-source-id: 894dd7bf20b74f1a91a5bc24fe56794b4ee24656

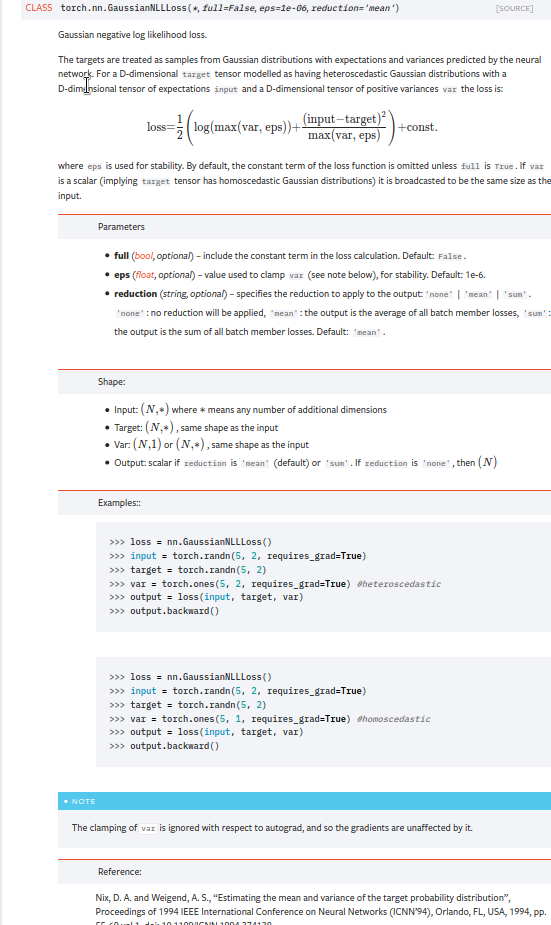

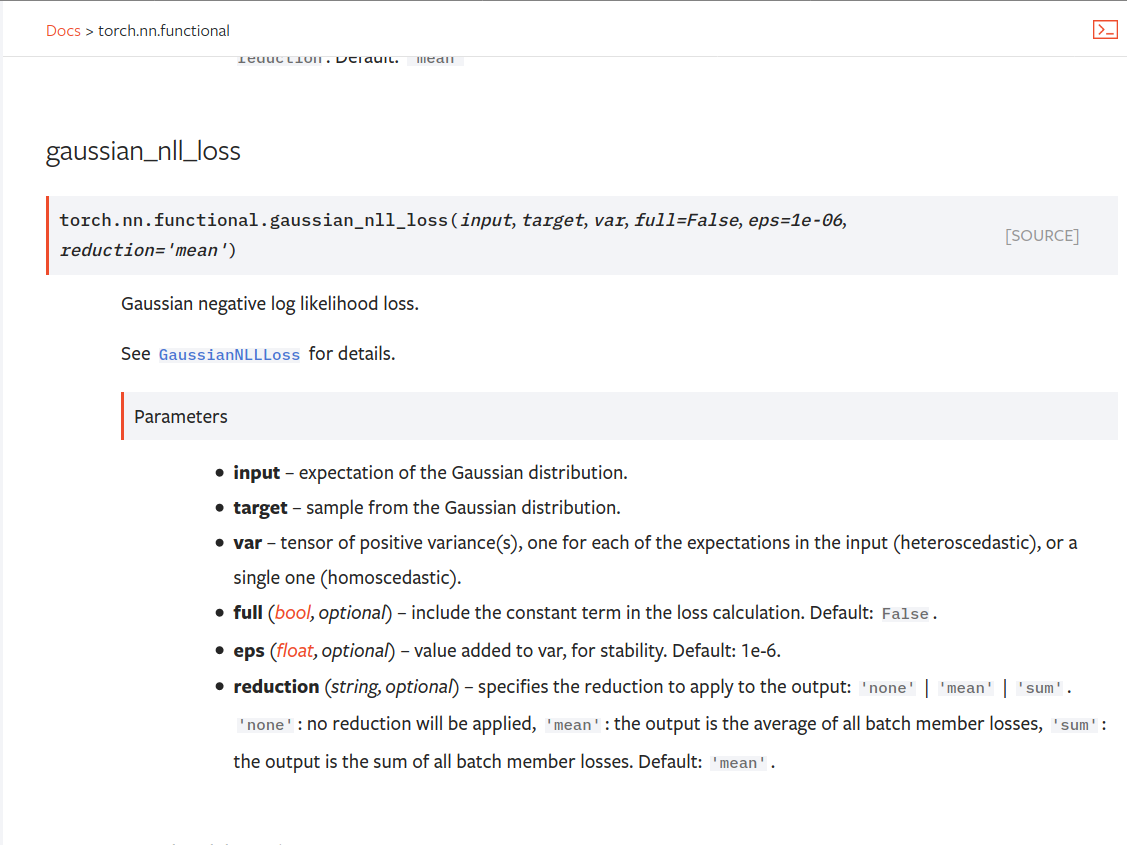

Summary:

Fixes https://github.com/pytorch/pytorch/issues/53964. cc albanD almson

## Major changes:

- Overhauled the actual loss calculation so that the shapes are now correct (in functional.py)

- added the missing doc in nn.functional.rst

## Minor changes (in functional.py):

- I removed the previous check on whether input and target were the same shape. This is to allow for broadcasting, say when you have 10 predictions that all have the same target.

- I added some comments to explain each shape check in detail. Let me know if these should be shortened/cut.

Screenshots of updated docs attached.

Let me know what you think, thanks!

## Edit: Description of change of behaviour (affecting BC):

The backwards-compatibility is only affected for the `reduction='none'` mode. This was the source of the bug. For tensors with size (N, D), the old returned loss had size (N), as incorrect summation was happening. It will now have size (N, D) as expected.

### Example

Define input tensors, all with size (2, 3).

`input = torch.tensor([[0., 1., 3.], [2., 4., 0.]], requires_grad=True)`

`target = torch.tensor([[1., 4., 2.], [-1., 2., 3.]])`

`var = 2*torch.ones(size=(2, 3), requires_grad=True)`

Initialise loss with reduction mode 'none'. We expect the returned loss to have the same size as the input tensors, (2, 3).

`loss = torch.nn.GaussianNLLLoss(reduction='none')`

Old behaviour:

`print(loss(input, target, var)) `

`# Gives tensor([3.7897, 6.5397], grad_fn=<MulBackward0>). This has size (2).`

New behaviour:

`print(loss(input, target, var)) `

`# Gives tensor([[0.5966, 2.5966, 0.5966], [2.5966, 1.3466, 2.5966]], grad_fn=<MulBackward0>)`

`# This has the expected size, (2, 3).`

To recover the old behaviour, sum along all dimensions except for the 0th:

`print(loss(input, target, var).sum(dim=1))`

`# Gives tensor([3.7897, 6.5397], grad_fn=<SumBackward1>).`
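The numbers in the example can be checked by hand. The following is a minimal pure-Python sketch, assuming the per-element loss is `0.5 * (log(var) + (input - target)**2 / var)` with the constant term omitted, which reproduces the values quoted above. The helper name `gaussian_nll_elementwise` is hypothetical and not part of PyTorch.

```python
import math

def gaussian_nll_elementwise(inp, target, var):
    """Per-element Gaussian NLL without the constant term (assumed form)."""
    return [
        [0.5 * (math.log(v) + (x - t) ** 2 / v) for x, t, v in zip(xr, tr, vr)]
        for xr, tr, vr in zip(inp, target, var)
    ]

inp = [[0.0, 1.0, 3.0], [2.0, 4.0, 0.0]]
target = [[1.0, 4.0, 2.0], [-1.0, 2.0, 3.0]]
var = [[2.0] * 3, [2.0] * 3]

# New behaviour: one loss value per element, size (2, 3).
new_loss = gaussian_nll_elementwise(inp, target, var)
# Old behaviour: summed over the last dimension, size (2).
old_loss = [sum(row) for row in new_loss]

print([[round(v, 4) for v in row] for row in new_loss])
print([round(v, 4) for v in old_loss])
```

Summing each row of the new result gives back the old per-sample values, which is what `.sum(dim=1)` does in the snippet above.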

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56469

Reviewed By: jbschlosser, agolynski

Differential Revision: D27894170

Pulled By: albanD

fbshipit-source-id: 197890189c97c22109491c47f469336b5b03a23f

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54812

Needed for quantization since different attribute might refer to the same module instance

Test Plan: Imported from OSS

Reviewed By: vkuzo

Differential Revision: D27408376

fbshipit-source-id: cada85c4a1772d3dd9502c3f6f9a56d690d527e7

Summary:

Fixes https://github.com/pytorch/pytorch/issues/25100#43112

EDIT: pardon my inexperience, as this is my first PR here. I did not realize that the doc should not have any trailing whitespace, and I also hit `[E712] comparison to False should be 'if cond is False:' or 'if not cond:'`. Both are now fixed.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55285

Reviewed By: mruberry

Differential Revision: D27765694

Pulled By: jbschlosser

fbshipit-source-id: c34774fa065d67c0ac130de20a54e66e608bdbf4

Summary:

This PR adds a `padding_idx` parameter to `nn.EmbeddingBag` and `nn.functional.embedding_bag`. As with `nn.Embedding`'s `padding_idx` argument, if an embedding's index is equal to `padding_idx` it is ignored, so it is not included in the reduction.

This PR does not add support for `padding_idx` for quantized or ONNX `EmbeddingBag` for opset10/11 (opset9 is supported). In these cases, an error is thrown if `padding_idx` is provided.
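To illustrate the intended semantics, here is a pure-Python sketch of a sum-mode bag reduction that skips entries equal to `padding_idx`. The function `embedding_bag_sum` is a hypothetical stand-in written for this note and is not the PyTorch kernel.

```python
def embedding_bag_sum(weight, indices, offsets, padding_idx=None):
    """Sum-reduce rows of `weight` per bag, skipping `padding_idx` entries."""
    dim = len(weight[0])
    bounds = list(offsets) + [len(indices)]
    out = []
    for start, end in zip(bounds[:-1], bounds[1:]):
        bag = [0.0] * dim
        for idx in indices[start:end]:
            if idx == padding_idx:
                continue  # padded entries contribute nothing to the reduction
            bag = [acc + w for acc, w in zip(bag, weight[idx])]
        out.append(bag)
    return out

weight = [[1.0, 1.0], [2.0, 2.0], [4.0, 4.0]]
indices = [0, 2, 1, 2]
offsets = [0, 2]  # two bags: indices [0, 2] and [1, 2]

without_padding = embedding_bag_sum(weight, indices, offsets)
with_padding = embedding_bag_sum(weight, indices, offsets, padding_idx=2)
print(without_padding)
print(with_padding)
```

With `padding_idx=2`, row 2 of the table is dropped from both bags, so each bag reduces to its single remaining row.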

Fixes https://github.com/pytorch/pytorch/issues/3194

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49237

Reviewed By: walterddr, VitalyFedyunin

Differential Revision: D26948258

Pulled By: jbschlosser

fbshipit-source-id: 3ca672f7e768941f3261ab405fc7597c97ce3dfc

Summary:

Fixes https://github.com/pytorch/pytorch/issues/25100#43112

EDIT: pardon my inexperience, as this is my first PR here. I did not realize that the doc should not have any trailing whitespace, and I also hit `[E712] comparison to False should be 'if cond is False:' or 'if not cond:'`. Both are now fixed.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55285

Reviewed By: ngimel

Differential Revision: D27710107

Pulled By: jbschlosser

fbshipit-source-id: c4363a4604548c0d84628c4997dd23d6b3afb4d9

Summary:

This PR adds the functionality to use channels_last_3d, aka NDHWC, in Conv3d. It's only enabled when the cuDNN version is greater than or equal to 8.0.5.

Todo:

- [x] add memory_format test

- [x] add random shapes functionality test

Close https://github.com/pytorch/pytorch/pull/52547

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48430

Reviewed By: mrshenli

Differential Revision: D27641452

Pulled By: ezyang

fbshipit-source-id: 0e98957cf30c50c3390903d307dd43bdafd28880

Summary:

There was an error when removing a parametrization with `leave_parametrized=True`. It had escaped the previous tests. This PR should fix that.

**Edit.**

I also took this chance to fix a few mistakes that the documentation had, and to also write the `set_original_` in a more compact way.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55456

Reviewed By: mrshenli

Differential Revision: D27620481

Pulled By: albanD

fbshipit-source-id: f1298ddbcf24566ef48850c62a1eb4d8a3576152

Summary:

Non-backwards-compatible change introduced in https://github.com/pytorch/pytorch/pull/53843 is tripping up a lot of code. Better to set it to False initially and then potentially flip to True in the later version to give people time to adapt.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55169

Reviewed By: mruberry

Differential Revision: D27511150

Pulled By: jbschlosser

fbshipit-source-id: 1ac018557c0900b31995c29f04aea060a27bc525

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48917

max_pool2d channels last support forward path

max_pool2d channels last support backward path

vectorize channels last forward path

rename the header file

fix windows build

combine PoolingKernel.h into Pool.h

add data type check

loosen test_max_pool2d_nhwc to cover device CPU

Test Plan: Imported from OSS

Reviewed By: glaringlee

Differential Revision: D25399470

Pulled By: VitalyFedyunin

fbshipit-source-id: b49b9581f1329a8c2b9c75bb10f12e2650e4c65a

Summary:

This PR enables using MIOpen for RNN FP16 on ROCM.

It does this by altering use_miopen to allow fp16. In the special case where LSTMs use projections we use the default implementation, as it is not implemented in MIOpen at this time. We do send out a warning once to let the user know.

We then remove the various asserts that are no longer necessary since we handle the case.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52475

Reviewed By: H-Huang

Differential Revision: D27449150

Pulled By: malfet

fbshipit-source-id: 06499adb94f28d4aad73fa52890d6ba361937ea6

Summary:

Skips the tests indicated as failing in https://github.com/pytorch/pytorch/issues/54535.

During the ROCm CI upgrade from 4.0.1 to 4.1, some tests regressed. Specifically, FFT tests in test_spectral_ops.py and test_grid_sample in test_nn.py. In order to keep a passing CI signal, we need to disable these temporarily.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54536

Reviewed By: H-Huang

Differential Revision: D27442974

Pulled By: malfet

fbshipit-source-id: 07dffb957757a5fc7afaa5bf78b935a427251ef4

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54901

Some subtleties:

- Need to make sure not to clobber composite definitions when deciding when to generate

- I was lazy and so I didn't make inplace on TensorList work, nor did I make inplace functions that returned void work

- A few tests started complaining that these noop meta functions weren't raising the errors they needed. This is tracked in https://github.com/pytorch/pytorch/issues/54897

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Test Plan: Imported from OSS

Reviewed By: jbschlosser

Differential Revision: D27407232

Pulled By: ezyang

fbshipit-source-id: 5e706a267496368acdafd128942c310954e43d29

Summary:

Fixes https://github.com/pytorch/pytorch/issues/54452

The assertion that fails in the issue is necessary to appease mypy. Instead, I fix `_ntuple` to always return a `tuple`.
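A minimal sketch of the idea, assuming the problem was that iterable inputs such as lists were passed through unchanged. The name `ntuple` below is a hypothetical re-implementation for illustration, not the code from the PR.

```python
import collections.abc
from itertools import repeat

def ntuple(n):
    """Return a parser whose result is always a tuple."""
    def parse(x):
        if isinstance(x, collections.abc.Iterable):
            return tuple(x)         # lists and other iterables become tuples
        return tuple(repeat(x, n))  # scalars are repeated n times
    return parse

pair = ntuple(2)
print(pair(3), pair([1, 2]), pair((4, 5)))
```

Because every branch goes through `tuple(...)`, a downstream `isinstance(value, tuple)` assertion holds regardless of what the caller passed in.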

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54911

Reviewed By: H-Huang

Differential Revision: D27411088

Pulled By: jbschlosser

fbshipit-source-id: 7f5045c58dd4f5f3b07b4826d9b4ca85606c5bce

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53655

Currently EmbeddingBag and its variants support either int32 or int64 indices/offsets. We have use cases with a mix of int32 and int64 indices, which is not supported yet. To avoid introducing too many branches, we can simply cast the offsets type to the indices type when they are not the same.

Test Plan: unit tests

Reviewed By: qizzzh

Differential Revision: D26820202

fbshipit-source-id: 3e8f09523329ea12393ea92ee9a6315aa40a0b7f

Summary:

**BC-breaking note**: This change throws errors for cases that used to silently pass. The old behavior can be obtained by setting `error_if_nonfinite=False`
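The effect of the flag can be sketched with a toy, pure-Python gradient-norm clipper. The function `clip_grad_norm` below is a hypothetical stand-in that operates on a flat list of floats. Only the `error_if_nonfinite` parameter name is taken from this PR.

```python
import math

def clip_grad_norm(grads, max_norm, error_if_nonfinite=True):
    """Scale `grads` in place so their L2 norm is at most `max_norm`."""
    total_norm = math.sqrt(sum(g * g for g in grads))
    if error_if_nonfinite and not math.isfinite(total_norm):
        # New behaviour: fail loudly instead of silently carrying on.
        raise RuntimeError("the total norm of the gradients is non-finite")
    clip_coef = max_norm / (total_norm + 1e-6)
    if clip_coef < 1:
        for i, g in enumerate(grads):
            grads[i] = g * clip_coef
    return total_norm

grads = [3.0, 4.0]
norm = clip_grad_norm(grads, max_norm=1.0)
print(norm, grads)

# With the flag disabled, a NaN gradient passes through without an error.
silent_norm = clip_grad_norm([float("nan"), 1.0], 1.0, error_if_nonfinite=False)
print(silent_norm)
```

In this sketch a NaN norm makes the `clip_coef < 1` comparison false, so with the flag off the gradients are left untouched and the caller never learns that something went wrong.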

Fixes https://github.com/pytorch/pytorch/issues/46849

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53843

Reviewed By: malfet

Differential Revision: D27291838

Pulled By: jbschlosser

fbshipit-source-id: 216d191b26e1b5919a44a3af5cde6f35baf825c4

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54744

Fixes https://github.com/pytorch/pytorch/issues/54590

After porting the upsample operators to be structured, they now forward memory_format information to the output. This is a problem for the cuda kernels, which are not implemented to deal with the `torch.channels_last` memory format. The operators are:

* upsample_nearest2d

* upsample_bilinear2d

* upsample_nearest3d

* upsample_trilinear3d

This fix just allocates a temporary, contiguous output tensor when that happens, writes the results to the temporary and copies the results back to the output tensor.

I held off on adding tests to get the fix out quickly, but I wrote a script and ran some manual tests, that basically just asserts that the outputs are the same for cpu and cuda, for some threshold. I ran it for all 4 operators:

```
import torch

def basically_equal(t1, t2):
    # Print whether all elements agree within an absolute tolerance.
    epsilon = 1e-4
    diffs = torch.abs(t1 - t2)
    print(torch.all(diffs < epsilon))

# upsample 2d
a = torch.arange(48).reshape(2, 2, 3, 4).contiguous(memory_format=torch.channels_last).float()

out_cpu = torch.nn.functional.interpolate(a, scale_factor=2, mode='nearest')
out_cuda = torch.nn.functional.interpolate(a.to('cuda'), scale_factor=2, mode='nearest')
basically_equal(out_cpu, out_cuda.to("cpu"))

out_cpu = torch.nn.functional.interpolate(a, scale_factor=2, mode='bilinear', align_corners=True)
out_cuda = torch.nn.functional.interpolate(a.to('cuda'), scale_factor=2, mode='bilinear', align_corners=True)
basically_equal(out_cpu, out_cuda.to("cpu"))

# upsample 3d
a = torch.arange(96).reshape(2, 2, 2, 3, 4).contiguous(memory_format=torch.channels_last_3d).float()

out_cpu = torch.nn.functional.interpolate(a, scale_factor=3, mode='nearest')
out_cuda = torch.nn.functional.interpolate(a.to('cuda'), scale_factor=3, mode='nearest')
basically_equal(out_cpu, out_cuda.to("cpu"))

out_cpu = torch.nn.functional.interpolate(a, scale_factor=3, mode='trilinear', align_corners=True)
out_cuda = torch.nn.functional.interpolate(a.to('cuda'), scale_factor=3, mode='trilinear', align_corners=True)
basically_equal(out_cpu, out_cuda.to("cpu"))
```

prints

```

tensor(True)

tensor(True)

tensor(True)

tensor(True)

```

One thing that was weird- `upsample_bilinear2d` and `upsample_trilinear3d` were only accurate across cpu/cuda with an epsilon of `1e-4`. That tentatively sounds close enough to say that cuda isn't "wrong" (?), but that's not exactly "equal"... and I also ran the script before my change, and `bilinear2d` and `trilinear3d` were also the same across cpu/cuda with an epsilon of `1e-4`.

Test Plan: Imported from OSS

Reviewed By: ezyang

Differential Revision: D27351393

Pulled By: bdhirsh

fbshipit-source-id: b33f46e4855dc8b49b363770190b639beebbf5a7

Summary:

The fallback thnn 2d convolution uses `im2col` to get patches and `gemm` to implement convolution.

It has a shortcut to use `gemm` directly for kernel size 1, but this only works for stride == 1 and padding == 0.

This PR adds checks for stride == 1 and padding == 0 when determining whether `im2col` can be skipped.
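The condition can be written as a small predicate. This is a pure-Python sketch with hypothetical helper names (`can_skip_im2col`, `conv_output_size`), not the C++ code changed in the PR. The second helper uses the standard convolution output-size formula to show why stride matters: with stride 2, a 1x1 kernel no longer maps every input position to an output position.

```python
def can_skip_im2col(kernel_size, stride, padding):
    """True when a 1x1 convolution reduces to a plain matrix multiply."""
    return (
        all(k == 1 for k in kernel_size)
        and all(s == 1 for s in stride)
        and all(p == 0 for p in padding)
    )

def conv_output_size(size, kernel, stride, padding):
    """Standard output length of a convolution along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1

print(can_skip_im2col((1, 1), (1, 1), (0, 0)))  # shortcut is valid
print(can_skip_im2col((1, 1), (2, 2), (0, 0)))  # stride != 1, need im2col
print(can_skip_im2col((1, 1), (1, 1), (1, 1)))  # padding != 0, need im2col
print(conv_output_size(8, 1, 2, 0))             # 4 outputs from 8 inputs
```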

Fixes https://github.com/pytorch/pytorch/issues/54036

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54080

Reviewed By: ejguan

Differential Revision: D27170482

Pulled By: zou3519

fbshipit-source-id: 055d6502239d34945934de409d78144d8a5c56f4

Summary:

Also modify the `tf32_on_and_off` decorator to make it support function without `device` argument.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52871

Reviewed By: ngimel

Differential Revision: D27286674

Pulled By: mruberry

fbshipit-source-id: 14f6d558271bd6a1d0bc40691c170d47e81de1ff

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45667

First part of #3867 (Pooling operators still to do)

This adds a `padding='same'` mode to the interface of `conv{n}d` and `nn.Conv{n}d`. This should match the behaviour of `tensorflow`. I couldn't find it explicitly documented, but through experimentation I found `tensorflow` returns the shape `ceil(len/stride)` and always adds any extra asymmetric padding onto the right side of the input.

Since the `native_functions.yaml` schema doesn't seem to support strings or enums, I've moved the function interface into python and it now dispatches between the numerically padded `conv{n}d` and the `_conv{n}d_same` variant. Underscores because I couldn't see any way to avoid exporting a function into the `torch` namespace.

A note on asymmetric padding. The total padding required can be odd if both the kernel-length is even and the dilation is odd. mkldnn has native support for asymmetric padding, so there is no overhead there, but for other backends I resort to padding the input tensor by 1 on the right hand side to make the remaining padding symmetrical. In these cases, I use `TORCH_WARN_ONCE` to notify the user of the performance implications.
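The padding arithmetic described above can be sketched as follows, assuming stride 1 so that the total padding needed to preserve the length is `dilation * (kernel_size - 1)`. The helper name `same_padding` is hypothetical.

```python
def same_padding(kernel_size, dilation=1):
    """Split the total 'same' padding into (left, right), assuming stride 1."""
    total = dilation * (kernel_size - 1)
    left = total // 2
    right = total - left  # any odd remainder goes on the right-hand side
    return left, right

for kernel_size, dilation in [(3, 1), (4, 1), (4, 2), (2, 3)]:
    print(kernel_size, dilation, same_padding(kernel_size, dilation))
```

Only the cases with an even kernel length and an odd dilation, here `(4, 1)` and `(2, 3)`, produce an odd total and therefore unequal left and right padding.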

Test Plan: Imported from OSS

Reviewed By: ejguan

Differential Revision: D27170744

Pulled By: jbschlosser

fbshipit-source-id: b3d8a0380e0787ae781f2e5d8ee365a7bfd49f22

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53665

ngimel pointed out to me where we already test the behavior of the `Upsample` ops in `test_nn.py`. This PR deletes my bespoke tests in `test_torch.py` and updates those in `test_nn.py` to test memory format properly.

There were two reasons the original test didn't pick up on a memory format regression:

- They didn't test the memory format of the output tensor explicitly, i.e. `output.is_contiguous(memory_format=...)`

- Even with that change, the test tensors were too simple to fail the tests. From some trial and error, it looks like one of the first two dimensions in the inputs needs to be > 1 in order for the `channels_last` memory format to actually re-order the strides.

Test Plan: Imported from OSS

Reviewed By: ngimel

Differential Revision: D26929683

Pulled By: bdhirsh

fbshipit-source-id: d17bc660ff031e9b3e2c93c60a9e9308e56ea612

Summary:

Provides the implementation for feature request issue https://github.com/pytorch/pytorch/issues/28937.

Adds the `Parametrization` functionality and implements `Pruning` on top of it.

It adds the `auto` mode, on which the parametrization is just computed once per forwards pass. The previous implementation computed the pruning on every forward, which is not optimal when pruning RNNs for example.

It implements a caching mechanism for parameters. This is implemented through the mechanism proposed at the end of the discussion https://github.com/pytorch/pytorch/issues/7313. In particular, it assumes that the user will not manually change the updated parameters between the call to `backwards()` and the `optimizer.step()`. If they do so, they would need to manually call the `.invalidate()` function provided in the implementation. This could be made into a function that gets a model and invalidates all the parameters in it. It might be the case that this function has to be called in the `.cuda()` and `.to` and related functions.

As described in https://github.com/pytorch/pytorch/issues/7313, this could be used to implement the `weight_norm` and `spectral_norm` functions in a cleaner way. It also allows, as described in https://github.com/pytorch/pytorch/issues/28937, for the implementation of constrained optimization on manifolds (i.e. orthogonal constraints, positive definite matrices, invertible matrices, weights on the sphere or the hyperbolic space...)

TODO (when implementation is validated):

- More thorough test

- Documentation

Resolves https://github.com/pytorch/pytorch/issues/28937

albanD

Pull Request resolved: https://github.com/pytorch/pytorch/pull/33344

Reviewed By: zhangguanheng66

Differential Revision: D26816708

Pulled By: albanD

fbshipit-source-id: 07c8f0da661f74e919767eae31335a9c60d9e8fe

Summary:

Fixes https://github.com/pytorch/pytorch/issues/38137

As mentioned in the issue, this is a workaround for [python issue 43367](https://bugs.python.org/issue43367). There are a number of other places where `sys.modules` is modified, if something changes in python perhaps those should be reviewed as well.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53107

Reviewed By: zou3519

Differential Revision: D26753571

Pulled By: ezyang

fbshipit-source-id: 2bda03bab39ff9ca58ce4bc13befe021da91b9c4

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52671

The code is written with the assumption that new_size is an unsigned value, and when the function is called with a negative value it silently returns a nullptr rather than raising an exception.

Fix the above-mentioned logic by converting new_size to an unsigned type and letting cpu_allocator raise an exception on a negative alloc.

Unroll nested if blocks by returning early if new_size is 0.

Add TestNN.test_adaptive_pooling_size_overflow to indirectly validate the fix.

Fixes https://github.com/pytorch/pytorch/issues/50960

Test Plan: Imported from OSS

Reviewed By: walterddr

Differential Revision: D26607549

Pulled By: malfet

fbshipit-source-id: e3d4f7548b098f24fa5aba42d8f4e9288ece1e2e

Summary:

Fixes https://github.com/pytorch/pytorch/issues/52257

## Background

Reverts MHA behavior for `bias` flag to that of v1.5: flag enables or disables both in and out projection biases.

Updates type annotations for both in and out projections biases from `Tensor` to `Optional[Tensor]` for `torch.jit.script` usage.

Note: With this change, `_LinearWithBias` defined in `torch/nn/modules/linear.py` is no longer utilized. Completely removing it would require updates to quantization logic in the following files:

```

test/quantization/test_quantized_module.py

torch/nn/quantizable/modules/activation.py

torch/nn/quantized/dynamic/modules/linear.py

torch/nn/quantized/modules/linear.py

torch/quantization/quantization_mappings.py

```

This PR takes a conservative initial approach and leaves these files unchanged.

**Is it safe to fully remove `_LinearWithBias`?**

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52537

Test Plan:

```

python test/test_nn.py TestNN.test_multihead_attn_no_bias

```

## BC-Breaking Note

In v1.6, the behavior of `MultiheadAttention`'s `bias` flag was incorrectly changed to affect only the in projection layer. That is, setting `bias=False` would fail to disable the bias for the out projection layer. This regression has been fixed, and the `bias` flag now correctly applies to both the in and out projection layers.

Reviewed By: bdhirsh

Differential Revision: D26583639

Pulled By: jbschlosser

fbshipit-source-id: b805f3a052628efb28b89377a41e06f71747ac5b

Summary:

Some minor improvement for lazy modules introduced in https://github.com/pytorch/pytorch/issues/44538, https://github.com/pytorch/pytorch/issues/47350 and https://github.com/pytorch/pytorch/issues/51548.

This PR mainly turns the bias into an `UninitializedParameter`. Instead of creating empty tensors like

```python

self.bias = Parameter(torch.Tensor(0))

self.bias = UninitializedParameter()

```

I think it would be better to

```python

self.register_parameter('bias', None)

self.bias = UninitializedParameter()

```

In addition, I change the constructor of the `LazyBatchNorm` from

```python

self.running_mean = UninitializedBuffer()

```

to

```python

self.register_buffer('running_mean', UninitializedBuffer())

```

as the original one would not change the underlying `self._buffers`.

Thank you for your time on reviewing this PR :).

Gently ping albanD, mruberry

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52212

Reviewed By: jbschlosser

Differential Revision: D26504508

Pulled By: albanD

fbshipit-source-id: 7094d0bb4fa9e2a40a07b79d350ea12a6ebfd080

Summary:

Temporarily disable OneDNN conv for group size = 24, as the OneDNN update came too late to be fully tested: https://github.com/pytorch/pytorch/issues/50042

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52327

Reviewed By: agolynski

Differential Revision: D26474186

Pulled By: VitalyFedyunin

fbshipit-source-id: 8d6964d33c8dcab70e207088c3940810eabbd068

Summary:

The pull request https://github.com/pytorch/pytorch/issues/40801 has become an important part of recent 3D models, brings a significant improvement in speed, and has been open for a while. I therefore resolved the previous review comments and modified it slightly so that it can be merged into the latest version of PyTorch.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51027

Reviewed By: albanD

Differential Revision: D26414116

Pulled By: ngimel

fbshipit-source-id: 562c099f4d7f6d603a9c2f2e2a518bc577b0d8ee

Summary:

Adding CUDA 11.2 to Windows CI.

Disabled tests:

The following ran into `CUDA error: misaligned address` for CUDA 11.2: (issue linked below)

`test_where_scalar_valid_combination_cuda_complex128` in test_torch.py

`test_sgn_complex_cuda` in test_autograd.py

The following ran into `CUDA error: too many resources requested for launch` for CUDA 11.2: (https://github.com/pytorch/pytorch/issues/52002)

test_EmbeddingBag_per_sample_weights_and_new_offsets_cuda_int64_float64

test_EmbeddingBag_per_sample_weights_and_offsets_cuda_int64_float64

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51598

Reviewed By: mrshenli

Differential Revision: D26344965

Pulled By: janeyx99

fbshipit-source-id: 3c9a4ed16d748969e96593220ec0a9f33e1ffcef

Summary:

For unsupported inputs, the check should not be done inside a parallel region. This PR does the dtype check first and then runs the parallel for.

Fixes https://github.com/pytorch/pytorch/issues/51352.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51443

Reviewed By: izdeby

Differential Revision: D26305584

Pulled By: ngimel

fbshipit-source-id: 6faa3148af5bdcd7246771c0ecb4db2b31ac82c6

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50794

Original commit changeset: b4a7948088c0

There are some subtle extra tweaks on top of the original. I can unbundle them, but I've opted to keep it with the port because it's the easiest way to make sure the changes are exercised.

* There's a bugfix in the codegen to test if a dispatch key is structured *before* short circuiting because the dispatch key was missing in the table. This accounts for mixed structured-nonstructured situations where the dispatch table is present, but the relevant structured key isn't (because the dispatch table only exists to register, e.g., QuantizedCPU)

* Dispatch tables for functions which delegate to structured kernels don't have Math entries generated for them.

* It's now illegal to specify a structured dispatch key in a delegated structured kernel (it will be ignored!). `add` is now fixed to follow this.

* There are some extra sanity checks for NativeFunctions validation

* Finally, unlike the original PR, I switched the .vec variant of upsample_nearest2d to also be DefaultBackend, bringing it in line with upsample_nearest1d.

ghstack-source-id: 120038038

Test Plan:

```

buck test mode/dev //coreai/tiefenrausch:python_tests -- --exact 'coreai/tiefenrausch:python_tests - test_can_run_local_async_inference_cpu (coreai.tiefenrausch.tests.python_test.TiefenrauschPY)' --run-disabled

```

Reviewed By: ngimel

Differential Revision: D25962873

fbshipit-source-id: d29a9c97f15151db3066ae5efe7a0701e6dc05a3

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50739

This does not turn on batched grad testing for autogenerated NewModuleTest tests and CriterionTest tests. Those are coming later.

Test Plan: - run tests

Reviewed By: ejguan

Differential Revision: D25997677

Pulled By: zou3519

fbshipit-source-id: b4b2d68e0f99c3d573faf237e1e531d0b3fced40

Summary:

Fixes [#24991](https://github.com/pytorch/pytorch/issues/24991)

I used a value of 0.75 as suggested in the forums by Thomas: https://discuss.pytorch.org/t/calculate-gain-tanh/20854/6

I verified that the value keeps the gradient stable for a 100-layer network.

Code to reproduce (from [jpeg729](https://discuss.pytorch.org/t/calculate-gain-tanh/20854/4)):

```python
import torch
import torch.nn.functional as F
import sys

a = torch.randn(1000, 1000, requires_grad=True)
b = a
print(f"in: {a.std().item():.4f}")

for i in range(100):
    l = torch.nn.Linear(1000, 1000, bias=False)
    torch.nn.init.xavier_normal_(l.weight, torch.nn.init.calculate_gain("selu"))
    b = getattr(F, 'selu')(l(b))
    if i % 10 == 0:
        # Report activation and gradient statistics every 10 layers.
        print(f"out: {b.std().item():.4f}", end=" ")
        a.grad = None
        b.sum().backward(retain_graph=True)
        print(f"grad: {a.grad.abs().mean().item():.4f}")
```

Output:

```

in: 1.0008

out: 0.7968 grad: 0.6509

out: 0.3127 grad: 0.2760

out: 0.2404 grad: 0.2337

out: 0.2062 grad: 0.2039

out: 0.2056 grad: 0.1795

out: 0.2044 grad: 0.1977

out: 0.2005 grad: 0.2045

out: 0.2042 grad: 0.2273

out: 0.1944 grad: 0.2034

out: 0.2085 grad: 0.2464

```

I included the necessary documentation change, and it passes the _test_calculate_gain_nonlinear_ unittest.
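For reference, the gain enters the reproduction script only through the standard deviation used by Xavier (Glorot) normal initialisation, `gain * sqrt(2 / (fan_in + fan_out))`. A short pure-Python sketch of that arithmetic, with the hypothetical helper name `xavier_normal_std`:

```python
import math

def xavier_normal_std(fan_in, fan_out, gain=1.0):
    """Standard deviation used by Xavier (Glorot) normal initialisation."""
    return gain * math.sqrt(2.0 / (fan_in + fan_out))

selu_gain = 0.75  # value adopted in this PR
std_selu = xavier_normal_std(1000, 1000, selu_gain)
std_unit = xavier_normal_std(1000, 1000)
print(f"{std_selu:.5f} vs {std_unit:.5f} with gain 1")
```

For the 1000x1000 layers in the script, a gain of 0.75 shrinks the initial weight spread to three quarters of the unit-gain value.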

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50664

Reviewed By: mruberry

Differential Revision: D25942217

Pulled By: ngimel

fbshipit-source-id: 29ff1be25713484fa7c516df71b12fdaecfb9af8

Summary:

Fixes https://github.com/pytorch/pytorch/issues/42588

The contiguity check used to be for memory format suggested by `grad_output->suggest_memory_format()`, but an invariant guaranteed by derivatives.yaml is `input->suggest_memory_format()`

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50659

Reviewed By: mruberry

Differential Revision: D25938921

Pulled By: ngimel

fbshipit-source-id: a945bfef6ce3d91b17e7ff96babe89ffd508939a

Summary:

Building on top of the work of anjali411 (https://github.com/pytorch/pytorch/issues/46640)

Things added in this PR:

1. Modify backward and double-backward formulas

2. Add complex support for `new module tests` and criterion tests (and add complex tests for L1)

3. Modify some existing tests to support complex

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49912

Reviewed By: zhangguanheng66

Differential Revision: D25853036

Pulled By: soulitzer

fbshipit-source-id: df619f1b71c450ab2818eb17804e0c55990aa8ad

Summary:

Fixes https://github.com/pytorch/pytorch/issues/49726

Just cleaned up the unnecessary `ModuleAttributeError`

BC-breaking note:

`ModuleAttributeError` was added in the previous unsuccessful [PR](https://github.com/pytorch/pytorch/pull/49879) and removed here. If a user catches `ModuleAttributeError` specifically, this will no longer work. They should catch `AttributeError` instead.
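A minimal sketch of the recommended pattern, using a hypothetical `TinyModule` class in place of a real `nn.Module` so that the example has no dependencies:

```python
class TinyModule:
    """Hypothetical stand-in for a module with dynamic attribute lookup."""

    def __init__(self):
        self._parameters = {"weight": 1.0}

    def __getattr__(self, name):
        # Called only when normal attribute lookup fails.
        params = self.__dict__.get("_parameters", {})
        if name in params:
            return params[name]
        raise AttributeError(
            f"'{type(self).__name__}' object has no attribute '{name}'"
        )

def get_or_default(module, name, default=None):
    try:
        return getattr(module, name)
    except AttributeError:  # catch the builtin, not ModuleAttributeError
        return default

m = TinyModule()
print(get_or_default(m, "weight"), get_or_default(m, "bias", "missing"))
```

Catching the builtin `AttributeError` works whether or not a more specific subclass exists, so code written this way is unaffected by the removal.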

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50298

Reviewed By: mrshenli

Differential Revision: D25907620

Pulled By: jbschlosser

fbshipit-source-id: cdfa6b1ea76ff080cd243287c10a9d749a3f3d0a

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48378

This commit adds support for accepting custom importance scores to use for pruning mask computation, rather than only using the parameter.

This is useful if one wants to prune based on scores from a different technique, such as activations, gradients, or weighted scoring of parameters.

An alternative to the above approach would be to pass a custom mask to the already available interface. However, accepting importance scores is easier because it can leverage the mask computation logic that has already been baked in.
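The difference between pruning by parameter magnitude and pruning by custom scores can be sketched in pure Python. The helper `mask_from_scores` and the example scores are hypothetical. It mimics a magnitude-based unstructured criterion and is not the code added by this commit.

```python
def mask_from_scores(scores, amount):
    """Return a 0/1 mask that prunes the `amount` fraction with the lowest |score|."""
    n_prune = round(amount * len(scores))
    order = sorted(range(len(scores)), key=lambda i: abs(scores[i]))
    pruned = set(order[:n_prune])
    return [0 if i in pruned else 1 for i in range(len(scores))]

weights = [0.5, -2.0, 0.1, 1.5]
grad_scores = [0.9, 0.01, 0.8, 0.02]  # hypothetical importance scores

mask_default = mask_from_scores(weights, 0.5)     # scores = the parameter itself
mask_custom = mask_from_scores(grad_scores, 0.5)  # scores supplied by the user
pruned_weights = [w * m for w, m in zip(weights, mask_custom)]

print(mask_default, mask_custom, pruned_weights)
```

The same mask computation is reused in both cases. Only the tensor it ranks changes, which is the convenience the commit message argues for.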

In addition, the commit also makes some minor lint fixes.

Test Plan:

* Unit tests

* Circle CI

Differential Revision: D24997355

fbshipit-source-id: 30797897977b57d3e3bc197987da20e88febb1fa

Summary:

Fixes https://github.com/pytorch/pytorch/issues/598

This is BC-breaking as we now explicitly don't call the hook when there are no Tensors at the top level of the output.

This feature was not working anyway, as the returned grad_input/grad_output were wrong (not respecting the output structure and wrong inputs for multi-Node Module).

This is also BC-breaking as we now report the correct gradients for `nn.Module`s that contain multiple autograd `Node`s, while we used to return bad results before.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46163

Reviewed By: ailzhang, mruberry

Differential Revision: D24894180

Pulled By: albanD

fbshipit-source-id: e1b5d193d2818eb2f51e2a2722c7405c8bd13c2b

Summary:

Fixes https://github.com/pytorch/pytorch/issues/46213

I haven't updated the documentation yet; I will add those changes soon. There are a few other things that I didn't do, but I want to clarify whether I should.

1. I didn't expose projections in c++ API: torch/csrc/api/src/nn/modules/rnn.cpp. Let me know if this is desirable and I will add those changes.

2. I didn't expose projections in "lstm_cell" function and "_thnn_differentiable_lstm_cell_backward" functions from aten/src/ATen/native/RNN.cpp. As far as I understand, they are not needed for nn.LSTM CPU execution. For lstm_cell, projections don't bring any real benefit, since if cell is used separately, it can be easily added in Python. For "_thnn_differentiable_lstm_cell_backward", I'm actually not sure where exactly that function is used, so I also disabled projections there for now. Please let me know if I should change that.

3. I added check that projections are not supported for quantized LSTMs to quantized_lstm_<data/input> functions. But I didn't add any checks to LSTMCell code. It seems that since I disabled projections in "lstm_cell" function, they should also not be available for quantized models through any other API than quantized_lstm_<data/input>. Please let me know if I'm not correct and I will add checks to other places.

4. Projections are not supported for CuDNN versions < 7.1.2. Should I add the check for CuDNN version and disable projections in that case? If so, what will be the best way to do that?

5. Currently I added projection weight as the last weight, so the layout is "w_ih, w_hh, b_ih, b_hh, w_hr". This breaks the assumption that biases come after weights and thus I had to add additional if-s in various places. Alternative way would be to have "w_ih, w_hh, w_hr, b_ih, b_hh" layout, in which case the assumption will be true. But in that case I will need to split the loop in get_parameters function from aten/src/ATen/native/cudnn/RNN.cpp. And in some cases, I will still need to add an "undefined" tensor in the 3rd position, because we get all 5 weights from CuDNN most of the time. So I'm not sure which way is better. Let me know if you think I should change to the weights-then-biases layout.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/47725

Reviewed By: zou3519

Differential Revision: D25449794

Pulled By: ngimel

fbshipit-source-id: fe6ce59e481d1f5fd861a8ff7fa13d1affcedb0c

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49187

Expands the implementation of PixelShuffle to support any number of batch dimensions
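A shape-only sketch of what "any number of batch dimensions" means, assuming the usual pixel-shuffle mapping from `(*, C * r**2, H, W)` to `(*, C, H * r, W * r)`. The helper `pixel_shuffle_shape` is hypothetical and does not move any data.

```python
def pixel_shuffle_shape(shape, upscale_factor):
    """Output shape of pixel shuffle for an input of shape (*, C * r**2, H, W)."""
    *batch, channels, height, width = shape
    r = upscale_factor
    if channels % (r * r) != 0:
        raise ValueError("channel count must be divisible by upscale_factor squared")
    return (*batch, channels // (r * r), height * r, width * r)

one_batch_dim = pixel_shuffle_shape((2, 9, 4, 4), 3)
two_batch_dims = pixel_shuffle_shape((5, 2, 9, 4, 4), 3)
print(one_batch_dim, two_batch_dims)
```

Leading dimensions are carried through untouched. Only the last three dimensions take part in the rearrangement.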

Test Plan: `buck test caffe2/test:nn -- test_pixel_shuffle`

Reviewed By: mruberry

Differential Revision: D25399058

fbshipit-source-id: ab0a7f593b276cafc9ebb46a177e2c1dce56d0de

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48916

optimize adaptive average pool2d forward path

optimize adaptive average pool2d backward path

remove unused headers

minor change

minor change

rename the header; add adaptive max pooling in future.

minor change

loosen adaptive_pool2d test on nhwc to both device cuda and cpu

minor change

Test Plan: Imported from OSS

Reviewed By: ngimel

Differential Revision: D25399469

Pulled By: VitalyFedyunin

fbshipit-source-id: 86f9fda35194f21144bd4667b778c861c05a5bac

Summary:

Fixes https://github.com/pytorch/pytorch/issues/46983.

The solution is based on two components:

1. The introduction of the `_initialized` attribute. This will be used during ParameterList/Dict creation methods `__init__` (introduced in https://github.com/pytorch/pytorch/issues/47772) and `__setstate__` to not trigger warnings when setting general `Module` attributes.

2. The introduction of the `not hasattr(self, key)` check to avoid triggering warnings when changing general `Module` attributes such as `.training` during the `train()` and `eval()` methods.
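The two guards can be illustrated with a toy container in plain Python. This is only a sketch under assumptions: `ToyContainer` and its warning text are hypothetical stand-ins, not the actual `ParameterList`/`ParameterDict` code.

```python
import warnings


class ToyContainer:
    """Hypothetical stand-in for a ParameterList-like container."""

    def __init__(self):
        # While _initialized is False, attribute assignments never warn.
        self._initialized = False
        self.training = True
        self._initialized = True

    def __setattr__(self, key, value):
        # Warn only after construction, and only for attributes that do
        # not exist yet; existing ones such as `training` stay silent.
        if getattr(self, "_initialized", False) and not hasattr(self, key):
            warnings.warn(f"Setting attribute {key!r} is not supported.")
        super().__setattr__(key, value)


with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    box = ToyContainer()      # no warning during __init__
    box.training = False      # existing attribute, no warning
    silent_count = len(caught)
    box.extra = 1             # new attribute, one warning
    total_count = len(caught)
```

Here `getattr(self, "_initialized", False)` also covers the very first assignment in `__init__`, when the attribute does not exist yet.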

Tests related to the fix are added.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48315

Reviewed By: mrshenli

Differential Revision: D25130217

Pulled By: albanD

fbshipit-source-id: 79e2abf1eab616f5de74f75f370c2fe149bed4cb

Summary:

Fixed tests:

- `test_is_nonzero`: this asserted an exact match, which is flaky when `TORCH_SHOW_CPP_STACKTRACES=1`; I changed it to a non-exact assert

- `test_pinverse` TF32

- `test_symeig` TF32

- `test_triangular_solve_batched_many_batches_cpu_float64` precision on CPU BLAS

- `test_qr` TF32; also, the tensor factory was missing a `dtype=dtype`

- `test_lu` TF32

- `ConvTranspose2d` TF32

- `Conv3d_1x1x1_no_bias` TF32

- `Transformer*` TF32

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46941

Reviewed By: heitorschueroff

Differential Revision: D24852725

Pulled By: mruberry

fbshipit-source-id: ccd4740cc643476178d81059d1c78da34e5082ed

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46758

It's in general helpful to support int32 indices and offsets, especially when such tensors are large and need to be transferred to accelerator backends. Since it may not be very useful to support the combination of int32 indices and int64 offsets, here we enforce that these two must have the same type.

Test Plan: unit tests

Reviewed By: ngimel

Differential Revision: D24470808

fbshipit-source-id: 94b8a1d0b7fc9fe3d128247aa042c04d7c227f0b

Summary:

Fix https://github.com/pytorch/pytorch/issues/44601

I added a bicubic grid sampler on both the CPU and CUDA sides, but not yet for AVX2.

There is a [colab notebook](https://colab.research.google.com/drive/1mIh6TLLj5WWM_NcmKDRvY5Gltbb781oU?usp=sharing) showing some test results. The notebook uses bilinear for the test, since I could only use the distributed version of PyTorch in it. You can download it and set `mode_torch=bicubic` to show the results.

There is some duplicated code for getting and setting values, since the helper function used in bilinear first clips coordinates that fall beyond the boundary and then gets or sets the value. In bicubic, however, more points have to be considered. I could refactor that part after making sure the overall calculation is correct.

Thanks

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44780

Reviewed By: mrshenli

Differential Revision: D24681114

Pulled By: mruberry

fbshipit-source-id: d39c8715e2093a5a5906cb0ef040d62bde578567

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46558

This PR fixes a bug with how pooling output shape was computed.

## BC Breaking Notes

Previously, a bug in the pooling code allowed a sliding window to be entirely off bounds. Now, sliding windows must start inside the input or left padding (not right padding, see https://github.com/pytorch/pytorch/issues/46929) and may only go off-bounds if ceil_mode=True.
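The rule can be sketched for a single dimension in plain Python. This is a minimal illustration assuming symmetric padding; the function name `pooling_output_size` is hypothetical and the code is not copied from the PR.

```python
def pooling_output_size(input_size, kernel_size, stride, pad=0,
                        dilation=1, ceil_mode=False):
    """Number of window positions along one dimension."""
    span = input_size + 2 * pad - dilation * (kernel_size - 1) - 1
    if ceil_mode:
        span += stride - 1  # round the division up instead of down
    out = span // stride + 1
    # The last window must start inside the input or the left padding,
    # never inside the right padding.
    if ceil_mode and (out - 1) * stride >= input_size + pad:
        out -= 1
    return out


floor_out = pooling_output_size(5, kernel_size=2, stride=2)                  # 2
ceil_out = pooling_output_size(5, kernel_size=2, stride=2, ceil_mode=True)   # 3
dropped = pooling_output_size(2, kernel_size=1, stride=2, ceil_mode=True)    # 1
```

In the last call, rounding up alone would give two windows, but the second would start at index 2, past the end of a length-2 input, so it is dropped.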

Fixes #45357

TODO

- [x] Ensure existing tests are checking for the correct output size

Test Plan: Imported from OSS

Reviewed By: albanD

Differential Revision: D24633372

Pulled By: heitorschueroff

fbshipit-source-id: 55925243a53df5d6131a1983076f11cab7516d6b

Summary:

This PR disables the test_softmax and test_softmax_results in test_nn.py that were enabled in https://github.com/pytorch/pytorch/issues/46363. The softmax tests are causing failure on gfx906 machines. Disabling those until we root cause and fix them on 906.

cc: jeffdaily ezyang

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46793

Reviewed By: izdeby

Differential Revision: D24539211

Pulled By: ezyang

fbshipit-source-id: 633cb9dc497ad6359af85b85a711c4549d772b2a

Summary:

This pull request enables the following tests on ROCm:

* TestCuda.test_tiny_half_norm_

* TestNNDeviceTypeCUDA.test_softmax_cuda_float16

* TestNNDeviceTypeCUDA.test_softmax_cuda_float32

* TestNNDeviceTypeCUDA.test_softmax_results_cuda_float16

* TestNNDeviceTypeCUDA.test_softmax_results_cuda_float32

The earlier failures that caused these tests to be skipped were due to a precision issue for FP16 compute on MI25 hardware with ROCm 3.7 and older. The fix was delivered in the compiler in ROCm 3.8.

The pull request fixes https://github.com/pytorch/pytorch/issues/37493

cc: jeffdaily ezyang malfet mruberry

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46363

Reviewed By: heitorschueroff

Differential Revision: D24325639

Pulled By: ezyang

fbshipit-source-id: a7dbb238cf38c04b6592baad40b4d71725a358c9

Summary:

Close https://github.com/pytorch/pytorch/issues/31690

I have verified the functionality of ConvTranspose2d (with this PR) on roughly 32,000 random shapes on V100 and A100, using cuDNN 8.0.4 and CUDA 11.1. The 32,000 shapes consist of 8,000 shapes for each of the 4 combinations of (fp16, fp32) x (nchw, nhwc).

The random shapes are sampled from

```jsonc
{
    "batch_size": {"low": 1, "high": 8},
    "in_channels": {"low": 16, "high": 128},
    "out_channels": {"low": 16, "high": 128},
    "height": {"low": 16, "high": 224},
    "stride": {"set": [[1, 1], [2, 2]]},
    "padding": {"set": [[0, 0]]},
    "output_padding": {"set": [[0, 0], [1, 1], [0, 1], [1, 0]]},
    "kernel_size": {"set": [[3, 3], [1, 1], [1, 3], [3, 1], [2, 2]]},
    "dilation": {"set": [[1, 1]]},
    "deterministic": {"set": [true, false]},
    "benchmark": {"set": [true, false]},
    "allow_tf32": {"set": [true, false]},
    "groups": {"set": [1, IN_CHANNELS]}
}
```

- Input `width` is the same as `height`.

- `groups` can be either 1, or the same as `in_channels` (grouped convolution). When `groups` is 1, `out_channels` is random; when `groups` is the same as `in_channels`, `out_channels` is also the same as `in_channels`
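For illustration, drawing one configuration from this space could look like the sketch below. It is not the script used for the check: `sample_case` is a hypothetical name, the `low`/`high` bounds are assumed to be inclusive, and no filtering of combinations that ConvTranspose2d would reject is attempted.

```python
import random


def sample_case(rng):
    """Draw one ConvTranspose2d configuration from the space above."""
    in_channels = rng.randint(16, 128)
    grouped = rng.choice([False, True])
    height = rng.randint(16, 224)
    return {
        "batch_size": rng.randint(1, 8),
        "in_channels": in_channels,
        # Grouped case: out_channels is tied to in_channels.
        "out_channels": in_channels if grouped else rng.randint(16, 128),
        "height": height,
        "width": height,  # input width is the same as height
        "stride": rng.choice([(1, 1), (2, 2)]),
        "padding": (0, 0),
        "output_padding": rng.choice([(0, 0), (1, 1), (0, 1), (1, 0)]),
        "kernel_size": rng.choice([(3, 3), (1, 1), (1, 3), (3, 1), (2, 2)]),
        "dilation": (1, 1),
        "deterministic": rng.choice([True, False]),
        "benchmark": rng.choice([True, False]),
        "allow_tf32": rng.choice([True, False]),
        "groups": in_channels if grouped else 1,
    }


cases = [sample_case(random.Random(seed)) for seed in range(100)]
```

Seeding each draw separately keeps an individual case reproducible from its seed alone.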

All of the checked shapes can be found in csv files here https://github.com/xwang233/code-snippet/tree/master/convtranspose2d-dilation/functionality-check-cudnn8.0.4.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46290

Reviewed By: mruberry

Differential Revision: D24422091

Pulled By: ngimel

fbshipit-source-id: 9f0120f2995ae1575c0502f1b2742390d7937b24

Summary:

Follow-up of https://github.com/pytorch/pytorch/issues/46461 with a similar goal

Makes them more readable and possibly faster. Care has to be taken because a list comprehension (or `list(map(...))`) applies the function immediately, while `(x for x in xs)` is a generator expression that is evaluated only when it is consumed; in Python 3 a bare `map(...)` is likewise lazy. Deferred evaluation is a benefit in cases where it is not required to actually create the list of values in memory (e.g. when passing to `tuple` or `extend` or `join`).
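The difference between immediate and deferred evaluation can be seen in a small standalone sketch. The names `double` and `calls` are made up for the illustration and are not taken from the PR.

```python
calls = []


def double(x):
    calls.append(x)  # record when the function actually runs
    return 2 * x


xs = [1, 2, 3]

# A list comprehension runs double() right away and builds a list.
eager = [double(x) for x in xs]
calls_after_eager = list(calls)

# A generator expression runs nothing until something consumes it.
calls.clear()
lazy = (double(x) for x in xs)
calls_before_consume = list(calls)
total = sum(lazy)
calls_after_consume = list(calls)

# Consumers such as join() can take the generator directly, so no
# intermediate list is created.
joined = ", ".join(str(x) for x in xs)
```

Because the generator body runs late, any name it uses is looked up at consumption time, which is where behavior can change when rewriting such constructs.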

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46462

Reviewed By: zou3519

Differential Revision: D24422343

Pulled By: ezyang

fbshipit-source-id: 252e33499c92ac0b15238f2df32681dbbda2b237

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46572

When `num_samples == 0`, the grid size becomes zero. Although CUDA just silently proceeds, `cudaGetLastError()` will complain about the `Error: invalid configuration argument`. So the failure actually surfaces at some later point, which makes it really hard to debug.
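The arithmetic behind the zero-sized grid, together with one common guard, is sketched below in plain Python. The names `grid_size` and `launch_if_nonempty` and the block size of 256 are assumptions for illustration; the text above does not describe the actual kernel or the exact fix.

```python
def grid_size(num_samples, threads_per_block=256):
    """Ceiling division: blocks needed to cover num_samples threads."""
    return (num_samples + threads_per_block - 1) // threads_per_block


def launch_if_nonempty(num_samples, launch):
    """Call launch(blocks) only when there is at least one block."""
    blocks = grid_size(num_samples)
    if blocks == 0:
        # A zero-sized grid is an invalid launch configuration,
        # so skip the launch entirely.
        return False
    launch(blocks)
    return True


launched = []
skipped = launch_if_nonempty(0, launched.append)     # nothing launched
started = launch_if_nonempty(300, launched.append)   # launches 2 blocks
```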

Reviewed By: jianyuh

Differential Revision: D24409874

fbshipit-source-id: ca54de13b1ab48204bbad265e3f55b56b94a1a2f

Summary:

This PR makes it possible to cast the parameters of nn.Module to complex dtypes.

The following code works with the proposed changes.

```python
In [1]: import torch

In [2]: lin = torch.nn.Linear(5, 1).to(torch.complex64)

In [3]: lin(torch.zeros(3, 5, dtype=torch.complex64))
Out[3]:
tensor([[-0.1739+0.j],
        [-0.1739+0.j],
        [-0.1739+0.j]], grad_fn=<AddmmBackward>)
```

Fixes https://github.com/pytorch/pytorch/issues/43477.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44788

Reviewed By: zou3519

Differential Revision: D24307225

Pulled By: anjali411

fbshipit-source-id: dacc4f5c8c9a99303f74d1f5d807cd657b3b69b5

Summary:

Retake on https://github.com/pytorch/pytorch/issues/40493 after all the feedback from albanD

This PR implements the generic Lazy mechanism and a sample `LazyLinear` layer with the `UninitializedParameter`.

There are two main differences from the previous PR:

Now `torch.nn.Module` remains untouched.

We don't require an explicit initialization or a dummy forward pass before starting the training or inference of the actual module, which makes this much simpler to use from the user side.

As we discussed offline, there was the suggestion of not using a mixin, but changing the `__class__` attribute of `LazyLinear` to become `Linear` once it's completely initialized. While this can be useful, for the time being we need `LazyLinear` to be a `torch.nn.Module` subclass, since there are many checks that rely on the modules being instances of `torch.nn.Module`.

This can cause problems when we create complex modules such as

```python
import torch


class MyNetwork(torch.nn.Module):
    def __init__(self):
        super(MyNetwork, self).__init__()
        self.conv = torch.nn.Conv2d(20, 4, 2)
        # in_features is inferred on the first forward pass
        self.linear = torch.nn.LazyLinear(10)

    def forward(self, x):
        y = self.conv(x).clamp(min=0)
        return self.linear(y)
```