BackendMeta offers a binary interface for the backend to attach arbitrary data to TensorImpl. TensorImpl has exactly one "slot" for backend metadata; however, the backend is free to compose any structure that is opaque to the framework, beyond inheriting from the standard BackendMeta base.
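The single-slot design can be pictured with a toy, dependency-free Python model. This is not the C++ API: `ToyTensorImpl`, `MyBackendMeta`, and their fields are hypothetical. The framework only checks that the payload derives from the base class, and the backend is free to put any structure behind it.

```python
class BackendMeta:
    """Base type the framework knows about; subclasses are opaque to it."""


class ToyTensorImpl:
    """Hypothetical stand-in for TensorImpl with exactly one metadata slot."""

    def __init__(self):
        self._backend_meta = None  # the single backend-metadata slot

    def set_backend_meta(self, meta):
        # The framework only checks the base type, never the contents.
        if meta is not None and not isinstance(meta, BackendMeta):
            raise TypeError("backend metadata must inherit from BackendMeta")
        self._backend_meta = meta

    def get_backend_meta(self):
        return self._backend_meta


class MyBackendMeta(BackendMeta):
    """Hypothetical backend-specific payload composed behind the base class."""

    def __init__(self, layout, device_handle):
        self.layout = layout
        self.device_handle = device_handle


impl = ToyTensorImpl()
impl.set_backend_meta(MyBackendMeta(layout="tiled", device_handle=7))
```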

Change-Id: I670fcdd16dd1c2b00f7eaa1cbc5b5dfea59a6221


Pull Request resolved: https://github.com/pytorch/pytorch/pull/97429

Approved by: https://github.com/ezyang

Fixes https://github.com/pytorch/pytorch/issues/96887

We error out in BOTH cases: when the graph is created and when it is not.

This is still BC-breaking, but less severely, because we limit the error to the case where someone uses setup_context.

This makes the setup_context and non-setup_context versions diverge in behavior:

- With the non-setup_context version, saved variables are assumed to have the grad_fn of the inputs.

- But now with the setup_context version, we produce an error for this case.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/97212

Approved by: https://github.com/zou3519

Fixes #95796

### Implementation

Adds a Python implementation for `nn.ZeroPad1d` and `nn.ZeroPad3d` in `torch/nn/modules/padding.py`.

Adds a C++ implementation for `nn::ZeroPad1d` and `nn::ZeroPad3d` in the following 3 files, refactored with templates similarly to `nn::ConstantPad`'s implementation:

- `torch/csrc/api/include/torch/nn/modules/padding.h`

- `torch/csrc/api/include/torch/nn/options/padding.h`

- `torch/csrc/api/src/nn/modules/padding.cpp`

Also added relevant definitions in `torch/nn/modules/__init__.py`.
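For intuition, `ZeroPad1d` pads the last dimension with zeros on the left and right. The pure-Python sketch below shows that semantics on a nested list of shape (C, W); `zero_pad1d` is a hypothetical helper, not the module's implementation, and it assumes non-negative padding (no cropping).

```python
def zero_pad1d(x, padding):
    """Pad each row of a 2-D list (C, W) with zeros along the last dimension.

    `padding` is an int (same on both sides) or a (left, right) tuple,
    mirroring the argument style of nn.ZeroPad1d. Negative values are not
    supported in this sketch.
    """
    if isinstance(padding, int):
        left = right = padding
    else:
        left, right = padding
    if left < 0 or right < 0:
        raise ValueError("negative padding is not supported in this sketch")
    return [[0] * left + list(row) + [0] * right for row in x]


print(zero_pad1d([[1, 2, 3]], (2, 1)))  # [[0, 0, 1, 2, 3, 0]]
```

`ZeroPad3d` follows the same idea with a 6-tuple covering width, height, and depth.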

### Testing

Adds the following tests:

- cpp tests of similar length and structure as `ConstantPad` and the existing `ZeroPad2d` impl in `test/cpp/api/modules.cpp`

- cpp API parity tests in `torch/testing/_internal/common_nn.py`

- module init tests in `test/test_module_init.py`

Also added relevant definitions in `test/cpp_api_parity/parity-tracker.md`

Pull Request resolved: https://github.com/pytorch/pytorch/pull/96295

Approved by: https://github.com/soulitzer

Attempts to fix #92656

BC-breaking! This changes the default of `zero_grad` in optim and in nn to set grads to `None` instead of zero tensors. We are changing the default because there are proven perf wins and existing code has typically not regressed due to this change. (will probably have to flesh out this note more).
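The behavioral difference can be sketched with a toy, dependency-free model (`ToyParam` and `zero_grad` are hypothetical stand-ins, not `torch.optim` code). With `set_to_none=True` the gradient slot is cleared, so the next backward pass assigns a fresh gradient instead of accumulating into a zero-filled buffer. Code that reads `.grad` between `zero_grad()` and `backward()` must now handle `None`.

```python
class ToyParam:
    def __init__(self, size):
        self.size = size
        self.grad = None  # gradients start out unset

    def accumulate(self, g):
        # The first backward pass assigns; later passes add.
        if self.grad is None:
            self.grad = list(g)
        else:
            self.grad = [a + b for a, b in zip(self.grad, g)]


def zero_grad(params, set_to_none=True):
    for p in params:
        if set_to_none:
            p.grad = None                # new default: drop the buffer
        elif p.grad is not None:
            p.grad = [0.0] * p.size      # old default: zero-filled buffer
```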

Pull Request resolved: https://github.com/pytorch/pytorch/pull/92731

Approved by: https://github.com/ngimel

Fixes #81690

TODO:

* [x] C++ Unpickler Fix (locally tested pickled in Python and unpickled in C++)

* [x] C++ Pickler Fix (locally tested pickled in C++ and unpickled in Python)

* [x] Do quant_tensor, sparse_tensor, etc require similar changes? (Sparse and Quant don't need this)

* [x] Add Comments

* [x] How to make sure C++ and Python are in sync? (Functions in `pickler.h` help in getting and setting Tensor Metadata (math-bits for now) on a tensor. They are the only place which should handle this.)
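The core problem, that a lazily applied math bit such as conjugation must travel with the serialized data or the loaded tensor silently has the wrong values, can be modeled with plain `pickle`. This is a toy sketch: `ConjToyTensor`, `dumps`, `loads`, and the metadata dict are hypothetical and do not reflect the actual format handled by `pickler.h`.

```python
import pickle


class ConjToyTensor:
    """Raw values plus a lazily applied conjugation bit (like a conj view)."""

    def __init__(self, values, conj=False):
        self.values = list(values)
        self.conj = conj

    def materialize(self):
        # The bit is applied only when the values are read.
        if self.conj:
            return [v.conjugate() for v in self.values]
        return list(self.values)


def dumps(t):
    # Serialize the math bit next to the raw data. Without it, a loader
    # would rebuild the un-conjugated values and silently get the wrong tensor.
    return pickle.dumps((t.values, {"conj": t.conj}))


def loads(data):
    values, metadata = pickle.loads(data)
    return ConjToyTensor(values, conj=metadata.get("conj", False))
```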

Notes:

Quantized tensors don't support complex dtypes, and for float dtypes they segfault with `_neg_view`: https://github.com/pytorch/pytorch/issues/88484

Sparse Tensor:

```python
>>> a = torch.tensor([[0, 2.], [3j, 0]]).to_sparse()
>>> a.conj().is_conj()
False
>>> a._neg_view()
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
NotImplementedError: Cannot access storage of SparseTensorImpl
```

Pull Request resolved: https://github.com/pytorch/pytorch/pull/88182

Approved by: https://github.com/ezyang, https://github.com/anjali411

The symbol seems to conflict under some compiler versions, giving an error like "relocation refers to global symbol which is defined in a discarded section". Simple enough to put it in an anonymous namespace, so why not.

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Pull Request resolved: https://github.com/pytorch/pytorch/pull/86092

Approved by: https://github.com/Chillee

### Introduction

<!-- What did you change and why was it needed? -->

Removing unnecessary weight gradient calculation is very important for applications that need high-order derivatives during training. However, this is not supported by the current Autograd engine.

For more detail: the backward function of a `matmul` operator (e.g., `linear`, `addmm`, `mm`) has two matmuls, one for the `input gradient` and another for the `weight gradient`. For a typical neural network (nn) with a few linear layers and activation functions, if the user calls `torch.autograd.grad()` to calculate the derivative of the nn output `y` w.r.t. the nn input `x`, only the `input gradient` of the `matmul` operator is needed, and the `weight gradient` is discarded. However, the current PyTorch autograd engine always calculates the `weight gradient` if `weight` requires gradient, which is the case when the high-order derivative is calculated during training.

The figure attached shows the autograd graph of the following code snippet:

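```py
import torch

x = torch.randn(4, 3, requires_grad=True)
weight = torch.randn(5, 3, requires_grad=True)
bias = torch.randn(5, requires_grad=True)

y = torch.nn.functional.linear(x, weight, bias)
y = y.pow(2)
# first-order derivative (grad_outputs must match y's shape)
y__x, = torch.autograd.grad(y, x, grad_outputs=torch.ones_like(y), create_graph=True)
# second-order derivative (grad_outputs must match y__x's shape)
y__x__x, = torch.autograd.grad(y__x, x, grad_outputs=torch.ones_like(y__x), create_graph=True)
```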

The path with ❌ is not needed when calculating derivatives.

<img width="50%" alt="image" src="https://user-images.githubusercontent.com/9999318/182018117-719c5a23-bcc6-4a63-8e8d-1bca3ebda2e3.png">

### Issue

<!-- Link to Issue ticket or RFP -->

Related issue: https://github.com/pytorch/pytorch/issues/56500

### Method

When calling `torch.autograd.grad`, `exec_info_` is created for each GraphTask, which allows filtering paths on the graph that are not needed. However, when the GraphTask calls into the node, the node still does not know whether the edges are needed or not. In the case of matmul, `weight.requires_grad is True` so the weight gradient is always calculated.

Following https://github.com/pytorch/pytorch/issues/56500#issuecomment-825694656, this PR passes the graph task's thread_local `exec_info_` into the node, so it can trim unnecessary edges during `torch.autograd.grad` calls.
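The effect of letting the node see which edges are needed can be sketched as a backward function that receives a per-input "needed" mask and skips the work for edges not on the path to the requested inputs. This is a pure-Python toy for `y = x @ W^T` (as in `linear`) on small nested-list matrices; `linear_backward` and `needs_input_grad` are illustrative names, not the engine's C++ code.

```python
def matmul(a, b):
    """Naive matrix product of nested lists: (n x k) @ (k x m)."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(m):
    return [list(col) for col in zip(*m)]


def linear_backward(grad_y, x, w, needs_input_grad):
    """Backward of y = x @ w^T.

    needs_input_grad = (need_grad_x, need_grad_w); an entry is False when
    that edge is not on the path to the inputs passed to autograd.grad.
    """
    need_x, need_w = needs_input_grad
    grad_x = matmul(grad_y, w) if need_x else None             # dL/dx = g @ W
    grad_w = matmul(transpose(grad_y), x) if need_w else None  # dL/dW = g^T @ x
    return grad_x, grad_w
```

When only `x` is requested, the second matmul is skipped entirely, which is where the speedup in the benchmark comes from.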

### Benchmark

Benchmark script: https://gist.github.com/yueyericardo/24158433a2021c51eeef9c3e2722df99

Benchmark result:

6 hidden layers, batch size 10000, on A100

FP32 result

| hessian benchmark | FP32 (before) | FP32 (after) | FP32 (Functorch v0.1.1) |
| ----------------------------- | ------------- | ----------------- | ----------------------- |
| Linear + ReLU (no backward) | 55.658 ms | 29.392 ms (1.90X) | 29.547 ms (1.90X) |
| Linear + ReLU (with backward) | 81.173 ms | 54.917 ms (1.47X) | 68.988 ms (1.18X) |

TF32 result

| hessian benchmark | TF32 (before) | TF32 (after) | TF32 (Functorch v0.1.1) |
| ----------------------------- | ------------- | ----------------- | ----------------------- |
| Linear + ReLU (no backward) | 19.801 ms | 11.259 ms (1.76X) | 10.754 ms (1.84X) |
| Linear + ReLU (with backward) | 29.167 ms | 20.466 ms (1.42X) | 22.784 ms (1.28X) |

For the FP32 result, we get a 1.9X speedup for the Hessian calculation and a 1.47X speedup during training, which is on par with or faster than the functorch `vmap(jacfwd(jacrev))` implementation. (functorch has a performance regression in v0.2.0, https://github.com/pytorch/functorch/issues/989, so we are using v0.1.1 for the benchmark.)

@zou3519 does functorch also include similar optimizations during Hessian calculation? If not, what do we need to do so that functorch could also benefit from this PR?

### Testing

<!-- How did you test your change? -->

- [x] we need to figure out a way to unit test this

### Thanks

Thanks for the great blog: [How Computational Graphs are Executed in PyTorch | PyTorch](https://pytorch.org/blog/how-computational-graphs-are-executed-in-pytorch/)

cc @zasdfgbnm @albanD

Pull Request resolved: https://github.com/pytorch/pytorch/pull/82544

Approved by: https://github.com/soulitzer

And use it throughout the CMakeLists and rectify `IF(APPLE)`/`IF(GNU_CXX_VERSION VERSION_GREATER A.B)` and so on

Also, add `target_compile_options_if_supported` and use it in `Dependencies.cmake` as well as in the test's `CMakeLists.txt`

Delete `-Wno-unknown-warning-option` to test that the conditions indeed work as expected

Pull Request resolved: https://github.com/pytorch/pytorch/pull/82883

Approved by: https://github.com/seemethere

See this doc: https://docs.google.com/document/d/1KiRdnoj6B4cI3yl017hTbCqcOGO1gWIpUf20sldipHM/edit#

Two issues are fixed: (1) one regarding hooks in general and (2) one regarding retains_grad hooks. Python hooks, which rely on a different mechanism, are not discussed here:

- Hooks in cpp in general
  - (fixed) New hooks registered to a newer version of the tensor no longer get applied to the grad_fn associated with the older version of the tensor (the version current when the first hook was ever registered).
  - (unchanged) Hooks registered to the older version of the tensor remain active on that version's grad_fn.
- Retains grad hooks
  - (fixed) Now get moved to the latest grad_fn. NB: to the user, retains_grad is not considered a hook or expected to behave like one: hooks are properties of the grad_fn, whereas retains_grad-ness is a property of the tensor.
- (not in this PR) Python hooks
  - (will fix) Same issue as hooks in cpp, where new hooks are applied to the grad_fn associated with the older version of the tensor.
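The fixed behavior can be summarized with a toy model in which each in-place op gives the tensor a new grad_fn. This is a hypothetical, dependency-free Python sketch (`VersionedTensor` and `ToyGradFn` are made-up names), not the autograd engine's C++ data structures.

```python
class ToyGradFn:
    def __init__(self, version):
        self.version = version
        self.hooks = []             # hooks are a property of the grad_fn
        self.retains_grad = False


class VersionedTensor:
    """Hypothetical tensor whose in-place ops create a new grad_fn."""

    def __init__(self):
        self.version = 0
        self.grad_fn = ToyGradFn(self.version)

    def register_hook(self, hook):
        # Attach to the current grad_fn only, so hooks registered after an
        # in-place op never leak onto the grad_fn of an older version.
        self.grad_fn.hooks.append(hook)

    def retain_grad(self):
        self.grad_fn.retains_grad = True

    def inplace_op(self):
        old = self.grad_fn
        self.version += 1
        self.grad_fn = ToyGradFn(self.version)
        # retains_grad-ness belongs to the tensor, so it follows the tensor
        # to its latest grad_fn; ordinary hooks stay where they were.
        if old.retains_grad:
            old.retains_grad = False
            self.grad_fn.retains_grad = True
```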

Pull Request resolved: https://github.com/pytorch/pytorch/pull/79996

Approved by: https://github.com/albanD

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/75244

Original commit changeset: d653a5af662a

Original Phabricator Diff: D35060736 (d9d34922a0)

Test Plan: Model loading test, verified that D35060736 (d9d34922a0) causes the torch::save => torch::load failure.

Reviewed By: yinghai, jianyuh

Differential Revision: D35387009

fbshipit-source-id: 9d176992d402d57779e2af3d905b3c1538335298

(cherry picked from commit 6c8cc0d3b8a88b15e35702d70e18bbae8aa4628a)

Summary:

There are various possible approaches, but the approach chosen minimizes disruption to source control blame.

Addresses:

```
error: Function _ZN23FunctionalTest_Pad_Test8TestBodyEv is too big to optimize [-Werror,-Wignored-optimization-argument]
```

Test Plan: buck2 build mode/opt caffe2/test/cpp/api:functional

Reviewed By: jamesr66a

Differential Revision: D34027291

fbshipit-source-id: 9dfd771ad56d3d4bc0d41b38b04654c8dae7c006

(cherry picked from commit d43b5a7ed6)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/69394

Modified loops in files under fbsource/fbcode/caffe2/ from the format

```
for (TYPE var = x0; var < x_max; var++)
```

to the format

```
for (const auto var : irange(x0, x_max))
```

This was achieved by running r-barnes's loop upgrader script (D28874212) with some modification to exclude all files under /torch/jit and a number of reversions or unused variable suppression warnings added by hand.

Test Plan: Sandcastle

Reviewed By: malfet

Differential Revision: D32837991

fbshipit-source-id: fc7c4f76d2f32a17a0faf329294b3fe7cb81df32

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/66743

Modified loops in files under fbsource/fbcode/caffe2/ from the format

`for (TYPE var = x0; var < x_max; var++)`

to the format

`for (const auto var : irange(x0, x_max))`

This was achieved by running r-barnes's loop upgrader script (D28874212) with some modification to exclude all files under /torch/jit and a number of reversions or unused variable suppression warnings added by hand.

Test Plan: Sandcastle

Reviewed By: malfet

Differential Revision: D31705359

fbshipit-source-id: c9ea2fbc0f9cd29e97a52dcb203addc5f2abb09b

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/67195

Now that `is_nonzero` is part of `at::native` (see https://github.com/pytorch/pytorch/pull/66663), replace `TensorCompare::is_nonzero` with `at::native::is_nonzero`.

ghstack-source-id: 141514416

Test Plan: CI

Reviewed By: larryliu0820

Differential Revision: D31704041

fbshipit-source-id: 36813e5411d0aa2eb2d0442e2a195bbed417b33d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/66744

Modified loops in files under fbsource/fbcode/caffe2/ from the format

`for (TYPE var = x0; var < x_max; var++)`

to the format

`for (const auto var : irange(x0, x_max))`

This was achieved by running r-barnes's loop upgrader script (D28874212) with some modification to exclude all files under /torch/jit and a number of reversions or unused variable suppression warnings added by hand.

Test Plan: Sandcastle

Reviewed By: ngimel

Differential Revision: D31705358

fbshipit-source-id: d6ea350cbaa8f452fc78f238160e5374be637a48

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/66234

Modified loops in files under fbsource/fbcode/caffe2/ from the format

`for (TYPE var = x0; var < x_max; var++)`

to the format

`for (const auto var : irange(x0, x_max))`

This was achieved by running r-barnes's loop upgrader script (D28874212) with some modification to exclude all files under /torch/jit and a number of reversions or unused variable suppression warnings added by hand.

bypass_size_limit

allow-large-files

Test Plan: Sandcastle

Reviewed By: ngimel

Differential Revision: D30652629

fbshipit-source-id: 0ae6c4bbbb554bad42e372792a6430e1acf15e3e

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63878

See https://github.com/pytorch/pytorch/issues/64407, https://github.com/pytorch/pytorch/issues/62032 for context:

In this PR:

- Add a boxed kernel by replicating `gen_inplace_or_view`'s logic that is ONLY for use with the Autograd not-implemented kernel
  - Unlike `gen_inplace_or_view`, we always pass a view_func to as_view in order to ensure that a "derivative is not implemented" error is raised even if an in-place update is performed on the view. Without the `view_func`, the CopySlice + AsStridedBackward nodes would replace the NotImplemented node.
  - This limitation makes this node unsuitable for general use
  - The view relationship must be between the first input (must be a tensor) and the first output (may be a tensor or a vector of tensors)
  - Non-differentiable views (_values, _indices, view.dtype) are not supported; the view relationship is always forward and backward differentiable
- Adds the macro `#define REGISTER_AUTOGRAD_NOT_IMPLEMENTED_FALLBACK(ns, op)` to be the interface for this feature:
  - Static initialization may be slowed down (not measured) if there are many registrations, because each line translates to 2 library calls, but the workaround is just to manually use the two functions `AutogradNotImplementedFallback` and `ADInplaceOrViewFallback` and call `m.impl`.
- Adds testing:
  - for views:
    - view relationship created
    - performing an in-place operation on the view raises properly
    - trying to create two view relationships is not allowed
    - a single view relationship that is not between the first input and the first output should error
    - view relationship created properly for tensor vector output
  - for in-place:
    - version count bump
    - triggers rebase_history
    - multiple mutations are okay and also update the version counter
- TODO (follow up): Update tutorials for adding third-party operators (and document the above limitations)
- TODO (follow up): Look at torch-audio/torch-vision and identify places where this can simplify existing code

EDIT: Made it more clear what is introduced in this PR and moved some more contextual stuff into the issue itself

Test Plan: Imported from OSS

Reviewed By: albanD

Differential Revision: D30901714

Pulled By: soulitzer

fbshipit-source-id: 48de14c28be023ff4bd31b7ea5e7cba88aeee04c

Summary:

Delete `-Wno-unused-variable` from top level `CMakeLists.txt`

Still suppress those warnings for tests and `torch_python`

Delete a number of unused variables from caffe2 code

Use `(void)var;` to suppress unused variable in range loops

Use `C10_UNUSED` for global constructors and use `constexpr` instead of `static` for global constants

Do not delete `caffe2::OperatorBase::Output` calls as they have side effects

Pull Request resolved: https://github.com/pytorch/pytorch/pull/66041

Reviewed By: ngimel

Differential Revision: D31360142

Pulled By: malfet

fbshipit-source-id: 6fdfb9f91efdc49ca984a2f2a17ee377d28210c8

Summary:

Delete `-Wno-unused-variable` from top level `CMakeLists.txt`

Still suppress those warnings for tests and `torch_python`

Delete a number of unused variables from caffe2 code

Use `(void)var;` to suppress unused variable in range loops

Use `C10_UNUSED` for global constructors and use `constexpr` instead of `static` for global constants

Pull Request resolved: https://github.com/pytorch/pytorch/pull/65954

Reviewed By: ngimel

Differential Revision: D31326599

Pulled By: malfet

fbshipit-source-id: 924155f1257a2ba1896c50512f615e45ca1f61f3

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/65861

First in a series. This PR changes the code in deploy.h/cpp and interpreter_impl.h/cpp to be camel case instead of snake case. Starting with this as it has the most impact on downstream users.

Test Plan: Imported from OSS

Reviewed By: shannonzhu

Differential Revision: D31291183

Pulled By: suo

fbshipit-source-id: ba6f74042947c9a08fb9cb3ad7276d8dbb5b2934

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63612

This makes Tensor inherit from a new class TensorBase, which provides a subset of Tensor that doesn't directly depend on native_functions.yaml. Code that only includes TensorBase.h will thus not need to be rebuilt every time someone changes an operator signature.

Making `Tensor` inherit from this class means that `const TensorBase&` parameters will be callable with an ordinary `Tensor`. I've also made `Tensor` constructible and assignable from `TensorBase` to minimize friction in code mixing the two types.

To help enforce that `Tensor.h` and `Functions.h` aren't accidentally included, I've added an error into `Operators.h` if `TORCH_ASSERT_NO_OPERATORS` is defined. We can either set this in the build system for certain folders, or just define it at the top of any file.

I've also included an example of manually special-casing the commonly used `contiguous` operator. The inline function's slow path defers to `TensorBase::__dispatch_contiguous`, which is defined in `Tensor.cpp`. I've made `OptionalTensorRef` constructible from `TensorBase`, so I can materialize a `Tensor` for use in dispatch without actually increasing its refcount.

Test Plan: Imported from OSS

Reviewed By: gchanan

Differential Revision: D30728580

Pulled By: ezyang

fbshipit-source-id: 2cbc8eee08043382ee6904ea8e743b1286921c03

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61801

resubmitting because the last one was unrecoverable due to making changes incorrectly in the stack

Test Plan: Imported from OSS

Reviewed By: desertfire

Differential Revision: D29812510

Pulled By: makslevental

fbshipit-source-id: ba9685dc81b6699724104d5ff3211db5852370a6

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63995

JIT methods already have name() in their interface, and Py methods have names in their implementation. I'm adding this for a particular case where someone tried to use name() on a JIT method that we're replacing with an IMethod.

Test Plan: add case to imethod API test

Reviewed By: suo

Differential Revision: D30559401

fbshipit-source-id: 76236721f5cd9a9d9d488ddba12bfdd01d679a2c

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63345

This diff did the following few things to enable the tests:

1. Exposed IMethod as TORCH_API.

2. Linked torch_deploy to test_api if USE_DEPLOY == 1.

3. Generated torch::deploy examples when building torch_deploy library.

Test Plan: ./build/bin/test_api --gtest_filter=IMethodTest.*

Reviewed By: ngimel

Differential Revision: D30346257

Pulled By: alanwaketan

fbshipit-source-id: 932ae7d45790dfb6e00c51893933a054a0fad86d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62521

This diff did the following few things to enable the tests:

1. Exposed IMethod as TORCH_API.

2. Linked torch_deploy to test_api if USE_DEPLOY == 1.

Test Plan:

./build/bin/test_api --gtest_filter=IMethodTest.*

To be noted, one needs to run `python torch/csrc/deploy/example/generate_examples.py` before the above command.

Reviewed By: ezyang

Differential Revision: D30055372

Pulled By: alanwaketan

fbshipit-source-id: 50eb3689cf84ed0f48be58cd109afcf61ecca508

Summary:

Enable Gelu bf16/fp32 in the CPU path using the Mkldnn implementation. Users don't need to call to_mkldnn() explicitly. The new Gelu fp32 implementation performs better than the original one.

Add Gelu backward for https://github.com/pytorch/pytorch/pull/53615.
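For reference, the exact (erf-based) GELU and the gradient a backward kernel has to compute fit in a few lines of scalar Python. This is a numerical reference sketch only, not the Mkldnn kernel, and it assumes the exact rather than the tanh-approximate variant.

```python
import math


def gelu(x):
    # Exact GELU: x * Phi(x), where Phi is the standard normal CDF.
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def gelu_backward(grad_out, x):
    # d/dx [x * Phi(x)] = Phi(x) + x * phi(x), with phi the standard normal PDF.
    cdf = 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)
    return grad_out * (cdf + x * pdf)
```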

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58525

Reviewed By: ejguan

Differential Revision: D29940369

Pulled By: ezyang

fbshipit-source-id: df9598262ec50e5d7f6e96490562aa1b116948bf

Summary:

Fixes https://github.com/pytorch/pytorch/issues/60747

Enhances the C++ versions of `Transformer`, `TransformerEncoderLayer`, and `TransformerDecoderLayer` to support callables as their activation functions. The old way of specifying activation function still works as well.
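The pattern of accepting either a legacy named activation or an arbitrary callable can be sketched as a small resolver. This is a hypothetical, dependency-free Python illustration (`resolve_activation` and its name table are made up); the PR itself works on the C++ options types.

```python
import math

_NAMED_ACTIVATIONS = {
    "relu": lambda x: max(0.0, x),
    "gelu": lambda x: 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0))),
}


def resolve_activation(activation):
    """Return a callable for either a known name or a user-supplied callable."""
    if callable(activation):
        return activation  # new path: use the callable as-is
    try:
        return _NAMED_ACTIVATIONS[activation]  # old path: look up by name
    except KeyError:
        raise ValueError(f"unknown activation: {activation!r}") from None
```

Checking for a callable first keeps the old named values working unchanged, which matches the note that the old way of specifying the activation still works.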

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62342

Reviewed By: malfet

Differential Revision: D30022592

Pulled By: jbschlosser

fbshipit-source-id: d3c62410b84b1bd8c5ed3a1b3a3cce55608390c4

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62442

For PythonMethodWrapper::setArgumentNames, make sure to use the correct method specified by method_name_ rather than the parent model_ obj, which itself _is_ callable, but whose callable does not have the right signature to extract.

For Python vs Script, unify the behavior to skip the 'self' parameter, so we only list the names of the unbound arguments, which is what we need in practice.
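The distinction is easy to see with Python's standard `inspect` module: the object itself is callable, but its `__call__` signature is not the signature of the named method, and looking up the bound method drops `self`. The `Model` class and `argument_names` helper below are hypothetical stand-ins that illustrate the idea, not the wrapper's actual code.

```python
import inspect


class Model:
    def __call__(self, *args, **kwargs):
        return self.forward(*args, **kwargs)

    def forward(self, x, y, scale=1.0):
        return (x + y) * scale


def argument_names(model, method_name):
    # Look up the named method; a bound method's signature excludes `self`.
    method = getattr(model, method_name)
    return list(inspect.signature(method).parameters)


model = Model()
print(argument_names(model, "forward"))           # ['x', 'y', 'scale']
print(list(inspect.signature(model).parameters))  # ['args', 'kwargs']
```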

Test Plan: update unit test and it passes

Reviewed By: alanwaketan

Differential Revision: D29965283

fbshipit-source-id: a4e6a1d0f393f2a41c3afac32285548832da3fb4

Summary:

As the GoogleTest `TEST` macro is non-compliant with it, as is `DEFINE_DISPATCH`

All changes but the ones to `.clang-tidy` are generated using following script:

```
for i in `find . -type f -iname "*.c*" -or -iname "*.h"|xargs grep cppcoreguidelines-avoid-non-const-global-variables|cut -f1 -d:|sort|uniq`; do sed -i "/\/\/ NOLINTNEXTLINE(cppcoreguidelines-avoid-non-const-global-variables)/d" $i; done
```

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62008

Reviewed By: driazati, r-barnes

Differential Revision: D29838584

Pulled By: malfet

fbshipit-source-id: 1b2f8602c945bd4ce50a9bfdd204755556e31d13

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61903

### Remaining Tasks

- [ ] Collate results of benchmarks on two Intel Xeon machines (with & without CUDA, to check if CPU throttling causes issues with GPUs) - make graphs, including Roofline model plots (Intel Advisor can make them only with Intel OpenMP, not with libgomp).

### Summary

1. This draft PR produces binaries with 3 types of ATen kernels: default, AVX2, and AVX512. Using the environment variable `ATEN_AVX512_256=TRUE` also results in 3 types of kernels, but the compiler can use 32 ymm registers for AVX2, instead of the default 16. ATen kernels for `CPU_CAPABILITY_AVX` have been removed.

2. `nansum` is not using the AVX512 kernel right now, as it has poorer accuracy for Float16 than AVX2 or DEFAULT do, whose respective accuracies aren't very good either (#59415). It was more convenient to disable AVX512 dispatch for all dtypes of `nansum` for now.

3. On Windows, ATen Quantized AVX512 kernels are not being used, as quantization tests are flaky. If `--continue-through-failure` is used, then `test_compare_model_outputs_functional_static` fails. But if this test is skipped, `test_compare_model_outputs_conv_static` fails. If both these tests are skipped, then a third one fails. These are hard to debug right now due to not having access to a Windows machine with AVX512 support, so it was more convenient to disable AVX512 dispatch of all ATen Quantized kernels on Windows for now.

4. One test is currently being skipped: [`test_lstm` in `quantization.bc`](https://github.com/pytorch/pytorch/issues/59098). It fails only on Cascade Lake machines, irrespective of the `ATEN_CPU_CAPABILITY` used, because FBGEMM uses `AVX512_VNNI` on machines that support it. `reduce_range` should be set to `False` on such machines.
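Runtime selection among per-capability kernels can be modeled as picking the best level the CPU supports, optionally overridden by the `ATEN_CPU_CAPABILITY` environment variable mentioned above. This is a toy Python model with hypothetical kernels and a caller-supplied "detected" level, not ATen's dispatch code; in particular, this sketch conservatively caps the override at the detected level, and ATen's own handling of the variable may differ.

```python
import os

# Ordered from least to most capable.
CAPABILITIES = ["default", "avx2", "avx512"]


def choose_capability(detected, env=None):
    """Pick a kernel flavor: the detected level, optionally lowered via env."""
    env = os.environ if env is None else env
    best = CAPABILITIES.index(detected)
    requested = env.get("ATEN_CPU_CAPABILITY")
    if requested in CAPABILITIES:
        # In this sketch the override can only lower the level, never enable
        # instructions the CPU lacks; unknown values are ignored.
        best = min(best, CAPABILITIES.index(requested))
    return CAPABILITIES[best]


def _add(a, b):
    return [x + y for x, y in zip(a, b)]


# Hypothetical kernel table; real kernels would be compiled per ISA.
KERNELS = {"default": _add, "avx2": _add, "avx512": _add}
```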

The list of the changes is at https://gist.github.com/imaginary-person/4b4fda660534f0493bf9573d511a878d.

Credits to ezyang for proposing `AVX512_256` - these use AVX2 intrinsics but benefit from 32 registers, instead of the 16 ymm registers that AVX2 uses.

Credits to limo1996 for the initial proposal, and for optimizing `hsub_pd` & `hadd_pd`, which didn't have direct AVX512 equivalents, and are being used in some kernels. He also refactored `vec/functional.h` to remove duplicated code.

Credits to quickwritereader for helping fix 4 failing complex multiplication & division tests.

### Testing

1. `vec_test_all_types` was modified to test basic AVX512 support, as tests already existed for AVX2. Only one test had to be modified, as it was hardcoded for AVX2.

2. `pytorch_linux_bionic_py3_8_gcc9_coverage_test1` & `pytorch_linux_bionic_py3_8_gcc9_coverage_test2` are now using `linux.2xlarge` instances, as they support AVX512. They were used for testing AVX512 kernels, as AVX512 kernels are being used by default in both of the CI checks. Windows CI checks had already been using machines with AVX512 support.

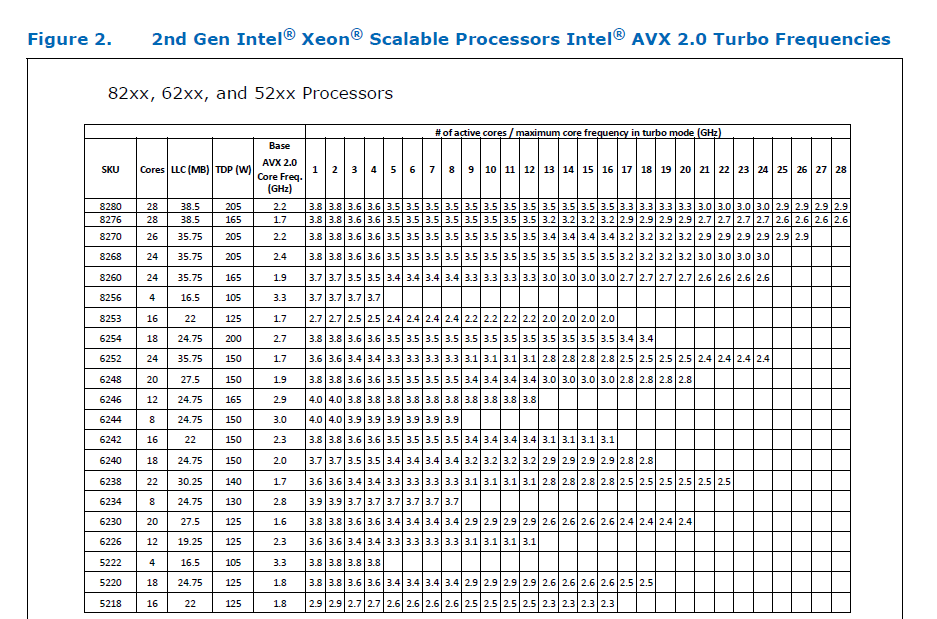

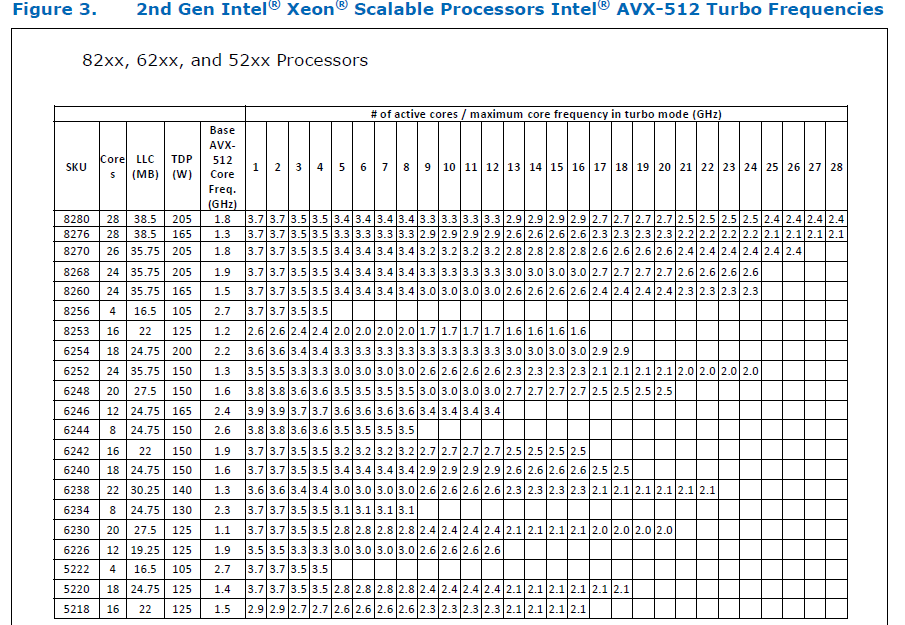

### Would the downclocking caused by AVX512 pose an issue?

I think it's important to note that AVX2 causes downclocking as well, and the additional downclocking caused by AVX512 may not hamper performance on some Skylake machines & beyond, because of the double vector-size. I think that [this post with verifiable references is a must-read](https://community.intel.com/t5/Software-Tuning-Performance/Unexpected-power-vs-cores-profile-for-MKL-kernels-on-modern-Xeon/m-p/1133869/highlight/true#M6450). Also, AVX512 would _probably not_ hurt performance on a high-end machine, [but measurements are recommended](https://lemire.me/blog/2018/09/07/avx-512-when-and-how-to-use-these-new-instructions/). In case it does, `ATEN_AVX512_256=TRUE` can be used for building PyTorch, as AVX2 can then use 32 ymm registers instead of the default 16. [FBGEMM uses `AVX512_256` only on Xeon D processors](https://github.com/pytorch/FBGEMM/pull/209), which are said to have poor AVX512 performance.

This [official data](https://www.intel.com/content/dam/www/public/us/en/documents/specification-updates/xeon-scalable-spec-update.pdf) is for the Intel Skylake family, and the first link helps understand its significance. Cascade Lake & Ice Lake SP Xeon processors are said to be even better when it comes to AVX512 performance.

Here is the corresponding data for [Cascade Lake](https://cdrdv2.intel.com/v1/dl/getContent/338848) -

The corresponding data isn't publicly available for Intel Xeon SP 3rd gen (Ice Lake SP), but [Intel mentioned that the 3rd gen has frequency improvements pertaining to AVX512](https://newsroom.intel.com/wp-content/uploads/sites/11/2021/04/3rd-Gen-Intel-Xeon-Scalable-Platform-Press-Presentation-281884.pdf). Ice Lake SP machines also have 48 KB L1D caches, so that's another reason for AVX512 performance to be better on them.

### Is PyTorch always faster with AVX512?

No, but then PyTorch is not always faster with AVX2 either. Please refer to #60202. The benefit from vectorization is apparent with small tensors that fit in caches or in kernels that are more compute-heavy. For instance, AVX512 or AVX2 would yield no benefit for adding two 64 MB tensors, but adding two 1 MB tensors would do well with AVX2, and even more so with AVX512.

It seems that memory-bound computations, such as adding two 64 MB tensors can be slow with vectorization (depending upon the number of threads used), as the effects of downclocking can then be observed.

Original pull request: https://github.com/pytorch/pytorch/pull/56992

Reviewed By: soulitzer

Differential Revision: D29266289

Pulled By: ezyang

fbshipit-source-id: 2d5e8d1c2307252f22423bbc14f136c67c3e6184

Summary:

Expose IMethod interface, which provides a unified interface to either script or python methods backed by torchscript or torchdeploy.

IMethod provides a way to depend on a torch method without depending on a particular runtime implementation such as torchscript or python/deploy.
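The shape of such an interface can be sketched with an abstract base class: callers depend only on `name()` and on calling the method, while each runtime supplies its own implementation. This is a hypothetical Python illustration (`IMethodSketch`, `ScriptLikeMethod`, `PythonLikeMethod`, and `run` are made-up names); the real `IMethod` is a C++ class whose exact members are not reproduced here.

```python
from abc import ABC, abstractmethod


class IMethodSketch(ABC):
    @abstractmethod
    def name(self):
        ...

    @abstractmethod
    def __call__(self, *args):
        ...


class ScriptLikeMethod(IMethodSketch):
    """Stand-in for a method backed by a TorchScript module."""

    def __init__(self, name, fn):
        self._name, self._fn = name, fn

    def name(self):
        return self._name

    def __call__(self, *args):
        return self._fn(*args)


class PythonLikeMethod(IMethodSketch):
    """Stand-in for a method backed by a Python interpreter (torch::deploy)."""

    def __init__(self, obj, method_name):
        self._obj, self._method_name = obj, method_name

    def name(self):
        return self._method_name

    def __call__(self, *args):
        return getattr(self._obj, self._method_name)(*args)


def run(method: IMethodSketch, *args):
    # Caller code sees only the interface, not the runtime behind it.
    return method(*args)
```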

Test Plan: add unit tests.

Reviewed By: suo

Differential Revision: D29463455

fbshipit-source-id: 903391d9af9fbdd8fcdb096c1a136ec6ac153b7c

Summary:

The grad() function needs to return the updated values, and hence needs non-empty inputs to populate.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52016

Test Plan:

Passes Python and C++ unit tests, and added new tests to catch this behavior.

Fixes https://github.com/pytorch/pytorch/issues/47061

Reviewed By: albanD

Differential Revision: D26406444

Pulled By: dagitses

fbshipit-source-id: 023aeca9a40cd765c5bad6a1a2f8767a33b75a1a

Summary:

Fixes https://github.com/pytorch/pytorch/issues/27655

This PR adds a C++ and Python version of ReflectionPad3d with structured kernels. The implementation uses lambdas extensively to better share code between the backward and forward passes.
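Reflection padding mirrors values about the edge without repeating the edge element itself. The 1-D pure-Python sketch below shows the index mapping; the 3-D case applies the same mapping independently along depth, height, and width. `reflect_index`, `reflection_pad1d`, and `reflection_pad1d_backward` are hypothetical helpers, not the structured kernel, but they show how forward and backward can share one index function.

```python
def reflect_index(i, n):
    """Map a padded coordinate i (may be < 0 or >= n) into [0, n)."""
    if i < 0:
        return -i
    if i >= n:
        return 2 * (n - 1) - i
    return i


def reflection_pad1d(row, left, right):
    n = len(row)
    if left >= n or right >= n:
        raise ValueError("padding must be smaller than the input size")
    return [row[reflect_index(i, n)] for i in range(-left, n + right)]


def reflection_pad1d_backward(grad_out, n, left):
    # Scatter-add each output gradient back through the same index mapping.
    grad_in = [0] * n
    for j, g in enumerate(grad_out):
        grad_in[reflect_index(j - left, n)] += g
    return grad_in


print(reflection_pad1d([1, 2, 3, 4], 2, 2))  # [3, 2, 1, 2, 3, 4, 3, 2]
```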

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59791

Reviewed By: gchanan

Differential Revision: D29242015

Pulled By: jbschlosser

fbshipit-source-id: 18e692d3b49b74082be09f373fc95fb7891e1b56

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58570

**What the PR does**

Generate a fast-path `at::meta::{op}` API for calling meta functions without having to go through the dispatcher. This will be important for perf for external backends that want to use meta functions for shape checking (which seems likely to be what we end up doing for LazyTensorCore).

**Details**

In order to avoid naming collisions I had to make two small changes:

- rename `MetaFunctions.h` template -> `NativeMetaFunctions.h` (this is the file that declares the impl() function for every structured operator).

- rename the meta class: `at::meta::{op}::meta()` -> `at::meta::structured_{op}::meta()`

I also deleted a few unnecessary includes, since any file that includes NativeFunctions.h will automatically include NativeMetaFunctions.h.

**Why I made the change**

This change isn't actually immediately used anywhere; I already started writing it because I thought it would be useful for structured composite ops, but that isn't actually true (see [comment](https://github.com/pytorch/pytorch/pull/58266#issuecomment-843213147)). The change feels useful and unambiguous though so I think it's safe to add. I added explicit tests for C++ meta function calls just to ensure that I wrote it correctly - which is actually how I hit the internal linkage issue in the PR below this in the stack.

Test Plan: Imported from OSS

Reviewed By: pbelevich

Differential Revision: D28711299

Pulled By: bdhirsh

fbshipit-source-id: d410d17358c2b406f0191398093f17308b3c6b9e

Summary:

Fixes https://github.com/pytorch/pytorch/issues/35379

- Adds `retains_grad` attribute backed by cpp as a native function. The python bindings for the function are skipped to be consistent with `is_leaf`.

- Tried writing it without native function, but the jit test `test_tensor_properties` seems to require that it be a native function (or alternatively maybe it could also work if we manually add a prim implementation?).

- Python API now uses `retain_grad` implementation from cpp

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59362

Reviewed By: jbschlosser

Differential Revision: D28969298

Pulled By: soulitzer

fbshipit-source-id: 335f2be50b9fb870cd35dc72f7dadd6c8666cc02

Summary:

Fixes https://github.com/pytorch/pytorch/issues/4661

- Add warnings in engine's `execute` function so it can be triggered through both cpp and python codepaths

- Adds an RAII guard version of `c10::Warning::set_warnAlways` and replaces all prior usages of the set_warnAlways with the new one
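An RAII guard sets a flag for the lifetime of a scope and restores the previous value on exit, even if an exception is thrown. In Python the same idea is a context manager. The sketch below is hypothetical (`_warn_always`, `get_warn_always`, and `warn_always_guard` are made-up names); the C++ guard in this PR wraps `c10::Warning::set_warnAlways`.

```python
from contextlib import contextmanager

_warn_always = False  # hypothetical global, analogous to c10's warn-always flag


def get_warn_always():
    return _warn_always


@contextmanager
def warn_always_guard(enabled):
    """Temporarily set the flag, restoring the previous value on exit."""
    global _warn_always
    previous = _warn_always
    _warn_always = enabled
    try:
        yield
    finally:
        _warn_always = previous  # runs on normal exit and on exceptions
```

Restoring the saved value, rather than unconditionally resetting to `False`, keeps nested guards correct.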

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59412

Reviewed By: jbschlosser

Differential Revision: D28969294

Pulled By: soulitzer

fbshipit-source-id: b03369c926a3be18ce1cf363b39edd82a14245f0

Summary:

Adds `is_inference` as a native function w/ manual cpp bindings.

Also changes instances of `is_inference_tensor` to `is_inference` to be consistent with other properties such as `is_complex`.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58729

Reviewed By: mruberry

Differential Revision: D28874507

Pulled By: soulitzer

fbshipit-source-id: 0fa6bcdc72a4ae444705e2e0f3c416c1b28dadc7