Summary:

Enable Gelu bf16/fp32 in CPU path using Mkldnn implementation. User doesn't need to_mkldnn() explicitly. New Gelu fp32 performs better than original one.

Add Gelu backward for https://github.com/pytorch/pytorch/pull/53615.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58525

Reviewed By: ejguan

Differential Revision: D29940369

Pulled By: ezyang

fbshipit-source-id: df9598262ec50e5d7f6e96490562aa1b116948bf

Summary:

Fixes https://github.com/pytorch/pytorch/issues/60747

Enhances the C++ versions of `Transformer`, `TransformerEncoderLayer`, and `TransformerDecoderLayer` to support callables as their activation functions. The old way of specifying activation function still works as well.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62342

Reviewed By: malfet

Differential Revision: D30022592

Pulled By: jbschlosser

fbshipit-source-id: d3c62410b84b1bd8c5ed3a1b3a3cce55608390c4

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62442

For PythonMethodWrapper::setArgumentNames, make sure to use the correct method

specified by method_name_ rather than using the parent model_ obj which itself

_is_ callable, but that callable is not the right signature to extract.

For Python vs Script, unify the behavior to avoid the 'self' parameter, so we only

list the argument names to the unbound arguments which is what we need in practice.

Test Plan: update unit test and it passes

Reviewed By: alanwaketan

Differential Revision: D29965283

fbshipit-source-id: a4e6a1d0f393f2a41c3afac32285548832da3fb4

Summary:

As GoogleTest `TEST` macro is non-compliant with it as well as `DEFINE_DISPATCH`

All changes but the ones to `.clang-tidy` are generated using following script:

```

for i in `find . -type f -iname "*.c*" -or -iname "*.h"|xargs grep cppcoreguidelines-avoid-non-const-global-variables|cut -f1 -d:|sort|uniq`; do sed -i "/\/\/ NOLINTNEXTLINE(cppcoreguidelines-avoid-non-const-global-variables)/d" $i; done

```

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62008

Reviewed By: driazati, r-barnes

Differential Revision: D29838584

Pulled By: malfet

fbshipit-source-id: 1b2f8602c945bd4ce50a9bfdd204755556e31d13

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61903

### Remaining Tasks

- [ ] Collate results of benchmarks on two Intel Xeon machines (with & without CUDA, to check if CPU throttling causes issues with GPUs) - make graphs, including Roofline model plots (Intel Advisor can't make them with libgomp, though, but with Intel OpenMP).

### Summary

1. This draft PR produces binaries with with 3 types of ATen kernels - default, AVX2, AVX512 . Using the environment variable `ATEN_AVX512_256=TRUE` also results in 3 types of kernels, but the compiler can use 32 ymm registers for AVX2, instead of the default 16. ATen kernels for `CPU_CAPABILITY_AVX` have been removed.

2. `nansum` is not using AVX512 kernel right now, as it has poorer accuracy for Float16, than does AVX2 or DEFAULT, whose respective accuracies aren't very good either (#59415).

It was more convenient to disable AVX512 dispatch for all dtypes of `nansum` for now.

3. On Windows , ATen Quantized AVX512 kernels are not being used, as quantization tests are flaky. If `--continue-through-failure` is used, then `test_compare_model_outputs_functional_static` fails. But if this test is skipped, `test_compare_model_outputs_conv_static` fails. If both these tests are skipped, then a third one fails. These are hard to debug right now due to not having access to a Windows machine with AVX512 support, so it was more convenient to disable AVX512 dispatch of all ATen Quantized kernels on Windows for now.

4. One test is currently being skipped -

[test_lstm` in `quantization.bc](https://github.com/pytorch/pytorch/issues/59098) - It fails only on Cascade Lake machines, irrespective of the `ATEN_CPU_CAPABILITY` used, because FBGEMM uses `AVX512_VNNI` on machines that support it. The value of `reduce_range` should be used as `False` on such machines.

The list of the changes is at https://gist.github.com/imaginary-person/4b4fda660534f0493bf9573d511a878d.

Credits to ezyang for proposing `AVX512_256` - these use AVX2 intrinsics but benefit from 32 registers, instead of the 16 ymm registers that AVX2 uses.

Credits to limo1996 for the initial proposal, and for optimizing `hsub_pd` & `hadd_pd`, which didn't have direct AVX512 equivalents, and are being used in some kernels. He also refactored `vec/functional.h` to remove duplicated code.

Credits to quickwritereader for helping fix 4 failing complex multiplication & division tests.

### Testing

1. `vec_test_all_types` was modified to test basic AVX512 support, as tests already existed for AVX2.

Only one test had to be modified, as it was hardcoded for AVX2.

2. `pytorch_linux_bionic_py3_8_gcc9_coverage_test1` & `pytorch_linux_bionic_py3_8_gcc9_coverage_test2` are now using `linux.2xlarge` instances, as they support AVX512. They were used for testing AVX512 kernels, as AVX512 kernels are being used by default in both of the CI checks. Windows CI checks had already been using machines with AVX512 support.

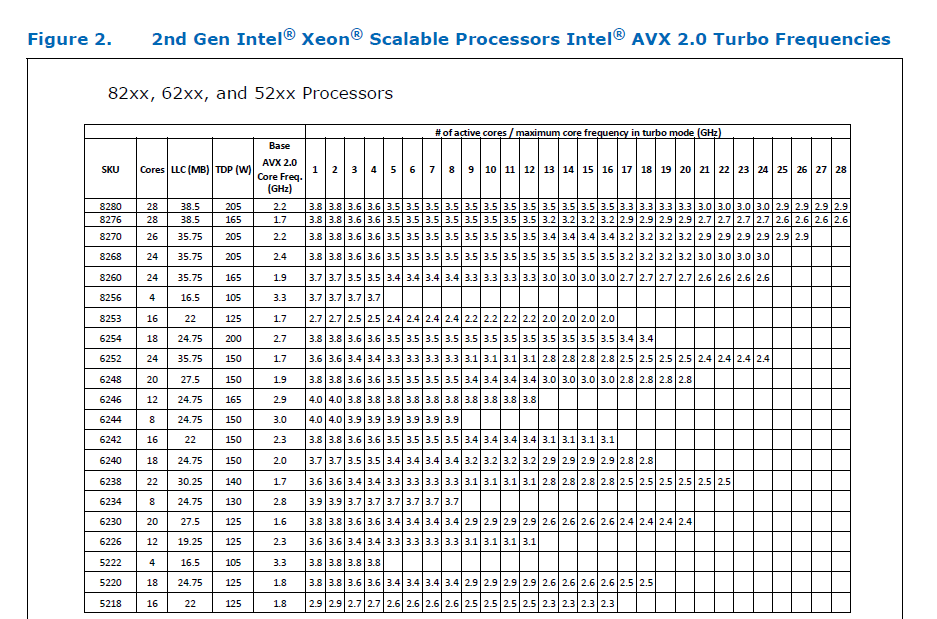

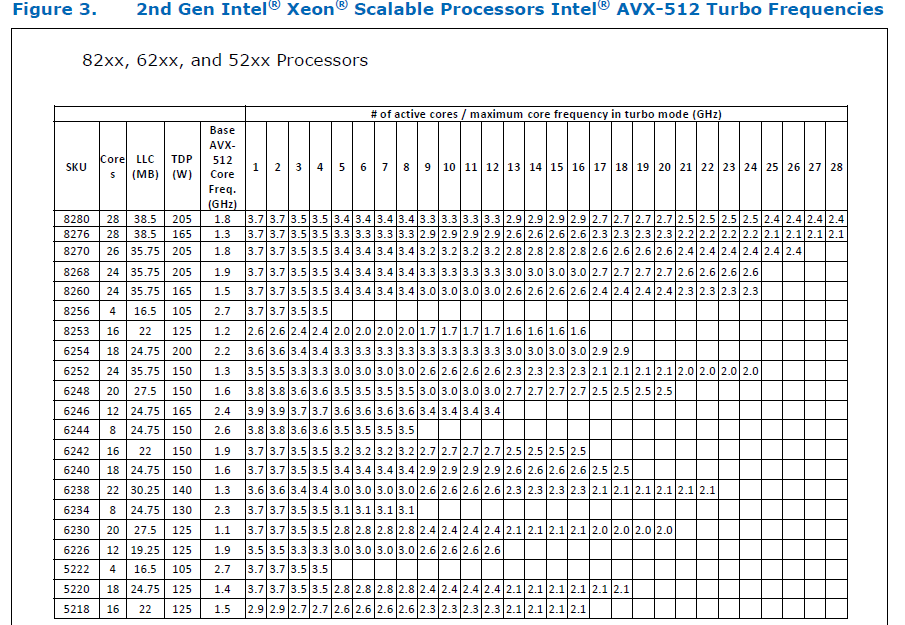

### Would the downclocking caused by AVX512 pose an issue?

I think it's important to note that AVX2 causes downclocking as well, and the additional downclocking caused by AVX512 may not hamper performance on some Skylake machines & beyond, because of the double vector-size. I think that [this post with verifiable references is a must-read](https://community.intel.com/t5/Software-Tuning-Performance/Unexpected-power-vs-cores-profile-for-MKL-kernels-on-modern-Xeon/m-p/1133869/highlight/true#M6450). Also, AVX512 would _probably not_ hurt performance on a high-end machine, [but measurements are recommended](https://lemire.me/blog/2018/09/07/avx-512-when-and-how-to-use-these-new-instructions/). In case it does, `ATEN_AVX512_256=TRUE` can be used for building PyTorch, as AVX2 can then use 32 ymm registers instead of the default 16. [FBGEMM uses `AVX512_256` only on Xeon D processors](https://github.com/pytorch/FBGEMM/pull/209), which are said to have poor AVX512 performance.

This [official data](https://www.intel.com/content/dam/www/public/us/en/documents/specification-updates/xeon-scalable-spec-update.pdf) is for the Intel Skylake family, and the first link helps understand its significance. Cascade Lake & Ice Lake SP Xeon processors are said to be even better when it comes to AVX512 performance.

Here is the corresponding data for [Cascade Lake](https://cdrdv2.intel.com/v1/dl/getContent/338848) -

The corresponding data isn't publicly available for Intel Xeon SP 3rd gen (Ice Lake SP), but [Intel mentioned that the 3rd gen has frequency improvements pertaining to AVX512](https://newsroom.intel.com/wp-content/uploads/sites/11/2021/04/3rd-Gen-Intel-Xeon-Scalable-Platform-Press-Presentation-281884.pdf). Ice Lake SP machines also have 48 KB L1D caches, so that's another reason for AVX512 performance to be better on them.

### Is PyTorch always faster with AVX512?

No, but then PyTorch is not always faster with AVX2 either. Please refer to #60202. The benefit from vectorization is apparent with with small tensors that fit in caches or in kernels that are more compute heavy. For instance, AVX512 or AVX2 would yield no benefit for adding two 64 MB tensors, but adding two 1 MB tensors would do well with AVX2, and even more so with AVX512.

It seems that memory-bound computations, such as adding two 64 MB tensors can be slow with vectorization (depending upon the number of threads used), as the effects of downclocking can then be observed.

Original pull request: https://github.com/pytorch/pytorch/pull/56992

Reviewed By: soulitzer

Differential Revision: D29266289

Pulled By: ezyang

fbshipit-source-id: 2d5e8d1c2307252f22423bbc14f136c67c3e6184

Summary:

Expose IMethod interface, which provides a unified interface to either script or python methods backed by torchscript or torchdeploy.

IMethod provides a way to depend on a torch method without depending on a particular runtime implementation such as torchscript or python/deploy.

Test Plan: add unit tests.

Reviewed By: suo

Differential Revision: D29463455

fbshipit-source-id: 903391d9af9fbdd8fcdb096c1a136ec6ac153b7c

Summary:

The grad() function needs to return the updated values, and hence

needs a non-empty inputs to populate.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52016

Test Plan:

Passes Python and C++ unit tests, and added new tests to catch this behavior.

Fixes https://github.com/pytorch/pytorch/issues/47061

Reviewed By: albanD

Differential Revision: D26406444

Pulled By: dagitses

fbshipit-source-id: 023aeca9a40cd765c5bad6a1a2f8767a33b75a1a

Summary:

Fixes https://github.com/pytorch/pytorch/issues/27655

This PR adds a C++ and Python version of ReflectionPad3d with structured kernels. The implementation uses lambdas extensively to better share code from the backward and forward pass.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59791

Reviewed By: gchanan

Differential Revision: D29242015

Pulled By: jbschlosser

fbshipit-source-id: 18e692d3b49b74082be09f373fc95fb7891e1b56

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58570

**What the PR does**

Generate a fast-path `at::meta::{op}` API for calling meta functions without having to go through the dispatcher. This will be important for perf for external backends that want to use meta functions for shape checking (which seems likely to be what we end up doing for LazyTensorCore).

**Details**

In order to avoid naming collisions I had to make two small changes:

- rename `MetaFunctions.h` template -> `NativeMetaFunctions.h` (this is the file that declares the impl() function for every structured operator).

- rename the meta class: `at::meta::{op}::meta()` -> `at::meta::structured_{op}::meta()`

I also deleted a few unnecessary includes, since any file that includes NativeFunctions.h will automatically include NativeMetaFunctions.h.

**Why I made the change**

This change isn't actually immediately used anywhere; I already started writing it because I thought it would be useful for structured composite ops, but that isn't actually true (see [comment](https://github.com/pytorch/pytorch/pull/58266#issuecomment-843213147)). The change feels useful and unambiguous though so I think it's safe to add. I added explicit tests for C++ meta function calls just to ensure that I wrote it correctly - which is actually how I hit the internal linkage issue in the PR below this in the stack.

Test Plan: Imported from OSS

Reviewed By: pbelevich

Differential Revision: D28711299

Pulled By: bdhirsh

fbshipit-source-id: d410d17358c2b406f0191398093f17308b3c6b9e

Summary:

Fixes https://github.com/pytorch/pytorch/issues/35379

- Adds `retains_grad` attribute backed by cpp as a native function. The python bindings for the function are skipped to be consistent with `is_leaf`.

- Tried writing it without native function, but the jit test `test_tensor_properties` seems to require that it be a native function (or alternatively maybe it could also work if we manually add a prim implementation?).

- Python API now uses `retain_grad` implementation from cpp

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59362

Reviewed By: jbschlosser

Differential Revision: D28969298

Pulled By: soulitzer

fbshipit-source-id: 335f2be50b9fb870cd35dc72f7dadd6c8666cc02

Summary:

Fixes https://github.com/pytorch/pytorch/issues/4661

- Add warnings in engine's `execute` function so it can be triggered through both cpp and python codepaths

- Adds an RAII guard version of `c10::Warning::set_warnAlways` and replaces all prior usages of the set_warnAlways with the new one

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59412

Reviewed By: jbschlosser

Differential Revision: D28969294

Pulled By: soulitzer

fbshipit-source-id: b03369c926a3be18ce1cf363b39edd82a14245f0

Summary:

Adds `is_inference` as a native function w/ manual cpp bindings.

Also changes instances of `is_inference_tensor` to `is_inference` to be consistent with other properties such as `is_complex`.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58729

Reviewed By: mruberry

Differential Revision: D28874507

Pulled By: soulitzer

fbshipit-source-id: 0fa6bcdc72a4ae444705e2e0f3c416c1b28dadc7

Summary:

Fixes https://github.com/pytorch/pytorch/issues/56608

- Adds binding to the `c10::InferenceMode` RAII class in `torch._C._autograd.InferenceMode` through pybind. Also binds the `torch.is_inference_mode` function.

- Adds context manager `torch.inference_mode` to manage an instance of `c10::InferenceMode` (global). Implemented in `torch.autograd.grad_mode.py` to reuse the `_DecoratorContextManager` class.

- Adds some tests based on those linked in the issue + several more for just the context manager

Issues/todos (not necessarily for this PR):

- Improve short inference mode description

- Small example

- Improved testing since there is no direct way of checking TLS/dispatch keys

-

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58045

Reviewed By: agolynski

Differential Revision: D28390595

Pulled By: soulitzer

fbshipit-source-id: ae98fa036c6a2cf7f56e0fd4c352ff804904752c

Summary:

Fixes a bug introduced by https://github.com/pytorch/pytorch/issues/57057

cc ailzhang while writing the tests, I realized that for these functions, we don't properly set the CreationMeta in no grad mode and Inference mode. Added a todo there.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57669

Reviewed By: soulitzer

Differential Revision: D28231005

Pulled By: albanD

fbshipit-source-id: 08a68d23ded87027476914bc87f3a0537f01fc33

Summary:

This is an automatic change generated by the following script:

```

#!/usr/bin/env python3

from subprocess import check_output, check_call

import os

def get_compiled_files_list():

import json

with open("build/compile_commands.json") as f:

data = json.load(f)

files = [os.path.relpath(node['file']) for node in data]

for idx, fname in enumerate(files):

if fname.startswith('build/') and fname.endswith('.DEFAULT.cpp'):

files[idx] = fname[len('build/'):-len('.DEFAULT.cpp')]

return files

def run_clang_tidy(fname):

check_call(["python3", "tools/clang_tidy.py", "-c", "build", "-x", fname,"-s"])

changes = check_output(["git", "ls-files", "-m"])

if len(changes) == 0:

return

check_call(["git", "commit","--all", "-m", f"NOLINT stubs for {fname}"])

def main():

git_files = check_output(["git", "ls-files"]).decode("ascii").split("\n")

compiled_files = get_compiled_files_list()

for idx, fname in enumerate(git_files):

if fname not in compiled_files:

continue

if fname.startswith("caffe2/contrib/aten/"):

continue

print(f"[{idx}/{len(git_files)}] Processing {fname}")

run_clang_tidy(fname)

if __name__ == "__main__":

main()

```

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56892

Reviewed By: H-Huang

Differential Revision: D27991944

Pulled By: malfet

fbshipit-source-id: 5415e1eb2c1b34319a4f03024bfaa087007d7179

Summary:

`is_variable` spits out a deprecation warning during the build (if it's

still something that needs to be tested we can ignore deprecated

warnings for the whole test instead of this change).

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56305

Pulled By: driazati

Reviewed By: ezyang

Differential Revision: D27834218

fbshipit-source-id: c7bbea7e9d8099bac232a3a732a27e4cd7c7b950

Summary:

Temporary fix to give people extra time to finish the deprecation.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56401

Reviewed By: xw285cornell, drdarshan

Differential Revision: D27862196

Pulled By: albanD

fbshipit-source-id: ed460267f314a136941ba550b904dee0321eb0c6

Summary:

This PR adds a `padding_idx` parameter to `nn.EmbeddingBag` and `nn.functional.embedding_bag`. As with `nn.Embedding`'s `padding_idx` argument, if an embedding's index is equal to `padding_idx` it is ignored, so it is not included in the reduction.

This PR does not add support for `padding_idx` for quantized or ONNX `EmbeddingBag` for opset10/11 (opset9 is supported). In these cases, an error is thrown if `padding_idx` is provided.

Fixes https://github.com/pytorch/pytorch/issues/3194

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49237

Reviewed By: walterddr, VitalyFedyunin

Differential Revision: D26948258

Pulled By: jbschlosser

fbshipit-source-id: 3ca672f7e768941f3261ab405fc7597c97ce3dfc

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54403

A few important points about InferenceMode behavior:

1. All tensors created in InferenceMode are inference tensors except for view ops.

- view ops produce output has the same is_inference_tensor property as their input.

Namely view of normal tensor inside InferenceMode produce a normal tensor, which is

exactly the same as creating a view inside NoGradMode. And view of

inference tensor outside InferenceMode produce inference tensor as output.

2. All ops are allowed inside InferenceMode, faster than normal mode.

3. Inference tensor cannot be saved for backward.

Test Plan: Imported from OSS

Reviewed By: ezyang

Differential Revision: D27316483

Pulled By: ailzhang

fbshipit-source-id: e03248a66d42e2d43cfe7ccb61e49cc4afb2923b

Summary:

Non-backwards-compatible change introduced in https://github.com/pytorch/pytorch/pull/53843 is tripping up a lot of code. Better to set it to False initially and then potentially flip to True in the later version to give people time to adapt.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55169

Reviewed By: mruberry

Differential Revision: D27511150

Pulled By: jbschlosser

fbshipit-source-id: 1ac018557c0900b31995c29f04aea060a27bc525

Summary:

*Context:* https://github.com/pytorch/pytorch/issues/53406 added a lint for trailing whitespace at the ends of lines. However, in order to pass FB-internal lints, that PR also had to normalize the trailing newlines in four of the files it touched. This PR adds an OSS lint to normalize trailing newlines.

The changes to the following files (made in 54847d0adb9be71be4979cead3d9d4c02160e4cd) are the only manually-written parts of this PR:

- `.github/workflows/lint.yml`

- `mypy-strict.ini`

- `tools/README.md`

- `tools/test/test_trailing_newlines.py`

- `tools/trailing_newlines.py`

I would have liked to make this just a shell one-liner like the other three similar lints, but nothing I could find quite fit the bill. Specifically, all the answers I tried from the following Stack Overflow questions were far too slow (at least a minute and a half to run on this entire repository):

- [How to detect file ends in newline?](https://stackoverflow.com/q/38746)

- [How do I find files that do not end with a newline/linefeed?](https://stackoverflow.com/q/4631068)

- [How to list all files in the Git index without newline at end of file](https://stackoverflow.com/q/27624800)

- [Linux - check if there is an empty line at the end of a file [duplicate]](https://stackoverflow.com/q/34943632)

- [git ensure newline at end of each file](https://stackoverflow.com/q/57770972)

To avoid giving false positives during the few days after this PR is merged, we should probably only merge it after https://github.com/pytorch/pytorch/issues/54967.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54737

Test Plan:

Running the shell script from the "Ensure correct trailing newlines" step in the `quick-checks` job of `.github/workflows/lint.yml` should print no output and exit in a fraction of a second with a status of 0. That was not the case prior to this PR, as shown by this failing GHA workflow run on an earlier draft of this PR:

- https://github.com/pytorch/pytorch/runs/2197446987?check_suite_focus=true

In contrast, this run (after correcting the trailing newlines in this PR) succeeded:

- https://github.com/pytorch/pytorch/pull/54737/checks?check_run_id=2197553241

To unit-test `tools/trailing_newlines.py` itself (this is run as part of our "Test tools" GitHub Actions workflow):

```

python tools/test/test_trailing_newlines.py

```

Reviewed By: malfet

Differential Revision: D27409736

Pulled By: samestep

fbshipit-source-id: 46f565227046b39f68349bbd5633105b2d2e9b19

Summary:

**BC-breaking note**: This change throws errors for cases that used to silently pass. The old behavior can be obtained by setting `error_if_nonfinite=False`

Fixes https://github.com/pytorch/pytorch/issues/46849

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53843

Reviewed By: malfet

Differential Revision: D27291838

Pulled By: jbschlosser

fbshipit-source-id: 216d191b26e1b5919a44a3af5cde6f35baf825c4

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45667

First part of #3867 (Pooling operators still to do)

This adds a `padding='same'` mode to the interface of `conv{n}d`and `nn.Conv{n}d`. This should match the behaviour of `tensorflow`. I couldn't find it explicitly documented but through experimentation I found `tensorflow` returns the shape `ceil(len/stride)` and always adds any extra asymmetric padding onto the right side of the input.

Since the `native_functions.yaml` schema doesn't seem to support strings or enums, I've moved the function interface into python and it now dispatches between the numerically padded `conv{n}d` and the `_conv{n}d_same` variant. Underscores because I couldn't see any way to avoid exporting a function into the `torch` namespace.

A note on asymmetric padding. The total padding required can be odd if both the kernel-length is even and the dilation is odd. mkldnn has native support for asymmetric padding, so there is no overhead there, but for other backends I resort to padding the input tensor by 1 on the right hand side to make the remaining padding symmetrical. In these cases, I use `TORCH_WARN_ONCE` to notify the user of the performance implications.

Test Plan: Imported from OSS

Reviewed By: ejguan

Differential Revision: D27170744

Pulled By: jbschlosser

fbshipit-source-id: b3d8a0380e0787ae781f2e5d8ee365a7bfd49f22

Summary:

Fixes https://github.com/pytorch/pytorch/issues/50577

Learning rate schedulers had not yet been implemented for the C++ API.

This pull request introduces the learning rate scheduler base class and the StepLR subclass. Furthermore, it modifies the existing OptimizerOptions such that the learning rate scheduler can modify the learning rate.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52268

Reviewed By: mrshenli

Differential Revision: D26818387

Pulled By: glaringlee

fbshipit-source-id: 2b28024a8ea7081947c77374d6d643fdaa7174c1

Summary:

Context: https://github.com/pytorch/pytorch/pull/53299#discussion_r587882857

These are the only hand-written parts of this diff:

- the addition to `.github/workflows/lint.yml`

- the file endings changed in these four files (to appease FB-internal land-blocking lints):

- `GLOSSARY.md`

- `aten/src/ATen/core/op_registration/README.md`

- `scripts/README.md`

- `torch/csrc/jit/codegen/fuser/README.md`

The rest was generated by running this command (on macOS):

```

git grep -I -l ' $' -- . ':(exclude)**/contrib/**' ':(exclude)third_party' | xargs gsed -i 's/ *$//'

```

I looked over the auto-generated changes and didn't see anything that looked problematic.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53406

Test Plan:

This run (after adding the lint but before removing existing trailing spaces) failed:

- https://github.com/pytorch/pytorch/runs/2043032377

This run (on the tip of this PR) succeeded:

- https://github.com/pytorch/pytorch/runs/2043296348

Reviewed By: walterddr, seemethere

Differential Revision: D26856620

Pulled By: samestep

fbshipit-source-id: 3f0de7f7c2e4b0f1c089eac9b5085a58dd7e0d97

Summary:

Fixes https://github.com/pytorch/pytorch/issues/39784

At the time the issue was filed, there was only issue (1) below.

There are actually now two issues here:

1. We always set all inputs passed in through `inputs` arg as `needed = True` in exec_info. So if we pass in an input that has a grad_fn that is not materialized, we create an entry of exec_info with nullptr as key with `needed = True`. Coincidentally, when we perform simple arithmetic operations, such as "2 * x", one of the next edges of mul is an invalid edge, meaning that its grad_fn is also nullptr. This causes the discovery algorithm to set all grad_fns that have a path to this invalid_edge as `needed = True`.

2. Before the commit that enabled the engine skipped the dummy node, we knew that root node is always needed, i.e., we hardcode `exec_info[&graph_root]=true`. The issue was that this logic wasn't updated after the code was updated to skip the graph root.

To address (1), instead of passing in an invalid edge if an input in `inputs` has no grad_fn, we create a dummy grad_fn. This is done in both python and cpp entry points. The alternative is to add logic for both backward() and grad() cases to check whether the grad_fn is nullptr and set needed=false in that case (the .grad() case would be slightly more complicated than the .backward() case here).

For (2), we perform one final iteration of the discovery algorithm so that we really know whether we need to execute the graph root.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51940

Reviewed By: VitalyFedyunin

Differential Revision: D26369529

Pulled By: soulitzer

fbshipit-source-id: 14a01ae7988a8de621b967a31564ce1d7a00084e

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50321

Quantization team reported that when there are two empty tensors are replicated among ranks, the two empty tensors start to share storage after resizing.

The root cause is unflatten_dense_tensor unflattened the empty tensor as view of flat tensor and thus share storage with other tensors.

This PR is trying to avoid unflatten the empty tensor as view of flat tensor so that empty tensor will not share storage with other tensors.

Test Plan: unit test

Reviewed By: pritamdamania87

Differential Revision: D25859503

fbshipit-source-id: 5b760b31af6ed2b66bb22954cba8d1514f389cca

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49348

This is a redux of #45666 post refactor, based off of

d534f7d4c5

Credit goes to peterbell10 for the implementation.

Fixes#43945.

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Test Plan: Imported from OSS

Reviewed By: smessmer

Differential Revision: D25594004

Pulled By: ezyang

fbshipit-source-id: c8eb876bb3348308d6dc8ba7bf091a2a3389450f

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49138

See for details: https://fb.quip.com/QRtJAin66lPN

We need to model optional types explicitly, mostly for schema inference. So we cannot pass a `Tensor?[]` as `ArrayRef<Tensor>`, instead we need to pass it as an optional type. This PR changes it to `torch::List<c10::optional<Tensor>>`. It also makes the ops c10-full that were blocked by this.

## Backwards Compatibility

- This should not break the Python API because the representation in Python is the same and python_arg_parser just transforms the python list into a `List<optional<Tensor>>` instead of into a `List<Tensor>`.

- This should not break serialized models because there's some logic that allows loading a serialized `List<Tensor>` as `List<optional<Tensor>>`, see https://github.com/pytorch/pytorch/pull/49138/files#diff-9315f5dd045f47114c677174dcaa2f982721233eee1aa19068a42ff3ef775315R57

- This will break backwards compatibility for the C++ API. There is no implicit conversion from `ArrayRef<Tensor>` (which was the old argument type) to `List<optional<Tensor>>`. One common call pattern is `tensor.index({indices_tensor})`, where indices_tensor is another `Tensor`, and that will continue working because the `{}` initializer_list constructor for `List<optional<Tensor>>` can take `Tensor` elements that are implicitly converted to `optional<Tensor>`, but another common call pattern was `tensor.index(indices_tensor)`, where previously, the `Tensor` got implicitly converted to an `ArrayRef<Tensor>`, and to implicitly convert `Tensor -> optional<Tensor> -> List<optional<Tensor>>` would be two implicit conversions. C++ doesn't allow chaining. two implicit conversions. So those call sites have to be rewritten to `tensor.index({indices_tensor})`.

ghstack-source-id: 119269131

Test Plan:

## Benchmarks (C++ instruction counts):

### Forward

#### Script

```py

from torch.utils.benchmark import Timer

counts = Timer(

stmt="""

auto t = {{op call to measure}};

""",

setup="""

using namespace torch::indexing;

auto x = torch::ones({4, 4, 4});

""",

language="cpp",

).collect_callgrind(number=1_000)

print(counts)

```

#### Results

| Op call |before |after |delta | |

|------------------------------------------------------------------------|---------|--------|-------|------|

|x[0] = 1 |11566015 |11566015|0 |0.00% |

|x.index({0}) |6807019 |6801019 |-6000 |-0.09%|

|x.index({0, 0}) |13529019 |13557019|28000 |0.21% |

|x.index({0, 0, 0}) |10677004 |10692004|15000 |0.14% |

|x.index({"..."}) |5512015 |5506015 |-6000 |-0.11%|

|x.index({Slice(None, None, None)}) |6866016 |6936016 |70000 |1.02% |

|x.index({None}) |8554015 |8548015 |-6000 |-0.07%|

|x.index({false}) |22400000 |22744000|344000 |1.54% |

|x.index({true}) |27624088 |27264393|-359695|-1.30%|

|x.index({"...", 0, true, Slice(1, None, 2), torch::tensor({1, 2})})|123472000|123463306|-8694|-0.01%|

### Autograd

#### Script

```py

from torch.utils.benchmark import Timer

counts = Timer(

stmt="""

auto t = {{op call to measure}};

""",

setup="""

using namespace torch::indexing;

auto x = torch::ones({4, 4, 4}, torch::requires_grad());

""",

language="cpp",

).collect_callgrind(number=1_000)

print(counts)

```

Note: the script measures the **forward** path of an op call with autograd enabled (i.e. calls into VariableType). It does not measure the backward path.

#### Results

| Op call |before |after |delta | |

|------------------------------------------------------------------------|---------|--------|-------|------|

|x.index({0}) |14839019|14833019|-6000| 0.00% |

|x.index({0, 0}) |28342019|28370019|28000| 0.00% |

|x.index({0, 0, 0}) |24434004|24449004|15000| 0.00% |

|x.index({"..."}) |12773015|12767015|-6000| 0.00% |

|x.index({Slice(None, None, None)}) |14837016|14907016|70000| 0.47% |

|x.index({None}) |15926015|15920015|-6000| 0.00% |

|x.index({false}) |36958000|37477000|519000| 1.40% |

|x.index({true}) |41971408|42426094|454686| 1.08% |

|x.index({"...", 0, true, Slice(1, None, 2), torch::tensor({1, 2})}) |168184392|164545682|-3638710| -2.16% |

Reviewed By: bhosmer

Differential Revision: D25454632

fbshipit-source-id: 28ab0cffbbdbdff1c40b4130ca62ee72f981b76d