Summary:

https://github.com/pytorch/pytorch/issues/66406

Implemented z arch 14/15 vector SIMD additions.

So far, all types except bfloat have a SIMD implementation.

It has 99% coverage and currently passes the local tests.

It is concise: the main SIMD implementation lives in a single header file.

It mostly uses template metaprogramming, but a few macros remain so that PyTorch itself needs little modification.

Sleef supports z15.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/66407

Reviewed By: mrshenli

Differential Revision: D33370163

Pulled By: malfet

fbshipit-source-id: 0e5a57f31b22a718cd2a9ac59753fb468cdda140

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/68247

This splits `Functions.h`, `Operators.h`, `NativeFunctions.h` and

`NativeMetaFunctions.h` into separate headers per operator base name.

With `at::sum` as an example, we can include:

```cpp

#include <ATen/ops/sum.h>         // Like Functions.h
#include <ATen/ops/sum_ops.h>     // Like Operators.h
#include <ATen/ops/sum_native.h>  // Like NativeFunctions.h
#include <ATen/ops/sum_meta.h>    // Like NativeMetaFunctions.h

```

The umbrella headers are still being generated, but all they do is

include from the `ATen/ops` folder.

Further, `TensorBody.h` now only includes the operators that have

method variants, which means files that only include `Tensor.h` don't

need to be rebuilt when you modify function-only operators. Currently

there are about 680 operators that don't have method variants, so this

is potentially a significant win for incremental builds.

Test Plan: Imported from OSS

Reviewed By: mrshenli

Differential Revision: D32596272

Pulled By: albanD

fbshipit-source-id: 447671b2b6adc1364f66ed9717c896dae25fa272

Summary:

Remove all hardcoded AMD gfx targets

PyTorch build and Magma build will use rocm_agent_enumerator as

backup if PYTORCH_ROCM_ARCH env var is not defined

PyTorch extensions will use same gfx targets as the PyTorch build,

unless PYTORCH_ROCM_ARCH env var is defined

torch.cuda.get_arch_list() now works for ROCm builds

PyTorch CI dockers will continue to be built for gfx900 and gfx906 for now.

The PYTORCH_ROCM_ARCH env var can be a space- or semicolon-separated list of gfx archs, e.g. "gfx900 gfx906" or "gfx900;gfx906".
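As an illustration of the accepted formats, here is a minimal Python sketch of splitting such a value. The helper name `parse_rocm_arch_list` is hypothetical and is not part of PyTorch; the actual parsing in the build scripts may differ.

```python
import re


def parse_rocm_arch_list(value):
    """Split a PYTORCH_ROCM_ARCH-style string on spaces and/or semicolons.

    Hypothetical helper for illustration only.
    """
    # Any run of semicolons or whitespace acts as one separator.
    parts = re.split(r"[;\s]+", value.strip())
    # Drop empty entries produced by leading/trailing separators.
    return [arch for arch in parts if arch]


print(parse_rocm_arch_list("gfx900 gfx906"))
print(parse_rocm_arch_list("gfx900;gfx906"))
```

Both calls yield the same two-element list, so the two spellings can be treated interchangeably.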

cc jeffdaily sunway513 jithunnair-amd ROCmSupport KyleCZH

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61706

Reviewed By: seemethere

Differential Revision: D32735862

Pulled By: malfet

fbshipit-source-id: 3170e445e738e3ce373203e1e4ae99c84e645d7d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/69710

Namely, no-range-loop-analysis (which detects when a loop variable cannot be a const reference).

Test Plan: Imported from OSS

Reviewed By: r-barnes

Differential Revision: D32997003

Pulled By: malfet

fbshipit-source-id: dba0e7875e5b667e2cc394c70dd75e2403265918

Summary:

This PR upgrades oneDNN to [v2.3.3](https://github.com/oneapi-src/oneDNN/releases/tag/v2.3.3) and includes [Graph API preview release](https://github.com/oneapi-src/oneDNN/releases/tag/graph-v0.2) in one package.

- oneDNN will be located at `pytorch/third_party/ideep/mkl-dnn/third_party/oneDNN`

- The version of oneDNN will be [v2.3.3](https://github.com/oneapi-src/oneDNN/releases/tag/v2.3.3)

The main changes on CPU:

- v2.3

  - Extended primitive cache to improve primitive descriptor creation performance.

  - Improved primitive cache performance in multithreaded configurations.

  - Introduced initial optimizations for bfloat16 compute functionality for future Intel Xeon Scalable processor (code name Sapphire Rapids).

  - Improved performance of binary primitive and binary post-op for cases with broadcast and mixed source and destination formats.

  - Improved performance of reduction primitive

  - Improved performance of depthwise convolution primitive with NHWC activations for training cases

- v2.3.1

  - Improved int8 GEMM performance for processors with Intel AVX2 and Intel DL Boost support

  - Fixed integer overflow for inner product implementation on CPUs

  - Fixed out of bounds access in GEMM implementation for Intel SSE 4.1

- v2.3.2

  - Fixed performance regression in fp32 inner product primitive for processors with Intel AVX512 support

- v2.3.3

  - Reverted check for memory descriptor stride validity for unit dimensions

  - Fixed memory leak in CPU GEMM implementation

More changes can be found in https://github.com/oneapi-src/oneDNN/releases.

- The Graph API provides flexible API for aggressive fusion, and the preview2 supports fusion for FP32 inference. See the [Graph API release branch](https://github.com/oneapi-src/oneDNN/tree/dev-graph-preview2) and [spec](https://spec.oneapi.io/onednn-graph/latest/introduction.html) for more details. A separate PR will be submitted to integrate the oneDNN Graph API to Torchscript graph.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63748

Reviewed By: albanD

Differential Revision: D32153889

Pulled By: malfet

fbshipit-source-id: 536071168ffe312d452f75d54f34c336ca3778c1

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/68246

Currently the codegen produces a list of output files at CMake

configuration time and the build system has no way of knowing if the

outputs change. So if that happens, you basically need to delete the

build folder and re-run from scratch.

Instead, this generates the output list every time the code generation

is run and changes the output to be a `.cmake` file that gets included

in the main cmake configuration step. That means the build system

knows to re-run cmake automatically if a new output is added. So, for

example you could change the number of shards that `Operators.cpp` is

split into and it all just works transparently to the user.
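The idea can be sketched in Python as follows. This is only an illustration under stated assumptions: the helper names `render_cmake_list` and `write_if_changed`, the variable name `GENERATED_SOURCES`, and the "rewrite only when the contents differ" policy are all hypothetical and are not taken from the actual codegen.

```python
from pathlib import Path


def render_cmake_list(variable, outputs):
    """Render a CMake `set(...)` command listing the generated files."""
    lines = [f"set({variable}"]
    # Sort so that the rendered text is stable across runs.
    lines += [f'    "${{CMAKE_BINARY_DIR}}/{name}"' for name in sorted(outputs)]
    lines.append(")")
    return "\n".join(lines) + "\n"


def write_if_changed(path, contents):
    """Write `contents` to `path` only if it differs; return True if written.

    Leaving an unchanged file untouched keeps its timestamp, so a build
    system watching the file has no reason to re-run the configure step.
    """
    path = Path(path)
    if path.exists() and path.read_text() == contents:
        return False
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(contents)
    return True
```

A `.cmake` file produced this way could then be pulled in with `include()` from the main configuration, which is what lets a change in the number of shards show up as a changed output list.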

Test Plan: Imported from OSS

Reviewed By: zou3519

Differential Revision: D32596268

Pulled By: albanD

fbshipit-source-id: 15e0896aeaead90aed64b9c8fda70cf28fef13a2

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/69251

This adds some actual documentation for deploy, which is probably useful

since we told everyone it was experimentally available so they will

probably be looking at what the heck it is.

It also wires up various components of the OSS build to actually work

when used from an external project.

Differential Revision: D32783312

Test Plan: Imported from OSS

Reviewed By: wconstab

Pulled By: suo

fbshipit-source-id: c5c0a1e3f80fa273b5a70c13ba81733cb8d2c8f8

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/67656

Currently, each cpu kernel file is copied into the build folder 3 times to give them different compilation flags. This changes it to instead generate 3 files that `#include` the original file. The biggest difference is that updating a copied file requires `cmake` to re-run, whereas include dependencies are natively handled by `ninja`.

A side benefit is that included files show up directly in the build dependency graph, whereas `cmake` file copies don't.
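A rough Python sketch of the "generate small files that `#include` the original" approach is shown below. The capability names, the wrapper file naming scheme, and the function `write_wrappers` are assumptions made for illustration; the text above only says that three variants are produced.

```python
from pathlib import Path

# Hypothetical capability names; the description only states there are three.
CAPABILITIES = ("DEFAULT", "AVX2", "AVX512")


def write_wrappers(kernel_path, out_dir, capabilities=CAPABILITIES):
    """Create one tiny wrapper per capability that includes the real kernel."""
    kernel_path = Path(kernel_path)
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    written = []
    for cap in capabilities:
        # Hypothetical naming scheme: <kernel stem>.<capability>.cpp
        wrapper = out_dir / f"{kernel_path.stem}.{cap}.cpp"
        # The wrapper's only content is an include of the original source,
        # so the compiler's dependency tracking sees the real file.
        wrapper.write_text(f'#include "{kernel_path.as_posix()}"\n')
        written.append(wrapper)
    return written
```

Each wrapper can then be compiled with its own flags, while edits to the original kernel file are picked up through ordinary include dependencies.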

Test Plan: Imported from OSS

Reviewed By: dagitses

Differential Revision: D32566108

Pulled By: malfet

fbshipit-source-id: ae75368fede37e7ca03be6ade3d4e4a63479440d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/68180

Since we've open sourced the tracing-based selective build, we can deprecate the

op-dependency-graph-based selective build and the static analyzer tool that

produces the dependency graph.

ghstack-source-id: 143108377

Test Plan: CIs

Reviewed By: seemethere

Differential Revision: D32358467

fbshipit-source-id: c61523706b85a49361416da2230ec1b035b8b99c

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/67497

This allows more of the code-generation to happen in parallel, whereas

previously all codegen was serialized.

Test Plan: Imported from OSS

Reviewed By: dagitses, mruberry

Differential Revision: D32027250

Pulled By: albanD

fbshipit-source-id: 6407c4c3e25ad15d542aa73da6ded6a309c8eb6a

Summary:

OpenBLAS recently added support for bfloat16 GEMM, so this change has PyTorch call out to OpenBLAS for that, like it does for single and double precision

Our goal is to try to enable PyTorch to make calls to "sbgemm" in OpenBLAS.

We are prepared (if it is your preference) to add fences to the code to limit this change to the Power architecture,

but our first instinct is that anyone on any architecture that enables access to sbgemm in their OpenBLAS library

should be able to use this code. (but again, we respect that as we are just starting to modify PyTorch, we respect

your guidance!)

(there is no issue number related to this)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58831

Reviewed By: albanD

Differential Revision: D29951900

Pulled By: malfet

fbshipit-source-id: 3d0a4a638ac95b2ff2e9f6d08827772e28d397c3

Summary:

This PR is to update PyTorch with the following cub changes:

- Starting cub 1.13.1, cub requires users to define `CUB_NS_QUALIFIER` if `CUB_NS_PREFIX` is also defined. Besides that, a new mechanism `CUB_WRAPPED_NAMESPACE` is added.

And I do the following change to PyTorch:

- Starting CUDA 11.5, define `CUB_WRAPPED_NAMESPACE` globally as an nvcc flag.

- Fix caffe2 failures caused by the above change.

- Add a `aten/src/ATen/cuda/cub_definitions.cuh` that defines helper macros about feature availability.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/66219

Reviewed By: bdhirsh

Differential Revision: D31626931

Pulled By: ngimel

fbshipit-source-id: 97ebf5ef671ade8bf46d0860edc317f22660f26d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/65401

Per https://github.com/pytorch/pytorch/issues/57744 statically linked CUPTI

causes exception handling to break on certain compiler configurations, likely

because CUPTI comes with incompatible libstdc++ symbols. Rather than pray that

something reasonable happens, use the safer configuration (dynamic linking) by

default and give a warning if the user inverts the setting.

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Test Plan: Imported from OSS

Reviewed By: gdankel

Differential Revision: D31082208

Pulled By: ezyang

fbshipit-source-id: 14f66af920847e158436b5801c43f3124b109b34

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62445

PyTorch currently uses the old style of compiling CUDA in CMake which is just a

bunch of scripts in `FindCUDA.cmake`. Newer versions support CUDA natively as

a language just like C++ or C.

Test Plan: Imported from OSS

Reviewed By: ejguan

Differential Revision: D31503350

fbshipit-source-id: 2ee817edc9698531ae1b87eda3ad271ee459fd55

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/65610

- Replace HIP_PLATFORM_HCC with USE_ROCM

- Don't rely on CUDA_VERSION or HIP_VERSION and use USE_ROCM and ROCM_VERSION.

- In the next PR

  - Will be removing the mapping from CUDA_VERSION to HIP_VERSION and CUDA to HIP in hipify.

  - HIP_PLATFORM_HCC is deprecated, so will add HIP_PLATFORM_AMD to support HIP host code compilation on gcc.

cc jeffdaily sunway513 jithunnair-amd ROCmSupport amathews-amd

Reviewed By: jbschlosser

Differential Revision: D30909053

Pulled By: ezyang

fbshipit-source-id: 224a966ebf1aaec79beccbbd686fdf3d49267e06

Summary:

`include_directories` is old-style CMake which adds the include path to every file being compiled. This instead makes python, numpy and pybind11 into targets that only torch_python and caffe2_pybind_state are linked to. So, python libraries can't be accidentally included elsewhere.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/65654

Reviewed By: gchanan

Differential Revision: D31193205

Pulled By: malfet

fbshipit-source-id: 5c1b554a59d0e441a701a04ebb62f0032d38b208

Summary:

Syncing the nvfuser code base from the devel branch. Listing a few of our developments since the last sync:

- Extends support to normalization and reduction kernels.

- Multiple kernel launch for single `CudaFusionGroup`. Hierarchical caching system has been updated to cache graph segmentation.

- profile_ivalue is enabled to convert dynamic scalar into compile time constants, which are required by the codegen. (e.g. reduction axes).

To keep this PR simple and relatively review-free, we stripped most external changes and submitted them as separate PRs, so this gigantic PR is easier to handle.

internal updates are files located in:

1. updates in nvfuser codegen `torch/csrc/jit/codegen/cuda`

2. added nvfuser specific benchmarks `benchmarks/cpp/nvfuser`

3. nvfuser jit cpp tests `test/cpp/jit/test_gpu.cpp` `test/cpp/jit/test_gpu_shift.cpp` `test/cpp/jit/test_gpu_validator.h`

updates affecting integration:

1. profile_ivalue enabled for nvfuser. related changes are in `torch/csrc/jit/runtime/*`,

2. exposed a few more symbols `aten/src/ATen/core/*` used by codegen

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63745

Reviewed By: saketh-are

Differential Revision: D30752939

Pulled By: malfet

fbshipit-source-id: ce122e80f01bcd3865f5bd3c4dfde660665fd84c

Summary:

The library will no longer link properly on VS 2019 (14.29.30133). To

ensure that engineers building on Windows can use and debug with this

build type, incremental linking needs to be turned off for this build

flag.

Verified that this build type successfully builds, links, and provides

debuggable Python modules on Windows.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64892

Reviewed By: jbschlosser

Differential Revision: D30902565

Pulled By: malfet

fbshipit-source-id: e5286a4c6f45c7cbe4cdc1b98560129bd386970b

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63714

PocketFFT was disabled for CMake < 3.9 but CMake 3.11 is the first version to support `INCLUDE_DIRECTORIES` as a target property. So updating to CMake 3.10 causes the mobile builds to fail. Instead of limiting the CMake support, this just adds the include directory to the entire target.

Test Plan: Imported from OSS

Reviewed By: bdhirsh

Differential Revision: D30498369

Pulled By: malfet

fbshipit-source-id: 83372e29c477c97e7015763b7c29d6d7e456bcef

Summary:

We currently build breakpad from [this fork](https://github.com/driazati/breakpad) to include extra logic to restore signal handlers that were previously present. With some [new additions](https://github.com/google/breakpad/compare/main...driazati:main) this fork now includes a CMake based build, so we can add breakpad as a proper dependency rather than rely on including it in Docker images as a system library which is error prone (we have a bunch of images) and hard to extend to MacOS / Windows. This also includes some changes to the crash handling code to support MacOS / Windows in a similar way to Linux.

```python

import torch

# On Windows this writes crashes to C:\Users\<user>\AppData\pytorch_crashes

# On MacOS/Linux this writes crashes to /tmp/pytorch_crashes

torch.utils._crash_handler.enable_minidumps()

# Easy way to cause a segfault and trigger the handler

torch.bincount(input=torch.tensor([9223372036854775807]))

```

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63186

Reviewed By: malfet, seemethere

Differential Revision: D30318404

Pulled By: driazati

fbshipit-source-id: 0d7daf3701cfaba5451cc529a0730272ab1eb1dc

Summary:

When testing with clang-cl, the flag is added though it is unsupported and that generates a few warnings. Tried a few alternatives like https://cmake.org/cmake/help/latest/module/CheckLinkerFlag.html, but they just don't work.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62949

Reviewed By: zhouzhuojie, driazati

Differential Revision: D30359206

Pulled By: malfet

fbshipit-source-id: 1bd27ad5772fe6757fa8c3a4bddf904f88d70b7b

Summary:

Using https://github.com/mreineck/pocketfft

Also delete explicit installation of pocketfft during the build as it will be available via submodule

Limit PocketFFT support to cmake-3.10 or newer, as `set_source_files_properties` does not seem to work as expected with cmake-3.5

Partially addresses https://github.com/pytorch/pytorch/issues/62821

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62841

Reviewed By: seemethere

Differential Revision: D30140441

Pulled By: malfet

fbshipit-source-id: d1a1cf1b43375321f5ec5b3d0b538f58082f7825

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62419

This diff adds support for the CPU-only kineto profiler on mobile, thus

enabling chrome trace generation on mobile. This brings the cpp API for

mobile profiling on par with Torchscript.

This is done via:

1. Utilizing debug handle annotations in KinetoEvent.

2. Adding post processing capability, via callbacks, to

KinetoThreadLocalState

3. Creating a new RAII-style profiler, KinetoEdgeCPUProfiler, which can be

used in surrounding scope of model execution. This will write chrome

trace to the location specified in profiler constructor.

Test Plan:

MobileProfiler.ModuleHierarchy

Imported from OSS

Reviewed By: raziel

Differential Revision: D29993660

fbshipit-source-id: 0b44f52f9e9c5f5aff81ebbd9273c254c3c03299

Summary:

- HIP_VERSION semantic versioning will change in ROCm4.3. The changes essentially remove the dependency on HIP_VERSION provided in the hip header to keep code compatible with older and newer versions of ROCm.

- TORCH_HIP_VERSION is derived from HIP_VERSION_MAJOR and HIP_VERSION_MINOR
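To make the derivation concrete, here is a small Python sketch. The `major * 100 + minor` encoding is an assumption for illustration; the description above only says that the value is derived from the two components, and the function name `torch_hip_version` is hypothetical.

```python
def torch_hip_version(hip_version_major, hip_version_minor):
    """Combine major/minor into one comparable integer.

    Assumed encoding (not stated in the text): major * 100 + minor.
    """
    return hip_version_major * 100 + hip_version_minor


# Under this assumed encoding, version checks become plain integer comparisons.
print(torch_hip_version(4, 3) >= torch_hip_version(4, 2))
```

The point of deriving the value from the major and minor components is that it stays stable even if the packing of `HIP_VERSION` itself changes between ROCm releases.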

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62786

Reviewed By: bdhirsh

Differential Revision: D30281682

Pulled By: seemethere

fbshipit-source-id: e41e69fb9e13de5ddd1af99ba5bbdcbb7b64b673

Summary:

BLAS library is found by cmake/Dependencies.cmake and then

LAPACK library is found by FindLAPACK.cmake which in turn calls

FindBLAS.cmake. This means that we are searching for BLAS twice

and they might be different things. By setting a few variables,

this can be avoided.

cc seemethere

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49647

Reviewed By: seemethere, ejguan

Differential Revision: D29943680

Pulled By: malfet

fbshipit-source-id: 3cbc350ea645a1a28dd92c19e5ee7f9eecdeff59

Summary:

This PR: (1) enables the use of a system-provided Intel TBB for building PyTorch, (2) removes `tbb::task_scheduler_init` references since it has been removed from TBB a while ago, (3) marks the implementation of `_internal_set_num_threads` with a TODO as it requires a revision that fixes its thread allocation logic.

Tested with `test/run_test`; no new tests are introduced since there are no behavioral changes (removal of `tbb::task_scheduler_init` has no impact on the runtime behavior).

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61934

Reviewed By: malfet

Differential Revision: D29805416

Pulled By: cbalioglu

fbshipit-source-id: 22042b428b57b8fede9dfcc83878d679a19561dd

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61903

### Remaining Tasks

- [ ] Collate results of benchmarks on two Intel Xeon machines (with & without CUDA, to check if CPU throttling causes issues with GPUs) - make graphs, including Roofline model plots (Intel Advisor can't make them with libgomp, though, but with Intel OpenMP).

### Summary

1. This draft PR produces binaries with 3 types of ATen kernels - default, AVX2, AVX512. Using the environment variable `ATEN_AVX512_256=TRUE` also results in 3 types of kernels, but the compiler can use 32 ymm registers for AVX2, instead of the default 16. ATen kernels for `CPU_CAPABILITY_AVX` have been removed.

2. `nansum` is not using AVX512 kernel right now, as it has poorer accuracy for Float16, than does AVX2 or DEFAULT, whose respective accuracies aren't very good either (#59415).

It was more convenient to disable AVX512 dispatch for all dtypes of `nansum` for now.

3. On Windows, ATen Quantized AVX512 kernels are not being used, as quantization tests are flaky. If `--continue-through-failure` is used, then `test_compare_model_outputs_functional_static` fails. But if this test is skipped, `test_compare_model_outputs_conv_static` fails. If both these tests are skipped, then a third one fails. These are hard to debug right now due to not having access to a Windows machine with AVX512 support, so it was more convenient to disable AVX512 dispatch of all ATen Quantized kernels on Windows for now.

4. One test is currently being skipped -

[`test_lstm` in `quantization.bc`](https://github.com/pytorch/pytorch/issues/59098) - It fails only on Cascade Lake machines, irrespective of the `ATEN_CPU_CAPABILITY` used, because FBGEMM uses `AVX512_VNNI` on machines that support it. The value of `reduce_range` should be used as `False` on such machines.

The list of the changes is at https://gist.github.com/imaginary-person/4b4fda660534f0493bf9573d511a878d.

Credits to ezyang for proposing `AVX512_256` - these use AVX2 intrinsics but benefit from 32 registers, instead of the 16 ymm registers that AVX2 uses.

Credits to limo1996 for the initial proposal, and for optimizing `hsub_pd` & `hadd_pd`, which didn't have direct AVX512 equivalents, and are being used in some kernels. He also refactored `vec/functional.h` to remove duplicated code.

Credits to quickwritereader for helping fix 4 failing complex multiplication & division tests.

### Testing

1. `vec_test_all_types` was modified to test basic AVX512 support, as tests already existed for AVX2.

Only one test had to be modified, as it was hardcoded for AVX2.

2. `pytorch_linux_bionic_py3_8_gcc9_coverage_test1` & `pytorch_linux_bionic_py3_8_gcc9_coverage_test2` are now using `linux.2xlarge` instances, as they support AVX512. They were used for testing AVX512 kernels, as AVX512 kernels are being used by default in both of the CI checks. Windows CI checks had already been using machines with AVX512 support.

### Would the downclocking caused by AVX512 pose an issue?

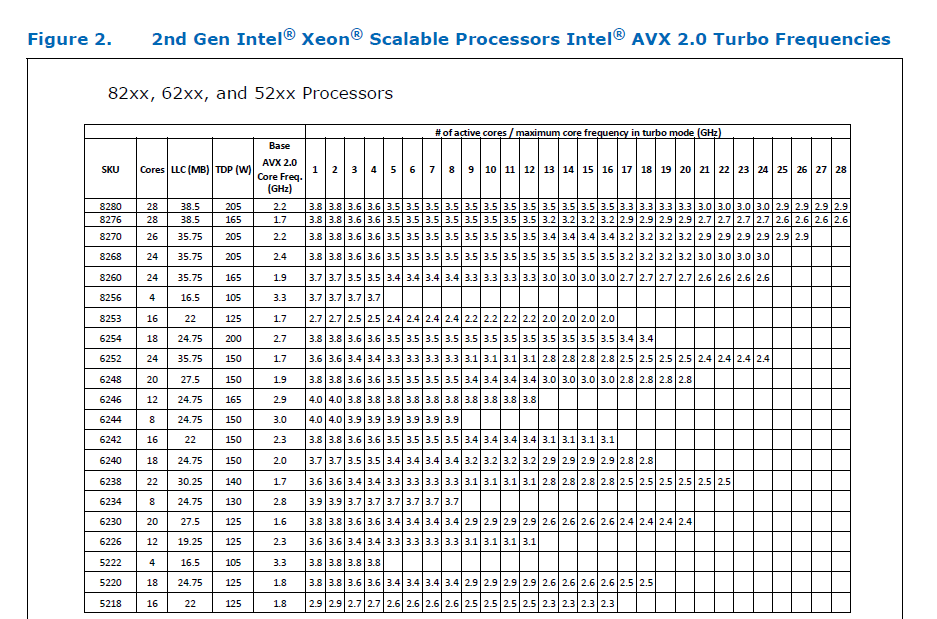

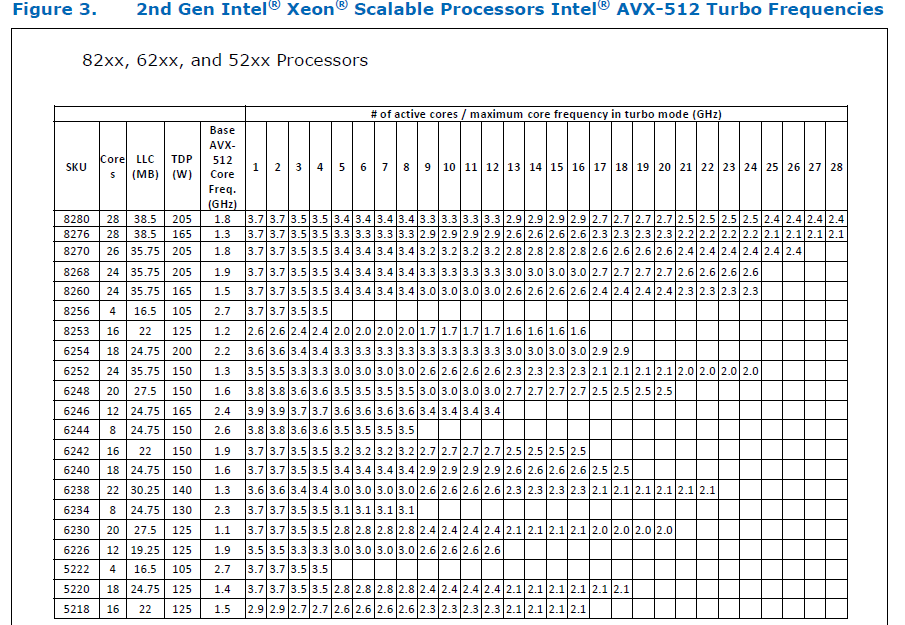

I think it's important to note that AVX2 causes downclocking as well, and the additional downclocking caused by AVX512 may not hamper performance on some Skylake machines & beyond, because of the double vector-size. I think that [this post with verifiable references is a must-read](https://community.intel.com/t5/Software-Tuning-Performance/Unexpected-power-vs-cores-profile-for-MKL-kernels-on-modern-Xeon/m-p/1133869/highlight/true#M6450). Also, AVX512 would _probably not_ hurt performance on a high-end machine, [but measurements are recommended](https://lemire.me/blog/2018/09/07/avx-512-when-and-how-to-use-these-new-instructions/). In case it does, `ATEN_AVX512_256=TRUE` can be used for building PyTorch, as AVX2 can then use 32 ymm registers instead of the default 16. [FBGEMM uses `AVX512_256` only on Xeon D processors](https://github.com/pytorch/FBGEMM/pull/209), which are said to have poor AVX512 performance.

This [official data](https://www.intel.com/content/dam/www/public/us/en/documents/specification-updates/xeon-scalable-spec-update.pdf) is for the Intel Skylake family, and the first link helps understand its significance. Cascade Lake & Ice Lake SP Xeon processors are said to be even better when it comes to AVX512 performance.

Here is the corresponding data for [Cascade Lake](https://cdrdv2.intel.com/v1/dl/getContent/338848) -

The corresponding data isn't publicly available for Intel Xeon SP 3rd gen (Ice Lake SP), but [Intel mentioned that the 3rd gen has frequency improvements pertaining to AVX512](https://newsroom.intel.com/wp-content/uploads/sites/11/2021/04/3rd-Gen-Intel-Xeon-Scalable-Platform-Press-Presentation-281884.pdf). Ice Lake SP machines also have 48 KB L1D caches, so that's another reason for AVX512 performance to be better on them.

### Is PyTorch always faster with AVX512?

No, but then PyTorch is not always faster with AVX2 either. Please refer to #60202. The benefit from vectorization is apparent with small tensors that fit in caches or in kernels that are more compute heavy. For instance, AVX512 or AVX2 would yield no benefit for adding two 64 MB tensors, but adding two 1 MB tensors would do well with AVX2, and even more so with AVX512.

It seems that memory-bound computations, such as adding two 64 MB tensors can be slow with vectorization (depending upon the number of threads used), as the effects of downclocking can then be observed.

Original pull request: https://github.com/pytorch/pytorch/pull/56992

Reviewed By: soulitzer

Differential Revision: D29266289

Pulled By: ezyang

fbshipit-source-id: 2d5e8d1c2307252f22423bbc14f136c67c3e6184

Summary:

This PR deletes some code in `MiscCheck.cmake` that performs the exact

same functionality as `FindAVX.cmake`.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61748

Reviewed By: ejguan

Differential Revision: D29791282

Pulled By: malfet

fbshipit-source-id: 6595fd1b61c8ae12b821fad8c9a34892dd52d213

Summary:

Not sure why (maybe from dependencies?) but it can certainly break package lookup upon re-entry of cmake.

So instead of checking whether they are defined, we should check whether there is any meaningful value inside.

Fixes https://github.com/pytorch/pytorch/issues/59887

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61230

Reviewed By: H-Huang

Differential Revision: D29668766

Pulled By: malfet

fbshipit-source-id: 79a59578740c4434327aff4f9a22eba9c4bf48d1

Summary:

This is a PR on build system that provides support for cross compiling on Jetson platforms.

The major change is:

1. Disable try runs for cross compiling in `COMPILER_WORKS`, `BLAS`, and `CUDA`, since a try run cannot be performed in a cross-compile setup.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59764

Reviewed By: soulitzer

Differential Revision: D29524363

Pulled By: malfet

fbshipit-source-id: f06d1ad30b704c9a17d77db686c65c0754db07b8

Summary:

This PR bumps the `googletest` version to v1.11.0.

To facilitate this change, `CAFFE2_ASAN_FLAG` and `CAFFE2_TSAN_FLAG` are divided into corresponding compiler and linker variants. This is required because `googletest v1.11.0` sets the `-Werror` flag. The `-pie` flag is a linker flag, and passing it to a compiler invocation results in a `-Wunused-command-line-argument` warning, which in turn will cause `googletest` to fail to build with ASAN.
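The split can be pictured with a short Python sketch. Everything here is illustrative: the function name `split_sanitizer_flags`, the set `LINKER_ONLY_FLAGS`, and the policy that the linker variant keeps every flag are assumptions, not the actual CMake logic.

```python
# Assumption: flags in this set are only meaningful to the linker.
LINKER_ONLY_FLAGS = frozenset({"-pie"})


def split_sanitizer_flags(flags):
    """Return (compiler_flags, linker_flags) for a combined flag list."""
    # The compiler must not see linker-only flags, otherwise it may emit an
    # unused-argument warning that becomes an error under -Werror.
    compiler = [flag for flag in flags if flag not in LINKER_ONLY_FLAGS]
    # The linker invocation keeps the full list in this sketch.
    linker = list(flags)
    return compiler, linker


compiler_flags, linker_flags = split_sanitizer_flags(
    ["-fsanitize=address", "-fPIE", "-pie"]
)
print(compiler_flags)
print(linker_flags)
```

With the flags separated this way, `-pie` never reaches a compile-only invocation, which is the situation that triggered the warning described above.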

Fixes https://github.com/pytorch/pytorch/issues/60865

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61395

Reviewed By: iramazanli

Differential Revision: D29620970

Pulled By: 1ntEgr8

fbshipit-source-id: cdb1d3d12e0fff834c2e62971e42c03f8c3fbf1b

Summary:

Needed on platforms that do not have MKL, such as aarch64 and M1

- Add `AT_POCKETFFT_ENABLED()` to Config.h.in

- Introduce torch._C.has_spectral that is true if PyTorch was compiled with either MKL or PocketFFT

- Modify spectral test to use skipCPUIfNoFFT instead of skipCPUIfNoMKL

Share implementation of `_out` functions as well as fft_fill_with_conjugate_symmetry_stub between MKL and PocketFFT implementations

Fixes https://github.com/pytorch/pytorch/issues/41592

Pull Request resolved: https://github.com/pytorch/pytorch/pull/60976

Reviewed By: walterddr, driazati, janeyx99, samestep

Differential Revision: D29466530

Pulled By: malfet

fbshipit-source-id: ac5edb3d40e7c413267825f92a5e8bc4bb249caf

Summary:

This is only important for builds where cuDNN is linked statically into libtorch_cpu.

Before this PR PyTorch wheels often accidentally contained several partial copies of cudnn_static library.

Splitting the interface into header only (cudnn-public) and library+headers(cudnn-private) prevents those from happening.

Preliminary step towards enabling optional linking whole cudnn_library to workaround issue reported in https://github.com/pytorch/pytorch/issues/50153

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59721

Reviewed By: ngimel

Differential Revision: D29000967

Pulled By: malfet

fbshipit-source-id: f054df92b265e9494076ab16c247427b39da9336

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59573

To do mobile selective build, we have several options:

1. static dispatch;

2. dynamic dispatch + static analysis (to create the dependency graph);

3. dynamic dispatch + tracing;

We are developing 3. For open source, we used to only support 1, and

currently we support both 1 and 2.

This file is only used for 2. It was introduced when we deprecated

the static dispatch (1). The motivation was to make sure we have a

low-friction selective build workflow for dynamic dispatch (2).

As the name indicates, it is the *default* dependency graph that users

can try if they don't bother to run the static analyzer themselves.

We have a CI to run the full workflow of 2 on every PR, which creates

the dependency graph on-the-fly instead of using the committed file.

Since the workflow to automatically update the file has been broken

for a while, it started to confuse other pytorch developers as people

are already manually editing it, and it might be broken for some models

already.

We reintroduced the static dispatch recently, so we decide to deprecate

this file now and automatically turn on static dispatch if users run

selective build without providing the static analysis graph.

The tracing-based selective build will be the ultimate solution we'd

like to provide for OSS, but it will take some more effort to polish

and release.

Differential Revision: D28941020

Test Plan: Imported from OSS

Reviewed By: dhruvbird

Pulled By: ljk53

fbshipit-source-id: 9977ab8568e2cc1bdcdecd3d22e29547ef63889e

Summary:

Before that, only dynamically linked OpenBLAS compiled with OpenMP could

be found.

Also get rid of hardcoded codepath for libgfortran.a in FindLAPACK.cmake

Only affects aarch64 linux builds

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59428

Reviewed By: agolynski

Differential Revision: D28891314

Pulled By: malfet

fbshipit-source-id: 5af55a14c85ac66551ad2805c5716bbefe8d55b2

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57080

ONNX optimizer is removed in ONNX 1.9

This PR removes ONNX optimizer from a C++ code path and uses `try-except` block in Python to keep it compatible with both ONNX-1.8 and 1.9.
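The Python side of such a compatibility shim might look like the sketch below. It is a minimal illustration, not the code from this PR: the helper name `load_onnx_optimizer` is hypothetical, and the only assumption about ONNX is that importing `onnx.optimizer` fails with `ImportError` when the module is absent.

```python
def load_onnx_optimizer():
    """Return the `onnx.optimizer` module if available, else None.

    Hypothetical helper: callers are expected to skip optimization passes
    when None is returned.
    """
    try:
        # Present in ONNX 1.8; removed in ONNX 1.9 per the description above.
        from onnx import optimizer
    except ImportError:
        return None
    return optimizer


optimizer = load_onnx_optimizer()
if optimizer is None:
    print("onnx.optimizer is unavailable; skipping optimization passes")
```

Guarding the import rather than checking a version string keeps the code working with either release without hard-coding version numbers.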

Test Plan: Imported from OSS

Reviewed By: heitorschueroff

Differential Revision: D28467330

Pulled By: malfet

fbshipit-source-id: 5e4669dd0537648898e593f9e253da18d6dc7568

Co-authored-by: neginraoof <neginmr@utexas.edu>

Co-authored-by: Nikita Shulga <nshulga@fb.com>

Summary:

Fixes upcoming changes that are part of ROCm 4.2 and affect PyTorch JIT.

- ROCM_VERSION macro must be available to both device and host compilation passes.

- Unifies some of CUDA and HIP differences in the code generated.

- NAN / POS_INFINITY / NEG_INFINITY

- Do not hipify `extern __shared__` -> `HIP_DYNAMIC_SHARED()` macro [deprecated]

- Differentiates bf16 codegen for HIP.

- Optionally provides missing macros when using hiprtc precompiled header feature.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57400

Reviewed By: ejguan

Differential Revision: D28421065

Pulled By: malfet

fbshipit-source-id: 215f476773c61d8b0d9d148a4e5f5d016f863074

Summary:

To align build behaviour with other third_party/ libraries,

introduce the `USE_SYSTEM_PYBIND11` build option, which is set to OFF by

default. This means PyTorch will be built with the bundled pybind11 even if

another version is already installed locally.

Fixes https://github.com/pytorch/pytorch/issues/58750

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58951

Reviewed By: driazati

Differential Revision: D28690411

Pulled By: malfet

fbshipit-source-id: e56b5a8f2a23ee1834b2a6d3807f287149decf8c

Summary:

Library linking order matters during static linking

Not sure whether it's a bug or a feature, but if cublas is referenced

before CuDNN, it will be partially statically linked into the library,

even if it is not used

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58287

Reviewed By: janeyx99

Differential Revision: D28433165

Pulled By: malfet

fbshipit-source-id: 8dffa0533075126dc383428f838f7d048074205c

Summary:

While trying to build PyTorch with BLIS as the backend library,

we found a build issue due to some missing include files.

This was caused by a missing directory in the search path.

This patch adds that path in FindBLIS.cmake.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58166

Reviewed By: zou3519

Differential Revision: D28640460

Pulled By: malfet

fbshipit-source-id: d0cd3a680718a0a45788c46a502871b88fbadd52

Summary:

Since v1.7, oneDNN (MKL-DNN) has supported the use of Compute Library

for the Arm architecture to provide optimised convolution primitives

on AArch64.

This change enables the use of Compute Library in the PyTorch build.

Following the approach used to enable the use of CBLAS in MKLDNN,

it is enabled by setting the env vars USE_MKLDNN and USE_MKLDNN_ACL.

The location of the Compute Library build must be set using `ACL_ROOT_DIR`.

This is an extension of the work in https://github.com/pytorch/pytorch/pull/50400

which added support for the oneDNN/MKL-DNN backend on AArch64.

_Note: this assumes that Compute Library has been built and installed at

ACL_ROOT_DIR. Compute library can be downloaded here:

`https://github.com/ARM-software/ComputeLibrary`_

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55913

Reviewed By: ailzhang

Differential Revision: D28559516

Pulled By: malfet

fbshipit-source-id: 29d24996097d0a54efc9ab754fb3f0bded290005

Summary:

This PR is step 0 of adding PyTorch convolution bindings using the cuDNN frontend. The cuDNN frontend is the recommended way of using cuDNN v8 API. It is supposed to have faster release cycles, so that, for example, if people find a specific kernel has a bug, they can report it, and that kernel will be blocked in the cuDNN frontend and frameworks could just update that submodule without the need for waiting for a whole cuDNN release.

The work is not complete, and this PR is only step 0.

**What this PR does:**

- Add cudnn-frontend as a submodule.

- Modify cmake to build that submodule.

- Add bindings for convolution forward in `Conv_v8.cpp`, which is disabled by a macro by default.

- Tested manually by enabling the macro and running `test_nn.py`. All tests pass except those mentioned below.

**What this PR doesn't:**

- Only convolution forward, no backward. The backward will use v7 API.

- No 64bit-indexing support for some configurations. This is a known issue in cuDNN, and will be fixed in a later cuDNN version. PyTorch will not implement any workaround for this issue; instead, the v8 API should be disabled on problematic cuDNN versions.

- No test beyond PyTorch's unit tests.

- Not tested for correctness on real models.

- Not benchmarked for performance.

- Benchmark cache is not thread-safe. (This is marked as `FIXME` in the code, and will be fixed in a follow-up PR)

- cuDNN benchmark is not supported.

- There are failing tests, which will be resolved later:

```

FAILED test/test_nn.py::TestNNDeviceTypeCUDA::test_conv_cudnn_nhwc_cuda_float16 - AssertionError: False is not true : Tensors failed to compare as equal!With rtol=0.001 and atol=1e-05, found 32 element(s) (out of 32) whose difference(s) exceeded the margin of error (in...

FAILED test/test_nn.py::TestNNDeviceTypeCUDA::test_conv_cudnn_nhwc_cuda_float32 - AssertionError: False is not true : Tensors failed to compare as equal!With rtol=1.3e-06 and atol=1e-05, found 32 element(s) (out of 32) whose difference(s) exceeded the margin of error (...

FAILED test/test_nn.py::TestNNDeviceTypeCUDA::test_conv_large_cuda - RuntimeError: CUDNN_BACKEND_OPERATION: cudnnFinalize Failed cudnn_status: 9

FAILED test/test_nn.py::TestNN::test_Conv2d_depthwise_naive_groups_cuda - AssertionError: False is not true : Tensors failed to compare as equal!With rtol=0 and atol=1e-05, found 64 element(s) (out of 64) whose difference(s) exceeded the margin of error (including 0 an...

FAILED test/test_nn.py::TestNN::test_Conv2d_deterministic_cudnn - RuntimeError: not supported yet

FAILED test/test_nn.py::TestNN::test_ConvTranspose2d_groups_cuda_fp32 - RuntimeError: cuDNN error: CUDNN_STATUS_BAD_PARAM

FAILED test/test_nn.py::TestNN::test_ConvTranspose2d_groups_cuda_tf32 - RuntimeError: cuDNN error: CUDNN_STATUS_BAD_PARAM

```

Although this is not a complete implementation of cuDNN v8 API binding, I still want to merge this first. This would allow me to do small and incremental work, for the ease of development and review.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51390

Reviewed By: malfet

Differential Revision: D28513167

Pulled By: ngimel

fbshipit-source-id: 9cc20c9dec5bbbcb1f94ac9e0f59b10c34f62740

Summary:

Expanding support to all builds

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56323

Test Plan: CI

Reviewed By: malfet

Differential Revision: D28171478

Pulled By: ilia-cher

fbshipit-source-id: 16bc752d1be3cbaeda5316f5d8a687ae05a83d22

Summary:

This adds some more compiler warnings ignores for everything that happens on a standard CPU build (CUDA builds still have a bunch of warnings so we can't turn on `-Werror` everywhere yet).

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56630

Pulled By: driazati

Reviewed By: malfet

Differential Revision: D28005063

fbshipit-source-id: 541ed415eb0470ddf7e08c22c5eb6da9db26e9a0

Summary:

[distutils](https://docs.python.org/3/library/distutils.html) is on its way out and will be deprecated-on-import for Python 3.10+ and removed in Python 3.12 (see [PEP 632](https://www.python.org/dev/peps/pep-0632/)). There's no reason for us to keep it around since all the functionality we want from it can be found in `setuptools` / `sysconfig`. `setuptools` includes a copy of most of `distutils` (which is fine to use according to the PEP), that it uses under the hood, so this PR also uses that in some places.
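
As a rough illustration of the kind of replacement involved, the standard-library `sysconfig` module can answer questions that were often asked of `distutils.sysconfig`. The two lookups below are illustrative choices, not a list of what this PR actually changed.

```python
import sysconfig

# Directory containing Python.h; a common use of distutils.sysconfig.get_python_inc().
include_dir = sysconfig.get_paths()["include"]

# Filename suffix used for compiled extension modules on this interpreter.
ext_suffix = sysconfig.get_config_var("EXT_SUFFIX")

print(include_dir, ext_suffix)
```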

Fixes #56527

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57040

Pulled By: driazati

Reviewed By: nikithamalgifb

Differential Revision: D28051356

fbshipit-source-id: 1ca312219032540e755593e50da0c9e23c62d720

Summary:

Revert "Revert D27449031 (2a7df657fe): [pytorch][PR] [ROCm] use hiprtc precompiled header". Reland PR https://github.com/pytorch/pytorch/issues/54350.

This reverts commit 204ac21bf1.

The original PR was reverted under suspicion that it was causing CI instability, but it was instead due to a hardware failure.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55965

Reviewed By: jbschlosser

Differential Revision: D27755907

Pulled By: malfet

fbshipit-source-id: 75bf0b9d888df3dee62f00a366b1123757e0474e

Summary:

MAGMA_HOME was previously set for the ubuntu-rocm/Dockerfile. However, this missed centos builds as well as any builds that do not use the CI image environments.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54511

Reviewed By: jbschlosser

Differential Revision: D27755983

Pulled By: malfet

fbshipit-source-id: 1ffd2cd100f4221c2bb64e6915fa3372ee1f6247

Summary:

Many model pipelines/workflows don't use MAGMA even though it is included in the build by default. Leaving MAGMA kernels out of the build can save 60+MB of GPU memory when loading `libtorch_cuda.so` (tested on V100, current upstream master).

A current sharp corner of this flag is that toggling it when rebuilding requires `torch/include/THC/THCGeneral.h` to be *manually* deleted by the user, as even running `make clean` or `setup.py` with `--cmake` does not properly regenerate it with the appropriate substitution for `#cmakedefine USE_MAGMA`. Is there a way to force the regeneration of the header during a rebuild?

CC malfet ptrblck

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55994

Reviewed By: mruberry

Differential Revision: D27766287

Pulled By: malfet

fbshipit-source-id: 93deca57befa0febb9c5b7875ecf0015c547d421

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/55814

I don't really know if the original issue is resolved but let's just

check and see if this passes CI so that we can potentially get some

speed up on our builds

Signed-off-by: Eli Uriegas <eliuriegas@fb.com>

Test Plan: Imported from OSS

Reviewed By: walterddr

Differential Revision: D27715734

Pulled By: seemethere

fbshipit-source-id: a8f90774dfd25b0abf8e57283fe3591a8d8f3c4b

Summary:

HIP's runtime compiler (hiprtc) is adding support for precompiled HIP headers in the ROCm 4.2 release. Conditionally add support for this feature. Using this feature will improve the ROCm torch wheel user experience; users will no longer need to install HIP headers separately to use torch JIT features.

The use of this feature is conditionalized on a new ROCM_VERSION macro.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54350

Reviewed By: H-Huang

Differential Revision: D27449031

Pulled By: malfet

fbshipit-source-id: 81a8d7847a47ce2bb253d1ea58740ef66ed154a3

Summary:

These changes provide the user with an additional option to choose the DNNL+BLIS path for PyTorch.

This assumes BLIS is already downloaded or built from source and the necessary library file is available at the location: $BLIS_HOME/lib/libblis.so and include files are available at: $BLIS_HOME/include/blis/blis.h and $BLIS_HOME/include/blis/cblas.h

To build PyTorch with MKLDNN+BLIS, export the variables below and then proceed with the regular installation procedure:

$ export BLIS_HOME=path-to-BLIS

$ export PATH=$BLIS_HOME/include/blis:$PATH LD_LIBRARY_PATH=$BLIS_HOME/lib:$LD_LIBRARY_PATH

$ export BLAS=BLIS USE_MKLDNN_CBLAS=ON WITH_BLAS=blis

$ python setup.py install

CPU only Dockerfile to build PyTorch with AMD BLIS is available at : docker/cpu-blis/Dockerfile

Example command line to build using the Dockerfile:

sudo DOCKER_BUILDKIT=1 docker build . -t docker-image-repo-name

Example command line to run the built docker container:

sudo docker run --name container-name -it docker-image-repo-name

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54953

Reviewed By: glaringlee

Differential Revision: D27466799

Pulled By: malfet

fbshipit-source-id: e03bae9561be3a67429df3b1be95a79005c63050

Summary:

Fixes the build of projects that depend on torch, such as torchaudio. Otherwise torchaudio will complain that gloo_hip is missing.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54727

Reviewed By: H-Huang

Differential Revision: D27361513

Pulled By: ezyang

fbshipit-source-id: 714cc2db23e7adf3e89303e941b78c27625b9460

Summary:

*Context:* https://github.com/pytorch/pytorch/issues/53406 added a lint for trailing whitespace at the ends of lines. However, in order to pass FB-internal lints, that PR also had to normalize the trailing newlines in four of the files it touched. This PR adds an OSS lint to normalize trailing newlines.

The changes to the following files (made in 54847d0adb9be71be4979cead3d9d4c02160e4cd) are the only manually-written parts of this PR:

- `.github/workflows/lint.yml`

- `mypy-strict.ini`

- `tools/README.md`

- `tools/test/test_trailing_newlines.py`

- `tools/trailing_newlines.py`

I would have liked to make this just a shell one-liner like the other three similar lints, but nothing I could find quite fit the bill. Specifically, all the answers I tried from the following Stack Overflow questions were far too slow (at least a minute and a half to run on this entire repository):

- [How to detect file ends in newline?](https://stackoverflow.com/q/38746)

- [How do I find files that do not end with a newline/linefeed?](https://stackoverflow.com/q/4631068)

- [How to list all files in the Git index without newline at end of file](https://stackoverflow.com/q/27624800)

- [Linux - check if there is an empty line at the end of a file [duplicate]](https://stackoverflow.com/q/34943632)

- [git ensure newline at end of each file](https://stackoverflow.com/q/57770972)

To avoid giving false positives during the few days after this PR is merged, we should probably only merge it after https://github.com/pytorch/pytorch/issues/54967.
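
A minimal sketch of how such a check can stay fast: only the last two bytes of each file are needed. This is not the contents of `tools/trailing_newlines.py`. The function names are hypothetical, and the rule (empty file, or exactly one final newline) is an assumption about what "correct" means here.

```python
import os


def has_correct_trailing_newline(tail: bytes) -> bool:
    """True if the data is empty or ends with exactly one newline."""
    if not tail:
        return True
    return tail.endswith(b"\n") and not tail.endswith(b"\n\n")


def file_is_ok(path: str) -> bool:
    """Check a file by reading at most its final two bytes."""
    size = os.path.getsize(path)
    with open(path, "rb") as f:
        f.seek(max(0, size - 2))
        return has_correct_trailing_newline(f.read())
```

Seeking to the end avoids reading whole files, which matters when the check runs over an entire repository.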

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54737

Test Plan:

Running the shell script from the "Ensure correct trailing newlines" step in the `quick-checks` job of `.github/workflows/lint.yml` should print no output and exit in a fraction of a second with a status of 0. That was not the case prior to this PR, as shown by this failing GHA workflow run on an earlier draft of this PR:

- https://github.com/pytorch/pytorch/runs/2197446987?check_suite_focus=true

In contrast, this run (after correcting the trailing newlines in this PR) succeeded:

- https://github.com/pytorch/pytorch/pull/54737/checks?check_run_id=2197553241

To unit-test `tools/trailing_newlines.py` itself (this is run as part of our "Test tools" GitHub Actions workflow):

```

python tools/test/test_trailing_newlines.py

```

Reviewed By: malfet

Differential Revision: D27409736

Pulled By: samestep

fbshipit-source-id: 46f565227046b39f68349bbd5633105b2d2e9b19

Summary:

This PR is a follow up to https://github.com/pytorch/pytorch/pull/53408.

It only loads hipfft if the version is rocm 4.1 or after and stops loading rocfft. This was done to resolve some issues observed in our internal ci due to conflicts.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54349

Reviewed By: ezyang

Differential Revision: D27374252

Pulled By: ngimel

fbshipit-source-id: 724e80df5011ea8fabd81739e18ae8a13d3a7ea0

Summary:

https://ccache.dev/ is a compiler cache that speeds up subsequent builds. Auto-detecting ccache ensures that it is used on systems where it is available, greatly improving build times for developers. There is no risk in enabling ccache in practice. Please refer to https://ccache.dev/ for a short summary / motivation
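
The detection idea can be sketched in a few lines. The PR itself does this in CMake; the Python below is only an illustration. `ccache_cmake_args` is a hypothetical helper that emits the standard `CMAKE_<LANG>_COMPILER_LAUNCHER` settings when `ccache` is found on `PATH`.

```python
import shutil


def ccache_cmake_args(which=shutil.which):
    """Return CMake launcher flags if ccache is on PATH, else an empty list."""
    ccache = which("ccache")
    if ccache is None:
        return []
    return [
        f"-DCMAKE_C_COMPILER_LAUNCHER={ccache}",
        f"-DCMAKE_CXX_COMPILER_LAUNCHER={ccache}",
    ]


print(ccache_cmake_args())
```

The `which` parameter exists only so the lookup can be replaced in tests.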

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49389

Reviewed By: ejguan

Differential Revision: D27169957

Pulled By: malfet

fbshipit-source-id: 673b60bbceb0d323901c8a992a75792c6da9b805

Summary:

This PR makes changes to how hipfft is loaded in pytorch. hipfft is packaged in a separate library to rocfft following rocm 4.1.

We check the rocm version and if it is past rocm 4.1 we load hipfft in addition to rocfft.
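
The version gate can be pictured as follows. This is an illustrative sketch rather than the build logic from this PR: `fft_libraries` is a hypothetical name, and treating 4.1 itself as included is an assumption.

```python
def fft_libraries(rocm_version):
    """rocm_version is a (major, minor) tuple; returns the FFT libraries to load."""
    libs = ["rocfft"]
    # Assumption: ROCm 4.1 itself already ships hipfft as a separate library.
    if rocm_version >= (4, 1):
        libs.append("hipfft")
    return libs
```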

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53408

Reviewed By: albanD

Differential Revision: D26952702

Pulled By: malfet

fbshipit-source-id: f42be304b587c060816e39d36f5c1a2cdc37bfab

Summary:

Context: https://github.com/pytorch/pytorch/pull/53299#discussion_r587882857

These are the only hand-written parts of this diff:

- the addition to `.github/workflows/lint.yml`

- the file endings changed in these four files (to appease FB-internal land-blocking lints):

- `GLOSSARY.md`

- `aten/src/ATen/core/op_registration/README.md`

- `scripts/README.md`

- `torch/csrc/jit/codegen/fuser/README.md`

The rest was generated by running this command (on macOS):

```

git grep -I -l ' $' -- . ':(exclude)**/contrib/**' ':(exclude)third_party' | xargs gsed -i 's/ *$//'

```
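
For readers without `gsed`, the substitution `s/ *$//` corresponds roughly to the Python below. It is a sketch of the same transformation, not something that was run for this diff. Like the sed expression, it strips trailing spaces only and leaves tabs alone.

```python
import re


def strip_trailing_spaces(text):
    """Remove runs of spaces at the end of every line."""
    return re.sub(r" +$", "", text, flags=re.MULTILINE)
```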

I looked over the auto-generated changes and didn't see anything that looked problematic.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53406

Test Plan:

This run (after adding the lint but before removing existing trailing spaces) failed:

- https://github.com/pytorch/pytorch/runs/2043032377

This run (on the tip of this PR) succeeded:

- https://github.com/pytorch/pytorch/runs/2043296348

Reviewed By: walterddr, seemethere

Differential Revision: D26856620

Pulled By: samestep

fbshipit-source-id: 3f0de7f7c2e4b0f1c089eac9b5085a58dd7e0d97

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53174

Enable Kineto also in the CPU builds (non-mobile, non-Windows(atm))

Test Plan: CI

Reviewed By: gdankel

Differential Revision: D26776112

Pulled By: ilia-cher

fbshipit-source-id: 8733f65c2993105136c853f2a7b6e497d0fa53bf

Summary:

Fix accidental regression introduced by https://github.com/pytorch/pytorch/issues/47940

`FIND_PACKAGE(OpenBLAS)` does not validate that discovered library can actually be used, while `check_fortran_libraries` does that

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53168

Test Plan: Build PyTorch with static OpenBLAS and check that `torch.svd(torch.ones(3, 3)).S` does not raise an exception

Reviewed By: walterddr

Differential Revision: D26772345

Pulled By: malfet

fbshipit-source-id: 3e4675c176b30dfe4f0490d7d3dfe4f9a4037134

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51419

## Summary

1. Add an option `BUILD_LITE_INTERPRETER` in `caffe2/CMakeLists.txt` and set `OFF` as default.

2. Update `build_android.sh` with an argument to switch `BUILD_LITE_INTERPRETER`, `OFF` as default.

3. Add a mini demo app `lite_interpreter_demo` linked with `libtorch` library, which can be used for quick test.

## Test Plan

Built lite interpreter version of libtorch and test with Image Segmentation demo app ([android version](https://github.com/pytorch/android-demo-app/tree/master/ImageSegmentation)/[ios version](https://github.com/pytorch/ios-demo-app/tree/master/ImageSegmentation))

### Android

1. **Prepare model**: Prepare the lite interpreter version of the model by running the script below, which generates the scripted models `deeplabv3_scripted.pt` and `deeplabv3_scripted.ptl`

```

import torch

model = torch.hub.load('pytorch/vision:v0.7.0', 'deeplabv3_resnet50', pretrained=True)

model.eval()

scripted_module = torch.jit.script(model)

# Export full jit version model (not compatible with the lite interpreter), kept here for comparison

scripted_module.save("deeplabv3_scripted.pt")

# Export lite interpreter version model (compatible with lite interpreter)

scripted_module._save_for_lite_interpreter("deeplabv3_scripted.ptl")

```

2. **Build libtorch lite for android**: Build libtorch for android for all 4 android abis (armeabi-v7a, arm64-v8a, x86, x86_64) with `BUILD_LITE_INTERPRETER=1 ./scripts/build_pytorch_android.sh`. This PR was tested on a Pixel 4 emulator with x86, so the command `BUILD_LITE_INTERPRETER=1 ./scripts/build_pytorch_android.sh x86` was used to specify the abi and save build time. After the build finishes, it shows the library path:

```

...

BUILD SUCCESSFUL in 55s

134 actionable tasks: 22 executed, 112 up-to-date

+ find /Users/chenlai/pytorch/android -type f -name '*aar'

+ xargs ls -lah

-rw-r--r-- 1 chenlai staff 13M Feb 11 11:48 /Users/chenlai/pytorch/android/pytorch_android/build/outputs/aar/pytorch_android-release.aar

-rw-r--r-- 1 chenlai staff 36K Feb 9 16:45 /Users/chenlai/pytorch/android/pytorch_android_torchvision/build/outputs/aar/pytorch_android_torchvision-release.aar

```

3. **Use the PyTorch Android libraries built from source in the ImageSegmentation app**: Create a folder `libs` whose path from the repository root is `ImageSegmentation/app/libs`. Copy `pytorch_android-release` to the path `ImageSegmentation/app/libs/pytorch_android-release.aar`. Copy `pytorch_android_torchvision` (downloaded from [here](https://oss.sonatype.org/#nexus-search;quick~torchvision_android)) to the path `ImageSegmentation/app/libs/pytorch_android_torchvision.aar`. Update the `dependencies` part of `ImageSegmentation/app/build.gradle` to

```

dependencies {

implementation 'androidx.appcompat:appcompat:1.2.0'

implementation 'androidx.constraintlayout:constraintlayout:2.0.2'

testImplementation 'junit:junit:4.12'

androidTestImplementation 'androidx.test.ext:junit:1.1.2'

androidTestImplementation 'androidx.test.espresso:espresso-core:3.3.0'

implementation(name:'pytorch_android-release', ext:'aar')

implementation(name:'pytorch_android_torchvision', ext:'aar')

implementation 'com.android.support:appcompat-v7:28.0.0'

implementation 'com.facebook.fbjni:fbjni-java-only:0.0.3'

}

```

Update `allprojects` part in `ImageSegmentation/build.gradle` to

```

allprojects {

repositories {

google()

jcenter()

flatDir {

dirs 'libs'

}

}

}

```

4. **Update model loader api**: Update `ImageSegmentation/app/src/main/java/org/pytorch/imagesegmentation/MainActivity.java` by

4.1 Add new import: `import org.pytorch.LiteModuleLoader;`

4.2 Replace the way to load pytorch lite model

```

// mModule = Module.load(MainActivity.assetFilePath(getApplicationContext(), "deeplabv3_scripted.pt"));

mModule = LiteModuleLoader.load(MainActivity.assetFilePath(getApplicationContext(), "deeplabv3_scripted.ptl"));

```

5. **Test app**: Build and run the ImageSegmentation app in Android Studio.

### iOS

1. **Prepare model**: Same as Android.

2. **Build libtorch lite for ios** `BUILD_PYTORCH_MOBILE=1 IOS_PLATFORM=SIMULATOR BUILD_LITE_INTERPRETER=1 ./scripts/build_ios.sh`

3. **Remove Cocoapods from the project**: run `pod deintegrate`

4. **Link ImageSegmentation demo app with the custom built library**:

Open your project in XCode, go to your project Target’s **Build Phases - Link Binaries With Libraries**, click the **+** sign and add all the library files located in `build_ios/install/lib`. Navigate to the project **Build Settings**, set the value **Header Search Paths** to `build_ios/install/include` and **Library Search Paths** to `build_ios/install/lib`.

In the build settings, search for **other linker flags**. Add a custom linker flag below

```

-all_load

```

Finally, disable bitcode for your target by selecting the Build Settings, searching for Enable Bitcode, and set the value to No.

5. **Update library and api**

5.1 Update `TorchModule.mm`

To use the custom built libraries in the project, replace `#import <LibTorch/LibTorch.h>` (in `TorchModule.mm`), which is needed when using LibTorch via Cocoapods, with the code below:

```

//#import <LibTorch/LibTorch.h>

#include "ATen/ATen.h"

#include "caffe2/core/timer.h"

#include "caffe2/utils/string_utils.h"

#include "torch/csrc/autograd/grad_mode.h"

#include "torch/script.h"

#include <torch/csrc/jit/mobile/function.h>

#include <torch/csrc/jit/mobile/import.h>

#include <torch/csrc/jit/mobile/interpreter.h>

#include <torch/csrc/jit/mobile/module.h>

#include <torch/csrc/jit/mobile/observer.h>

```

5.2 Update `ViewController.swift`

```

// if let filePath = Bundle.main.path(forResource:

// "deeplabv3_scripted", ofType: "pt"),

// let module = TorchModule(fileAtPath: filePath) {

// return module

// } else {

// fatalError("Can't find the model file!")

// }

if let filePath = Bundle.main.path(forResource:

"deeplabv3_scripted", ofType: "ptl"),

let module = TorchModule(fileAtPath: filePath) {

return module

} else {

fatalError("Can't find the model file!")

}

```

### Unit test

Add `test/cpp/lite_interpreter`, with one unit test `test_cores.cpp` and a light model `sequence.ptl` to test `_load_for_mobile()`, `bc.find_method()` and `bc.forward()` functions.

### Size:

**With the change:**

Android:

x86: `pytorch_android-release.aar` (**13.8 MB**)

IOS:

`pytorch/build_ios/install/lib` (lib: **66 MB**):

```

(base) chenlai@chenlai-mp lib % ls -lh

total 135016

-rw-r--r-- 1 chenlai staff 3.3M Feb 15 20:45 libXNNPACK.a

-rw-r--r-- 1 chenlai staff 965K Feb 15 20:45 libc10.a

-rw-r--r-- 1 chenlai staff 4.6K Feb 15 20:45 libclog.a

-rw-r--r-- 1 chenlai staff 42K Feb 15 20:45 libcpuinfo.a

-rw-r--r-- 1 chenlai staff 39K Feb 15 20:45 libcpuinfo_internals.a

-rw-r--r-- 1 chenlai staff 1.5M Feb 15 20:45 libeigen_blas.a

-rw-r--r-- 1 chenlai staff 148K Feb 15 20:45 libfmt.a

-rw-r--r-- 1 chenlai staff 44K Feb 15 20:45 libpthreadpool.a

-rw-r--r-- 1 chenlai staff 166K Feb 15 20:45 libpytorch_qnnpack.a

-rw-r--r-- 1 chenlai staff 384B Feb 15 21:19 libtorch.a

-rw-r--r-- 1 chenlai staff **60M** Feb 15 20:47 libtorch_cpu.a

```

`pytorch/build_ios/install`:

```

(base) chenlai@chenlai-mp install % du -sh *

14M include

66M lib

2.8M share

```

**Master (baseline):**

Android:

x86: `pytorch_android-release.aar` (**16.2 MB**)

IOS:

`pytorch/build_ios/install/lib` (lib: **84 MB**):

```

(base) chenlai@chenlai-mp lib % ls -lh

total 172032

-rw-r--r-- 1 chenlai staff 3.3M Feb 17 22:18 libXNNPACK.a

-rw-r--r-- 1 chenlai staff 969K Feb 17 22:18 libc10.a

-rw-r--r-- 1 chenlai staff 4.6K Feb 17 22:18 libclog.a

-rw-r--r-- 1 chenlai staff 42K Feb 17 22:18 libcpuinfo.a

-rw-r--r-- 1 chenlai staff 1.5M Feb 17 22:18 libeigen_blas.a

-rw-r--r-- 1 chenlai staff 44K Feb 17 22:18 libpthreadpool.a

-rw-r--r-- 1 chenlai staff 166K Feb 17 22:18 libpytorch_qnnpack.a

-rw-r--r-- 1 chenlai staff 384B Feb 17 22:19 libtorch.a

-rw-r--r-- 1 chenlai staff 78M Feb 17 22:19 libtorch_cpu.a

```

`pytorch/build_ios/install`:

```

(base) chenlai@chenlai-mp install % du -sh *

14M include

84M lib

2.8M share

```
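
To put the numbers above in relative terms, here is a small worked calculation using only the sizes reported in this summary. `reduction_percent` is a helper introduced for illustration.

```python
def reduction_percent(baseline, new):
    """Relative size reduction of `new` versus `baseline`, in percent."""
    return (baseline - new) / baseline * 100


android = reduction_percent(16.2, 13.8)  # x86 pytorch_android-release.aar, MB
ios = reduction_percent(84, 66)          # build_ios/install/lib, MB

print(f"Android x86 .aar: {android:.1f}% smaller")  # 14.8% smaller
print(f"iOS lib folder: {ios:.1f}% smaller")        # 21.4% smaller
```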

Test Plan: Imported from OSS

Reviewed By: iseeyuan

Differential Revision: D26518778

Pulled By: cccclai

fbshipit-source-id: 4503ffa1f150ecc309ed39fb0549e8bd046a3f9c

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51957

This is a simplified version of #51554.

Compared to #51554, this version only supports statically dispatching to

a specific backend. The benefit is that it skips the dispatch key

computation logic and thus has less framework overhead. The downside is that

if input tensors do not match the specified backend it will throw an error

instead of falling back to regular dispatch.

Sample code:

```

Tensor empty(IntArrayRef size, TensorOptions options, c10::optional<MemoryFormat> memory_format) {

return at::cpu::empty(size, options, memory_format);

}

// aten::conj(Tensor(a) self) -> Tensor(a)

Tensor conj(const Tensor & self) {

return at::math::conj(self);

}

// aten::conj.out(Tensor self, *, Tensor(a!) out) -> Tensor(a!)

Tensor & conj_out(Tensor & out, const Tensor & self) {

return at::cpu::conj_out(out, self);

}

// aten::conj.out(Tensor self, *, Tensor(a!) out) -> Tensor(a!)

Tensor & conj_outf(const Tensor & self, Tensor & out) {

return at::cpu::conj_out(out, self);

}

// aten::_conj(Tensor self) -> Tensor

Tensor _conj(const Tensor & self) {

return at::defaultbackend::_conj(self);

}

```

For ops without the specific backend dispatch, it will throw an error:

```

// aten::_use_cudnn_ctc_loss(Tensor log_probs, Tensor targets, int[] input_lengths, int[] target_lengths, int blank) -> bool

bool _use_cudnn_ctc_loss(const Tensor & log_probs, const Tensor & targets, IntArrayRef input_lengths, IntArrayRef target_lengths, int64_t blank) {

TORCH_CHECK(false, "Static dispatch does not support _use_cudnn_ctc_loss for CPU.");

}

```

Differential Revision: D26337857

Test Plan: Imported from OSS

Reviewed By: bhosmer

Pulled By: ljk53

fbshipit-source-id: a8e95799115c349de3c09f04a26b01d21a679364

Summary:

Fixes following error during static linking, by enforcing that cudart dependency is put after cublasLt

```

/usr/bin/ld: /usr/local/cuda/lib64/libcublasLt_static.a(libcublasLt_static.a.o): undefined reference to symbol 'cudaStreamWaitEvent@libcudart.so.11.0'

/usr/local/cuda/lib64/libcudart.so: error adding symbols: DSO missing from command line

```
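
Why the order matters can be shown with a toy model of a single left-to-right link pass. This is a deliberate simplification (it treats both libraries as archives and ignores the shared-library details in the error above), and `link` is a hypothetical function written for illustration. The symbol names come from the error message and the cuBLASLt API.

```python
def link(order, libraries, entry_undefined):
    """Scan libraries once, left to right; return symbols left undefined."""
    undefined = set(entry_undefined)
    defined = set()
    for name in order:
        provides, needs = libraries[name]
        # A library is pulled in only if it defines something undefined right now.
        if undefined & provides:
            defined |= provides
            undefined |= needs
            undefined -= defined
    return undefined


libraries = {
    # name: (symbols provided, symbols required)
    "cublasLt": ({"cublasLtMatmul"}, {"cudaStreamWaitEvent"}),
    "cudart": ({"cudaStreamWaitEvent"}, set()),
}

good = link(["cublasLt", "cudart"], libraries, {"cublasLtMatmul"})
bad = link(["cudart", "cublasLt"], libraries, {"cublasLtMatmul"})
print(good, bad)
```

With `cudart` listed first, nothing needs it yet, so it is skipped, and the reference introduced later by `cublasLt` is never resolved.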

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52509

Reviewed By: janeyx99

Differential Revision: D26547622

Pulled By: malfet

fbshipit-source-id: 4e17f18cf0ab5479a549299faf2583a79fbda4b9

Summary:

When compiling libtorch on macOS there is the option to use the `vecLib` BLAS library from Apple's [Accelerate](https://developer.apple.com/documentation/accelerate) framework. Recent versions of macOS have changed the location of veclib.h; this change adds the new locations to `FindvecLib.cmake`

To test run the following command:

```

BLAS=vecLib python setup.py install --cmake --cmake-only

```

The choice of BLAS library is confirmed in the output:

```

-- Trying to find preferred BLAS backend of choice: vecLib

-- Found vecLib: /Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk/System/Library/Frameworks/Accelerate.framework/Versions/Current/Frameworks/vecLib.framework/Versions/Current/Headers

```

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51288

Reviewed By: jbschlosser

Differential Revision: D26531136

Pulled By: malfet

fbshipit-source-id: ce86807ccbf66973f33b3acb99b7f40cfd182b9b

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52184

`auditwheel` inserts the first 8 characters of the library's sha256 checksum into its name before relocating it into the wheel package. This change adds logic for computing the same short sha sum and embedding it into LazyNVRTC as an alternative name for libnvrtc.so
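
A minimal sketch of computing such a short checksum. The function names are hypothetical, and the `name-hash.ext` pattern in `hashed_name` is an assumption made for illustration, not taken from this PR.

```python
import hashlib


def short_sha256_of_bytes(data, length=8):
    """First `length` hex characters of the sha256 digest of `data`."""
    return hashlib.sha256(data).hexdigest()[:length]


def short_sha256_of_file(path, length=8):
    """Same short digest, computed over a file's contents."""
    with open(path, "rb") as f:
        return short_sha256_of_bytes(f.read(), length)


def hashed_name(stem, ext, short_hash):
    # Assumed naming pattern, e.g. stem "libfoo" and ext ".so" -> "libfoo-<hash>.so".
    return f"{stem}-{short_hash}{ext}"
```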

Fixes https://github.com/pytorch/pytorch/issues/52075

Test Plan: Imported from OSS

Reviewed By: seemethere

Differential Revision: D26417403

Pulled By: malfet

fbshipit-source-id: e366dd22e95e219979f6c2fa39acb11585b34c72

Summary:

Necessary to ensure correct link order, especially if libraries are

linked statically. Otherwise, one might run into:

```

/usr/bin/ld: /usr/local/cuda/lib64/libcublasLt_static.a(libcublasLt_static.a.o): undefined reference to symbol 'cudaStreamWaitEvent@libcudart.so.11.0'

/usr/local/cuda/lib64/libcudart.so: error adding symbols: DSO missing from command line

```

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52243

Reviewed By: seemethere, ngimel

Differential Revision: D26437159

Pulled By: malfet

fbshipit-source-id: 33b8bb5040bda10537833f3ad737f535488452ea

Summary:

Fixes https://github.com/pytorch/pytorch/issues/48831.

- CI image is updated to build hipMAGMA from source and set env MAGMA_HOME.

- CMake is updated to separate different requirements for CUDA versus ROCm MAGMA.

- Some unit tests that become enabled with MAGMA are currently skipped for ROCm due to failures. Fixing these failures will be follow-on work.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51238

Reviewed By: ngimel

Differential Revision: D26184918

Pulled By: malfet

fbshipit-source-id: ada632f1ae7b413e8cae6543fe931dcd46985821

Summary:

Because of the size of our `libtorch_cuda.so`, linking with other hefty binaries presents a problem where 32bit relocation markers are too small and end up overflowing. This PR attempts to break up `torch_cuda` into `torch_cuda_cu` and `torch_cuda_cpp`.

`torch_cuda_cu`: all the files previously in `Caffe2_GPU_SRCS` that are

* pure `.cu` files in `aten`match

* all the BLAS files

* all the THC files, except for THCAllocator.cpp, THCCachingHostAllocator.cpp and THCGeneral.cpp

* all files in `detail`

* LegacyDefinitions.cpp and LegacyTHFunctionsCUDA.cpp

* Register*CUDA.cpp

* CUDAHooks.cpp

* CUDASolver.cpp

* TensorShapeCUDA.cpp

`torch_cuda_cpp`: all other files in `Caffe2_GPU_SRCS`

Accordingly, TORCH_CUDA_API and TORCH_CUDA_BUILD_MAIN_LIB usages are getting split as well to TORCH_CUDA_CU_API and TORCH_CUDA_CPP_API.

To test this locally, you can run `export BUILD_SPLIT_CUDA=ON && python setup.py develop`. In your `build/lib` folder, you should find binaries for both `torch_cuda_cpp` and `torch_cuda_cu`. To see that the SPLIT_CUDA option was toggled, you can grep the Summary of running cmake and make sure `Split CUDA` is ON.

This build option is tested on CI for CUDA 11.1 builds (linux for now, but windows soon).

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49050

Reviewed By: walterddr

Differential Revision: D26114310

Pulled By: janeyx99

fbshipit-source-id: 0180f2519abb5a9cdde16a6fb7dd3171cff687a6

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50760

The SHM transport uses shared-memory-backed ringbuffers to transfer small payloads between processes on the same machine.

It was disabled in v1.6 due to a CMake mishap but we've since realized that it also doesn't work that well in docker and other setups. Enabling it here to see whether CircleCI fails.

ghstack-source-id: 120470890

Test Plan: Exported three times to CircleCI with tests consistently passing

Reviewed By: mrshenli

Differential Revision: D23814828

fbshipit-source-id: f355cb6515776debad536924de4f4d3fbb05a874

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50288

torch::deploy will bundle the objects contained in libtorch-python together with frozenpython into a shared library. Therefore, the libtorch-python objs can't bring with them a dependency on system python.

Buck TARGETS are added throughout the caffe2 tree to make available objects or headers that will be needed by torch::deploy but would have brought unsuitable dependencies if accessed using existing targets.

CMakeLists are modified to separate a torch-python-objs object library which lets torch::deploy compile these objs with the same compile flags as libtorch_python used, but without some of the link-time dependencies such as python.

CudaIPCTypes is moved from libtorch_python to libtorch_cuda because it is really not a python binding, and it statically registers a cuda_ipc_callback which would be duplicated if included in each copy of torch::deploy.

Test Plan: no new functionality, just ensure existing tests continue to pass

Reviewed By: malfet

Differential Revision: D25850785

fbshipit-source-id: b0b81c050cbee04e9de96888f8a09d29238a9db8

Summary:

… library builds, as it is already set in shared library builds from the target that was imported from Caffe2.

This was identified on Windows builds when PyTorch was built in shared Release mode, and a testapp was built with RelWithDebInfo in CMake.

The problem appeared to be that because IMPORTED_LOCATION (in TorchConfig.cmake) and IMPORTED_LOCATION_RELEASE were both set (in Caffe2Targets.cmake), there occurred some confusion in the build as to what was correct. The symptoms are the error:

ninja: error: 'torch-NOTFOUND', needed by 'test_pytorch.exe', missing and no known rule to make it

in a noddy consuming test application.

Fixes https://github.com/pytorch/pytorch/issues/48724

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49173

Reviewed By: malfet

Differential Revision: D25974151

Pulled By: ezyang

fbshipit-source-id: 3454c0d29cbbe7a37608beedaae3efbb624b0479

Summary:

draft enable fast_nvcc.

* cleaned up some non-standard usages

* added fall-back to wrap_nvcc

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49773

Test Plan:

Configuration to enable fast nvcc:

- install and enable `ccache` but delete `.ccache/` folder before each build.

- `TORCH_CUDA_ARCH_LIST=6.0;6.1;6.2;7.0;7.5`

- Toggling `USE_FAST_NVCC=ON/OFF` cmake config and run `cmake --build` to verify the build time.

Initial statistic for a full compilation:

* `cmake --build . -- -j $(nproc)`:

- fast NVCC:

```

real 48m55.706s

user 1559m14.218s

sys 318m41.138s

```

- normal NVCC:

```

real 43m38.723s

user 1470m28.131s

sys 90m46.879s

```

* `cmake --build . -- -j $(nproc/4)`:

- fast NVCC:

```

real 53m44.173s

user 1130m18.323s

sys 71m32.385s

```

- normal NVCC:

```

real 81m53.768s

user 858m45.402s

sys 61m15.539s

```

* Conclusion: fast NVCC doesn't provide much gain when the build is already set to use full CPU utilization; in fact it is **even worse** because of the thread switching.
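
The wall-clock comparison behind this conclusion can be reproduced from the `real` values listed above. This is a minimal sketch; `to_seconds` and `real_time_ratio` are hypothetical helpers, and only the `MmS.SSSs` format shown in the measurements is handled.

```python
import re


def to_seconds(stamp):
    """Convert a `time`-style value such as '48m55.706s' to seconds."""
    match = re.fullmatch(r"(\d+)m(\d+(?:\.\d+)?)s", stamp)
    if match is None:
        raise ValueError(f"unrecognised time value: {stamp!r}")
    return int(match.group(1)) * 60 + float(match.group(2))


def real_time_ratio(fast, normal):
    """Ratio of fast-NVCC to normal-NVCC wall-clock time (below 1.0 means faster)."""
    return to_seconds(fast) / to_seconds(normal)


# "real" figures copied from the measurements above.
runs = {
    "-j $(nproc)": ("48m55.706s", "43m38.723s"),
    "-j $(nproc/4)": ("53m44.173s", "81m53.768s"),
}

for label, (fast, normal) in runs.items():
    print(f"{label}: fast/normal = {real_time_ratio(fast, normal):.2f}")
# -j $(nproc): fast/normal = 1.12
# -j $(nproc/4): fast/normal = 0.66
```

On these numbers, fast NVCC takes about 12% longer at full parallelism and about 34% less wall-clock time at a quarter of the cores.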

Initial statistic for a partial recompile (editing `.cu` files):

* `cmake --build . -- -j $(nproc)`

- fast NVCC:

```

[2021-01-13 18:10:24] [ 86%] Building NVCC (Device) object caffe2/CMakeFiles/torch_cuda.dir/__/aten/src/ATen/native/cuda/torch_cuda_generated_BinaryMiscOpsKernels.cu.o

[2021-01-13 18:11:08] [ 86%] Linking CXX shared library ../lib/libtorch_cuda.so

```

- normal NVCC:

```

[2021-01-13 17:35:40] [ 86%] Building NVCC (Device) object caffe2/CMakeFiles/torch_cuda.dir/__/aten/src/ATen/native/cuda/torch_cuda_generated_BinaryMiscOpsKernels.cu.o

[2021-01-13 17:38:08] [ 86%] Linking CXX shared library ../lib/libtorch_cuda.so

```

* Conclusion: Effective compilation time for a single CU file modification is reduced from about 2min30sec to only about 40sec when compiling for multiple architectures. This shows roughly **4X** speedup using fast NVCC, approaching the theoretical limit of 5X when compiling 5 gencode architectures at the same time.
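
The durations quoted here can be derived from the log timestamps above. This is a minimal sketch that assumes the interval between the "Building NVCC" line and the "Linking CXX" line approximates the compile time of the single `.cu` file; `elapsed_seconds` is a hypothetical helper.

```python
from datetime import datetime

FMT = "%Y-%m-%d %H:%M:%S"


def elapsed_seconds(start, end):
    """Seconds between two log timestamps in the format used above."""
    return (datetime.strptime(end, FMT) - datetime.strptime(start, FMT)).total_seconds()


# Timestamps copied from the two log excerpts above.
fast = elapsed_seconds("2021-01-13 18:10:24", "2021-01-13 18:11:08")
normal = elapsed_seconds("2021-01-13 17:35:40", "2021-01-13 17:38:08")

print(fast, normal, round(normal / fast, 2))
# 44.0 148.0 3.36
```

The raw timestamps give about 3.4X, which the conclusion rounds to 4X.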

Follow up PRs:

- should have a better fallback mechanism that detects whether a build is supported by fast_nvcc up front, instead of dry-running and then falling back on failure.

- performance measurement instrumentation to measure what's the total compile time vs the parallel tasks critical path time.

- figure out why `-j $(nproc)` gives significant sys overhead (`sys 318m41.138s` vs `sys 90m46.879s`) over normal nvcc, guess this is context switching, but not exactly sure

Reviewed By: malfet

Differential Revision: D25692758

Pulled By: walterddr

fbshipit-source-id: c244d07b9b71f146e972b6b3682ca792b38c4457

Summary:

Since version 1.6, oneDNN has provided limited support for AArch64 builds.

This minor change is to detect an AArch64 CPU and permit the use of

`USE_MKLDNN` in that case.

Build flags for oneDNN are also modified accordingly.

Note: oneDNN on AArch64, by default, will use oneDNN's reference C++ kernels.

These are not optimised for AArch64, but oneDNN v1.7 onwards provides support

for a limited set of primitives based on Arm Compute Library.

See: https://github.com/oneapi-src/oneDNN/pull/795

and: https://github.com/oneapi-src/oneDNN/pull/820

for more details. Support for ACL-based oneDNN primitives in PyTorch

will require some further modification.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50400

Reviewed By: izdeby

Differential Revision: D25886589

Pulled By: malfet

fbshipit-source-id: 2c81277a28ad4528c2d2211381e7c6692d952bc1

Summary:

Fixes https://github.com/pytorch/pytorch/issues/21737

With this fix, the TORCH_LIBRARIES variable provides all the necessary static libraries built from the PyTorch repo.

A user program doing a static build can now just link with ${TORCH_LIBRARIES} + MKL + the CUDA runtime.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49458

Reviewed By: mrshenli

Differential Revision: D25895354

Pulled By: malfet

fbshipit-source-id: 8ff47d14ae1f90036522654d4354256ed5151e5c

Summary:

This PR is a step towards enabling cross compilation from x86_64 to arm64.

The following has been added:

1. When cross compilation is detected, compile a local universal fatfile to use as protoc.

2. For the simple compile check in MiscCheck.cmake, make sure to compile the small snippet as a universal binary in order to run the check.

**Test plan:**

Kick off a minimal build on an Intel Mac with the macOS 11 SDK using this command:

```

CMAKE_OSX_ARCHITECTURES=arm64 USE_MKLDNN=OFF USE_QNNPACK=OFF USE_PYTORCH_QNNPACK=OFF BUILD_TEST=OFF USE_NNPACK=OFF python setup.py install

```

(If you run the above command before this change, or without macOS 11 SDK set up, it will fail.)

Then check the platform of the built binaries using this command:

```

lipo -info build/lib/libfmt.a

```

Output:

- Before this PR, running a regular build via `python setup.py install` (instead of using the flags listed above):

```

Non-fat file: build/lib/libfmt.a is architecture: x86_64

```

- Using this PR:

```

Non-fat file: build/lib/libfmt.a is architecture: arm64

```
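
The architecture check can also be automated by parsing the `lipo -info` line. This is a minimal sketch: the "Non-fat file" form is taken from the output above, while the "Architectures in the fat file" form is an assumption about how `lipo` reports universal binaries, and `archs_from_lipo_info` is a hypothetical helper.

```python
def archs_from_lipo_info(line):
    """Extract architecture names from one line of `lipo -info` output."""
    thin_marker = " is architecture: "
    fat_marker = " are: "  # assumed wording for universal (fat) files
    if line.startswith("Non-fat file:") and thin_marker in line:
        return [line.rsplit(thin_marker, 1)[1].strip()]
    if line.startswith("Architectures in the fat file:") and fat_marker in line:
        return line.rsplit(fat_marker, 1)[1].split()
    raise ValueError(f"unrecognised lipo output: {line!r}")
```

For the output shown above, `archs_from_lipo_info("Non-fat file: build/lib/libfmt.a is architecture: arm64")` returns `["arm64"]`.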

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50243

Reviewed By: malfet

Differential Revision: D25849955

Pulled By: janeyx99

fbshipit-source-id: e9853709a7279916f66aa4c4e054dfecced3adb1

Summary:

Since caffe2 and torch have been consolidated, CAFFE2_API should be merged with TORCH_API. Addresses a TODO.

Manually edited some references of the removed `CAFFE2_API`:

* `CONTRIBUTING.md`

* `caffe2/proto/CMakeLists.txt`

* `cmake/ProtoBuf.cmake`

* `c10/macros/Export.h`

* `torch/csrc/WindowsTorchApiMacro.h`

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49496

Reviewed By: malfet, samestep

Differential Revision: D25600726

Pulled By: janeyx99

fbshipit-source-id: 7e068d959e397ac183c097d7e9a9afeca5ddd782

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49201

This unblocks kineto profiler for 1.8 release.

This PR supersedes https://github.com/pytorch/pytorch/pull/48391

Note: this will somewhat increase the size of linux server binaries, because

we add libkineto.a and libcupti_static.a:

-rw-r--r-- 1 jenkins jenkins 1107502 Dec 10 21:16 build/lib/libkineto.a

-rw-r--r-- 1 root root 13699658 Nov 13 2019 /usr/local/cuda/lib64/libcupti_static.a

Test Plan:

CI

https://github.com/pytorch/pytorch/pull/48391

Imported from OSS

Reviewed By: ngimel

Differential Revision: D25480770

fbshipit-source-id: 037cd774f5547d9918d6055ef5cc952a54e48e4c

Summary:

### Pytorch Vec256 ppc64le support

implemented types:

- double

- float

- int16

- int32

- int64

- qint32

- qint8

- quint8

- complex_float

- complex_double

Notes:

All basic vector operations are implemented.

There are a few problems:

- minimum/maximum NaN propagation for ppc64le is missing and was not checked

- complex multiplication, division, sqrt and abs are implemented the same way as in PyTorch x86. They can overflow and have worse precision than the std ones. That's why they were either excluded or tested in a smaller domain range

- precisions of the implemented float math functions

~~Besides, I added CPU_CAPABILITY for power. but as because of quantization errors for DEFAULT I had to undef and use vsx for DEFAULT too~~

#### Details

##### Supported math functions