This PR adds the bare minimum functionality to get torchbind working in an e2e testable way on PT2.

It implements:

* ProxyTensor support

* Simple torch.export support (proxytensor-only path, i.e. non-strict).

* Some tests exercising the path.

Because all this is not fully baked, I hide the functionality behind a feature flag (`enable_torchbind_tracing()`) so it does not affect regular users for now.

Still on the agenda:

* Dynamo support

* Actual FakeMode support

* Mutability support

Hoping to get this first bit in as a standalone, as it will unblock some more extensive experimentation/testing going on internally.

Differential Revision: [D51825372](https://our.internmc.facebook.com/intern/diff/D51825372/)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/117697

Approved by: https://github.com/SherlockNoMad

This adds a function `statically_known_true` for `SymBool` that works

like inductor's `is_expr_static_and_true`. That is, it tries to simplify the

expression to a constant or returns `False` if it cannot be simplified.

This is useful in cases that can be optimized if the condition is met,

but where the condition doesn't affect correctness, so we can avoid adding guards.

I also use this new function in inductor for `FakeTensorUpdater` and

`remove_noop_pass` which both generated unexpected guards previously.
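
To illustrate the distinction, here is a toy model in plain Python rather than the real `ShapeEnv`. The `ToyShapeEnv` class, its `guards` list, and the range-based check are all hypothetical stand-ins; the only point is that a guarding query records a guard, while a "statically known" query answers `False` when it cannot decide and records nothing.

```python
from dataclasses import dataclass, field


@dataclass
class ToyShapeEnv:
    """Hypothetical stand-in for a shape environment (not the PyTorch class)."""
    ranges: dict                     # symbol name -> (lower, upper) bounds
    hints: dict                      # symbol name -> value seen on this run
    guards: list = field(default_factory=list)

    def guard_ge(self, sym: str, k: int) -> bool:
        """Evaluate `sym >= k` using the hint and record a guard on the result."""
        result = self.hints[sym] >= k
        self.guards.append(f"{sym} >= {k}" if result else f"{sym} < {k}")
        return result

    def statically_known_ge(self, sym: str, k: int) -> bool:
        """Return True only if `sym >= k` follows from the known range.

        Never consults the hint and never records a guard; a query that
        cannot be decided conservatively answers False.
        """
        lower, _upper = self.ranges[sym]
        return lower >= k


env = ToyShapeEnv(ranges={"s0": (2, 1000)}, hints={"s0": 8})
fast_path = env.statically_known_ge("s0", 4)   # not provable from the range
provable = env.statically_known_ge("s0", 2)    # provable from the range
guards_after_static = list(env.guards)         # still empty
guarded = env.guard_ge("s0", 4)                # true on this run, adds a guard
```

In this sketch an optimization pass would take its fast path only when the static query returns `True`, and fall back to the general path otherwise, so later runs are never specialized on the hint.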

Pull Request resolved: https://github.com/pytorch/pytorch/pull/117359

Approved by: https://github.com/lezcano

Continuation of #112185, following the design in this [doc](https://docs.google.com/document/d/1ipSxcTzEMMOAPvxP-YJlD5JBZZmIGgh8Q34ixtOUCRo).

Summary:

* Introduce `SubclassSymbolicPolicy` containing separate dynamic dim / constraint policies for the outer and inner tensors

* Expand the automatic dynamic algorithm to recurse into inner tensors and produce one of these for a subclass instance

* Maintain legacy behavior for subclasses by recursively calling `mark_dynamic()` on inner tensors *of the same dim as outer* when `mark_dynamic(outer, ...)` is called

* Addresses this: 6a86cf00ad/torch/_dynamo/variables/builder.py (L1750)

* Add `outer_size` and `outer_stride` arguments to `__tensor_unflatten__()` so that you can find out what symbols were allocated for the outer size / stride (you are expected to return a tensor that compares equal to the outer symbols)

* Signatures now:

```python

# attrs is a list of inner tensor attributes on x; inner_tensor = getattr(x, attr)

# ctx is anything useful for rebuilding the class we want to guard on

attrs, ctx = x.__tensor_flatten__()

...

# inner_tensors is a dict of {attr -> tensor}

# ctx is taken unmodified from flattening and (eventually) guarded on

# outer_size is the expected size of the output; possibly symbolic

# outer_stride is the expected strides of the output; possibly symbolic

y = MySubclass.__tensor_unflatten__(inner_tensors, ctx, outer_size, outer_stride)

# at the __tensor_unflatten__() call-site in PT2, we assert y.shape == outer_size and y.stride() == outer_stride

# the assert simplifies symbols when there are relationships between outer and inner symbols

```

* Size info needed for `NestedTensor` at least, stride info needed for `DTensor` at least

* Punting on `outer_storage_offset` because storage_offset handling is horribly broken in PT2 right now

* ~~Add new `__tensor_mark_dynamic__()` to allow overriding the behavior of mark_dynamic on a per-subclass basis~~ (booted to future work)

* ~~Add guards for tensor subclasses by calling `__tensor_flatten__()` in the guard to test equality on `ctx`~~

* Now handled in #114469

* Next PR: add TENSOR_MATCH guards on inner tensors

Pull Request resolved: https://github.com/pytorch/pytorch/pull/114311

Approved by: https://github.com/ezyang, https://github.com/drisspg, https://github.com/voznesenskym, https://github.com/bdhirsh

Summary:

The primary problem we are setting out to solve here is fake tensor freshness. Before this PR, fake tensors after dynamo represented fake tensors *at the end* of trace, so subsequent retraces like aot_autograd would start off with fake tensors in the wrong (end result) state, rather than their expected fresh state. The solution here is to start a fresh fake mode, and re-fakify the tensors. The nuance comes from ensuring that symbols are uniformly created for the symbolic sizes and strides of the tensor.

This PR is the result of *a lot* of back and forth with ezyang and eellison. Initially, the first pass at this was not super different from what we have in the PR - the broad strokes were the same:

1) We cache source->symbol in shape_env

2) We pass policy objects around, stored at dynamo fakification time, and reused for later fakification

3) We create a new fake mode for backends

(from https://github.com/pytorch/pytorch/pull/113605/files)

This is ugly, and has some layering violations. We detoured our decision making through a few other alternatives. Immutable/mutable fake tensor mode was the most interesting alternative, https://github.com/pytorch/pytorch/pull/113653, and was struck down on concerns of complexity in fake mode combined with it not covering all edge cases. We also detoured on what to do about tensor memoization returning back potentially different tensors than requested, and if that was an anti pattern (it is) we want to hack in with the symbol cache (we don't).

We went back to the drawing board here, but with a few concessions:

1) the cache for source->symbol must live outside of shape_env, for both lifecycle, and layering reasons

2) A good amount of work needs to be done to pipe policy around fake_mode and meta_utils correctly, to cover all the cases (ezyang did this)
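
As a rough illustration of the first concession, the sketch below keeps a source->symbol cache in its own object so that re-fakifying the same sources yields the same symbols. `ToySymbolCache`, `fakify`, and the source strings are hypothetical names for illustration only; this is not the code in the PR.

```python
import itertools


class ToySymbolCache:
    """Hypothetical cache keyed by source name, kept outside any shape env."""

    def __init__(self):
        self._by_source = {}
        self._counter = itertools.count()

    def symbol_for(self, source: str) -> str:
        # Reuse the symbol allocated the first time this source was seen.
        if source not in self._by_source:
            self._by_source[source] = f"s{next(self._counter)}"
        return self._by_source[source]


def fakify(sources, cache):
    """Allocate one symbol per size source, consulting the shared cache."""
    return [cache.symbol_for(src) for src in sources]


cache = ToySymbolCache()
sources = ["L['x'].size()[0]", "L['x'].size()[1]"]  # illustrative source names
first = fakify(sources, cache)    # e.g. during the initial trace
second = fakify(sources, cache)   # e.g. when a backend re-fakifies
```

Because the cache outlives any single fake mode in this sketch, a fresh fake mode can start from clean tensors while still agreeing on which symbol belongs to which source.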

cc penguinwu EikanWang jgong5 Guobing-Chen XiaobingSuper zhuhaozhe blzheng wenzhe-nrv jiayisunx chenyang78 aakhundov kadeng

imported-using-ghimport

Test Plan: Imported from OSS

Reviewed By: huydhn, Chillee

Differential Revision: D51566250

Pulled By: voznesenskym

Pull Request resolved: https://github.com/pytorch/pytorch/pull/114526

Approved by: https://github.com/Chillee, https://github.com/huydhn

The primary problem we are setting out to solve here is fake tensor freshness. Before this PR, fake tensors after dynamo represented fake tensors *at the end* of trace, so subsequent retraces like aot_autograd would start off with fake tensors in the wrong (end result) state, rather than their expected fresh state. The solution here is to start a fresh fake mode, and re-fakify the tensors. The nuance comes from ensuring that symbols are uniformly created for the symbolic sizes and strides of the tensor.

This PR is the result of *a lot* of back and forth with @ezyang and @eellison. Initially, the first pass at this was not super different from what we have in the PR - the broad strokes were the same:

1) We cache source->symbol in shape_env

2) We pass policy objects around, stored at dynamo fakification time, and reused for later fakification

3) We create a new fake mode for backends

(from https://github.com/pytorch/pytorch/pull/113605/files)

This is ugly, and has some layering violations. We detoured our decision making through a few other alternatives. Immutable/mutable fake tensor mode was the most interesting alternative, https://github.com/pytorch/pytorch/pull/113653, and was struck down on concerns of complexity in fake mode combined with it not covering all edge cases. We also detoured on what to do about tensor memoization returning back potentially different tensors than requested, and if that was an anti pattern (it is) we want to hack in with the symbol cache (we don't).

We went back to the drawing board here, but with a few concessions:

1) the cache for source->symbol must live outside of shape_env, for both lifecycle, and layering reasons

2) A good amount of work needs to be done to pipe policy around fake_mode and meta_utils correctly, to cover all the cases (@ezyang did this)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/113926

Approved by: https://github.com/ezyang, https://github.com/eellison

Thanks aakhundov for constructing the test case. This PR was constructed by running the failing test case, and then fixing problems until we got all the way to the end. There are a few distinct fixes:

* AOTAutograd performs equality tests on tensor metadata to determine if a metadata mutation had occurred. If we test i0 vs i1, we should report these are NOT equal, since obviously we have somehow resized the tensor from i0 to i1 (even if, on a particular run, it is possible i0 == i1).

* There's a sketchy fix for `test_aot_autograd_exhaustive_matmul_cpu_float32` where we check if the output shape equals the tangent shape. Unfortunately, the same `definitely_true` treatment does not work here, it still fails on the example. I piled an extra sketchy fix on top of it, where I just try my best to avoid doing the view. Maybe we should have some sort of logging here.

* Partitioner needs to get out a size for unbacked SymInt when partitioning. I just feed it a random heuristic value in this case, similar to how we've been dealing with this in Inductor.

Signed-off-by: Edward Z. Yang <ezyang@meta.com>

Pull Request resolved: https://github.com/pytorch/pytorch/pull/113159

Approved by: https://github.com/aakhundov, https://github.com/bdhirsh

We spend somewhere on the order of 1% in `sympy.Expr.free_symbols` as it is called millions of times.

Most of the time we actually just want to know "is this a constant", however `e.is_constant()` is

horribly slow. It turns out though that there is another property `is_number` that does what we want.

> property is_number:

>

> Returns True if self has no free symbols and no undefined functions (AppliedUndef, to be precise). It will be faster

> than if not self.free_symbols, however, since is_number will fail as soon as it hits a free symbol or undefined

> function.

Even further, we also avoid the overhead of building the unnecessary set object.
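
The difference between the two checks can be seen on a toy expression tree. This is a plain-Python sketch, not SymPy's implementation; `Sym`, `Add`, and both functions are hypothetical and only model the idea that one approach builds a full set while the other stops at the first symbol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sym:
    name: str


@dataclass(frozen=True)
class Add:
    args: tuple


def free_symbols(e) -> set:
    """Collect every symbol; always walks the whole tree and builds a set."""
    if isinstance(e, Sym):
        return {e.name}
    if isinstance(e, Add):
        out = set()
        for a in e.args:
            out |= free_symbols(a)
        return out
    return set()


def is_number(e) -> bool:
    """Short-circuit: stop at the first symbol and allocate no set."""
    if isinstance(e, Sym):
        return False
    if isinstance(e, Add):
        return all(is_number(a) for a in e.args)
    return True


const = Add((1, Add((2, 3))))
symbolic = Add((Sym("s0"), 1, 2))
```

Both checks agree on the answer to "is this a constant"; the short-circuiting one simply does less work when a symbol appears early.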

Pull Request resolved: https://github.com/pytorch/pytorch/pull/112688

Approved by: https://github.com/lezcano

This PR:

- Moves TrueDiv, LShift, RShift, IsNonOverlappingAndDenseIndicator to `_sympy.functions.py`

- Moves SymNode to `fx.experimental.sym_node`.

- This file does not have any SymPy dependencies at import time

- It installs the magic methods in Sym{Bool,Int,Float}.

- N.b. With this split, we may be able to move Sym{Bool,Int,Float} to this file, and remove quite a few of the hacks around these classes

- Imports `sym_node` in `torch/__init__.py` rather than the whole `symbolic_shapes.py`.

This breaks the import-time dependency between torch and SymPy

Pull Request resolved: https://github.com/pytorch/pytorch/pull/112037

Approved by: https://github.com/peterbell10

ghstack dependencies: #112035, #112036

This PR supports sym_ite. This is useful for converting SymBool to SymInt in e.g. #109916. Internally, it uses sympy.Piecewise. We cannot use sympy.ITE because it expects the arguments and output all to be boolean type, but we want to return a SymInt when converting a SymBool to SymInt. So we use sympy.Piecewise to denote the symbolic relationship.

Note that this PR uses the range analysis for sympy.Piecewise implemented in https://github.com/pytorch/pytorch/blob/main/torch/utils/_sympy/value_ranges.py.

Test Plan:

See added test.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/111440

Approved by: https://github.com/ezyang

Add non-package python modules to the public API checks.

The original change is to remove the `ispkg` check in this line

https://github.com/pytorch/pytorch/blob/main/docs/source/conf.py#L518

Everything else is to add the appropriate modules to the rst files, make sure every module we provide can be imported (fixed by either making optional dependencies optional or just deleting files that have been un-importable for 3 years), make API that are both modules and functions (like torch.autograd.gradcheck) properly rendered on the docs website without confusion and add every non-documented API to the allow list (~3k of them).

Next steps will be to try and fix these missing docs

Pull Request resolved: https://github.com/pytorch/pytorch/pull/110568

Approved by: https://github.com/zou3519

As per title.

Note that the c++ side code for the minidumps part was removed. So trying to call any of these 3 functions today results in an error saying that `torch._C` doesn't have these attributes.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/105142

Approved by: https://github.com/janeyx99

We want to make TorchRec sharded models TorchScriptable.

TorchRec sharded models use the generic types Awaitable[W] and LazyAwaitable[W] (https://github.com/pytorch/torchrec/blob/main/torchrec/distributed/types.py#L212).

In a sharded model those types are used in place of the contained type W, and carry an initialization function that produces an object of type W.

When the first attribute of W is requested, `LazyAwaitable[W]` calls its initialization function (on the same stack), caches the result, and from then on works transparently as an object of W. So we can think about it as a delayed object initialization.

To support this behavior in TorchScript - we propose a new type to TorchScript - `Await`.

In eager mode it works the same as `LazyAwaitable[W]` in TorchRec, being dynamically typed - acting as a type `W` while it is `Await[W]`.

Within TorchScript it is `Await[W]` and can only be converted to W explicitly, using the special function `torch.jit._awaitable_wait(aw)`.

An `Await[W]` is created via another special function, `torch.jit._awaitable(func, *args)`.

The semantics are close to `torch.jit.Future`, fork, and wait, and use the same JIT mechanics (inlined fork closures), with the difference that the function is not started in parallel on fork. It is only stored as a lambda inside an IValue and is called on the same thread when `torch.jit._awaitable_wait` is called.

For example (more examples in this PR `test/jit/test_await.py`)

```

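import torch
from torch import Tensor
from torch._awaits import _Await as Await

def delayed(z: Tensor) -> Tensor:
    return z * 3

@torch.jit.script
def fn(x: Tensor):
    # Creating the Await does not run `delayed`.
    aw: Await[Tensor] = torch.jit._awaitable(delayed, x)
    a = torch.eye(2)
    # `delayed` runs here, on the same thread.
    b = torch.jit._awaitable_wait(aw)
    return a + b + x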

```

Functions semantics:

`_awaitable(func -> Callable[Tuple[...], W], *args, **kwargs) -> Await[W]`

Creates an Await object that owns args and kwargs. Once `_awaitable_wait` is called, it executes the function func and owns the result. Subsequent `_awaitable_wait` calls return the result of that first call.

`_awaitable_wait(Await[W]) -> W`

Calls the specified function if this is the first `_awaitable_wait` call on this Await object; otherwise returns the cached result of type W.

`_awaitable_nowait(W) -> Await[W]`

Creates a trivial Await[W] wrapper around the specified object, to be type compliant in corner cases.
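
The three functions above can be modelled in eager Python as a lazily evaluated, cached call. This is only a sketch of the described semantics; `ToyAwait` and `toy_awaitable_nowait` are hypothetical names and are not the TorchScript implementation.

```python
from typing import Any, Callable, Generic, TypeVar

W = TypeVar("W")


class ToyAwait(Generic[W]):
    """Hypothetical eager-mode model of Await[W]; not the TorchScript type."""

    def __init__(self, func: Callable[..., W], *args: Any, **kwargs: Any):
        # The Await owns the function and its arguments until it is waited on.
        self._func, self._args, self._kwargs = func, args, kwargs
        self._done = False
        self._result = None

    def wait(self) -> W:
        # The first call runs func on the caller's thread; later calls
        # return the cached result.
        if not self._done:
            self._result = self._func(*self._args, **self._kwargs)
            self._done = True
        return self._result


def toy_awaitable_nowait(value):
    """Wrap an already-computed value where an Await is expected."""
    return ToyAwait(lambda: value)


calls = []


def delayed(z: int) -> int:
    calls.append(z)
    return z * 3


aw = ToyAwait(delayed, 99)
calls_before_wait = list(calls)   # nothing has run yet
first = aw.wait()
second = aw.wait()
```

Unlike a fork, nothing here runs in parallel: the work happens exactly once, on the thread that first waits.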

Differential Revision: [D42502706](https://our.internmc.facebook.com/intern/diff/D42502706)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/90863

Approved by: https://github.com/davidberard98

We have known for a while that we should in principle support SymBool as a separate concept from SymInt and SymFloat (in particular, every distinct numeric type should get its own API). However, recent work with unbacked SymInts in, e.g., https://github.com/pytorch/pytorch/pull/90985 has made this a priority to implement. The essential problem is that our logic for computing the contiguity of tensors performs branches on the passed in input sizes, and this causes us to require guards when constructing tensors from unbacked SymInts. Morally, this should not be a big deal because we only really care about the regular (non-channels-last) contiguity of the tensor, which should be guaranteed since most people aren't calling `empty_strided` on the tensor. However, because we store a bool (not a SymBool, prior to this PR it doesn't exist) on TensorImpl, we are forced to *immediately* compute these values, even if the value ends up not being used at all. In particular, even when a user allocates a contiguous tensor, we still must compute channels-last contiguity (as some contiguous tensors are also channels-last contiguous, but others are not.)

This PR implements SymBool, and makes TensorImpl use SymBool to store the contiguity information in ExtraMeta. There are a number of knock on effects, which I now discuss below.

* I introduce a new C++ type SymBool, analogous to SymInt and SymFloat. This type supports logical and, logical or and logical negation. I support the bitwise operations on this class (but not the conventional logic operators) to make it clear that logical operations on SymBool are NOT short-circuiting. I also, for now, do NOT support implicit conversion of SymBool to bool (creating a guard in this case). This does not matter too much in practice, as in this PR I did not modify the equality operations (e.g., `==` on SymInt) to return SymBool, so all preexisting implicit guards did not need to be changed. I also introduced symbolic comparison functions `sym_eq`, etc. on SymInt to make it possible to create SymBool. The current implementation of comparison functions makes it unfortunately easy to accidentally introduce guards when you do not mean to (as both `s0 == s1` and `s0.sym_eq(s1)` are valid spellings of equality operation); in the short term, I intend to prevent excess guarding in this situation by unit testing; in the long term making the equality operators return SymBool is probably the correct fix.

* ~~I modify TensorImpl to store SymBool for the `is_contiguous` fields and friends on `ExtraMeta`. In practice, this essentially meant reverting most of the changes from https://github.com/pytorch/pytorch/pull/85936 . In particular, the fields on ExtraMeta are no longer strongly typed; at the time I was particularly concerned about the giant lambda I was using as the setter getting a desynchronized argument order, but now that I have individual setters for each field the only "big list" of boolean arguments is in the constructor of ExtraMeta, which seems like an acceptable risk. The semantics of TensorImpl are now that we guard only when you actually attempt to access the contiguity of the tensor via, e.g., `is_contiguous`. By in large, the contiguity calculation in the implementations now needs to be duplicated (as the boolean version can short circuit, but the SymBool version cannot); you should carefully review the duplicate new implementations. I typically use the `identity` template to disambiguate which version of the function I need, and rely on overloading to allow for implementation sharing. The changes to the `compute_` functions are particularly interesting; for most of the functions, I preserved their original non-symbolic implementation, and then introduce a new symbolic implementation that is branch-less (making use of our new SymBool operations). However, `compute_non_overlapping_and_dense` is special, see next bullet.~~ This appears to cause performance problems, so I am leaving this to an update PR.

* (Update: the Python side pieces for this are still in this PR, but they are not wired up until later PRs.) While the contiguity calculations are relatively easy to write in a branch-free way, `compute_non_overlapping_and_dense` is not: it involves a sort on the strides. While in principle we can still make it go through by using a data oblivious sorting network, this seems like too much complication for a field that is likely never used (because typically, it will be obvious that a tensor is non overlapping and dense, because the tensor is contiguous.) So we take a different approach: instead of trying to trace through the logic computation of non-overlapping and dense, we instead introduce a new opaque operator IsNonOverlappingAndDenseIndicator which represents all of the compute that would have been done here. This function returns an integer 0 if `is_non_overlapping_and_dense` would have returned `False`, and an integer 1 otherwise, for technical reasons (Sympy does not easily allow defining custom functions that return booleans). The function itself only knows how to evaluate itself if all of its arguments are integers; otherwise it is left unevaluated. This means we can always guard on it (as `size_hint` will always be able to evaluate through it), but otherwise its insides are left a black box. We typically do NOT expect this custom function to show up in actual boolean expressions, because we will typically shortcut it due to the tensor being contiguous. It's possible we should apply this treatment to all of the other `compute_` operations, more investigation necessary. As a technical note, because this operator takes a pair of a list of SymInts, we need to support converting `ArrayRef<SymNode>` to Python, and I also unpack the pair of lists into a single list because I don't know if Sympy operations can actually validly take lists of Sympy expressions as inputs. See for example `_make_node_sizes_strides`

* On the Python side, we also introduce a SymBool class, and update SymNode to track bool as a valid pytype. There is some subtlety here: bool is a subclass of int, so one has to be careful about `isinstance` checks (in fact, in most cases I replaced `isinstance(x, int)` with `type(x) is int` for expressly this reason.) Additionally, unlike C++, I do NOT define bitwise inverse on SymBool, because it does not do the correct thing when run on booleans, e.g., `~True` is `-2`. (For that matter, they don't do the right thing in C++ either, but at least in principle the compiler can warn you about it with `-Wbool-operation`, and so the rule is simple in C++; only use logical operations if the types are statically known to be SymBool). Alas, logical negation is not overridable, so we have to introduce `sym_not` which must be used in place of `not` whenever a SymBool can turn up. To avoid confusion with `__not__` which may imply that `operator.__not__` might be acceptable to use (it isn't), our magic method is called `__sym_not__`. The other bitwise operators `&` and `|` do the right thing with booleans and are acceptable to use.

* There is some annoyance working with booleans in Sympy. Unlike int and float, booleans live in their own algebra and they support fewer operations than regular numbers. In particular, `sympy.expand` does not work on them. To get around this, I introduce `safe_expand` which only calls expand on operations which are known to be expandable.
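
The Python-side subtleties in the bullets above are easy to check with plain built-ins. The helper `pytype_of` is a hypothetical name used only for illustration; the rest uses standard Python behaviour. Newer Python versions emit a `DeprecationWarning` for `~` on a bool, so the sketch silences it.

```python
import warnings

# bool is a subclass of int, so isinstance cannot tell them apart.
is_subclass = issubclass(bool, int)
isinstance_says_int = isinstance(True, int)
exact_type_says_int = type(True) is int

# Bitwise inverse on a Python bool is integer inversion, not logical negation.
flag = True
with warnings.catch_warnings():
    warnings.simplefilter("ignore", DeprecationWarning)
    inverted = ~flag

# `&` and `|` stay within bool when both operands are bool.
and_result = True & False
or_result = True | False


def pytype_of(x):
    """Classify a constant with exact type checks, so bool is never seen as int."""
    if type(x) is bool:
        return bool
    if type(x) is int:
        return int
    if type(x) is float:
        return float
    raise TypeError(f"unsupported constant: {x!r}")
```

This is why `isinstance(x, int)` is unsafe once bool is a valid pytype, and why `~` cannot serve as logical negation on the Python side.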

TODO: this PR appears to greatly regress performance of symbolic reasoning. In particular, `python test/functorch/test_aotdispatch.py -k max_pool2d` performs really poorly with these changes. Need to investigate.
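
For reference, the computation that `IsNonOverlappingAndDenseIndicator` stands in for can be sketched in plain Python for concrete integers. This is a minimal sketch of the usual stride-sorting check, assuming the arguments arrive as one flat list of sizes followed by strides, as described in the bullet above; the function name and error handling are hypothetical and this is not the code in the PR.

```python
def non_overlapping_and_dense_indicator(*args) -> int:
    """Return 1 if the layout is non-overlapping and dense, else 0.

    `args` is one flat list: all sizes first, then all strides. The check is
    only evaluated when every argument is a concrete int.
    """
    if not all(type(v) is int for v in args):
        raise TypeError("left unevaluated: all sizes and strides must be ints")
    dim = len(args) // 2
    sizes, strides = args[:dim], args[dim:]
    # Dimensions of size 0 or 1 never constrain the layout, so drop them,
    # then visit the rest from the smallest stride to the largest.
    dims = sorted((st, sz) for sz, st in zip(sizes, strides) if sz >= 2)
    expected_stride = 1
    for stride, size in dims:
        if stride != expected_stride:
            return 0  # a gap or an overlap between dimensions
        expected_stride *= size
    return 1


contiguous = non_overlapping_and_dense_indicator(2, 3, 3, 1)
transposed = non_overlapping_and_dense_indicator(3, 2, 1, 3)
overlapping = non_overlapping_and_dense_indicator(2, 3, 1, 1)
gapped = non_overlapping_and_dense_indicator(2, 3, 6, 2)
unit_dim = non_overlapping_and_dense_indicator(1, 4, 100, 1)
```

The sort is what makes this awkward to trace symbolically, which is the motivation given above for treating the whole computation as an opaque function that returns 0 or 1.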

Signed-off-by: Edward Z. Yang <ezyang@meta.com>

Pull Request resolved: https://github.com/pytorch/pytorch/pull/92149

Approved by: https://github.com/albanD, https://github.com/Skylion007

This refactor was prompted by challenges handling mixed int/float

operations in C++. A previous version of this patch

added overloads for each permutation of int/float and was unwieldy

https://github.com/pytorch/pytorch/pull/87722/ This PR takes a different

approach.

The general outline of the patch is to combine the C++ types SymIntNode

and SymFloatNode into a single type, SymNode. This is type erased; we

no longer know statically at C++ if we have an int/float and have to test

it with the is_int()/is_float() virtual methods. This has a number of

knock on effects.

- We no longer have C++ classes to bind to Python. Instead, we take an

entirely new approach to our Python API, where we have a SymInt/SymFloat

class defined entirely in Python, which hold a SymNode (which corresponds

to the C++ SymNode). However, SymNode is not pybind11-bound; instead,

it lives as-is in Python, and is wrapped into C++ SymNode using PythonSymNode

when it goes into C++. This implies a userland rename.

In principle, it is also possible for the canonical implementation of SymNode

to be written in C++, and then bound to Python with pybind11 (we have

this code, although it is commented out.) However, I did not implement

this as we currently have no C++ implementations of SymNode.

Because we do return SymInt/SymFloat from C++ bindings, the C++ binding

code needs to know how to find these classes. Currently, this is done

just by manually importing torch and getting the attributes.

- Because SymInt/SymFloat are easy Python wrappers, __sym_dispatch__ now

takes SymInt/SymFloat, rather than SymNode, bringing it in line with how

__torch_dispatch__ works.

Some miscellaneous improvements:

- SymInt now has a constructor that takes SymNode. Note that this

constructor is ambiguous if you pass in a subclass of SymNode,

so an explicit downcast is necessary. This means toSymFloat/toSymInt

are no more. This is a mild optimization as it means rvalue reference

works automatically.

- We uniformly use the caster for c10::SymInt/SymFloat, rather than

going the long way via the SymIntNode/SymFloatNode.

- Removed some unnecessary toSymInt/toSymFloat calls in normalize_*

functions, pretty sure this doesn't do anything.

- guard_int is now a free function, since to guard on an int you cannot

assume the method exists. A function can handle both int and SymInt

inputs.

- We clean up the magic method definition code for SymInt/SymFloat/SymNode.

ONLY the user classes (SymInt/SymFloat) get magic methods; SymNode gets

plain methods; this is to help avoid confusion between the two types.
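
The layering described above can be sketched in plain Python. All names here (`ToySymNode`, `ToySymInt`, and this `guard_int`) are hypothetical stand-ins that only model the shape of the design: a type-erased node with plain methods, a user-facing wrapper with magic methods, and a free function that accepts both plain ints and wrapped values.

```python
class ToySymNode:
    """Hypothetical type-erased node: one class for int and float payloads."""

    def __init__(self, value):
        self._value = value

    def is_int(self) -> bool:
        return type(self._value) is int

    def is_float(self) -> bool:
        return type(self._value) is float

    def add(self, other: "ToySymNode") -> "ToySymNode":
        # Plain method: the node itself defines no magic methods.
        return ToySymNode(self._value + other._value)

    def guard_int(self) -> int:
        assert self.is_int()
        return self._value


class ToySymInt:
    """User-facing wrapper; only this class gets magic methods."""

    def __init__(self, node: ToySymNode):
        self.node = node

    def __add__(self, other):
        other_node = other.node if isinstance(other, ToySymInt) else ToySymNode(other)
        return ToySymInt(self.node.add(other_node))


def guard_int(x) -> int:
    """Free function: works for both plain ints and ToySymInt."""
    if isinstance(x, ToySymInt):
        return x.node.guard_int()
    return x


plain = guard_int(3)
wrapped = guard_int(ToySymInt(ToySymNode(4)) + 1)
```

Because the node is type erased, callers test `is_int()` / `is_float()` at runtime instead of relying on a static type.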

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

cc @jansel @mlazos @soumith @voznesenskym @yanboliang @penguinwu @anijain2305

Pull Request resolved: https://github.com/pytorch/pytorch/pull/87817

Approved by: https://github.com/albanD, https://github.com/anjali411

This is a new version of #15648 based on the latest master branch.

Unlike the previous PR where I fixed a lot of the doctests in addition to integrating xdoctest, I'm going to reduce the scope here. I'm simply going to integrate xdoctest, and then I'm going to mark all of the failing tests as "SKIP". This will let xdoctest run on the dashboards, provide some value, and still let the dashboards pass. I'll leave fixing the doctests themselves to another PR.

In my initial commit, I do the bare minimum to get something running with failing dashboards. The few tests that I marked as skip are causing segfaults. Running xdoctest results in 293 failed, 201 passed tests. The next commits will be to disable those tests. (unfortunately I don't have a tool that will insert the `#xdoctest: +SKIP` directive over every failing test, so I'm going to do this mostly manually.)

Fixes https://github.com/pytorch/pytorch/issues/71105

@ezyang

Pull Request resolved: https://github.com/pytorch/pytorch/pull/82797

Approved by: https://github.com/ezyang

Done via

```

git grep -l 'SymbolicIntNode' | xargs sed -i 's/SymbolicIntNode/SymIntNodeImpl/g'

```

Reasoning for the change:

* Sym is shorter than Symbolic, and consistent with SymInt

* You usually will deal in shared_ptr<...>, so we're going to

reserve the shorter name (SymIntNode) for the shared pointer.

But I don't want to update the Python name, so afterwards I ran

```

git grep -l _C.SymIntNodeImpl | xargs sed -i 's/_C.SymIntNodeImpl/_C.SymIntNode/'

```

and manually fixed up the binding code

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Pull Request resolved: https://github.com/pytorch/pytorch/pull/82350

Approved by: https://github.com/Krovatkin

This PR adds support for `SymInt`s in python. Namely,

* `THPVariable_size` now returns `sym_sizes()`

* python arg parser is modified to parse PyObjects into ints and `SymbolicIntNode`s

* pybind11 bindings for `SymbolicIntNode` are added, so size expressions can be traced

* a large number of tests added to demonstrate how to implement python symints.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/78135

Approved by: https://github.com/ezyang

Summary:

Following https://github.com/pytorch/rfcs/blob/master/RFC-0019-Extending-PyTorch-Quantization-to-Custom-Backends.md we implemented

the backend configuration for the fbgemm/qnnpack backends. It currently lives under the fx folder, but we'd like to use it for all

workflows, including eager, fx graph, and define-by-run quantization. This PR moves it to the torch.ao.quantization namespace so that

it can be shared by the different workflows.

Also moves some utility functions specific to fx to fx/backend_config_utils.py and some files are kept in fx folder (quantize_handler.py and fuse_handler.py)

Test Plan:

python test/test_quantization.py TestQuantizeFx

python test/test_quantization.py TestQuantizeFxOps

python test/test_quantization.py TestQuantizeFxModels

python test/test_quantization.py TestAOMigrationQuantization

python test/test_quantization.py TestAOMigrationQuantizationFx

Pull Request resolved: https://github.com/pytorch/pytorch/pull/75823

Approved by: https://github.com/vkuzo

Summary:

Working towards https://docs.google.com/document/d/10yx2-4gs0gTMOimVS403MnoAWkqitS8TUHX73PN8EjE/edit?pli=1#

This PR:

- Ensure that all the submodules are listed in a rst file (this ensures they are considered by the coverage tool)

- Remove some long deprecated code that just errors out on import

- Remove the allow list altogether to ensure nothing gets added back there

Pull Request resolved: https://github.com/pytorch/pytorch/pull/73983

Reviewed By: anjali411

Differential Revision: D34787908

Pulled By: albanD

fbshipit-source-id: 163ce61e133b12b2f2e1cbe374f979e3d6858db7

(cherry picked from commit c9edfead7a01dc45bfc24eaf7220d2a84ab1f62e)

PR #72405 added four new types to the public python API:

`torch.ComplexFloatTensor`, `torch.ComplexDoubleTensor`,

`torch.cuda.ComplexFloatTensor` and `torch.cuda.ComplexDoubleTensor`.

I believe this was unintentional and a clarifying comment as to the

purpose of `all_declared_types` is needed to avoid this in future.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/73370

Summary:

This pull request introduces `SoftplusTransform` to `torch.distributions.transforms`. `SoftplusTransform` transforms via the mapping `Softplus(x) = log(1 + exp(x))`. Note that the transform is different to [`torch.nn.Softplus`](https://pytorch.org/docs/stable/generated/torch.nn.Softplus.html#torch.nn.Softplus), as that has additional `beta` and `threshold` parameters. Inverse and `log_abs_det_jacobian` for a more complex `SoftplusTransform` can be added in the future.
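
For scalars, the mapping and its inverse can be sketched with the standard library as follows. This is not the PR's implementation; the function names are illustrative, and the rearrangements are standard identities used to avoid overflow.

```python
import math


def softplus(x: float) -> float:
    """Softplus(x) = log(1 + exp(x)), arranged to avoid overflow for large x."""
    # log(1 + exp(x)) == max(x, 0) + log1p(exp(-|x|))
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def softplus_inverse(y: float) -> float:
    """Inverse map x = log(exp(y) - 1), defined for y > 0."""
    # log(exp(y) - 1) == y + log(1 - exp(-y)) == y + log(-expm1(-y))
    return y + math.log(-math.expm1(-y))


def log_abs_det_jacobian(x: float) -> float:
    """log|d softplus / dx| = log(sigmoid(x)) = -softplus(-x)."""
    return -softplus(-x)
```

The derivative of `log(1 + exp(x))` is the logistic sigmoid, which is why the log-Jacobian reduces to `-softplus(-x)`.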

vitkl fritzo

Addresses the issue discussed here: [numpyro issue 855](https://github.com/pyro-ppl/numpyro/issues/855)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52300

Reviewed By: albanD, ejguan

Differential Revision: D34082655

Pulled By: neerajprad

fbshipit-source-id: 6114e74ee5d73c1527191bed612a142d691e2094

(cherry picked from commit a181a3a9e53a34214a503d38760ad7778d08a680)

Summary:

Fixes pytorch/pytorch.github.io#929

The pytorch doc team would like to move to only major.minor documentation at https://pytorch.org/docs/versions.html, not major.minor.patch. This has been done in the CI scripts, but the generated documentation still has the patch version. Remove it when building RELEASE documentation. This allows simplifying the logic, using `'.'.join(torch_version.split('.')[:2])` since we no longer care about trimming off the HASH: it automatically gets removed.
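
As a quick illustration of why the hash needs no separate handling, the expression can be wrapped in a helper. `docs_version` is a hypothetical name and the version strings in the comments are made-up examples.

```python
def docs_version(torch_version: str) -> str:
    """Keep only major.minor; the patch number and any trailing hash drop out."""
    return '.'.join(torch_version.split('.')[:2])


release = docs_version("1.11.0")               # plain release version
dev_build = docs_version("1.11.0a0+git1234abc")  # dev version with a hash suffix
```

The hash lives in the third dot-separated component, so keeping the first two components discards it together with the patch number.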

holly1238, brianjo

Pull Request resolved: https://github.com/pytorch/pytorch/pull/72706

Reviewed By: samdow

Differential Revision: D34215815

Pulled By: albanD

fbshipit-source-id: 8437036cc6636674d9ab8b1666f37b561d0527e1

(cherry picked from commit d8caf988f9)

Summary:

This PR adds a transform that uses the cumulative distribution function of a given probability distribution.

For example, the following code constructs a simple Gaussian copula.

```python

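import torch
from torch.distributions import LKJCholesky, MultivariateNormal, Normal, TransformedDistribution
from torch.distributions.transforms import CumulativeDistributionTransform

# Construct a Gaussian copula from a multivariate normal.
base_dist = MultivariateNormal(
    loc=torch.zeros(2),
    scale_tril=LKJCholesky(2).sample(),
)
transform = CumulativeDistributionTransform(Normal(0, 1))
copula = TransformedDistribution(base_dist, [transform])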

```

The following snippet creates a "wrapped" Gaussian copula for correlated positive variables with Weibull marginals.

```python
from torch.distributions import Weibull

# Reuses base_dist from the snippet above.
transforms = [
    CumulativeDistributionTransform(Normal(0, 1)),
    CumulativeDistributionTransform(Weibull(4, 2)).inv,
]
wrapped_copula = TransformedDistribution(base_dist, transforms)
```

cc fritzo

Pull Request resolved: https://github.com/pytorch/pytorch/pull/72495

Reviewed By: ejguan

Differential Revision: D34085919

Pulled By: albanD

fbshipit-source-id: 7917391519a96b0d9b54c52db65d1932f961d070

(cherry picked from commit 572196146e)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/72499

Pull Request resolved: https://github.com/pytorch/benchmark/pull/740

Move fx2trt out of tree to reduce bloat in PyTorch core.

It's the first and major step. Next, we will move acc_tracer out of the tree and rearrange some fx passes.

Reviewed By: suo

Differential Revision: D34065866

fbshipit-source-id: c72b7ad752d0706abd9a63caeef48430e85ec56d

(cherry picked from commit 91647adbca)

Summary:

**Summary:** This commit adds the `torch.nn.qat.dynamic.modules.Linear` module, the dynamic counterpart to `torch.nn.qat.modules.Linear`. Functionally these are very similar, except the dynamic version expects a memoryless observer and is converted into a dynamically quantized module before inference.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/67325

Test Plan:

`python3 test/test_quantization.py TestQuantizationAwareTraining.test_dynamic_qat_linear`

**Reviewers:** Charles David Hernandez, Jerry Zhang

**Subscribers:** Charles David Hernandez, Supriya Rao, Yining Lu

**Tasks:** 99696812

**Tags:** pytorch

Reviewed By: malfet, jerryzh168

Differential Revision: D32178739

Pulled By: andrewor14

fbshipit-source-id: 5051bdd7e06071a011e4e7d9cc7769db8d38fd73

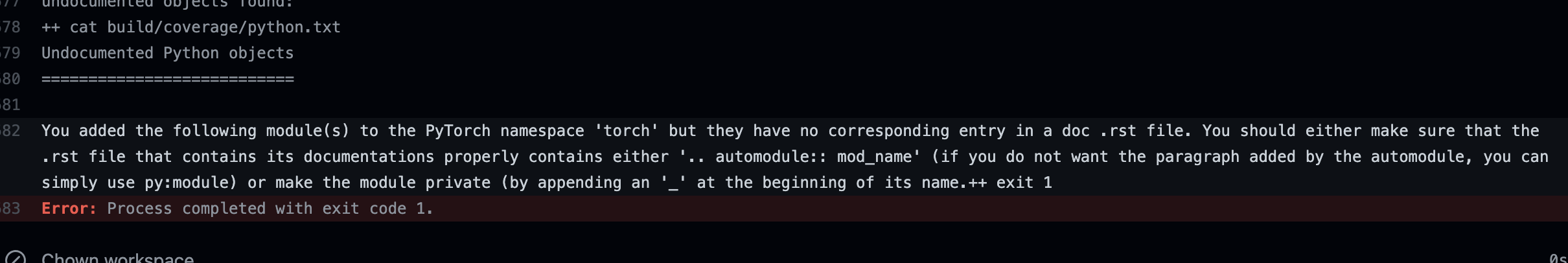

Summary:

Add check to make sure we do not add new submodules without documenting them in an rst file.

This is especially important because our doc coverage only runs for modules that are properly listed.

Temporarily removed "torch" from the list to make sure the failure in CI looks as expected. EDIT: fixed now.

This is what a CI failure looks like for the top level torch module as an example:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/67440

Reviewed By: jbschlosser

Differential Revision: D32005310

Pulled By: albanD

fbshipit-source-id: 05cb2abc2472ea4f71f7dc5c55d021db32146928

Summary:

This reduces the chance of newly added functions being ignored by mistake.

The only test that this impacts is the coverage test that runs as part of the python doc build. So if that one works, it means that the update to the list here is correct.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/67395

Reviewed By: jbschlosser

Differential Revision: D31991936

Pulled By: albanD

fbshipit-source-id: 5b4ce7764336720827501641311cc36f52d2e516

Summary:

Sphinx 4.x is out, but it seems to require many more changes to adopt. So instead use the latest version of 3.x, which includes several nice features.

* Add some noindex directives to deal with warnings that would otherwise be triggered by this change due to conflicts between the docstrings declaring a function and the autodoc extension declaring the same function.

* Update distributions.utils.lazy_property to make it look like a regular property when sphinx autodoc inspects classes.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61601

Reviewed By: ejguan

Differential Revision: D29801876

Pulled By: albanD

fbshipit-source-id: 544d2434a15ceb77bff236e934dbd8e4dbd9d160

Summary:

CI built the documentation for the recent 1.9.0rc1 tag, but left the git version in the `version`, so (as of now) going to https://pytorch.org/docs/1.9.0/index.html and looking at the version in the upper-left corner shows "1.9.0a0+git5f0bbb3" not "1.9.0". This PR should change that to cut off everything after and including the "a".
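The trimming described above can be sketched as follows. The helper name is hypothetical and the actual change in the docs configuration may be written differently; the sketch only shows the "cut at the first `a`" rule applied to the version string quoted in the text.

```python
def strip_dev_suffix(version: str) -> str:
    """Drop the first "a" and everything after it, if present."""
    # "1.9.0a0+git5f0bbb3" splits into ["1.9.0", "0+git5f0bbb3"];
    # a clean "1.9.0" has no "a" and is returned unchanged.
    return version.split("a", 1)[0]


shown_version = strip_dev_suffix("1.9.0a0+git5f0bbb3")
```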

It should be cherry-picked to the release/1.9 branch so that the next rc will override the current documentation with a "cleaner" version.

brianjo

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58486

Reviewed By: zou3519

Differential Revision: D28640476

Pulled By: malfet

fbshipit-source-id: 9fd1063f4a2bc90fa8c1d12666e8c0de3d324b5c

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61556

Prior to 1.10.0 `torch.__version__` was stored as a str, so many users compared against `torch.__version__` as if it were a str. In order not to break them, we have TorchVersion, which masquerades as a str while also being able to compare against both packaging.version.Version and tuples of values, e.g. (1, 2, 1).

Examples:

Comparing a TorchVersion object to a Version object

```
from packaging.version import Version
from torch.torch_version import TorchVersion

TorchVersion('1.10.0a') > Version('1.10.0a')
```

Comparing a TorchVersion object to a Tuple object

```
TorchVersion('1.10.0a') > (1, 2)    # 1.2
TorchVersion('1.10.0a') > (1, 2, 1) # 1.2.1
```

Comparing a TorchVersion object against a string

```
TorchVersion('1.10.0a') > '1.2'
TorchVersion('1.10.0a') > '1.2.1'
```
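To illustrate the idea of a str subclass that compares numerically, here is a minimal standalone sketch. `SketchVersion` is a hypothetical class, not the real `TorchVersion`: it only looks at the leading numeric components and ignores pre-release ordering, which the real class delegates to `packaging`.

```python
import re


class SketchVersion(str):
    """Hypothetical str subclass that compares by numeric release components."""

    def _release(self):
        # '1.10.0a0+git123' -> (1, 10, 0); non-numeric suffixes are ignored.
        match = re.match(r"\d+(\.\d+)*", self)
        if match is None:
            return ()
        return tuple(int(part) for part in match.group(0).split("."))

    @staticmethod
    def _coerce(other):
        if isinstance(other, tuple):
            return other
        if isinstance(other, str):
            return SketchVersion(other)._release()
        return NotImplemented

    def __gt__(self, other):
        rhs = self._coerce(other)
        return NotImplemented if rhs is NotImplemented else self._release() > rhs

    def __lt__(self, other):
        rhs = self._coerce(other)
        return NotImplemented if rhs is NotImplemented else self._release() < rhs


version = SketchVersion("1.10.0a")
newer_than_tuple = version > (1, 2)
newer_than_string = version > "1.2.1"
plain_str_result = str(version) > "1.2.1"  # lexicographic comparison
```

The last line shows the motivation: a plain str compares character by character, so `"1.10..."` sorts before `"1.2..."`, whereas the subclass compares `(1, 10, 0)` against `(1, 2, 1)`.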

Resolves https://github.com/pytorch/pytorch/issues/61540

Signed-off-by: Eli Uriegas <eliuriegas@fb.com>

Test Plan: Imported from OSS

Reviewed By: zou3519

Differential Revision: D29671234

Pulled By: seemethere

fbshipit-source-id: 6044805918723b4aca60bbec4b5aafc1189eaad7

Summary:

Trying to run the doctests for the complete documentation hangs if it reaches the examples of `torch.futures`. It turns out to be only syntax errors, which are normally just reported. My guess is that `doctest` probably doesn't work well for failures within async stuff.

Anyway, while debugging this, I fixed the syntax.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61029

Reviewed By: mruberry

Differential Revision: D29571923

Pulled By: mrshenli

fbshipit-source-id: bb8112be5302c6ec43151590b438b195a8f30a06

Summary:

brianjo

- Add a javascript snippet to close the expandable left navbar sections 'Notes', 'Language Bindings', 'Libraries', 'Community'

- Fix two latex bugs that were causing output in the log that might have been misleading when looking for true doc build problems

- Change the way release versions interact with sphinx. I tested these via building docs twice: once with `export RELEASE=1` and once without.

- Remove perl scripting to turn the static version text into a link to the versions.html document. Instead, put this where it belongs in the layout.html template. This is the way the domain libraries (text, vision, audio) do it.

- There were two separate templates for master and release, the only difference being that the master template has an admonition "You are viewing unstable developer preview docs....". Instead toggle that with the value of `release`.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53851

Reviewed By: mruberry

Differential Revision: D27085875

Pulled By: ngimel

fbshipit-source-id: c2d674deb924162f17131d895cb53cef08a1f1cb

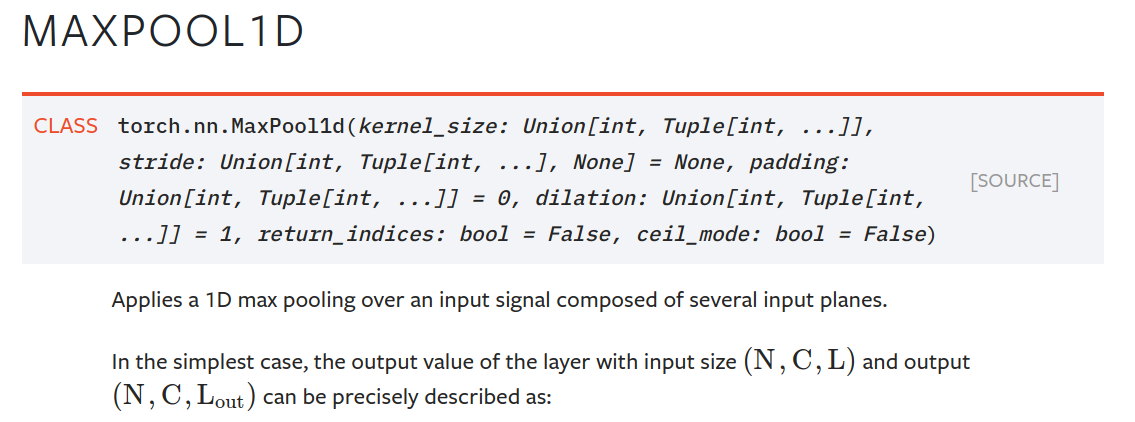

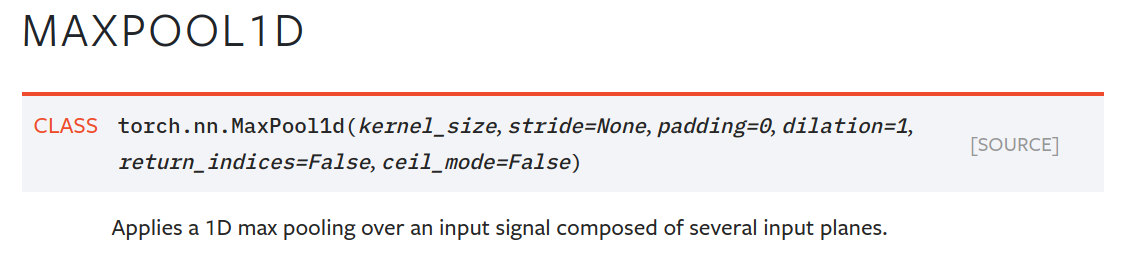

Summary:

One unintended side effect of moving type annotations inline was that those annotations now show up in signatures in the html docs. This is more confusing and ugly than it is helpful. An example for `MaxPool1d`:

This makes the docs readable again. The parameter descriptions often already have type information, and there will be many cases where the type annotations will make little sense to the user (e.g., returning typevar T, long unions).

Change to `MaxPool1d` example:

Note that once we can build the docs with Sphinx 3 (which is far off right now), we have two options to make better use of the extra type info in the annotations (some of which is useful):

- `autodoc_type_aliases`, so we can leave things like large unions unevaluated to keep things readable

- `autodoc_typehints = 'description'`, which moves the annotations into the parameter descriptions.
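As a rough sketch, the two options would appear in a Sphinx `conf.py` roughly as below. The alias name and its target path are placeholders invented for illustration, not values from this PR.

```python
# docs/source/conf.py (fragment, illustrative only)

# Move annotations out of the signature and into the parameter descriptions.
autodoc_typehints = "description"

# Keep a long union unevaluated by mapping its alias name to a documented
# target. "LongUnion" and "mypackage.typing.LongUnion" are hypothetical.
autodoc_type_aliases = {
    "LongUnion": "mypackage.typing.LongUnion",
}
```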

Another, more labour-intensive option, is what vadimkantorov suggested in gh-44964: show annotations on hover. Could also be done with some foldout, or other optional way to make things visible. Would be nice, but requires a Sphinx contribution or plugin first.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49294

Reviewed By: glaringlee

Differential Revision: D25535272

Pulled By: ezyang

fbshipit-source-id: 5017abfea941a7ae8c4595a0d2bdf8ae8965f0c4

Summary:

Fixes gh-39007

We replaced actual content with links to generated content in many places to break the documentation into manageable chunks. This caused references like

```

https://pytorch.org/docs/stable/torch.html#torch.flip

```

to become

```

https://pytorch.org/docs/master/generated/torch.flip.html#torch.flip

```

The textual content that was located at the old reference was replaced with a link to the new reference. This PR adds a `<p id="xxx"></p>` reference next to the link, so that the older references from outside tutorials and forums still work: they will bring the user to the link that they can then follow through to see the actual content.

The way this is done is to monkeypatch the sphinx writer method that produces the link. It is ugly but practical, and in my mind not worse than adding javascript to do the same thing.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/39086

Differential Revision: D22462421

Pulled By: jlin27

fbshipit-source-id: b8f913b38c56ebb857c5a07bded6509890900647

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/38490

A meta tensor is a tensor that is a lot like a normal tensor, except it doesn't actually have any data associated with it. You can use them to carry out shape/dtype computations without having to run the actual code; for example, this could be used to do shape inference in a JIT analysis pass.

Check out the description in DispatchKey.h for more information.
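To make the "shape/dtype computation without data" idea concrete, here is a toy sketch in plain Python. `MetaTensorSketch` and `meta_add` are hypothetical names, not PyTorch APIs; the function only applies broadcasting rules to shapes and, like the proof of concept described here, skips type promotion.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class MetaTensorSketch:
    """Carries only metadata; there is no storage behind it."""
    shape: Tuple[int, ...]
    dtype: str


def meta_add(a: MetaTensorSketch, b: MetaTensorSketch) -> MetaTensorSketch:
    """Infer the result metadata of an elementwise add without computing it."""
    if a.dtype != b.dtype:
        # Simplification: a real kernel would apply type promotion here.
        raise TypeError("this sketch requires matching dtypes")
    ndim = max(len(a.shape), len(b.shape))
    # Right-align the shapes by padding the shorter one with leading 1s.
    sa = (1,) * (ndim - len(a.shape)) + a.shape
    sb = (1,) * (ndim - len(b.shape)) + b.shape
    out = []
    for x, y in zip(sa, sb):
        if x != y and x != 1 and y != 1:
            raise ValueError(f"shapes {a.shape} and {b.shape} do not broadcast")
        out.append(y if x == 1 else x)
    return MetaTensorSketch(tuple(out), a.dtype)


result = meta_add(
    MetaTensorSketch((2, 1, 3), "float32"),
    MetaTensorSketch((4, 1), "float32"),
)
```

No element is ever allocated or added; only the output shape and dtype are derived, which is the kind of work a shape-inference pass needs.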

Meta tensors are part of a larger project to rationalize how we write kernels so that we don't have to duplicate shape logic in CPU kernel, CUDA kernel and meta kernel (this PR makes the duplication problem worse!). However, that infrastructure can be built on top of this proof of concept, which just shows how you can start writing meta kernels today even without this infrastructure.

There are a lot of things that don't work:

- I special cased printing for dense tensors only; if you try to allocate a meta sparse / quantized tensor things aren't going to work.

- The printing formula implies that torch.tensor() can take an ellipsis, but I didn't add this.

- I wrote an example formula for binary operators, but it isn't even right! (It doesn't do type promotion or memory layout correctly). The most future proof way to do it right is to factor the relevant computation out of TensorIterator, as it is quite involved.

- Nothing besides torch.add works right now.

- Meta functions are ALWAYS included in mobile builds (selective build doesn't work on them). This isn't a big deal for now but will become more pressing as more meta functions are added.

One reason I'm putting up this PR now is to check with Yinghai Lu if we can unblock shape inference for accelerators, while we are still working on a long term plan for how to unify all shape computation across our kernels.

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Test Plan: Imported from OSS

Differential Revision: D21935609

Pulled By: ezyang

fbshipit-source-id: f7d8636eeb8516b6bc296db99a16e56029972eee

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/39331

Fixes gh-37590

Adds an extra `make coverage` to document building, which uses the built-in facility in sphinx to check docstring coverage. Also fixes a failure to import `torch/jit/supported_ops.py` which broke the [Torchscript Builtins](https://pytorch.org/docs/stable/jit_builtin_functions.html) page.

This also adds the required `SPHINXOPTS` to turn warnings into errors, but this is commented out. Note that since documentation of `torchvision` is merged in here, failures there would cause failures here if this is made active. Some thought might be needed about pinning the torchvision version merged into documentation.

The first commit should fail, since the "ScriptModule" class is commented out. I did that in order to check that a CI failure is properly reported.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/38244

Differential Revision: D21640589

Pulled By: ezyang

fbshipit-source-id: 1e240d81669b5f21404d596de4a27d192dc9fd8a

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/38149

This is for (#21290) (#31894)

Instead of putting "PyTorch master documentation" in the header's HTML title, we now use "PyTorch 1.x.x documentation". This is similar to the TensorFlow and NumPy doc pages.

In Google search results, we will get "PyTorch Documentation - PyTorch 1.x.x Documentation" instead.

Test Plan: Imported from OSS

Differential Revision: D21586559

Pulled By: glaringlee

fbshipit-source-id: 2995709ac3c22dbb0183b5b4abfde7d795f1f8eb

Summary:

xref gh-32838, gh-34032

This is a major refactor of parts of the documentation to split it up using sphinx's `autosummary` feature, which will build out `autofunction` and `autoclass` stub files and link to them. The end result is that the top module pages like torch.nn.rst and torch.rst are now more like tables of contents for the actual single-class or single-function documentation pages.

Along the way, I modified many of the docstrings to eliminate sphinx warnings when building. I think the only thing I changed from a non-documentation perspective is to add names to `__all__` when adding them to `globals()` in `torch/__init__.py`.

I do not know the CI system: are the documentation build artifacts available after the build, so reviewers can preview before merging?

Pull Request resolved: https://github.com/pytorch/pytorch/pull/37419

Differential Revision: D21337640

Pulled By: ezyang

fbshipit-source-id: d4ad198780c3ae7a96a9f22651e00ff2d31a0c0f

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/27927

This fixes

`WARNING: html_static_path entry '_images' does not exist`

by removing '_images' from conf.py. As far as I can tell, '_images' in `html_static_path` is only necessary if images already exist in the `_images` folder; otherwise, sphinx is able to auto-generate _images into the build directory and populate it correctly.

Test Plan: - build and view the docs locally.

Differential Revision: D17915109

Pulled By: zou3519

fbshipit-source-id: ebcc1f331475f52c0ceadd3e97c3a4a0d606e14b

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/27850

Many of these are real problems in the documentation (i.e., link or bullet point doesn't display correctly).

Test Plan: - built and viewed the documentation for each change locally.

Differential Revision: D17908123

Pulled By: zou3519

fbshipit-source-id: 65c92a352c89b90fb6b508c388b0874233a3817a

Summary:

resolves issues:

https://github.com/pytorch/pytorch/issues/27703

Updates to index for v1.3.0

* add javasphinx to the required sphinx plugins

* Update "Package Reference" to "Python API"

* Add in torchaudio and torchtext reference links so they show up across all docs not just the main page

* Add "Other Languages" section, add in C++ docs, add in Javadocs

* Add link to XLA docs under Notes: http://pytorch.org/xla/

this includes changes to:

docs/source/conf.py

docs/source/index.rst

docs/source/nn.rst

docs/requirements.txt

Pull Request resolved: https://github.com/pytorch/pytorch/pull/27721

Differential Revision: D17881973

Pulled By: jlin27

fbshipit-source-id: ccc1e9e4da17837ad99d25df997772613f76aea8

Summary:

- Update torch.rst to remove certain autofunction calls

- Add reference to Quantization Functions section in nn.rst

- Update javadocs for v1.3.0

- Update index.rst:

  - Update "Package Reference" to "Python API"

  - Add in torchaudio and torchtext reference links so they show up across all docs not just the main page

  - Add "Other Languages" section, add in C++ docs, add in Javadocs

  - Add link to XLA docs under Notes: http://pytorch.org/xla/

Pull Request resolved: https://github.com/pytorch/pytorch/pull/27676

Differential Revision: D17850696

Pulled By: brianjo

fbshipit-source-id: 3de146f065222d1acd9a33aae3b543927a63532a

Summary:

According to https://github.com/pytorch/pytorch/issues/27285, it seems we do not intend to use the shebang as an indication of Python version, so we enable the EXE001 flake8 check.

For violations, we either remove shebang from non-executable Python scripts or grant them executable permission.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/27560

Differential Revision: D17831782

Pulled By: ezyang

fbshipit-source-id: 6282fd3617b25676a6d959af0d318faf05c09b26

Summary:

All of the code examples should now run as unit tests, save for those that require interaction (i.e. show `pdb` usage) and those that use CUDA.

`save` had to be moved before `load` in `jit/__init__.py` so `load` could use the file generated by `save`.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/25668

Pulled By: driazati

Differential Revision: D17192417

fbshipit-source-id: 931b310ae0c3d2cc6affeabccae5296f53fe42bc

Summary:

Stacked PRs

* #24445 - [jit] Misc doc updates #2

* **#24435 - [jit] Add docs to CI**

This integrates the [doctest](http://www.sphinx-doc.org/en/master/usage/extensions/doctest.html) module into `jit.rst` so that we can run our code examples as unit tests. They're added to `test_jit.py` under the `TestDocs` class (which takes about 30s to run). This should help prevent things like #24429 from happening in the future. They can be run manually by doing `cd docs && make doctest`.

* The test setup requires a hack since `doctest` defines everything in the `builtins` module which upsets `inspect`

* There are several places where the code wasn't testable (i.e. it threw an exception on purpose). This may be resolvable, but I'd prefer to leave that for a follow up. For now there are `TODO` comments littered around.
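The general idea of running documentation examples as tests can be shown with Python's standard-library `doctest` module. Note this is the stdlib module rather than the Sphinx doctest extension used in this PR, and `add_one` is a made-up function for illustration.

```python
import doctest


def add_one(x):
    """Return x + 1.

    >>> add_one(2)
    3
    """
    return x + 1


# Parse the examples out of the docstring and execute them as a test.
parser = doctest.DocTestParser()
test = parser.get_doctest(
    add_one.__doc__, {"add_one": add_one}, "add_one", None, 0
)
runner = doctest.DocTestRunner(verbose=False)
results = runner.run(test)  # TestResults(failed=..., attempted=...)
```

If the documented output ever drifts from what the code returns, `results.failed` becomes non-zero, which is the kind of breakage this CI step is meant to catch.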

Pull Request resolved: https://github.com/pytorch/pytorch/pull/24435

Pulled By: driazati

Differential Revision: D16840882

fbshipit-source-id: c4b26e7c374cd224a5a4a2d523163d7b997280ed

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/23376

This uses the master version of sphinxcontrib-katex, as it only recently got prerender support.

Fixes #20984

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Test Plan: Imported from OSS

Differential Revision: D16582064

Pulled By: ezyang

fbshipit-source-id: 9ef24c5788c19572515ded2db2e8ebfb7a5ed44d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/18598

ghimport-source-id: c74597e5e7437e94a43c163cee0639b20d0d0c6a

Stack from [ghstack](https://github.com/ezyang/ghstack):

* **#18598 Turn on F401: Unused import warning.**

This was requested by someone at Facebook; this lint is turned on for Facebook by default. "Sure, why not."

I had to noqa a number of imports in `__init__`. Hypothetically we're supposed to use `__all__` in this case, but I was too lazy to fix it. Left for future work.

Be careful! flake8-2 and flake8-3 behave differently with respect to import resolution for `# type:` comments. flake8-3 will report an import unused; flake8-2 will not. For now, I just noqa'd all these sites.

All the changes were done by hand.

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Differential Revision: D14687478

fbshipit-source-id: 30d532381e914091aadfa0d2a5a89404819663e3

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/18507

ghimport-source-id: 1c3642befad2da78a7e5f39d6d58732b85c76267

Stack from [ghstack](https://github.com/ezyang/ghstack):

* **#18507 Upgrade flake8-bugbear to master, fix the new lints.**

It turns out Facebook is internally using the unreleased master flake8-bugbear, so upgrading it grabs a few more lints that Phabricator was complaining about but we didn't get in open source.

A few of the getattr sites that I fixed look very suspicious (they're written as if Python were a lazy language), but I didn't look more closely into the matter.

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Differential Revision: D14633682

fbshipit-source-id: fc3f97c87dca40bbda943a1d1061953490dbacf8

Summary:

The stylesheet at docs/source/_static/css/pytorch_theme.css is no longer necessary for the html docs build. The new html docs theme styles are located at https://github.com/pytorch/pytorch_sphinx_theme.

The Lato font is also no longer used in the new theme.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/13699

Differential Revision: D12967448

Pulled By: soumith

fbshipit-source-id: 7de205162a61e3acacfd8b499660d328ff3812ec

Summary:

Deleted this section by mistake in last PR.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/11938

Reviewed By: SsnL

Differential Revision: D9993258

Pulled By: brianjo

fbshipit-source-id: 2552178cebd005a1105a22930c4d128c67247378

Summary:

I'm 80% sure that this fixes the math bug. But I can't repro locally so I don't know.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/11472

Differential Revision: D9755328

Pulled By: SsnL

fbshipit-source-id: 130be664d3c6ceee3c0c166c1a86fc9ec3b79d74

* docs: enable redirect link to work for each specific page

* docs: add canonical_url for search engines

closes #7222

* docs: update redirect link to canonical_url

* Add more detail to CUDA documentation

Also adds better cross-linking to the pages that discuss relevant topics.

* Adds recommendation to torch.save docs

* Make the version numbers for the docs dynamic

Might need tweaks for beta, 1.0, etc.

Here's the command I used to invoke autopep8 (in parallel!):

git ls-files | grep '\.py$' | xargs -n1 -P`nproc` autopep8 -i

Several rules are ignored in setup.cfg. The goal is to let autopep8 handle everything which it can handle safely, and to disable any rules which are tricky or controversial to address. We may want to come back and re-enable some of these rules later, but I'm trying to make this patch as safe as possible.

Also configures flake8 to match pep8's behavior.

Also configures TravisCI to check the whole project for lint.