Driss Guessous

70026aaad6

[SDPA] update type hint for scaled_dot_product_attention and documentation ( #94008 )


# Summary

- Adds type hinting support for SDPA

- Updates the documentation adding warnings and notes on the context manager

- Adds scaled_dot_product_attention to the non-linear activation function section of nn.functional docs

Pull Request resolved: https://github.com/pytorch/pytorch/pull/94008

Approved by: https://github.com/cpuhrsch

2023-02-10 18:02:43 +00:00

Natalia Gimelshein

a5daea69fb

teach inductor to handle floor ( #94341 )


Per title; this happens when there's upsampling with a non-integer scale.
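This shows where `floor` comes from. With a non-integer scale, two quantities are rounded down: the output length, and the source index that nearest-neighbor upsampling reads from. Below is a minimal pure-Python sketch. It assumes the `nearest` mode convention `src = min(floor(dst * input_size / output_size), input_size - 1)`. The helper names are hypothetical, and this is not inductor's actual lowering.

```python
import math


def nearest_output_size(input_size: int, scale_factor: float) -> int:
    # Output length for a given scale factor: floor(input_size * scale_factor)
    return math.floor(input_size * scale_factor)


def nearest_source_indices(input_size: int, output_size: int) -> list:
    # Each output position maps back to a source position by flooring,
    # clamped so it never runs past the last input element.
    scale = input_size / output_size
    return [min(math.floor(i * scale), input_size - 1) for i in range(output_size)]


out_len = nearest_output_size(5, 1.5)  # floor(7.5)
print(out_len, nearest_source_indices(5, out_len))
```

With an integer scale such as 2.0 the floors are exact, which is why this only shows up for non-integer scales.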

Pull Request resolved: https://github.com/pytorch/pytorch/pull/94341

Approved by: https://github.com/ezyang

2023-02-10 11:21:57 +00:00

PyTorch MergeBot

6007874bbb

Revert "teach inductor to handle floor ( #94341 )"


This reverts commit e7df9aaec8 (https://github.com/pytorch/pytorch/pull/94341) on behalf of https://github.com/huydhn due to: Sorry for reverting your PR, but the CudaTest failure looks related. It fails on both PR and trunk e7df9aaec8

2023-02-09 19:31:08 +00:00

Natalia Gimelshein

e7df9aaec8

teach inductor to handle floor ( #94341 )


Per title; this happens when there's upsampling with a non-integer scale.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/94341

Approved by: https://github.com/ezyang

2023-02-09 17:09:35 +00:00

milesial

6c555b29a8

MHA optimizations ( #93234 )


Slight perf optimizations for regular MHA by reducing the number of kernels called

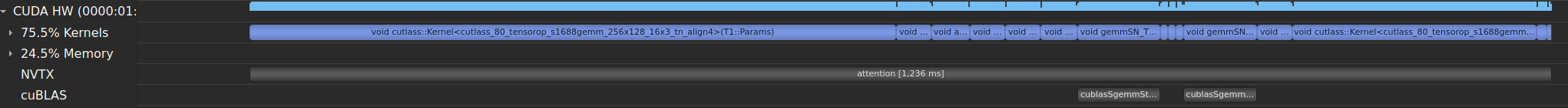

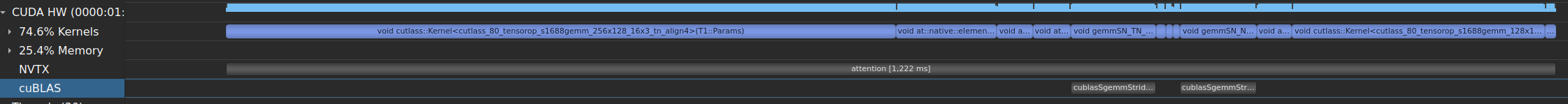

Before:

After:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/93234

Approved by: https://github.com/drisspg

2023-02-03 15:18:35 +00:00

Driss Guessous

3df0e26e20

[SDPA] Remove private version and only utilize public version ( #94004 )


# Summary

Due to internal failures, we previously needed to keep the private call in torch.nn.mha. This PR undoes that change so that we call the public function, and it removes the private function.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/94004

Approved by: https://github.com/cpuhrsch , https://github.com/albanD

2023-02-03 08:12:09 +00:00

103yiran

d9117b93fb

unsqueeze only when dim = 3 ( #91052 )


unsqueeze is not necessary if we use view.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/91052

Approved by: https://github.com/albanD

2023-01-31 16:28:23 +00:00

Driss Guessous

ca8f5e177a

Use the old aten underscored function for Predictor ( #93096 )


Summary:

Errors reported via https://fb.prod.workplace.com/groups/1405155842844877/permalink/6644919482201794/

The problem is that the scriptable op set between predictor and the latest build of master is different.

Test Plan: Sandcastle testing

Differential Revision: D42786069

Pull Request resolved: https://github.com/pytorch/pytorch/pull/93096

Approved by: https://github.com/mikekgfb

2023-01-28 03:14:18 +00:00

Michael Gschwind

7265f60ad0

Regularize mask handling for attn_mask and key_padding_mask ( #92733 )


Summary:

Regularize mask handling for attn_mask and key_padding_mask

* Update documentation to remove reference to byte masks (which were deprecated long ago)

* Introduce check and warn about deprecation if attn_mask and key_padding_mask types mismatch

* Convert all masks to float before combining

* Combine by adding
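Here is a minimal sketch of the "convert to float, then add" rule. It assumes the `nn.MultiheadAttention` convention that a boolean `True` marks a position that must not be attended to. `to_float_mask` is a hypothetical helper, not the code added by the PR.

```python
import torch


def to_float_mask(mask: torch.Tensor, dtype=torch.float32) -> torch.Tensor:
    # Boolean masks mark positions to exclude (True = masked out), so turn
    # them into additive masks: -inf where masked, 0 elsewhere.
    if mask.dtype == torch.bool:
        return torch.zeros(mask.shape, dtype=dtype).masked_fill(mask, float("-inf"))
    # Float masks are already additive; just match the dtype.
    return mask.to(dtype)


L, S = 3, 4
attn_mask = torch.triu(torch.ones(L, S, dtype=torch.bool), diagonal=1)  # hide "future" keys
key_padding_mask = torch.tensor([False, False, False, True])            # last key is padding

# Combine by adding: a position ends up masked if either mask masks it.
# key_padding_mask has shape (S,) here and broadcasts over the L query rows.
combined = to_float_mask(attn_mask) + to_float_mask(key_padding_mask)
scores = torch.randn(L, S) + combined
weights = torch.softmax(scores, dim=-1)
print(weights)
```

Adding works as a union because `-inf + x` stays `-inf`, and softmax maps `-inf` to a weight of exactly zero.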

Test Plan: sandcastle & github CI

Differential Revision: D42653215

Pull Request resolved: https://github.com/pytorch/pytorch/pull/92733

Approved by: https://github.com/ngimel , https://github.com/drisspg

2023-01-24 14:12:05 +00:00

Driss Guessous

df14650f0b

[SDPA] Update SDPA API and make function Public ( #92189 )


# Summary

In preparation for the PT 2.0 launch, this PR updates SDPA's API and makes the function a public nn.functional function.

## Changes

### API

Previously, the function signature was:

`scaled_dot_product_attention(query, key, value, attn_mask=None, need_attn_weights=False, dropout_p=0.0, is_causal=False) -> (Tensor, Tensor)`

Updated signature:

`scaled_dot_product_attention(query, key, value, attn_mask=None, dropout_p=0.0, is_causal=False) -> Tensor`

This PR removes the optional boolean argument need_attn_weights and changes the return type to a single tensor.

#### Reasoning:

The main goal of this function is to provide an easy interface for users to call into fused attention kernels (e.g., FlashAttention). The fused kernels do not currently support arbitrary attn_mask or dropout, but there is a PR to mem-efficient attention to enable these. We want to have the API surface ready for when the backing kernels get updated.

The fused kernels save memory by not materializing the attention weights, and it is unlikely that a fast fused implementation will support returning them, so we are removing this option.

Discussed with folks at FAIR/Xformers, who +1'd this API change.
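With the updated signature, the call returns a single tensor. Callers that relied on `need_attn_weights=True` can still compute the weights explicitly, at the cost of materializing them. This sketch assumes PyTorch 2.0 or later, 3-D `(N, L, E)` inputs, and the default scale `1/sqrt(E)`.

```python
import math

import torch
import torch.nn.functional as F

torch.manual_seed(0)
N, L, S, E = 2, 5, 7, 8
query, key, value = torch.randn(N, L, E), torch.randn(N, S, E), torch.randn(N, S, E)

# Updated signature: one output Tensor, no attention weights.
out = F.scaled_dot_product_attention(query, key, value, attn_mask=None, dropout_p=0.0, is_causal=False)

# Materialize the weights by hand if they are really needed. This is exactly
# the (N, L, S) buffer the fused kernels avoid allocating.
weights = torch.softmax(query @ key.transpose(-2, -1) / math.sqrt(E), dim=-1)
reference = weights @ value
print(out.shape, weights.shape)
```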

#### Make function Public

In preparation for the PT 2.0 launch, we make the function public to start generating user feedback.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/92189

Approved by: https://github.com/cpuhrsch

2023-01-23 20:50:46 +00:00

Michael Gschwind

af589b3d1f

switch causal mask for is_causal flag ( #91171 )


Summary: switch causal mask for is_causal flag

Test Plan: sandcastle & github

Differential Revision: D42089340

Pull Request resolved: https://github.com/pytorch/pytorch/pull/91171

Approved by: https://github.com/wushirong , https://github.com/drisspg

2022-12-30 17:24:58 +00:00

joncrall

ad782ff7df

Enable xdoctest runner in CI for real this time ( #83816 )


Builds on #83317 and enables running the doctests. Just need to figure out what is causing the failures.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/83816

Approved by: https://github.com/ezyang , https://github.com/malfet

2022-12-29 05:32:42 +00:00

Joel Schlosser

3d8834bdbf

SymIntify F.interpolate() with recompute_scale_factor=True ( #91318 )


This PR makes the minor changes necessary to get `F.interpolate()` working with symbolic shapes when `recompute_scale_factor=True` + adds `OpInfo` samples to test.
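For reference, here is what `recompute_scale_factor=True` means with concrete (non-symbolic) shapes. The output size is computed as `floor(input_size * scale_factor)`, and the kernel then behaves as if that size had been passed directly. This sketch uses 1-D nearest interpolation.

```python
import math

import torch
import torch.nn.functional as F

x = torch.arange(5.0).view(1, 1, 5)  # (N, C, L) with L = 5
scale = 1.5

# Output length becomes floor(5 * 1.5) = 7; the scale factor is then discarded
# and recomputed from the sizes (5 -> 7) inside the kernel.
y = F.interpolate(x, scale_factor=scale, mode="nearest", recompute_scale_factor=True)
same_as_size = F.interpolate(x, size=math.floor(5 * scale), mode="nearest")
print(y)
```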

Pull Request resolved: https://github.com/pytorch/pytorch/pull/91318

Approved by: https://github.com/ezyang

2022-12-29 01:42:56 +00:00

Michael Gschwind

512ec181ec

Introduce causal mask ( #90508 )


Summary: Introduce causal mask

This PR introduces a causal mask option _causal_mask (as well as causal mask detection if attn_mask is provided), since current custom kernels do not support arbitrary masks.
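In the public PyTorch 2.0 API, this option surfaces as the `is_causal` flag (see the "switch causal mask for is_causal flag" entry). The sketch below assumes PyTorch 2.0 or later and shows that the flag matches an explicit lower-triangular mask. Note that SDPA's boolean masks use `True` for positions that may be attended to, which is the opposite of `nn.MultiheadAttention`.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
L = 6
q, k, v = (torch.randn(1, 2, L, 16) for _ in range(3))  # (N, heads, L, E)

causal_out = F.scaled_dot_product_attention(q, k, v, is_causal=True)

# Explicit causal mask: query i may attend to keys 0..i (True = take part).
explicit_mask = torch.ones(L, L, dtype=torch.bool).tril()
masked_out = F.scaled_dot_product_attention(q, k, v, attn_mask=explicit_mask)
print((causal_out - masked_out).abs().max())
```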

Test Plan: sandcastle & github ci/cd

Differential Revision: D41723137

Pull Request resolved: https://github.com/pytorch/pytorch/pull/90508

Approved by: https://github.com/albanD

2022-12-16 21:39:42 +00:00

Driss Guessous

78bdb858f9

Call _sdp_attention in nn.functional.mha ( #89470 )


# Summary

Replaces the inline block of code in nn.functional.mha with `_scaled_dot_product_attention`. This function allows the fused kernels to be called if all the required input conditions are met.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/89470

Approved by: https://github.com/cpuhrsch , https://github.com/mikekgfb

2022-12-02 19:46:22 +00:00

PyTorch MergeBot

f1415b8cb6

Revert "Call _sdp_attention in nn.functional.mha ( #89470 )"


This reverts commit 4d7ec30220 (https://github.com/pytorch/pytorch/pull/89470) on behalf of https://github.com/jeanschmidt due to breaking internal builds

2022-11-30 16:16:24 +00:00

PyTorch MergeBot

618a585f6c

Revert "replace double transpose with single permute in nn.f.mha ( #89847 )"


This reverts commit b9afa92827 (https://github.com/pytorch/pytorch/pull/89847) on behalf of https://github.com/jeanschmidt due to: Need to revert this commit as it is causing conflict when reverting #89470

2022-11-30 16:03:48 +00:00

Driss Guessous

b9afa92827

replace double transpose with single permute in nn.f.mha ( #89847 )


# Summary

I forgot about permute, which was exactly what I wanted. Quick perf bump.
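This sketch shows the equivalence being exploited, using hypothetical shapes. The commit does not say which dimensions `nn.functional.mha` reorders, so it assumes a `(N, H, L, E) -> (L, N, H, E)` reordering. Both versions produce the same view; the permute just does it in one op.

```python
import torch

# Hypothetical shape: (batch N, heads H, target length L, head dim E)
x = torch.randn(2, 4, 5, 8)

two_transposes = x.transpose(1, 2).transpose(0, 1)  # (N,H,L,E) -> (N,L,H,E) -> (L,N,H,E)
one_permute = x.permute(2, 0, 1, 3)                  # (N,H,L,E) -> (L,N,H,E) in a single call
print(one_permute.shape)
```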

Pull Request resolved: https://github.com/pytorch/pytorch/pull/89847

Approved by: https://github.com/cpuhrsch , https://github.com/albanD

2022-11-29 22:18:42 +00:00

Driss Guessous

4d7ec30220

Call _sdp_attention in nn.functional.mha ( #89470 )


# Summary

Replaces the inline block of code in nn.functional.mha with `_scaled_dot_product_attention`. This function allows the fused kernels to be called if all the required input conditions are met.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/89470

Approved by: https://github.com/cpuhrsch , https://github.com/mikekgfb

2022-11-29 03:02:10 +00:00

foram-chandra

e19a7165fd

[nn] Remove deprecation warning from nn.functional.{tanh, sigmoid} ( #86905 )


Fixes #65909

Pull Request resolved: https://github.com/pytorch/pytorch/pull/86905

Approved by: https://github.com/albanD , https://github.com/kit1980

2022-11-24 00:34:26 +00:00

Nikita Karetnikov

0a1a53083e

[primTorch] Enable regex error testing for some refs ( #87765 )


Pull Request resolved: https://github.com/pytorch/pytorch/pull/87765

Approved by: https://github.com/mruberry

2022-11-23 23:36:27 +00:00

David Boetius

b652fbc57a

Fix torch.nn.functional.gelu docstring formatting ( #89061 )


The docstring of `torch.nn.functional.gelu` is formatted incorrectly, so part of the math isn't rendered and there are extra blocks where there shouldn't be: https://pytorch.org/docs/stable/generated/torch.nn.functional.gelu.html

I didn't build the docs, so I am not 100% sure that I got the formatting right, but I am confident.
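For reference, these are the two formulas the docstring is meant to render: exact GELU and the `approximate='tanh'` variant. They are written consistently with the reference code in the "Implement Tanh Gelu Approximation" entry, where \(\Phi\) is the standard normal CDF.

```latex
\operatorname{GELU}(x) = x\,\Phi(x) = \frac{x}{2}\left(1 + \operatorname{erf}\!\left(\frac{x}{\sqrt{2}}\right)\right)

\operatorname{GELU}_{\tanh}(x) = \frac{x}{2}\left(1 + \tanh\!\left(\sqrt{2/\pi}\,\left(x + 0.044715\,x^{3}\right)\right)\right)
```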

Pull Request resolved: https://github.com/pytorch/pytorch/pull/89061

Approved by: https://github.com/bdhirsh , https://github.com/kit1980

2022-11-18 01:57:41 +00:00

Ryan Spring

534ae6ae47

[primTorch] Implement group norm reference ( #87054 )


Add group norm reference

Split from #81191
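This is a sketch of what a group norm reference computes, written with plain tensor ops rather than the primTorch `torch._refs` code from the PR. `group_norm_ref` is a hypothetical name. Statistics are computed per sample and per group, using the biased variance.

```python
import torch
import torch.nn.functional as F


def group_norm_ref(x, num_groups, weight=None, bias=None, eps=1e-5):
    # Normalize over (C // G, *spatial) within each of the G groups, per sample.
    N, C = x.shape[:2]
    grouped = x.reshape(N, num_groups, -1)
    mean = grouped.mean(dim=-1, keepdim=True)
    var = grouped.var(dim=-1, unbiased=False, keepdim=True)  # biased variance
    out = ((grouped - mean) / torch.sqrt(var + eps)).reshape(x.shape)
    # Per-channel affine transform, broadcast over the spatial dims.
    affine_shape = (1, C) + (1,) * (x.dim() - 2)
    if weight is not None:
        out = out * weight.reshape(affine_shape)
    if bias is not None:
        out = out + bias.reshape(affine_shape)
    return out


torch.manual_seed(0)
x = torch.randn(2, 6, 4, 4)
w, b = torch.randn(6), torch.randn(6)
print((group_norm_ref(x, 3, w, b) - F.group_norm(x, 3, w, b)).abs().max())
```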

Pull Request resolved: https://github.com/pytorch/pytorch/pull/87054

Approved by: https://github.com/mruberry

2022-11-11 01:08:20 +00:00

Kazuaki Ishizaki

2ddefbdc3c

Fix typos used in documents under torch directory ( #88300 )


This PR fixes typos, in comments of Python files, that are found from a search box at https://pytorch.org/docs/master/search.html

Pull Request resolved: https://github.com/pytorch/pytorch/pull/88300

Approved by: https://github.com/lezcano

2022-11-02 09:38:13 +00:00

Rui Zhu

4b757f4633

Assert if padding mask type is unexpected ( #86353 ) ( #87106 )


Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/86353

Fix the issue described in

https://github.com/pytorch/pytorch/issues/86120
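This is a hedged sketch of the kind of dtype guard the title describes. `check_key_padding_mask` and its message are hypothetical, not the code from the PR. It accepts bool and floating-point masks and rejects integer ones, such as the `long` pad mask exercised by the test plan below.

```python
import torch


def check_key_padding_mask(mask: torch.Tensor) -> None:
    # Hypothetical guard: accept only bool or floating-point masks. An integer
    # (e.g. int64) mask would otherwise be silently misinterpreted.
    if mask.dtype != torch.bool and not torch.is_floating_point(mask):
        raise AssertionError(
            f"only bool and floating types of key_padding_mask are supported, got {mask.dtype}"
        )


check_key_padding_mask(torch.tensor([[False, True]]))            # ok: bool
check_key_padding_mask(torch.tensor([[0.0, float("-inf")]]))     # ok: float (additive)
```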

Test Plan: buck test mode/opt caffe2/test:test_transformers -- test_train_with_long_type_pad

Differential Revision: D40129968

Pull Request resolved: https://github.com/pytorch/pytorch/pull/87106

Approved by: https://github.com/malfet

2022-10-20 16:01:54 +00:00

Andrew M. James

db65909255

[Docs] Update mm family ops and F.linear to note limited sparse support. ( #86220 )


Pull Request resolved: https://github.com/pytorch/pytorch/pull/86220

Approved by: https://github.com/cpuhrsch

2022-10-18 19:55:18 +00:00

Nikita Karetnikov

d56017a14f

[primTorch] Add ref for triplet_margin_loss, improve triplet_margin_with_distance_loss ( #85614 )
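This sketch shows the quantity a `triplet_margin_loss` reference has to reproduce. It assumes the defaults `margin=1.0`, `p=2`, `eps=1e-6`, `swap=False` and mean reduction. `triplet_margin_loss_ref` is hypothetical and is not the primTorch ref itself.

```python
import torch
import torch.nn.functional as F


def triplet_margin_loss_ref(anchor, positive, negative, margin=1.0, p=2.0, eps=1e-6):
    # Distances use the same eps-stabilised p-norm as F.pairwise_distance.
    d_pos = F.pairwise_distance(anchor, positive, p=p, eps=eps)
    d_neg = F.pairwise_distance(anchor, negative, p=p, eps=eps)
    # Hinge: penalise triplets whose negative is not at least `margin` farther away.
    return torch.clamp_min(margin + d_pos - d_neg, 0).mean()


torch.manual_seed(0)
a, pos, neg = (torch.randn(8, 16) for _ in range(3))
print(triplet_margin_loss_ref(a, pos, neg), F.triplet_margin_loss(a, pos, neg))
```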


Pull Request resolved: https://github.com/pytorch/pytorch/pull/85614

Approved by: https://github.com/lezcano , https://github.com/mruberry

2022-10-12 18:37:58 +00:00

lezcano

787028cadb

Implement col2im decomposition and fix im2col and add a few preconditions ( #85541 )


As per title
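`F.unfold` is PyTorch's im2col, and `F.fold` is its col2im counterpart. This sketch shows the semantics a col2im decomposition has to preserve, using only the public functional API.

```python
import torch
import torch.nn.functional as F

x = torch.arange(16.0).view(1, 1, 4, 4)

# im2col: non-overlapping 2x2 patches -> (N, C*2*2, num_patches) = (1, 4, 4)
cols = F.unfold(x, kernel_size=2, stride=2)
# col2im: scatter the patches back; with no overlap this reconstructs x exactly.
restored = F.fold(cols, output_size=(4, 4), kernel_size=2, stride=2)

# With overlapping patches, col2im *sums* contributions, so folding the
# unfolded ones-tensor counts how many patches cover each pixel.
ones = torch.ones(1, 1, 3, 3)
coverage = F.fold(F.unfold(ones, kernel_size=2), output_size=(3, 3), kernel_size=2)
print(coverage.squeeze())
```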

Pull Request resolved: https://github.com/pytorch/pytorch/pull/85541

Approved by: https://github.com/jansel

2022-09-30 09:31:53 +00:00

Srikumar Sastry

c8776dca6a

Remove extra with in value error exception statement ( #84713 )


Pull Request resolved: https://github.com/pytorch/pytorch/pull/84713

Approved by: https://github.com/ngimel

2022-09-27 18:43:39 +00:00

Driss Guessous

253ffbf28b

Exposing native _scaled_dot_product_attention to torch.nn ( #85044 )


# Summary

This exposes the _scaled_dot_product_attention function to Python in the nn namespace. It is still underscored because the API for args and kwargs is still in flux for the next few weeks; it will eventually land as a prototype feature.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/85044

Approved by: https://github.com/cpuhrsch

2022-09-22 16:30:16 +00:00

PyTorch MergeBot

a3dc338ee1

Revert "Exposing native _scaled_dot_product_attention to torch.nn ( #85044 )"


This reverts commit 9fdd8a8b7f (https://github.com/pytorch/pytorch/pull/85044) on behalf of https://github.com/huydhn due to: This breaks CUDA 10.2 in trunk. We are deprecating CUDA 10.2, but it is still here in the meantime

2022-09-21 08:34:51 +00:00

Driss Guessous

9fdd8a8b7f

Exposing native _scaled_dot_product_attention to torch.nn ( #85044 )


# Summary

This exposes the _scaled_dot_product_attention function to Python in the nn namespace. It is still underscored because the API for args and kwargs is still in flux for the next few weeks; it will eventually land as a prototype feature.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/85044

Approved by: https://github.com/cpuhrsch

2022-09-21 03:09:08 +00:00

joncrall

b136f3f310

More doctest refinements. ( #83317 )


Follow up to #82797

Now that the doctests themselves are in a better state, we should be able to enable xdoctest on the CI so they stay that way.

@ezyang @vadimkantorov

Pull Request resolved: https://github.com/pytorch/pytorch/pull/83317

Approved by: https://github.com/ezyang

2022-08-22 20:07:26 +00:00

Edward Z. Yang

cb64b558ee

Add spaces so example is flake8 compatible ( #83420 )


Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Pull Request resolved: https://github.com/pytorch/pytorch/pull/83420

Approved by: https://github.com/jbschlosser

2022-08-15 21:39:57 +00:00

joncrall

4618371da5

Integrate xdoctest - Rebased ( #82797 )


This is a new version of #15648 based on the latest master branch.

Unlike the previous PR where I fixed a lot of the doctests in addition to integrating xdoctest, I'm going to reduce the scope here. I'm simply going to integrate xdoctest, and then I'm going to mark all of the failing tests as "SKIP". This will let xdoctest run on the dashboards, provide some value, and still let the dashboards pass. I'll leave fixing the doctests themselves to another PR.

In my initial commit, I do the bare minimum to get something running with failing dashboards. The few tests that I marked as skip are causing segfaults. Running xdoctest results in 293 failed, 201 passed tests. The next commits will be to disable those tests. (unfortunately I don't have a tool that will insert the `#xdoctest: +SKIP` directive over every failing test, so I'm going to do this mostly manually.)

Fixes https://github.com/pytorch/pytorch/issues/71105

@ezyang

Pull Request resolved: https://github.com/pytorch/pytorch/pull/82797

Approved by: https://github.com/ezyang

2022-08-12 02:08:01 +00:00

Alex Li

1fedd40424

Update cross entropy documentation to mention logits clearly ( #82538 )


### Description

Improved the documentation for cross entropy as it is a common point of confusion.
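This sketch illustrates the point the documentation change makes. `F.cross_entropy` expects unnormalized logits and applies `log_softmax` itself, so it matches `nll_loss` on log-probabilities. Feeding it probabilities instead gives a different, wrong value.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(4, 3)           # raw, unnormalized scores: (N, C)
target = torch.tensor([0, 2, 1, 2])  # class indices

loss = F.cross_entropy(logits, target)
# cross_entropy = log_softmax followed by nll_loss
manual = F.nll_loss(F.log_softmax(logits, dim=1), target)

# Common mistake: passing probabilities applies softmax twice.
wrong = F.cross_entropy(torch.softmax(logits, dim=1), target)
print(loss, manual, wrong)
```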

### Issue

#82081

### Testing

I did not test this change as it is tiny and documentation-only

Pull Request resolved: https://github.com/pytorch/pytorch/pull/82538

Approved by: https://github.com/jbschlosser

2022-08-08 22:24:28 +00:00

ProGamerGov

357b7d589c

Fix docstring inconsistencies: string -> str, boolean -> bool ( #82410 )


### Description

Throughout the PyTorch docs and codebase, the `string` type in docstrings is referred to by two separate names. This leads to inconsistent docs, like you can see here: https://pytorch.org/docs/stable/generated/torch.nn.Conv3d.html#torch.nn.Conv3d

This PR fixes this issue by ensuring that all mentions of the string type in docstrings use the same format, the one Sphinx generates hyperlinks for.

### Testing

No testing should be required for this change

Pull Request resolved: https://github.com/pytorch/pytorch/pull/82410

Approved by: https://github.com/jbschlosser

2022-07-28 21:29:57 +00:00

kylematoba

66cf1b6459

correct argument name in docs ( #81485 )


The recently introduced `average_attn_weights` argument is documented incorrectly.
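This sketch shows what the argument controls, assuming PyTorch 1.11 or later with `batch_first=True`. Averaged weights have shape `(N, L, S)` and per-head weights have shape `(N, num_heads, L, S)`. The averaged weights are the mean of the per-head weights over the head dimension.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
N, L, E, H = 2, 5, 16, 4
mha = nn.MultiheadAttention(embed_dim=E, num_heads=H, batch_first=True)
x = torch.randn(N, L, E)

# Self-attention, so S == L here.
_, avg_w = mha(x, x, x, need_weights=True, average_attn_weights=True)
_, per_head_w = mha(x, x, x, need_weights=True, average_attn_weights=False)
print(avg_w.shape, per_head_w.shape)
```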

Pull Request resolved: https://github.com/pytorch/pytorch/pull/81485

Approved by: https://github.com/albanD

2022-07-20 20:07:16 +00:00

soulitzer

bd75b2fea1

Add ref for nn.functional.prelu ( #79768 )


TODO:

- not sure if these error-inputs work for all devices (awaiting CI)
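This sketch shows the math a `prelu` reference implements. Negative inputs are scaled by a learnable slope, which is either shared or one per channel along dim 1. `prelu_ref` is hypothetical and is not the primTorch ref from the PR.

```python
import torch
import torch.nn.functional as F


def prelu_ref(x, weight):
    # One slope per channel (dim 1): reshape to broadcast over batch and spatial dims.
    # A single-element weight is shared and broadcasts as-is.
    if weight.numel() > 1:
        weight = weight.reshape((1, -1) + (1,) * (x.dim() - 2))
    return torch.where(x >= 0, x, weight * x)


torch.manual_seed(0)
x = torch.randn(2, 3, 4, 4)
w = torch.rand(3)
print((prelu_ref(x, w) - F.prelu(x, w)).abs().max())
```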

Pull Request resolved: https://github.com/pytorch/pytorch/pull/79768

Approved by: https://github.com/mruberry

2022-07-07 17:04:47 +00:00

Albert Chung

b4ed13ea0f

Update docstring for scale_factor in torch.nn.functional.interpolate. ( #80807 )


Fixes #80786

Pull Request resolved: https://github.com/pytorch/pytorch/pull/80807

Approved by: https://github.com/ezyang

2022-07-04 04:36:16 +00:00

Joel Benjamin Schlosser

5953fd9133

Revert behavior of Dropout2d on 3D inputs to 1D channel-wise dropout behavior & warn


Pull Request resolved: https://github.com/pytorch/pytorch/pull/79549

Approved by: https://github.com/ngimel , https://github.com/albanD

2022-06-15 14:56:43 +00:00

Joel Benjamin Schlosser

2d73c8e6e0

Add Dropout1d module
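This sketch shows what channel-wise 1-D dropout does, assuming PyTorch 1.12 or later. For `(N, C, L)` input, each `(sample, channel)` row is either zeroed or kept as a whole, and kept rows are rescaled by `1 / (1 - p)`.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
p = 0.5
drop = nn.Dropout1d(p=p)  # modules start in training mode
x = torch.ones(4, 8, 10)  # (N, C, L)
y = drop(x)

# Each (sample, channel) row is all zeros or all 1 / (1 - p) = 2.0.
row_zero = (y == 0).all(dim=-1)
row_kept = (y == 1 / (1 - p)).all(dim=-1)
print(row_zero.sum().item(), "channels dropped of", row_zero.numel())
```

In eval mode the module is the identity.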


Pull Request resolved: https://github.com/pytorch/pytorch/pull/79545

Approved by: https://github.com/ngimel , https://github.com/albanD

2022-06-15 14:39:07 +00:00

PyTorch MergeBot

3556457dd2

Revert "kl_div: fix for grads wrt target, double backward, forward-over-reverse AD support. ( #79007 )"


This reverts commit 72ad222cff (https://github.com/pytorch/pytorch/pull/79007) on behalf of https://github.com/janeyx99 due to: Broke test_fn_fwgrad_bwgrad_nn_functional_kl_div_cpu_float64 on trunk https://hud.pytorch.org/minihud?name_filter=pull%20/%20linux-xenial-py3.7-clang7-asan%20/%20test%20(default,%202,%205,%20linux.2xlarge)

2022-06-09 13:07:03 +00:00

Nikita Vedeneev

72ad222cff

kl_div: fix for grads wrt target, double backward, forward-over-reverse AD support. (#79007 )


Fixes https://github.com/pytorch/pytorch/issues/78867 ,

fixes https://github.com/pytorch/pytorch/issues/65466 .

Adds forward-over-reverse AD support.
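This sketch checks the gradient with respect to `target`, which this change fixes. It compares autograd against the analytic derivative of the pointwise term `target * (log(target) - input)`, which is `log(target) + 1 - input`. It assumes a PyTorch build that includes the fix.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
inp = torch.log_softmax(torch.randn(3, 5), dim=1)                   # log-probabilities
target = torch.softmax(torch.randn(3, 5), dim=1).requires_grad_()  # probabilities

# Pointwise KL term target * (log(target) - input), summed.
loss = F.kl_div(inp, target, reduction="sum")
loss.backward()

# d/dt [t * (log t - x)] = log t + 1 - x
expected_grad = target.detach().log() + 1 - inp
print((target.grad - expected_grad).abs().max())
```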

Pull Request resolved: https://github.com/pytorch/pytorch/pull/79007

Approved by: https://github.com/soulitzer , https://github.com/jbschlosser

2022-06-09 09:06:52 +00:00

Rohit Goswami

5a95b20d0f

DOC: Harmonize ELU documentation with the module doc ( #78909 )


Fixes #77055 by simply referring to the module docs as noted in the issue.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/78909

Approved by: https://github.com/albanD

2022-06-06 14:14:11 +00:00

samdow

b7cb4eae6b

Fix embedding jvp support by making embedding_renorm ignore forward mode AD ( #78560 )


On functorch, we started seeing [embedding forward mode fail](https://github.com/pytorch/functorch/pull/816). From looking at it, we figured out that embedding recently got forward mode support enabled (369d9f4137; see https://github.com/pytorch/pytorch/blob/master/torch/testing/_internal/common_methods_invocations.py#L8877-L8881), so it's not checked.

What was happening is that `embedding_renorm` was using `torch.no_grad()`, which only turns off backward-mode AD, so functorch's jvp tests were still using forward-mode AD during the `embedding_renorm` call. This change makes it so that we don't use forward mode during the `embedding_renorm` call.
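This gives context on `embedding_renorm`. Passing `max_norm` makes `F.embedding` rescale the looked-up rows of `weight` in place before the lookup, and that step runs without gradient tracking. The sketch shows the observable effect; the forward-mode AD change itself is internal and not shown.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
weight = torch.randn(10, 4) * 5  # rows with norms mostly well above 1
indices = torch.tensor([1, 3, 3, 7])

before = weight.clone()
out = F.embedding(indices, weight, max_norm=1.0)

# Looked-up rows of `weight` were renormalized in place to norm <= max_norm;
# all other rows are left untouched.
looked_up_norms = weight[indices].norm(dim=1)
untouched = torch.tensor([i for i in range(10) if i not in (1, 3, 7)])
print(looked_up_norms)
```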

Pull Request resolved: https://github.com/pytorch/pytorch/pull/78560

Approved by: https://github.com/soulitzer , https://github.com/albanD

2022-06-03 19:14:51 +00:00

PyTorch MergeBot

d578197747

Revert "Fix embedding jvp support by making embedding_renorm ignore forward mode AD ( #78560 )"


This reverts commit ce7c7bb2a9 (https://github.com/pytorch/pytorch/pull/78560) on behalf of https://github.com/malfet due to: broke XLA (on CI and trunk), see ce7c7bb2a9

2022-06-02 17:40:34 +00:00

samdow

ce7c7bb2a9

Fix embedding jvp support by making embedding_renorm ignore forward mode AD ( #78560 )


On functorch, we started seeing [embedding forward mode fail](https://github.com/pytorch/functorch/pull/816). From looking at it, we figured out that embedding recently got forward mode support enabled (369d9f4137; see https://github.com/pytorch/pytorch/blob/master/torch/testing/_internal/common_methods_invocations.py#L8877-L8881), so it's not checked.

What was happening is that `embedding_renorm` was using `torch.no_grad()`, which only turns off backward-mode AD, so functorch's jvp tests were still using forward-mode AD during the `embedding_renorm` call. This change makes it so that we don't use forward mode during the `embedding_renorm` call.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/78560

Approved by: https://github.com/soulitzer , https://github.com/albanD

2022-06-02 13:40:21 +00:00

Kshiteej K

4e1f41f66a

[docs][nn] conv: complex support note ( #78351 )


Pull Request resolved: https://github.com/pytorch/pytorch/pull/78351

Approved by: https://github.com/anjali411 , https://github.com/jbschlosser

2022-05-26 20:33:36 +00:00

Natalia Gimelshein

362525724b

type promote clamp ( #77035 )


Fixes #76630

When clamp(Tensor, Tensor) is structured, big parts of this PR won't be needed, but for now let's fix type promotion to make behavior more regular.
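This sketch shows the more regular behavior the change aims for, assuming a PyTorch version that includes it. With tensor bounds, the result dtype follows the usual promotion rules (`torch.result_type` of the operands) instead of staying at the input's dtype.

```python
import torch

x = torch.tensor([1, 2, 3])         # int64
lo = torch.tensor([1.5, 1.5, 1.5])  # float32

y = torch.clamp(x, min=lo)
print(y, y.dtype, torch.result_type(x, lo))
```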

Pull Request resolved: https://github.com/pytorch/pytorch/pull/77035

Approved by: https://github.com/mruberry

2022-05-09 05:54:17 +00:00

vitrioil

f92cddd890

Removed direct doc formatting


Fixes #76034

This does not make python remove all `__doc__` because in some places `__doc__` is assigned to a string.

Example:

04b3313379/torch/nn/modules/conv.py (L174-L233)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/76619

Approved by: https://github.com/albanD

2022-05-02 14:14:33 +00:00

Yuge Zhang

3ac27e78ca

Fix typehint of multi_head_attention_forward


Fixes #76169

Pull Request resolved: https://github.com/pytorch/pytorch/pull/76170

Approved by: https://github.com/jbschlosser

2022-04-27 13:47:43 +00:00

Peter Bell

cb37e7a080

Remove F.pad python implementation


Pull Request resolved: https://github.com/pytorch/pytorch/pull/73433

Approved by: https://github.com/albanD , https://github.com/jbschlosser

2022-04-23 00:13:20 +00:00

vitrioil

29b004be7a

Corrected documentation for supported padding


Fixes #72521

Pull Request resolved: https://github.com/pytorch/pytorch/pull/76117

Approved by: https://github.com/jbschlosser

2022-04-20 17:36:01 +00:00

Mike Ruberry

b09769992f

Improves the OpInfo out= tests


Edit: OpInfos separated into their own PRs to debug an ASAN failure that doesn't identify the failing test properly. This PR now just updates the out tests.

Adds OpInfos for:

- nn.functional.smooth_l1_loss

- nn.functional.l1_loss

- nn.functional.pdist

- nn.functional.binary_cross_entropy

- nn.functional.triplet_margin_loss

- nn.functional.triplet_margin_with_distance_loss

- nn.functional.max_unpool{1, 2, 3}D

- nn.functional.alpha_dropout

- nn.functional.soft_margin_loss

- nn.functional.multilabel_soft_margin_loss

- nn.functional.multilabel_margin_loss

- nn.functional.multi_margin_loss

- nn.functional.margin_ranking_loss

These OpInfos were taken from https://github.com/pytorch/pytorch/pull/67560 , https://github.com/pytorch/pytorch/pull/67823 , https://github.com/pytorch/pytorch/pull/68625 , and https://github.com/pytorch/pytorch/pull/67079 . The sample input update from https://github.com/pytorch/pytorch/pull/67017 is also rolled into this PR.

cc @zou3519 @nikitaved @pmeier @vfdev-5 @dagitses

Pull Request resolved: https://github.com/pytorch/pytorch/pull/75782

Approved by: https://github.com/ngimel

2022-04-15 06:16:01 +00:00

Edward Z. Yang

0a1bc5f501

Miscellaneous __torch_function__ fixes


I figured these out by unconditionally turning on a no-op torch function mode on the test suite and then fixing errors as they showed up. Here's what I found:

- _parse_to failed an internal assert when __torch_function__'ed because it claims its name is "to" to the argument parser; added a name override so we know how to find the correct name

- Infix operator magic methods on Tensor did not uniformly handle __torch_function__ and TypeError to NotImplemented. Now, we always do the __torch_function__ handling in _wrap_type_error_to_not_implemented, and your implementation of __torch_function__ gets its TypeErrors converted to NotImplemented (for better or for worse; see https://github.com/pytorch/pytorch/issues/75462 )

- A few places where code was testing whether an object was Tensor-like in the wrong way now use is_tensor_like (in grad and in distributions). Also updated the docs for has_torch_function to push people to use is_tensor_like.

- is_grads_batched was dropped from grad in handle_torch_function; now fixed

- Report that you have a torch function even if torch function is disabled, if a mode is enabled. This makes it possible for a mode to return NotImplemented, pass to a subclass which does some processing, and then pass back to the mode even after the subclass disables __torch_function__ (so the tensors are treated "as if" they are regular Tensors). This brings the C++ handling behavior in line with the Python behavior.

- Make the Python implementation of the overloaded types computation match the C++ version: when torch function is disabled, there are no overloaded types (because they all report they are not overloaded).
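This gives context for `is_tensor_like` and dispatch. Any object whose class defines `__torch_function__` counts as Tensor-like, and torch functions hand control to it. `LoggingWrapper` is a hypothetical, minimal example of that protocol.

```python
import torch
from torch.overrides import is_tensor_like


class LoggingWrapper:
    """Hypothetical Tensor-like wrapper that records which torch functions it sees."""

    calls = []

    def __init__(self, data: torch.Tensor):
        self.data = data

    @classmethod
    def __torch_function__(cls, func, types, args=(), kwargs=None):
        kwargs = kwargs or {}
        cls.calls.append(func)
        # Unwrap our instances and defer to the real implementation.
        unwrapped = [a.data if isinstance(a, cls) else a for a in args]
        return func(*unwrapped, **kwargs)


w = LoggingWrapper(torch.tensor([1.0, 2.0]))
result = torch.add(w, 1)  # dispatches to LoggingWrapper.__torch_function__
print(result, LoggingWrapper.calls)
```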

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Pull Request resolved: https://github.com/pytorch/pytorch/pull/75484

Approved by: https://github.com/zou3519

2022-04-11 16:52:16 +00:00

Scott Wolchok

87f40ee6d6

[PyTorch] Existing MHA: fuse the attn_mask addition ( #73219 )


Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/73219

Saw a report that this elementwise add is causing overhead. IIUC this is easy to fuse?

ghstack-source-id: 152549975

Test Plan:

CI, review

Ran benchmark_transformers.par mha --batch-size 64 --max-sequence-length 128 --avg-sequence-length 256 --large --use-real-data-distribution --use-mask

and looked at the PT time number

```

before:

B=64, T=128, Half=True, GPU=True, Seed=1234, Padded tokens=54.92%, Use Mask=True PT Time: 1.24ms, NativePT Time: 1000000000.00ms, HF Time: 1.10ms, PT FLOPS: 59.07TFLOP/s, NativePT FLOPS: 0.00TFLOP/s, HF FLOPS: 66.46TFLOP/s

B=64, T=128, Half=True, GPU=True, Seed=1234, Padded tokens=54.92%, Use Mask=True PT Time: 1.23ms, NativePT Time: 1000000000.00ms, HF Time: 1.09ms, PT FLOPS: 59.57TFLOP/s, NativePT FLOPS: 0.00TFLOP/s, HF FLOPS: 66.75TFLOP/s

B=64, T=128, Half=True, GPU=True, Seed=1234, Padded tokens=54.92%, Use Mask=True PT Time: 1.24ms, NativePT Time: 1000000000.00ms, HF Time: 1.09ms, PT FLOPS: 58.87TFLOP/s, NativePT FLOPS: 0.00TFLOP/s, HF FLOPS: 66.77TFLOP/s

after:

B=64, T=128, Half=True, GPU=True, Seed=1234, Padded tokens=54.92%, Use Mask=True PT Time: 1.22ms, NativePT Time: 1000000000.00ms, HF Time: 1.10ms, PT FLOPS: 60.07TFLOP/s, NativePT FLOPS: 0.00TFLOP/s, HF FLOPS: 66.51TFLOP/s

B=64, T=128, Half=True, GPU=True, Seed=1234, Padded tokens=54.92%, Use Mask=True PT Time: 1.22ms, NativePT Time: 1000000000.00ms, HF Time: 1.09ms, PT FLOPS: 59.80TFLOP/s, NativePT FLOPS: 0.00TFLOP/s, HF FLOPS: 66.69TFLOP/s

B=64, T=128, Half=True, GPU=True, Seed=1234, Padded tokens=54.92%, Use Mask=True PT Time: 1.21ms, NativePT Time: 1000000000.00ms, HF Time: 1.09ms, PT FLOPS: 60.21TFLOP/s, NativePT FLOPS: 0.00TFLOP/s, HF FLOPS: 66.86TFLOP/s

```

Inspected a Kineto trace and confirmed that an elementwise add was fused into baddbmm.
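This sketch shows the fusion in question, with hypothetical shapes. `torch.baddbmm(input, batch1, batch2)` computes `input + batch1 @ batch2` in a single call. That lets an additive float `attn_mask` ride along with the score matmul instead of needing a separate elementwise add.

```python
import math

import torch

torch.manual_seed(0)
B, L, S, E = 4, 5, 6, 8
q, k = torch.randn(B, L, E), torch.randn(B, S, E)
attn_mask = torch.randn(B, L, S)  # additive float mask, one per batch entry

q_scaled = q / math.sqrt(E)

# Unfused: bmm followed by a separate elementwise add.
unfused = torch.bmm(q_scaled, k.transpose(-2, -1)) + attn_mask
# Fused: baddbmm computes beta * input + alpha * (batch1 @ batch2) (beta = alpha = 1).
fused = torch.baddbmm(attn_mask, q_scaled, k.transpose(-2, -1))
print((fused - unfused).abs().max())
```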

Additional opportunity: I see a copy_ inside baddbmm that wasn't happening with the bmm path and I'm not sure why. Perhaps something went wrong with the structured kernels port by ezyang?

Reviewed By: ezyang

Differential Revision: D34160547

fbshipit-source-id: 78d406fb035e6f3bf13af2c9443a886eada35ac4

(cherry picked from commit aaffc39b24058742cb9ae42105f95b3eafe9d7f5)

2022-04-04 20:31:22 +00:00

Peter Bell

7f051b4d2b

Implement F.pad in ATen


This moves the C++ torch pad function into ATen proper. Once the forward-compatibility period is over, the Python interface can use this directly.
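This sketch shows the semantics the ATen version has to keep, via the public `F.pad`. Only the default constant mode is used here. The pad tuple lists `(before, after)` pairs, starting from the last dimension.

```python
import torch
import torch.nn.functional as F

x = torch.zeros(1, 2, 3)

# Pairs start from the LAST dimension: (left, right) for dim -1,
# then (top, bottom) for dim -2, and so on.
a = F.pad(x, (1, 2))          # last dim: 3 -> 1 + 3 + 2 = 6
b = F.pad(x, (1, 1, 2, 0))    # last dim: 3 -> 5; second-to-last: 2 -> 4
c = F.pad(torch.ones(1, 3), (1, 1), value=7.0)  # constant mode fills with `value`
print(a.shape, b.shape, c)
```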

Pull Request resolved: https://github.com/pytorch/pytorch/pull/73431

Approved by: https://github.com/ezyang

2022-04-01 01:10:12 +00:00

Davit Kobaladze

8e12d2bf25

fixes torch.jit.script lp_pool bug. ( #73287 )


Summary:

Fixes https://github.com/pytorch/pytorch/issues/60258

I used the solution proposed in https://github.com/pytorch/pytorch/issues/61275 . His solution failed unit tests and there was no progress after 08/07/2021. I'm willing to fix problems if they arise during CI.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/73287

Reviewed By: navahgar, zou3519

Differential Revision: D35057812

Pulled By: eellison

fbshipit-source-id: 8e82e9f73b9536979aecf476c5c65336cdffc93a

(cherry picked from commit e85e912a4edec1111623c5cbbba4171fe3bc5b1d)

2022-03-28 23:16:07 +00:00

Peter Bell

f86bb2d6e4

Implement _pad_circular in ATen


Closes #44459

This migrates the Python implementation of `_pad_circular` to ATen and removes the old C++ implementation that had diverged from Python.

Note that `pad` can't actually use this until the forward-compatibility period is over.
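This sketch shows what circular padding computes. Values wrap around, so the left pad is taken from the end of the signal and the right pad from its start. The result is equivalent to concatenating slices.

```python
import torch
import torch.nn.functional as F

x = torch.arange(4.0).view(1, 1, 4)  # [[[0, 1, 2, 3]]], shape (N, C, W)

# Pad 1 on the left and 2 on the right, wrapping around.
y = F.pad(x, (1, 2), mode="circular")
manual = torch.cat([x[..., -1:], x, x[..., :2]], dim=-1)
print(y)
```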

Pull Request resolved: https://github.com/pytorch/pytorch/pull/73410

Approved by: https://github.com/ezyang

2022-03-25 02:09:01 +00:00

Kushashwa Ravi Shrimali

452c26bbeb

Fix functional.max_poolNd warning spam in the CI


Fixes https://github.com/pytorch/pytorch/issues/71257 .

Warnings have been removed, please see [this](https://github.com/pytorch/pytorch/pull/71258#issuecomment-1058503649 ) comment.

cc: @Lezcano @jbschlosser @zou3519

Pull Request resolved: https://github.com/pytorch/pytorch/pull/71258

Approved by: https://github.com/Lezcano , https://github.com/jbschlosser

2022-03-04 18:42:23 +00:00

Scott Wolchok

28339ddc25

[PyTorch] Hit fused addmm path in linear() for existing MHA ( #72871 )


Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/72871

We do this same trick in the native MHA implementation; backport it for purposes of fair comparison.
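The "fused addmm path" refers to computing `bias + input @ weight.T` in one `addmm` call, which `linear` can do for 2-D inputs with a bias. Below is a sketch of that equivalence with hypothetical shapes.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
x = torch.randn(5, 8)  # 2-D input: (batch, in_features)
w = torch.randn(3, 8)  # (out_features, in_features)
b = torch.randn(3)

# One fused call: b + x @ w.T (bias broadcast over the batch rows)
fused = torch.addmm(b, x, w.t())
print((fused - F.linear(x, w, b)).abs().max())
```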

ghstack-source-id: 149526858

Test Plan: CI

Reviewed By: ngimel

Differential Revision: D34176090

fbshipit-source-id: 8b578c29c4dcf0d85bae74dfbbb82db9a8f32dc7

(cherry picked from commit fd50170935)

2022-02-22 19:33:46 +00:00

Joel Schlosser

f670179c0a

Fix doc regressions for various modules and functional forms ( #73014 )


Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/73014

Fixes #72501

Fixes #72502

Fixes #72503

Fixes #72504

Fixes #72505

Fixes #72506

Fixes #72507

Fixes #72509

Fixes #72510

Test Plan: Imported from OSS

Reviewed By: albanD

Differential Revision: D34305640

Pulled By: jbschlosser

fbshipit-source-id: 62f341633fdb0316eaa346cf7247865290eb830a

(cherry picked from commit 8362d264e7)

2022-02-17 22:40:18 +00:00

Vitaly Fedyunin

81fbeea760

Add docstrings to native_channel_shuffle ( #72919 )


Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/72919

Test Plan: Imported from OSS

Reviewed By: bdhirsh

Differential Revision: D34274717

Pulled By: VitalyFedyunin

fbshipit-source-id: fa42f91ef2335e2594b19ef65d914c711f7a94fd

(cherry picked from commit a6f6fe9112)

2022-02-17 02:33:08 +00:00

Ryan Spring

4f8b986e28

Implement Tanh Gelu Approximation ( #61439 )


Summary:

1. Implements https://github.com/pytorch/pytorch/issues/39853

2. Adds an `approximate` option to Gelu ('none' or 'tanh')

3. Enables Tanh Gelu approximation

4. Adds double backward support for Gelu

5. Enables Tanh Gelu in NvFuser

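```
import math

import torch


def gelu(x: torch.Tensor, approximate: str = 'none') -> torch.Tensor:
    if approximate == 'tanh':
        # sqrt(2/pi) = 0.7978845608028654
        return 0.5 * x * (1.0 + torch.tanh(0.7978845608028654 * (x + 0.044715 * torch.pow(x, 3.0))))
    else:
        # Exact GELU: x * Phi(x), where Phi is the standard normal CDF
        return x * 0.5 * (1.0 + torch.erf(x / math.sqrt(2.0)))
```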
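To make the difference between the two branches concrete, here is a scalar, pure-Python sketch of both formulas, using `math.erf` for the exact normal CDF. `gelu_exact` and `gelu_tanh` are illustrative names, not PyTorch APIs; the loop prints both values side by side so the size of the approximation error is visible.

```python
import math


def gelu_exact(x: float) -> float:
    # x * Phi(x), with Phi(x) = 0.5 * (1 + erf(x / sqrt(2)))
    return x * 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def gelu_tanh(x: float) -> float:
    # 0.5 * x * (1 + tanh(sqrt(2/pi) * (x + 0.044715 * x^3)))
    inner = math.sqrt(2.0 / math.pi) * (x + 0.044715 * x ** 3)
    return 0.5 * x * (1.0 + math.tanh(inner))


for v in (-3.0, -1.0, 0.0, 0.5, 1.0, 3.0):
    print(f"{v:+.1f}  exact={gelu_exact(v):+.6f}  tanh={gelu_tanh(v):+.6f}")
```

In PyTorch the branch is selected with `torch.nn.functional.gelu(x, approximate='tanh')`.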

Linking XLA PR - https://github.com/pytorch/xla/pull/3039

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61439

Reviewed By: VitalyFedyunin

Differential Revision: D33894937

Pulled By: jbschlosser

fbshipit-source-id: b65e8fb6ea66168af8f34f45ed50e92737a33851

(cherry picked from commit 6e986f91a9)

2022-02-14 03:40:32 +00:00

kshitij12345

02f6226bff

[fix] Dropout2d-3d no-batch-dim ( #69885 )


Summary:

Fixes https://github.com/pytorch/pytorch/issues/69801

TODO:

* [x] Update C++ API

cc albanD mruberry jbschlosser walterddr kshitij12345

Pull Request resolved: https://github.com/pytorch/pytorch/pull/69885

Reviewed By: mruberry

Differential Revision: D33175470

Pulled By: jbschlosser

fbshipit-source-id: c9d7d9e0f59ba290a0157725c338a345f3d58b9f

(cherry picked from commit 7e4271a156)

2022-02-02 16:40:32 +00:00

pejato

b8a4ee5e35

Clean up old warnings in F.interpolate ( #72093 )


Summary:

Fixes https://github.com/pytorch/pytorch/issues/71720

This PR removes the old warnings for `recompute_scale_factor` and `align_corners`.

Looking at this, I realize that the tests I modified don't really catch whether or not a warning is raised for `recompute_scale_factor`. If desired, I can add a couple of lines to those tests that pass a floating-point value in the `scale_factor` kwarg, along with `recompute_scale_factor=None`.

Let me know how this looks, thanks so much!

Pull Request resolved: https://github.com/pytorch/pytorch/pull/72093

Reviewed By: mruberry

Differential Revision: D33917615

Pulled By: albanD

fbshipit-source-id: e822f0a15b813ecf312cdc6ed0b693e7f1d1ca89

(cherry picked from commit c14852b85c)

2022-02-01 21:18:29 +00:00

Peter Bell

e8d226cd9a

Remove some unnecessary python functional wrappers ( #61608 )


Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61608

See #61544 for an example of issues created by functional wrappers. In this case, these are directly wrapping the native function with no added functionality. One exception was `bilinear`, which was just missing the default argument in C++ but was otherwise the same.

I've kept the symbol `torch.functional.istft` because it looks like public API, but it could just as easily be moved to `_torch_docs.py`.

Test Plan: Imported from OSS

Reviewed By: ngimel

Differential Revision: D31401361

Pulled By: albanD

fbshipit-source-id: 162b74d0b2d4f2e5c4834687a94541960cefdd52

(cherry picked from commit 700cd73ca1)

2022-02-01 16:59:26 +00:00

Nikita Shulga

74c44ba9d6

Revert D33850228: [pytorch][PR] Implement Tanh Gelu Approximation


Test Plan: revert-hammer

Differential Revision:

D33850228 (23d03025dc23d03025dcc9efb58223

2022-01-31 17:44:19 +00:00

Ryan Spring

23d03025dc

Implement Tanh Gelu Approximation ( #61439 )


Summary:

1. Implements https://github.com/pytorch/pytorch/issues/39853

2. Adds an `approximate` argument to Gelu (a string, `'none'` or `'tanh'`)

3. Enables the Tanh Gelu approximation

4. Adds double backward support for Gelu

5. Enables Tanh Gelu in NvFuser

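```
import math

import torch


def gelu(x: torch.Tensor, approximate: str = 'none') -> torch.Tensor:
    if approximate == 'tanh':
        # sqrt(2/pi) = 0.7978845608028654
        return 0.5 * x * (1.0 + torch.tanh(0.7978845608028654 * (x + 0.044715 * torch.pow(x, 3.0))))
    else:
        # Exact GELU: x * Phi(x), where Phi is the standard normal CDF
        return x * 0.5 * (1.0 + torch.erf(x / math.sqrt(2.0)))
```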

Linking XLA PR - https://github.com/pytorch/xla/pull/3039

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61439

Reviewed By: cpuhrsch

Differential Revision: D33850228

Pulled By: jbschlosser

fbshipit-source-id: 3cc33fb298e480d7ecc5c67716da019d60c6ab33

(cherry picked from commit 3a53b3e94f)

2022-01-31 17:07:45 +00:00

vfdev

63429bf4b3

Removed JIT FC tweaks for interpolation options ( #71937 )


Summary:

Description:

- Removed JIT FC tweaks for interpolation options: nearest-exact and antialiasing

They were added in

- https://github.com/pytorch/pytorch/pull/64501 (Sept 04 2021)

- https://github.com/pytorch/pytorch/pull/65142 (Sept 16 2021)

cc jbschlosser

Pull Request resolved: https://github.com/pytorch/pytorch/pull/71937

Reviewed By: mrshenli

Differential Revision: D33845502

Pulled By: jbschlosser

fbshipit-source-id: 8a94454fd643cd2aef21b06689f72a0f16620d30

(cherry picked from commit b21173d64c)

2022-01-28 19:56:59 +00:00

Joel Schlosser

cb823d9f07

Revert D33744717: [pytorch][PR] Implement Tanh Gelu Approximation


Test Plan: revert-hammer

Differential Revision:

D33744717 (f499ab9ceff499ab9cefe9fb2d1db1

2022-01-28 18:35:01 +00:00

Ryan Spring

f499ab9cef

Implement Tanh Gelu Approximation ( #61439 )


Summary:

1. Implements https://github.com/pytorch/pytorch/issues/39853

2. Adds an `approximate` argument to Gelu (a string, `'none'` or `'tanh'`)

3. Enables the Tanh Gelu approximation

4. Adds double backward support for Gelu

5. Enables Tanh Gelu in NvFuser

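```
import math

import torch


def gelu(x: torch.Tensor, approximate: str = 'none') -> torch.Tensor:
    if approximate == 'tanh':
        # sqrt(2/pi) = 0.7978845608028654
        return 0.5 * x * (1.0 + torch.tanh(0.7978845608028654 * (x + 0.044715 * torch.pow(x, 3.0))))
    else:
        # Exact GELU: x * Phi(x), where Phi is the standard normal CDF
        return x * 0.5 * (1.0 + torch.erf(x / math.sqrt(2.0)))
```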

Linking XLA PR - https://github.com/pytorch/xla/pull/3039

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61439

Reviewed By: mikaylagawarecki

Differential Revision: D33744717

Pulled By: jbschlosser

fbshipit-source-id: d64532a562ed53247bb4fa52bb16722634d5c187

(cherry picked from commit 4713dd9cca)

2022-01-28 16:59:09 +00:00

kshitij12345

2981534f54

[nn] cross_entropy: no batch dim support ( #71055 )


Summary:

Reference: https://github.com/pytorch/pytorch/issues/60585
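Without a batch dimension, `F.cross_entropy` accepts a 1-D input of C class scores together with a 0-D target class index. As a pure-Python sketch of the value computed for one such sample (the negated log-softmax of the target class): `cross_entropy_unbatched` is a hypothetical helper that ignores `weight`, `ignore_index` and `label_smoothing`.

```python
import math
from typing import Sequence


def cross_entropy_unbatched(logits: Sequence[float], target: int) -> float:
    # Numerically stable log-sum-exp: subtract the max before exponentiating.
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    # -log(softmax(logits)[target]) == logsumexp(logits) - logits[target]
    return log_sum_exp - logits[target]


print(cross_entropy_unbatched([2.0, 0.5, -1.0], target=0))
```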

cc albanD mruberry jbschlosser walterddr kshitij12345

Pull Request resolved: https://github.com/pytorch/pytorch/pull/71055

Reviewed By: anjali411

Differential Revision: D33567403

Pulled By: jbschlosser

fbshipit-source-id: 4d0a311ad7419387c4547e43e533840c8b6d09d8

2022-01-13 14:48:51 -08:00

George Qi

d7db5fb462

ctc loss no batch dim support ( #70092 )


Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/70092

Test Plan: Imported from OSS

Reviewed By: jbschlosser

Differential Revision: D33280068

Pulled By: george-qi

fbshipit-source-id: 3278fb2d745a396fe27c00fb5f40df0e7f584f81

2022-01-07 14:33:22 -08:00

Joel Schlosser

e6befbe85c

Add flag to optionally average output attention weights across heads ( #70055 )


Summary:

Fixes https://github.com/pytorch/pytorch/issues/47583
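In `nn.MultiheadAttention.forward` this surfaces as the `average_attn_weights` argument: when true, the returned weights are averaged over heads, with shape (N, L, S), or (L, S) for unbatched input; when false, per-head weights of shape (N, num_heads, L, S) are returned. The averaging is a plain mean over the head axis, sketched here in pure Python for one batch element (`average_over_heads` is an illustrative helper, not the PyTorch code path).

```python
from typing import List

Matrix = List[List[float]]


def average_over_heads(per_head: List[Matrix]) -> Matrix:
    """Mean over the head axis: (num_heads, L, S) -> (L, S)."""
    num_heads = len(per_head)
    rows, cols = len(per_head[0]), len(per_head[0][0])
    return [
        [sum(per_head[h][i][j] for h in range(num_heads)) / num_heads for j in range(cols)]
        for i in range(rows)
    ]


heads = [
    [[1.0, 0.0], [0.5, 0.5]],  # head 0: each row is a softmax over the S source positions
    [[0.0, 1.0], [0.5, 0.5]],  # head 1
]
print(average_over_heads(heads))  # [[0.5, 0.5], [0.5, 0.5]]
```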

Pull Request resolved: https://github.com/pytorch/pytorch/pull/70055

Reviewed By: bhosmer

Differential Revision: D33457866

Pulled By: jbschlosser

fbshipit-source-id: 17746b3668b0148c1e1ed8333227b7c42f1e3bf5

2022-01-06 17:32:37 -08:00

kshitij12345

1aa98c7540

[docs] multi_head_attention_forward no-batch dim support ( #70590 )


Summary:

No-batch-dim support was added in https://github.com/pytorch/pytorch/issues/67176

Pull Request resolved: https://github.com/pytorch/pytorch/pull/70590

Reviewed By: VitalyFedyunin

Differential Revision: D33405283

Pulled By: jbschlosser

fbshipit-source-id: 86217d7d540184fd12f3a9096605d2b1e9aa313e

2022-01-05 08:26:25 -08:00

vfdev

d2abf3f981

Added antialias flag to interpolate (CPU only, bicubic) ( #68819 )


Summary:

Description:

- Added antialias flag to interpolate (CPU only)

- forward and backward for bicubic mode

- added tests

Previous PR for bilinear, https://github.com/pytorch/pytorch/pull/65142
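The antialiased path follows the PIL approach: when downsampling by `scale = in_size / out_size > 1`, the interpolation kernel is stretched by `scale`, so each output pixel averages a wider input window. The pure-Python sketch below computes the normalized weights for one output pixel along one axis. It is an illustration under assumptions, not the kernel moved from torchvision: `aa_weights` is hypothetical, the half-pixel center convention is assumed, and the cubic coefficient `a = -0.5` is assumed to match PIL.

```python
from typing import Callable, List, Tuple


def triangle(x: float) -> float:
    # Bilinear ("tent") filter with support 1.
    x = abs(x)
    return 1.0 - x if x < 1.0 else 0.0


def cubic(x: float, a: float = -0.5) -> float:
    # Keys cubic convolution filter with support 2; a = -0.5 is an assumption (PIL's choice).
    x = abs(x)
    if x < 1.0:
        return ((a + 2.0) * x - (a + 3.0)) * x * x + 1.0
    if x < 2.0:
        return (((x - 5.0) * x + 8.0) * x - 4.0) * a
    return 0.0


def aa_weights(out_index: int, in_size: int, out_size: int,
               kernel: Callable[[float], float], support: float) -> Tuple[int, List[float]]:
    """Return (first input index, normalized weights) for one output pixel on one axis."""
    scale = in_size / out_size
    filter_scale = max(scale, 1.0)        # widen the kernel only when downsampling
    half_width = support * filter_scale   # half-width of the input window
    center = (out_index + 0.5) * scale    # output pixel center in input coordinates
    xmin = max(int(center - half_width + 0.5), 0)
    xmax = min(int(center + half_width + 0.5), in_size)
    weights = [kernel((x - center + 0.5) / filter_scale) for x in range(xmin, xmax)]
    total = sum(weights)
    return xmin, [w / total for w in weights]


start, w = aa_weights(0, 8, 2, triangle, support=1.0)
print(start, [round(v, 4) for v in w])
```

Passing `cubic` with `support=2.0` gives the bicubic case; with `scale <= 1` (upsampling) the window is not widened, so the weights reduce to ordinary interpolation.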

### Benchmarks

<details>

<summary>

Forward pass, CPU. PTH interpolation vs PIL

</summary>

Cases:

- PTH RGB 3 Channels, float32 vs PIL RGB uint8 (apples vs pears)

- PTH 1 Channel, float32 vs PIL 1 Channel Float

Code: https://gist.github.com/vfdev-5/b173761a567f2283b3c649c3c0574112

```

Torch config: PyTorch built with:
- GCC 9.3
- C++ Version: 201402
- OpenMP 201511 (a.k.a. OpenMP 4.5)
- CPU capability usage: AVX2
- CUDA Runtime 11.1
- NVCC architecture flags: -gencode;arch=compute_61,code=sm_61
- CuDNN 8.0.5

- Build settings: BUILD_TYPE=Release, CUDA_VERSION=11.1, CUDNN_VERSION=8.0.5, CXX_COMPILER=/usr/bin/c++, CXX_FLAGS= -Wno-deprecated -fvisibility-inlines-hidden -DUSE_PTHREADPOOL -fopenmp -DNDEBUG -DUSE_KINETO -DUSE_PYTORCH_QNNPACK -DSYMBOLICATE_MOBILE_DEBUG_HANDLE -DEDGE_PROFILER_USE_KINETO -O2 -fPIC -Wno-narrowing -Wall -Wextra -Werror=return-type -Wno-missing-field-initializers -Wno-type-limits -Wno-array-bounds -Wno-unknown-pragmas -Wno-sign-compare -Wno-unused-parameter -Wno-unused-function -Wno-unused-result -Wno-unused-local-typedefs -Wno-strict-overflow -Wno-strict-aliasing -Wno-error=deprecated-declarations -Wno-stringop-overflow -Wno-psabi -Wno-error=pedantic -Wno-error=redundant-decls -Wno-error=old-style-cast -fdiagnostics-color=always -faligned-new -Wno-unused-but-set-variable -Wno-maybe-uninitialized -fno-math-errno -fno-trapping-math -Werror=format -Werror=cast-function-type -Wno-stringop-overflow, PERF_WITH_AVX=1, PERF_WITH_AVX2=1, PERF_WITH_AVX512=1, TORCH_VERSION=1.11.0, USE_CUDA=1, USE_CUDNN=1, USE_EIGEN_FOR_BLAS=ON, USE_EXCEPTION_PTR=1, USE_GFLAGS=OFF, USE_GLOG=OFF, USE_MKL=OFF, USE_MKLDNN=OFF, USE_MPI=OFF, USE_NCCL=ON, USE_NNPACK=0, USE_OPENMP=ON, USE_ROCM=OFF,

Num threads: 1

[------------------- Downsampling (bicubic): torch.Size([1, 3, 906, 438]) -> (320, 196) -------------------]
                                             | Reference, PIL 8.4.0, mode: RGB | 1.11.0a0+gitb0bdf58
1 threads: -------------------------------------------------------------------------------------------------
  channels_first contiguous torch.float32    | 4.5 | 5.2
  channels_last non-contiguous torch.float32 | 4.5 | 5.3

Times are in milliseconds (ms).

[------------------- Downsampling (bicubic): torch.Size([1, 3, 906, 438]) -> (460, 220) -------------------]
                                             | Reference, PIL 8.4.0, mode: RGB | 1.11.0a0+gitb0bdf58
1 threads: -------------------------------------------------------------------------------------------------
  channels_first contiguous torch.float32    | 5.7 | 6.4
  channels_last non-contiguous torch.float32 | 5.7 | 6.4

Times are in milliseconds (ms).

[------------------- Downsampling (bicubic): torch.Size([1, 3, 906, 438]) -> (120, 96) --------------------]
                                             | Reference, PIL 8.4.0, mode: RGB | 1.11.0a0+gitb0bdf58
1 threads: -------------------------------------------------------------------------------------------------
  channels_first contiguous torch.float32    | 3.0 | 4.0
  channels_last non-contiguous torch.float32 | 2.9 | 4.1

Times are in milliseconds (ms).

[------------------ Downsampling (bicubic): torch.Size([1, 3, 906, 438]) -> (1200, 196) -------------------]
                                             | Reference, PIL 8.4.0, mode: RGB | 1.11.0a0+gitb0bdf58
1 threads: -------------------------------------------------------------------------------------------------
  channels_first contiguous torch.float32    | 14.7 | 17.1
  channels_last non-contiguous torch.float32 | 14.8 | 17.2

Times are in milliseconds (ms).

[------------------ Downsampling (bicubic): torch.Size([1, 3, 906, 438]) -> (120, 1200) -------------------]
                                             | Reference, PIL 8.4.0, mode: RGB | 1.11.0a0+gitb0bdf58
1 threads: -------------------------------------------------------------------------------------------------
  channels_first contiguous torch.float32    | 3.5 | 3.9
  channels_last non-contiguous torch.float32 | 3.5 | 3.9

Times are in milliseconds (ms).

[---------- Downsampling (bicubic): torch.Size([1, 1, 906, 438]) -> (320, 196) ---------]
                           | Reference, PIL 8.4.0, mode: F | 1.11.0a0+gitb0bdf58
1 threads: ------------------------------------------------------------------------------
  contiguous torch.float32 | 2.4 | 1.8

Times are in milliseconds (ms).

[---------- Downsampling (bicubic): torch.Size([1, 1, 906, 438]) -> (460, 220) ---------]
                           | Reference, PIL 8.4.0, mode: F | 1.11.0a0+gitb0bdf58
1 threads: ------------------------------------------------------------------------------
  contiguous torch.float32 | 3.1 | 2.2

Times are in milliseconds (ms).

[---------- Downsampling (bicubic): torch.Size([1, 1, 906, 438]) -> (120, 96) ----------]
                           | Reference, PIL 8.4.0, mode: F | 1.11.0a0+gitb0bdf58
1 threads: ------------------------------------------------------------------------------
  contiguous torch.float32 | 1.6 | 1.4

Times are in milliseconds (ms).

[--------- Downsampling (bicubic): torch.Size([1, 1, 906, 438]) -> (1200, 196) ---------]
                           | Reference, PIL 8.4.0, mode: F | 1.11.0a0+gitb0bdf58
1 threads: ------------------------------------------------------------------------------
  contiguous torch.float32 | 7.9 | 5.7

Times are in milliseconds (ms).

[--------- Downsampling (bicubic): torch.Size([1, 1, 906, 438]) -> (120, 1200) ---------]
                           | Reference, PIL 8.4.0, mode: F | 1.11.0a0+gitb0bdf58
1 threads: ------------------------------------------------------------------------------
  contiguous torch.float32 | 1.7 | 1.3

Times are in milliseconds (ms).

```

</details>

Code is moved from torchvision: https://github.com/pytorch/vision/pull/3810 and https://github.com/pytorch/vision/pull/4208

Pull Request resolved: https://github.com/pytorch/pytorch/pull/68819

Reviewed By: mikaylagawarecki

Differential Revision: D33339117

Pulled By: jbschlosser

fbshipit-source-id: 6a0443bbba5439f52c7dbc1be819b75634cf67c4

2021-12-29 14:04:43 -08:00

srijan789

73b5b6792f

Adds reduction args to signature of F.multilabel_soft_margin_loss docs ( #70420 )


Summary:

Fixes https://github.com/pytorch/pytorch/issues/70301
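For reference, the per-sample loss documented for `F.multilabel_soft_margin_loss` is `-(1/C) * sum_i [y_i * log(sigmoid(x_i)) + (1 - y_i) * log(sigmoid(-x_i))]`, and `reduction` selects between the per-sample values (`'none'`), their mean (`'mean'`, the default) and their sum (`'sum'`). A pure-Python sketch: `multilabel_soft_margin` is a hypothetical helper and omits the `weight` argument.

```python
import math
from typing import List, Sequence, Union


def log_sigmoid(v: float) -> float:
    # Numerically stable log(1 / (1 + exp(-v))).
    return -math.log1p(math.exp(-v)) if v >= 0 else v - math.log1p(math.exp(v))


def multilabel_soft_margin(inputs: Sequence[Sequence[float]],
                           targets: Sequence[Sequence[float]],
                           reduction: str = "mean") -> Union[float, List[float]]:
    per_sample = []
    for x, y in zip(inputs, targets):
        s = sum(yi * log_sigmoid(xi) + (1.0 - yi) * log_sigmoid(-xi) for xi, yi in zip(x, y))
        per_sample.append(-s / len(x))  # average over the C classes
    if reduction == "none":
        return per_sample
    if reduction == "sum":
        return sum(per_sample)
    if reduction == "mean":
        return sum(per_sample) / len(per_sample)
    raise ValueError(f"unknown reduction: {reduction}")


print(multilabel_soft_margin([[2.0, -1.0, 0.5]], [[1.0, 0.0, 1.0]]))
```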

Pull Request resolved: https://github.com/pytorch/pytorch/pull/70420

Reviewed By: gchanan

Differential Revision: D33336924

Pulled By: jbschlosser

fbshipit-source-id: 18189611b3fc1738900312efe521884bced42666

2021-12-28 09:48:05 -08:00

George Qi

7c690ef1c2

FractionalMaxPool3d with no_batch_dim support ( #69732 )


Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/69732

Test Plan: Imported from OSS

Reviewed By: jbschlosser

Differential Revision: D33280090

Pulled By: george-qi

fbshipit-source-id: aaf90a372b6d80da0554bad28d56436676f9cb89

2021-12-22 14:30:32 -08:00

rohitgr7

78bea1bb66

update example in classification losses ( #69816 )


Summary:

Just updated a few examples that were either failing or raising deprecation warnings.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/69816

Reviewed By: bdhirsh

Differential Revision: D33217585

Pulled By: albanD

fbshipit-source-id: c6804909be74585c8471b8166b69e6693ad62ca7

2021-12-21 02:46:48 -08:00

kshitij12345

e8d5c7cf7f

[nn] mha : no-batch-dim support (python) ( #67176 )


Summary:

Reference: https://github.com/pytorch/pytorch/issues/60585

* [x] Update docs

* [x] Tests for shape checking

Tests take roughly 20 s on the system I use. Below are the timings for the 20 slowest tests.

```

pytest test/test_modules.py -k _multih --durations=20
============================================================================================== test session starts ===============================================================================================
platform linux -- Python 3.10.0, pytest-6.2.5, py-1.10.0, pluggy-1.0.0
rootdir: /home/kshiteej/Pytorch/pytorch_no_batch_mha, configfile: pytest.ini
plugins: hypothesis-6.23.2, repeat-0.9.1
collected 372 items / 336 deselected / 36 selected

test/test_modules.py ..............ssssssss.............. [100%]

================================================================================================ warnings summary ================================================================================================
../../.conda/envs/pytorch-cuda-dev/lib/python3.10/site-packages/torch/backends/cudnn/__init__.py:73
test/test_modules.py::TestModuleCUDA::test_factory_kwargs_nn_MultiheadAttention_cuda_float32
  /home/kshiteej/.conda/envs/pytorch-cuda-dev/lib/python3.10/site-packages/torch/backends/cudnn/__init__.py:73: UserWarning: PyTorch was compiled without cuDNN/MIOpen support. To use cuDNN/MIOpen, rebuild PyTorch making sure the library is visible to the build system.
    warnings.warn(

-- Docs: https://docs.pytest.org/en/stable/warnings.html
============================================================================================== slowest 20 durations ==============================================================================================
8.66s call test/test_modules.py::TestModuleCUDA::test_gradgrad_nn_MultiheadAttention_cuda_float64
2.02s call test/test_modules.py::TestModuleCPU::test_gradgrad_nn_MultiheadAttention_cpu_float64
1.89s call test/test_modules.py::TestModuleCUDA::test_grad_nn_MultiheadAttention_cuda_float64
1.01s call test/test_modules.py::TestModuleCUDA::test_factory_kwargs_nn_MultiheadAttention_cuda_float32
0.51s call test/test_modules.py::TestModuleCPU::test_grad_nn_MultiheadAttention_cpu_float64
0.46s call test/test_modules.py::TestModuleCUDA::test_forward_nn_MultiheadAttention_cuda_float32
0.45s call test/test_modules.py::TestModuleCUDA::test_non_contiguous_tensors_nn_MultiheadAttention_cuda_float64
0.44s call test/test_modules.py::TestModuleCUDA::test_non_contiguous_tensors_nn_MultiheadAttention_cuda_float32
0.21s call test/test_modules.py::TestModuleCUDA::test_pickle_nn_MultiheadAttention_cuda_float64
0.21s call test/test_modules.py::TestModuleCUDA::test_pickle_nn_MultiheadAttention_cuda_float32
0.18s call test/test_modules.py::TestModuleCUDA::test_forward_nn_MultiheadAttention_cuda_float64
0.17s call test/test_modules.py::TestModuleCPU::test_non_contiguous_tensors_nn_MultiheadAttention_cpu_float32
0.16s call test/test_modules.py::TestModuleCPU::test_non_contiguous_tensors_nn_MultiheadAttention_cpu_float64
0.11s call test/test_modules.py::TestModuleCUDA::test_factory_kwargs_nn_MultiheadAttention_cuda_float64
0.08s call test/test_modules.py::TestModuleCPU::test_pickle_nn_MultiheadAttention_cpu_float32
0.08s call test/test_modules.py::TestModuleCPU::test_pickle_nn_MultiheadAttention_cpu_float64
0.06s call test/test_modules.py::TestModuleCUDA::test_repr_nn_MultiheadAttention_cuda_float64
0.06s call test/test_modules.py::TestModuleCUDA::test_repr_nn_MultiheadAttention_cuda_float32
0.06s call test/test_modules.py::TestModuleCPU::test_forward_nn_MultiheadAttention_cpu_float32
0.06s call test/test_modules.py::TestModuleCPU::test_forward_nn_MultiheadAttention_cpu_float64
============================================================================================ short test summary info =============================================================================================
=========================================================================== 28 passed, 8 skipped, 336 deselected, 2 warnings in 19.71s ===========================================================================

```

cc albanD mruberry jbschlosser walterddr

Pull Request resolved: https://github.com/pytorch/pytorch/pull/67176

Reviewed By: dagitses

Differential Revision: D33094285

Pulled By: jbschlosser

fbshipit-source-id: 0dd08261b8a457bf8bad5c7f3f6ded14b0beaf0d

2021-12-14 13:21:21 -08:00

Pearu Peterson

48771d1c7f

[BC-breaking] Change dtype of softmax to support TorchScript and MyPy ( #68336 )


Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/68336

Test Plan: Imported from OSS

Reviewed By: VitalyFedyunin

Differential Revision: D32470965

Pulled By: cpuhrsch

fbshipit-source-id: 254b62db155321e6a139bda9600722c948f946d3

2021-11-18 11:26:14 -08:00

Richard Zou

f9ef807f4d

Replace empty with new_empty in nn.functional.pad ( #68565 )


Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/68565

This makes it so that we can now vmap over nn.functional.pad (circular variant). Previously we could not, because we were effectively doing `out.copy_(input)` where `out` was created with `empty`.

This also has the added side effect of cleaning up the code.
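For context, the circular variant wraps the input around: the left padding is copied from the end of the input and the right padding from its start. A pure-Python sketch for a 1-D sequence; `circular_pad_1d` is hypothetical and assumes each pad is between 0 and the input length.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def circular_pad_1d(x: Sequence[T], left: int, right: int) -> List[T]:
    n = len(x)
    if not (0 <= left <= n and 0 <= right <= n):
        raise ValueError("pads must be between 0 and len(x)")
    # Left pad wraps around from the end, right pad wraps around from the start.
    return list(x[n - left:]) + list(x) + list(x[:right])


print(circular_pad_1d([1, 2, 3, 4], 2, 1))  # [3, 4, 1, 2, 3, 4, 1]
```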

Test Plan:

- I tested this using functorch.vmap and can confirm that vmap now works.

- Unfortunately this doesn't work with the vmap in core, so I cannot add a test for this here.

Reviewed By: albanD

Differential Revision: D32520188

Pulled By: zou3519

fbshipit-source-id: 780a7e8207d7c45fcba645730a5803733ebfd7be

2021-11-18 06:06:50 -08:00

vfdev-5

3da2e09c9b

Added antialias flag to interpolate (CPU only, bilinear) ( #65142 )


Summary:

Description:

- Added antialias flag to interpolate (CPU only)

- forward and backward for bilinear mode

- added tests

### Benchmarks

<details>

<summary>

Forward pass, CPU. PTH interpolation vs PIL

</summary>

Cases:

- PTH RGB 3 Channels, float32 vs PIL RGB uint8 (apples vs pears)

- PTH 1 Channel, float32 vs PIL 1 Channel Float

Code: https://gist.github.com/vfdev-5/b173761a567f2283b3c649c3c0574112

```

# OMP_NUM_THREADS=1 python bench_interp_aa_vs_pillow.py

Torch config: PyTorch built with:
- GCC 9.3
- C++ Version: 201402
- OpenMP 201511 (a.k.a. OpenMP 4.5)
- CPU capability usage: AVX2
- CUDA Runtime 11.1
- NVCC architecture flags: -gencode;arch=compute_75,code=sm_75
- CuDNN 8.0.5

- Build settings: BUILD_TYPE=Release, CUDA_VERSION=11.1, CUDNN_VERSION=8.0.5, CXX_COMPILER=/usr/bin/c++, CXX_FLAGS= -Wno-deprecated -fvisibility-inlines-hidden -DUSE_PTHREADPOOL -fopenmp -DNDEBUG -DUSE_KINETO -DUSE_PYTORCH_QNNPACK -DSYMBOLICATE_MOBILE_DEBUG_HANDLE -DEDGE_PROFILER_USE_KINETO -O2 -fPIC -Wno-narrowing -Wall -Wextra -Werror=return-type -Wno-missing-field-initializers -Wno-type-limits -Wno-array-bounds -Wno-unknown-pragmas -Wno-sign-compare -Wno-unused-parameter -Wno-unused-variable -Wno-unused-function -Wno-unused-result -Wno-unused-local-typedefs -Wno-strict-overflow -Wno-strict-aliasing -Wno-error=deprecated-declarations -Wno-stringop-overflow -Wno-psabi -Wno-error=pedantic -Wno-error=redundant-decls -Wno-error=old-style-cast -fdiagnostics-color=always -faligned-new -Wno-unused-but-set-variable -Wno-maybe-uninitialized -fno-math-errno -fno-trapping-math -Werror=format -Werror=cast-function-type -Wno-stringop-overflow, PERF_WITH_AVX=1, PERF_WITH_AVX2=1, PERF_WITH_AVX512=1, TORCH_VERSION=1.10.0, USE_CUDA=1, USE_CUDNN=1, USE_EIGEN_FOR_BLAS=ON, USE_EXCEPTION_PTR=1, USE_GFLAGS=OFF, USE_GLOG=OFF, USE_MKL=OFF, USE_MKLDNN=OFF, USE_MPI=OFF, USE_NCCL=ON, USE_NNPACK=0, USE_OPENMP=ON,

Num threads: 1

[------------------------ Downsampling: torch.Size([1, 3, 906, 438]) -> (320, 196) ------------------------]
                                             | Reference, PIL 8.3.2, mode: RGB | 1.10.0a0+git1e87d91
1 threads: -------------------------------------------------------------------------------------------------
  channels_first contiguous torch.float32    | 2.9 | 3.1
  channels_last non-contiguous torch.float32 | 2.6 | 3.6

Times are in milliseconds (ms).

[------------------------ Downsampling: torch.Size([1, 3, 906, 438]) -> (460, 220) ------------------------]
                                             | Reference, PIL 8.3.2, mode: RGB | 1.10.0a0+git1e87d91
1 threads: -------------------------------------------------------------------------------------------------
  channels_first contiguous torch.float32    | 3.4 | 4.0
  channels_last non-contiguous torch.float32 | 3.4 | 4.8

Times are in milliseconds (ms).

[------------------------ Downsampling: torch.Size([1, 3, 906, 438]) -> (120, 96) -------------------------]
                                             | Reference, PIL 8.3.2, mode: RGB | 1.10.0a0+git1e87d91
1 threads: -------------------------------------------------------------------------------------------------
  channels_first contiguous torch.float32    | 1.6 | 1.8
  channels_last non-contiguous torch.float32 | 1.6 | 1.9

Times are in milliseconds (ms).

[----------------------- Downsampling: torch.Size([1, 3, 906, 438]) -> (1200, 196) ------------------------]
                                             | Reference, PIL 8.3.2, mode: RGB | 1.10.0a0+git1e87d91
1 threads: -------------------------------------------------------------------------------------------------
  channels_first contiguous torch.float32    | 9.0 | 11.3
  channels_last non-contiguous torch.float32 | 8.9 | 12.5

Times are in milliseconds (ms).

[----------------------- Downsampling: torch.Size([1, 3, 906, 438]) -> (120, 1200) ------------------------]
                                             | Reference, PIL 8.3.2, mode: RGB | 1.10.0a0+git1e87d91
1 threads: -------------------------------------------------------------------------------------------------
  channels_first contiguous torch.float32    | 2.1 | 1.8
  channels_last non-contiguous torch.float32 | 2.1 | 3.4

Times are in milliseconds (ms).

[--------------- Downsampling: torch.Size([1, 1, 906, 438]) -> (320, 196) --------------]
                           | Reference, PIL 8.3.2, mode: F | 1.10.0a0+git1e87d91
1 threads: ------------------------------------------------------------------------------
  contiguous torch.float32 | 1.2 | 1.0

Times are in milliseconds (ms).

[--------------- Downsampling: torch.Size([1, 1, 906, 438]) -> (460, 220) --------------]
                           | Reference, PIL 8.3.2, mode: F | 1.10.0a0+git1e87d91
1 threads: ------------------------------------------------------------------------------
  contiguous torch.float32 | 1.4 | 1.3

Times are in milliseconds (ms).

[--------------- Downsampling: torch.Size([1, 1, 906, 438]) -> (120, 96) ---------------]
                           | Reference, PIL 8.3.2, mode: F | 1.10.0a0+git1e87d91
1 threads: ------------------------------------------------------------------------------
  contiguous torch.float32 | 719.9 | 599.9

Times are in microseconds (us).

[-------------- Downsampling: torch.Size([1, 1, 906, 438]) -> (1200, 196) --------------]
                           | Reference, PIL 8.3.2, mode: F | 1.10.0a0+git1e87d91
1 threads: ------------------------------------------------------------------------------
  contiguous torch.float32 | 3.7 | 3.5

Times are in milliseconds (ms).

[-------------- Downsampling: torch.Size([1, 1, 906, 438]) -> (120, 1200) --------------]
                           | Reference, PIL 8.3.2, mode: F | 1.10.0a0+git1e87d91
1 threads: ------------------------------------------------------------------------------
  contiguous torch.float32 | 834.4 | 605.7

Times are in microseconds (us).

```

</details>

Code is moved from torchvision: https://github.com/pytorch/vision/pull/4208

Pull Request resolved: https://github.com/pytorch/pytorch/pull/65142

Reviewed By: mrshenli

Differential Revision: D32432405

Pulled By: jbschlosser

fbshipit-source-id: b66c548347f257c522c36105868532e8bc1d4c6d

2021-11-17 09:10:15 -08:00

vfdev-5

6adbe044e3

Added nearest-exact interpolation mode ( #64501 )


Summary:

Added "nearest-exact" interpolation mode to fix the issues: https://github.com/pytorch/pytorch/issues/34808 and https://github.com/pytorch/pytorch/issues/62237.

Description:

As we cannot fix the "nearest" mode without a large impact on already-trained models, [it was suggested](https://github.com/pytorch/pytorch/pull/64501#pullrequestreview-749771815) to introduce a new mode instead of fixing the existing "nearest" mode.

- The new mode "nearest-exact" computes nearest-interpolation indices so as to match scikit-image, Pillow and TF2, while the "nearest" mode still matches OpenCV INTER_NEAREST, which appears to be buggy; see https://ppwwyyxx.com/blog/2021/Where-are-Pixels/#Libraries.

"nearest":

```

input_index_f32 = output_index * scale
input_index = floor(input_index_f32)
```

"nearest-exact":

```
input_index_f32 = (output_index + 0.5) * scale - 0.5
input_index = round(input_index_f32)

```
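The two index rules can be checked with a small pure-Python sketch. Assumptions: `scale = in_size / out_size` (the case where an output size, not a scale factor, is given), `round` is taken as round-half-up (Python's built-in `round` rounds half to even), and indices are clamped to `in_size - 1` as a safety measure; the helper names are illustrative, not PyTorch APIs.

```python
import math
from typing import List


def nearest_indices(in_size: int, out_size: int) -> List[int]:
    scale = in_size / out_size
    # "nearest": floor(output_index * scale)
    return [min(math.floor(o * scale), in_size - 1) for o in range(out_size)]


def nearest_exact_indices(in_size: int, out_size: int) -> List[int]:
    scale = in_size / out_size
    result = []
    for o in range(out_size):
        src = (o + 0.5) * scale - 0.5
        # round half up, i.e. floor(src + 0.5)
        result.append(min(math.floor(src + 0.5), in_size - 1))
    return result


print(nearest_indices(4, 2), nearest_exact_indices(4, 2))  # [0, 2] [1, 3]
print(nearest_indices(5, 3), nearest_exact_indices(5, 3))  # [0, 1, 3] [0, 2, 4]
```

For a 2x downsample, "nearest" always picks the first pixel of each pair, while "nearest-exact" samples at the pixel center; for 5 -> 3 the "nearest-exact" picks come out symmetric.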

Comparisons with other libs: https://gist.github.com/vfdev-5/a5bd5b1477b1c82a87a0f9e25c727664

| PyTorch version | 1.9.0 "nearest" | this PR "nearest" | this PR "nearest-exact" |
|---|---|---|---|
| Resize option: | | | |
| OpenCV INTER_NEAREST result mismatches | 0 | 0 | 10 |
| OpenCV INTER_NEAREST_EXACT result mismatches | 9 | 9 | 9 |
| Scikit-Image result mismatches | 10 | 10 | 0 |
| Pillow result mismatches | 10 | 10 | 7 |
| TensorFlow result mismatches | 10 | 10 | 0 |
| Rescale option: | | | |
| size mismatches (https://github.com/pytorch/pytorch/issues/62396) | 10 | 10 | 10 |
| OpenCV INTER_NEAREST result mismatches | 3 | 3 | 5 |
| OpenCV INTER_NEAREST_EXACT result mismatches | 3 | 3 | 4 |
| Scikit-Image result mismatches | 4 | 4 | 0 |
| Scipy result mismatches | 4 | 4 | 0 |
| TensorFlow: no such option | - | - | - |

Versions:

```

skimage: 0.19.0.dev0
opencv: 4.5.4-dev
scipy: 1.7.2
Pillow: 8.4.0
TensorFlow: 2.7.0

```

Implementations in other libs:

- Pillow:
  - ee079ae67e/src/libImaging/Geometry.c (L889-L899)
  - ee079ae67e/src/libImaging/Geometry.c (L11)
- Scikit-Image:
  - 38fae50c3f/skimage/transform/_warps.py (L180-L188)
- Scipy:
  - 47bb6febaa/scipy/ndimage/src/ni_interpolation.c (L775-L779)
  - 47bb6febaa/scipy/ndimage/src/ni_interpolation.c (L479)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64501

Reviewed By: anjali411

Differential Revision: D32361901

Pulled By: jbschlosser

fbshipit-source-id: df906f4d25a2b2180e1942ffbab2cc14600aeed2

2021-11-15 14:28:19 -08:00

Junjie Wang

301369a774

[PyTorch][Fix] Pass the arguments of embedding as named arguments ( #67574 )


Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/67574

While adding the optional params for the sharded embedding op, we found that we cannot get these params from the `__torch_function__` override, because they are not passed as keyword arguments. This PR therefore passes them as keyword arguments.
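The underlying issue can be reproduced without PyTorch. In the pure-Python analogue below, `Recorder.hook` is a hypothetical stand-in for a `__torch_function__` override: like the real protocol, it only receives the `args` tuple and `kwargs` dict that the calling wrapper chose to forward, so optional parameters forwarded positionally cannot be looked up by name. Both `embedding_*` wrappers are illustrative, not the actual `F.embedding` code.

```python
from typing import Any, Callable, Dict, Optional, Sequence, Tuple


class Recorder:
    """Hypothetical stand-in for a tensor subclass that overrides __torch_function__."""

    def __init__(self) -> None:
        self.args: Tuple[Any, ...] = ()
        self.kwargs: Dict[str, Any] = {}

    def hook(self, func: Callable[..., Any], args: Tuple[Any, ...], kwargs: Dict[str, Any]) -> None:
        # Like a real override, this only sees what the wrapper chose to forward.
        self.args, self.kwargs = args, dict(kwargs)


def embedding_positional(rec: Recorder, indices: Sequence[int], weight: Any,
                         padding_idx: Optional[int] = None,
                         max_norm: Optional[float] = None) -> None:
    # Optional parameters forwarded positionally: the override gets only a tuple.
    rec.hook(embedding_positional, (indices, weight, padding_idx, max_norm), {})


def embedding_keyword(rec: Recorder, indices: Sequence[int], weight: Any,
                      padding_idx: Optional[int] = None,
                      max_norm: Optional[float] = None) -> None:
    # Optional parameters forwarded by name: the override can read kwargs["padding_idx"].
    rec.hook(embedding_keyword, (indices, weight),
             {"padding_idx": padding_idx, "max_norm": max_norm})


rec = Recorder()
embedding_positional(rec, [0, 1], [[0.0], [1.0]], padding_idx=0)
print(rec.kwargs)  # {}
embedding_keyword(rec, [0, 1], [[0.0], [1.0]], padding_idx=0)
print(rec.kwargs)  # {'padding_idx': 0, 'max_norm': None}
```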

ghstack-source-id: 143029375

Test Plan: CI

Reviewed By: albanD

Differential Revision: D32039152

fbshipit-source-id: c7e598e49eddbabff6e11e3f8cb0818f57c839f6

2021-11-11 15:22:10 -08:00

Kushashwa Ravi Shrimali

9e7b314318

OpInfo for nn.functional.conv1d ( #67747 )


Summary:

This PR adds OpInfo for `nn.functional.conv1d`. There is a minor typo fix in the documentation as well.

Issue tracker: https://github.com/pytorch/pytorch/issues/54261

cc: mruberry

Pull Request resolved: https://github.com/pytorch/pytorch/pull/67747

Reviewed By: malfet

Differential Revision: D32309258

Pulled By: mruberry

fbshipit-source-id: add21911b8ae44413e033e19398f398210737c6c

2021-11-11 09:23:04 -08:00

Natalia Gimelshein

8dfbc620d4

don't hardcode mask type in mha ( #68077 )


Summary:


Pull Request resolved: https://github.com/pytorch/pytorch/pull/68077

Reviewed By: zou3519

Differential Revision: D32292410

Pulled By: ngimel

fbshipit-source-id: 67213cf5474dc3f83e90e28cf5a823abb683a6f9

2021-11-10 09:41:21 -08:00

vfdev-5

49bf24fc83

Updated error message for nn.functional.interpolate ( #66417 )


Summary:

Description:

- Updated error message for nn.functional.interpolate

Fixes https://github.com/pytorch/pytorch/issues/63845

cc vadimkantorov

Pull Request resolved: https://github.com/pytorch/pytorch/pull/66417

Reviewed By: albanD

Differential Revision: D31924761

Pulled By: jbschlosser

fbshipit-source-id: ca74c77ac34b4f644aa10440b77b3fcbe4e770ac

2021-10-26 10:33:24 -07:00

kshitij12345

828a9dcc04

[nn] MarginRankingLoss : no batch dim ( #64975 )


Summary:

Reference: https://github.com/pytorch/pytorch/issues/60585

cc albanD mruberry jbschlosser walterddr

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64975

Reviewed By: albanD

Differential Revision: D31906528

Pulled By: jbschlosser

fbshipit-source-id: 1127242a859085b1e06a4b71be19ad55049b38ba

2021-10-26 09:03:31 -07:00

Mikayla Gawarecki

5569d5824c

Fix documentation of arguments for torch.nn.functional.Linear ( #66884 )


Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/66884

Addresses the docs fix mentioned in GitHub issue #64978.
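For reference, `torch.nn.functional.linear(input, weight, bias=None)` computes `y = x A^T + b`, with `weight` of shape (out_features, in_features) and `bias` of shape (out_features). A pure-Python sketch for a single input row; `linear_row` is an illustrative helper, not the PyTorch implementation.

```python
from typing import List, Optional, Sequence


def linear_row(x: Sequence[float], weight: Sequence[Sequence[float]],
               bias: Optional[Sequence[float]] = None) -> List[float]:
    # weight has shape (out_features, in_features): each row is dotted with x (x @ weight.T).
    out = [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row in weight]
    if bias is not None:
        out = [o + b for o, b in zip(out, bias)]
    return out


print(linear_row([1.0, 2.0], [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]], bias=[0.5, 0.5, 0.5]))
# [1.5, 2.5, 3.5]
```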

ghstack-source-id: 141093449

Test Plan: https://pxl.cl/1Rxkz

Reviewed By: anjali411

Differential Revision: D31767303

fbshipit-source-id: f1ca10fed5bb768749bce3ddc240bbce1dfb3f84

2021-10-20 12:02:58 -07:00

vfdev

62ca5a81c0

Exposed recompute_scale_factor into nn.Upsample ( #66419 )


Summary:

Description:

- Exposed `recompute_scale_factor` in `nn.Upsample` so that the `recompute_scale_factor=True` option can be used

Context: https://github.com/pytorch/pytorch/pull/64501#discussion_r710205190
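To illustrate what the option changes, here is a pure-Python sketch for one spatial dimension, assuming the documented `F.interpolate` behavior: the output size is `floor(input_size * scale_factor)`; with `recompute_scale_factor=True` the interpolation then uses the ratio implied by that rounded size, otherwise it uses the user-supplied `scale_factor` directly. `output_size_and_scale` is a hypothetical helper, and the returned scale is expressed as output/input (the kernels work with its reciprocal).

```python
import math
from typing import Tuple


def output_size_and_scale(input_size: int, scale_factor: float,
                          recompute_scale_factor: bool) -> Tuple[int, float]:
    output_size = math.floor(input_size * scale_factor)
    if recompute_scale_factor:
        # Scale implied by the rounded output size.
        return output_size, output_size / input_size
    # The user-supplied scale factor is passed through unchanged.
    return output_size, scale_factor


print(output_size_and_scale(10, 1.55, recompute_scale_factor=False))  # (15, 1.55)
print(output_size_and_scale(10, 1.55, recompute_scale_factor=True))   # (15, 1.5)
```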

Pull Request resolved: https://github.com/pytorch/pytorch/pull/66419

Reviewed By: gchanan

Differential Revision: D31731276

Pulled By: jbschlosser

fbshipit-source-id: 2118489e6f5bc1142f2a64323f4cfd095a9f3c42

2021-10-20 07:59:25 -07:00

kshitij12345

1db50505d5

[nn] MultiLabelSoftMarginLoss : no batch dim support ( #65690 )

Summary:

Reference: https://github.com/pytorch/pytorch/issues/60585
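
For `C` classes, the documented per-sample loss is `-(1/C) * sum_i [y_i * log(sigmoid(x_i)) + (1 - y_i) * log(1 - sigmoid(x_i))]`. Supporting no batch dim means a single sample of shape `(C)` is accepted as well as `(N, C)`. The pure-Python sketch below assumes lists stand in for tensors and uses mean reduction. `multilabel_soft_margin_loss` here is a hypothetical stand-in, not the PyTorch function.

```python
import math


def log_sigmoid(x: float) -> float:
    """Numerically stable log(1 / (1 + exp(-x)))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def multilabel_soft_margin_loss(x, y) -> float:
    """Mean loss; x and y are either a (C,) list (unbatched) or an (N, C) list of lists."""
    # Treat an unbatched (C,) sample as a batch of one.
    if not isinstance(x[0], (list, tuple)):
        x, y = [x], [y]
    per_sample = []
    for logits, targets in zip(x, y):
        # log(1 - sigmoid(v)) == log_sigmoid(-v)
        terms = [t * log_sigmoid(v) + (1 - t) * log_sigmoid(-v) for v, t in zip(logits, targets)]
        per_sample.append(-sum(terms) / len(terms))
    return sum(per_sample) / len(per_sample)
```

Zero logits give `sigmoid = 0.5` for every class, so the loss is `log 2` whatever the targets. The same values also give the same loss in batched and unbatched form.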

cc albanD mruberry jbschlosser walterddr

Pull Request resolved: https://github.com/pytorch/pytorch/pull/65690

Reviewed By: zou3519

Differential Revision: D31731162

Pulled By: jbschlosser

fbshipit-source-id: d26f27555f78afdadd49126e0548a8bfda50cc5a

2021-10-18 15:30:01 -07:00

Kushashwa Ravi Shrimali

909694fd88

Fix nn.functional.max_poolNd dispatch (for arg: return_indices) ( #62544 )

Summary:

Please see https://github.com/pytorch/pytorch/issues/62545 for context.

The order of the `return_indices` and `ceil_mode` arguments in the `nn.functional.max_poolNd` functions differs from their order in `torch.nn.MaxPoolNd` (module form). Although this should be resolved eventually, it was decided to first raise a warning that the behavior will change. (Please see https://github.com/pytorch/pytorch/pull/62544#issuecomment-893770955 for more context.)

This PR thus raises appropriate warnings and updates the documentation to show the full signature (along with a note) for `torch.nn.functional.max_poolNd` functions.
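
`torch.nn.functional.max_pool1d` lists `ceil_mode` before `return_indices`, while `torch.nn.MaxPool1d` lists them the other way round. Passing both flags by keyword sidesteps that mismatch. The pure-Python sketch below models 1-D max pooling without padding or dilation and declares the two flags keyword-only to make that point. `max_pool1d_sketch` is a hypothetical illustration, not the PyTorch function.

```python
import math
from typing import List, Optional, Tuple, Union


def max_pool1d_sketch(
    x: List[float],
    kernel_size: int,
    stride: Optional[int] = None,
    *,
    ceil_mode: bool = False,
    return_indices: bool = False,
) -> Union[List[float], Tuple[List[float], List[int]]]:
    """1-D max pooling without padding or dilation; both flags are keyword-only."""
    stride = kernel_size if stride is None else stride
    span = (len(x) - kernel_size) / stride
    out_len = (math.ceil(span) if ceil_mode else math.floor(span)) + 1
    # With ceil_mode, drop a final window that would start past the end of the input.
    if ceil_mode and (out_len - 1) * stride >= len(x):
        out_len -= 1
    values, indices = [], []
    for o in range(out_len):
        start = o * stride
        window = range(start, min(start + kernel_size, len(x)))
        best = max(window, key=lambda i: x[i])  # first index holding the window maximum
        values.append(x[best])
        indices.append(best)
    return (values, indices) if return_indices else values
```

With `kernel_size=2` on five elements, `ceil_mode=True` keeps the trailing partial window. So `max_pool1d_sketch([1.0, 3.0, 2.0, 5.0, 4.0], 2, ceil_mode=True, return_indices=True)` returns `([3.0, 5.0, 4.0], [1, 3, 4])`.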

**Quick links:**

(_upstream_)

* Documentation of [`nn.functional.max_pool1d`](https://pytorch.org/docs/1.9.0/generated/torch.nn.functional.max_pool1d.html ), [`nn.functional.max_pool2d`](https://pytorch.org/docs/stable/generated/torch.nn.functional.max_pool2d.html ), and [`nn.functional.max_pool3d`](https://pytorch.org/docs/stable/generated/torch.nn.functional.max_pool3d.html ).

(_this branch_)

* Documentation of [`nn.functional.max_pool1d`](https://docs-preview.pytorch.org/62544/generated/torch.nn.functional.max_pool1d.html?highlight=max_pool1d ), [`nn.functional.max_pool2d`](https://docs-preview.pytorch.org/62544/generated/torch.nn.functional.max_pool2d.html?highlight=max_pool2d#torch.nn.functional.max_pool2d ), and [`nn.functional.max_pool3d`](https://docs-preview.pytorch.org/62544/generated/torch.nn.functional.max_pool3d.html?highlight=max_pool3d#torch.nn.functional.max_pool3d ).

cc mruberry jbschlosser

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62544

Reviewed By: gchanan

Differential Revision: D31179038

Pulled By: jbschlosser

fbshipit-source-id: 0a2c7215df9e132ce9ec51448c5b3c90bbc69030

2021-10-18 08:34:38 -07:00

Natalia Gimelshein

4a50b6c490

fix cosine similarity dimensionality check ( #66191 )

Summary:

Fixes https://github.com/pytorch/pytorch/issues/66086
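
The commit message does not describe the corrected check, so what follows is only an illustration of the general idea. It is a pure-Python sketch of cosine similarity between two vectors, `x1 . x2 / max(||x1|| * ||x2||, eps)`, that rejects inputs of different lengths instead of silently truncating them. It assumes that `eps` clamps the product of the norms. `cosine_similarity_1d` is a hypothetical name.

```python
import math
from typing import Sequence


def cosine_similarity_1d(x1: Sequence[float], x2: Sequence[float], eps: float = 1e-8) -> float:
    """x1 . x2 / max(||x1|| * ||x2||, eps) for two equal-length vectors."""
    # Reject mismatched sizes instead of letting zip() silently drop trailing elements.
    if len(x1) != len(x2):
        raise ValueError(f"size mismatch: {len(x1)} vs {len(x2)}")
    dot = sum(a * b for a, b in zip(x1, x2))
    norm_product = math.sqrt(sum(a * a for a in x1)) * math.sqrt(sum(b * b for b in x2))
    return dot / max(norm_product, eps)
```

Parallel vectors give about `1.0`, orthogonal ones give `0.0`, and the `eps` clamp keeps a zero vector from causing a division by zero.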

Pull Request resolved: https://github.com/pytorch/pytorch/pull/66191

Reviewed By: dagitses, malfet

Differential Revision: D31436997

Pulled By: ngimel

fbshipit-source-id: 363556eea4e1696d928ae08320d298451c286b10

2021-10-06 15:44:51 -07:00

John Clow

36485d36b6

Docathon: Add docs for nn.functional.*d_max_pool ( #63264 )

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63264

Adds docs for max_pool to resolve docathon issue #60904.

Test Plan: Imported from OSS

Reviewed By: malfet

Differential Revision: D31071491

Pulled By: Gamrix

fbshipit-source-id: f4f6ec319c62ff1dfaeed8bb6bb0464b9514a7e9

2021-09-23 11:59:50 -07:00

kshitij12345

a012216b96

[nn] Fold : no batch dim ( #64909 )

Summary:

Fixes https://github.com/pytorch/pytorch/issues/64907

Reference: https://github.com/pytorch/pytorch/issues/60585
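
`nn.Fold` reverses `nn.Unfold`'s layout by adding each sliding block back into its position in the output. Overlapping positions are summed rather than restored. Supporting no batch dim means an input of shape `(C * prod(kernel_size), L)` is accepted in addition to `(N, C * prod(kernel_size), L)`. The pure-Python sketch below models that summation for a single channel in one spatial dimension, whereas the real module works on 2-D spatial outputs. `fold_1d` is a hypothetical name.

```python
from typing import List


def fold_1d(blocks: List[List[float]], output_size: int, kernel_size: int, stride: int = 1) -> List[float]:
    """Sum sliding blocks back into a 1-D signal (single channel, unbatched).

    blocks[k][b] is kernel offset k of block b, mirroring Fold's (C * kernel_size, L) layout with C = 1.
    """
    if len(blocks) != kernel_size:
        raise ValueError("expected one row per kernel offset")
    num_blocks = (output_size - kernel_size) // stride + 1
    if any(len(row) != num_blocks for row in blocks):
        raise ValueError(f"expected {num_blocks} blocks for output_size={output_size}")
    out = [0.0] * output_size
    for b in range(num_blocks):
        for k in range(kernel_size):
            # Overlapping positions accumulate, so Fold is not an exact inverse of Unfold.
            out[b * stride + k] += blocks[k][b]
    return out
```

Folding all-ones blocks shows how many blocks cover each position. `fold_1d([[1.0] * 3 for _ in range(3)], output_size=5, kernel_size=3)` gives `[1.0, 2.0, 3.0, 2.0, 1.0]`.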

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64909

Reviewed By: cpuhrsch, heitorschueroff

Differential Revision: D30991087

Pulled By: jbschlosser

fbshipit-source-id: 91a37e0b1d51472935ff2308719dfaca931513f3

2021-09-23 08:37:32 -07:00

Samantha Andow

c7c711bfb8

Add optional tensor arguments to ( #63967 )

Summary:

Fixes https://github.com/pytorch/pytorch/issues/63435

Adds optional tensor arguments to the torch function handling checks. The only function in the functional file I did not update is `multi_head_attention_forward`. It already checked some of its optional tensor arguments but not others, so those arguments appear to have been chosen deliberately.
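
Optional arguments matter because an override hook is consulted only if the dispatch check inspects the argument that carries it. For example, a tensor subclass passed as a bias is missed if only the input and weight are checked. The pure-Python sketch below models that idea. `Tracked`, `_has_hook` and `scale_shift` are hypothetical. The hook's parameters mirror the `(cls, func, types, args, kwargs)` shape of PyTorch's `__torch_function__` protocol, but this is not PyTorch's dispatch code.

```python
from typing import Any, Optional


class Tracked:
    """Hypothetical stand-in for a tensor subclass that wants to intercept calls."""

    def __init__(self, value: float) -> None:
        self.value = value

    @classmethod
    def __torch_function__(cls, func, types, args=(), kwargs=None):
        return f"{func.__name__} intercepted by {cls.__name__}"


def _has_hook(arg: Any) -> bool:
    # None (an omitted optional argument) never carries a hook, so it is safe to include.
    return arg is not None and hasattr(type(arg), "__torch_function__")


def scale_shift(x: Any, scale: Any, shift: Optional[Any] = None) -> Any:
    """Hypothetical functional op computing x * scale + shift."""
    relevant = (x, scale, shift)  # the optional `shift` is part of the check
    for arg in relevant:
        if _has_hook(arg):
            return type(arg).__torch_function__(scale_shift, (type(arg),), relevant)
    return x * scale + (0.0 if shift is None else shift)
```

If `shift` were left out of `relevant`, `scale_shift(2.0, 3.0, Tracked(1.0))` would skip the hook and fail on `float + Tracked`. Including it routes the call to `Tracked.__torch_function__`.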

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63967

Reviewed By: albanD

Differential Revision: D30640441

Pulled By: ezyang

fbshipit-source-id: 5ef9554d2fb6c14779f8f45542ab435fb49e5d0f

2021-08-30 19:21:26 -07:00

Thomas J. Fan

d3bcba5f85

ENH Adds label_smoothing to cross entropy loss ( #63122 )

Summary:

Fixes https://github.com/pytorch/pytorch/issues/7455

Partially resolves pytorch/vision#4281
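
With `label_smoothing=eps`, the target becomes a mixture of the one-hot label and a uniform distribution over the `C` classes: `q = (1 - eps) * one_hot(y) + eps / C`. The loss is the cross entropy against `q`. The pure-Python sketch below handles one unbatched sample. `cross_entropy_smoothed` is a hypothetical name, and batching, class weights, and `ignore_index` are left out.

```python
import math
from typing import List


def log_softmax(logits: List[float]) -> List[float]:
    # Subtract the max before exponentiating for numerical stability.
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_z for v in logits]


def cross_entropy_smoothed(logits: List[float], target: int, label_smoothing: float = 0.0) -> float:
    """Cross entropy of one sample against (1 - eps) * one_hot(target) + eps / C."""
    if not 0.0 <= label_smoothing <= 1.0:
        raise ValueError("label_smoothing must be in [0.0, 1.0]")
    num_classes = len(logits)
    log_probs = log_softmax(logits)
    nll = -log_probs[target]                # standard cross-entropy term
    smooth = -sum(log_probs) / num_classes  # cross entropy against the uniform distribution
    return (1.0 - label_smoothing) * nll + label_smoothing * smooth
```

With `label_smoothing=0.0` this reduces to ordinary cross entropy. For a confident, correct prediction such as logits `[2.0, 0.0]` with target `0`, smoothing raises the loss and so discourages over-confident logits.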

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63122

Reviewed By: iramazanli

Differential Revision: D30586076

Pulled By: jbschlosser

fbshipit-source-id: 06afc3aa1f8b9edb07fe9ed68c58968ad1926924

2021-08-29 23:33:04 -07:00