Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57386

Here is the PR for what's discussed in the RFC https://github.com/pytorch/pytorch/issues/55374 to enable the autocast for CPU device. Currently, this PR only enable BF16 as the lower precision datatype.

Changes:

1. Enable new API `torch.cpu.amp.autocast` for autocast on CPU device: include the python API, C++ API, new Dispatchkey etc.

2. Consolidate the implementation for each cast policy sharing between CPU and GPU devices.

3. Add the operation lists to corresponding cast policy for cpu autocast.

Test Plan: Imported from OSS

Reviewed By: soulitzer

Differential Revision: D28572219

Pulled By: ezyang

fbshipit-source-id: db3db509973b16a5728ee510b5e1ee716b03a152

Summary:

This adds the methods `Tensor.cfloat()` and `Tensor.cdouble()`.

I was not able to find the tests for `.float()` functions. I'd be happy to add similar tests for these functions once someone points me to them.

Fixes https://github.com/pytorch/pytorch/issues/56014

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58137

Reviewed By: ejguan

Differential Revision: D28412288

Pulled By: anjali411

fbshipit-source-id: ff3653cb3516bcb3d26a97b9ec3d314f1f42f83d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58039

The new function has the following signature

`inv_ex(Tensor inpit, *, bool check_errors=False) -> (Tensor inverse, Tensor info)`.

When `check_errors=True`, an error is thrown if the matrix is not invertible; `check_errors=False` - responsibility for checking the result is on the user.

`linalg_inv` is implemented using calls to `linalg_inv_ex` now.

Resolves https://github.com/pytorch/pytorch/issues/25095

Test Plan: Imported from OSS

Reviewed By: ngimel

Differential Revision: D28405148

Pulled By: mruberry

fbshipit-source-id: b8563a6c59048cb81e206932eb2f6cf489fd8531

Summary:

Fixes https://github.com/pytorch/pytorch/issues/56608

- Adds binding to the `c10::InferenceMode` RAII class in `torch._C._autograd.InferenceMode` through pybind. Also binds the `torch.is_inference_mode` function.

- Adds context manager `torch.inference_mode` to manage an instance of `c10::InferenceMode` (global). Implemented in `torch.autograd.grad_mode.py` to reuse the `_DecoratorContextManager` class.

- Adds some tests based on those linked in the issue + several more for just the context manager

Issues/todos (not necessarily for this PR):

- Improve short inference mode description

- Small example

- Improved testing since there is no direct way of checking TLS/dispatch keys

-

Pull Request resolved: https://github.com/pytorch/pytorch/pull/58045

Reviewed By: agolynski

Differential Revision: D28390595

Pulled By: soulitzer

fbshipit-source-id: ae98fa036c6a2cf7f56e0fd4c352ff804904752c

Summary:

Backward methods for `torch.lu` and `torch.lu_solve` require the `torch.lu_unpack` method.

However, while `torch.lu` is a Python wrapper over a native function, so its gradient is implemented via `autograd.Function`,

`torch.lu_solve` is a native function, so it cannot access `torch.lu_unpack` as it is implemented in Python.

Hence this PR presents a native (ATen) `lu_unpack` version. It is also possible to update the gradients for `torch.lu` so that backward+JIT is supported (no JIT for `autograd.Function`) with this function.

~~The interface for this method is different from the original `torch.lu_unpack`, so it is decided to keep it hidden.~~

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46913

Reviewed By: albanD

Differential Revision: D28355725

Pulled By: mruberry

fbshipit-source-id: 281260f3b6e93c15b08b2ba66d5a221314b00e78

Summary:

Backward methods for `torch.lu` and `torch.lu_solve` require the `torch.lu_unpack` method.

However, while `torch.lu` is a Python wrapper over a native function, so its gradient is implemented via `autograd.Function`,

`torch.lu_solve` is a native function, so it cannot access `torch.lu_unpack` as it is implemented in Python.

Hence this PR presents a native (ATen) `lu_unpack` version. It is also possible to update the gradients for `torch.lu` so that backward+JIT is supported (no JIT for `autograd.Function`) with this function.

~~The interface for this method is different from the original `torch.lu_unpack`, so it is decided to keep it hidden.~~

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46913

Reviewed By: astaff

Differential Revision: D28117714

Pulled By: mruberry

fbshipit-source-id: befd33db12ecc147afacac792418b6f4948fa4a4

Summary:

This PR is focused on the API for `linalg.matrix_norm` and delegates computations to `linalg.norm` for the moment.

The main difference between the norms is when `dim=None`. In this case

- `linalg.norm` will compute a vector norm on the flattened input if `ord=None`, otherwise it requires the input to be either 1D or 2D in order to disambiguate between vector and matrix norm

- `linalg.vector_norm` will flatten the input

- `linalg.matrix_norm` will compute the norm over the last two dimensions, treating the input as batch of matrices

In future PRs, the computations will be moved to `torch.linalg.matrix_norm` and `torch.norm` and `torch.linalg.norm` will delegate computations to either `linalg.vector_norm` or `linalg.matrix_norm` based on the arguments provided.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57127

Reviewed By: mrshenli

Differential Revision: D28186736

Pulled By: mruberry

fbshipit-source-id: 99ce2da9d1c4df3d9dd82c0a312c9570da5caf25

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57180

We have now a separate function for computing only the singular values.

`compute_uv` argument is not needed and it was decided in the

offline discussion to remove it. This is a BC-breaking change but our

linalg module is beta, therefore we can do it without a deprecation

notice.

Test Plan: Imported from OSS

Reviewed By: ngimel

Differential Revision: D28142163

Pulled By: mruberry

fbshipit-source-id: 3fac1fcae414307ad5748c9d5ff50e0aa4e1b853

Summary:

As per discussion here https://github.com/pytorch/pytorch/pull/57127#discussion_r624948215

Note that we cannot remove the optional type from the `dim` parameter because the default is to flatten the input tensor which cannot be easily captured by a value other than `None`

### BC Breaking Note

This PR changes the `ord` parameter of `torch.linalg.vector_norm` so that it no longer accepts `None` arguments. The default behavior of `2` is equivalent to the previous default of `None`.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/57662

Reviewed By: albanD, mruberry

Differential Revision: D28228870

Pulled By: heitorschueroff

fbshipit-source-id: 040fd8055bbe013f64d3c8409bbb4b2c87c99d13

Summary:

The new function has the following signature `cholesky_ex(Tensor input, *, bool check_errors=False) -> (Tensor L, Tensor infos)`. When `check_errors=True`, an error is thrown if the decomposition fails; `check_errors=False` - responsibility for checking the decomposition is on the user.

When `check_errors=False`, we don't have host-device memory transfers for checking the values of the `info` tensor.

Rewrote the internal code for `torch.linalg.cholesky`. Added `cholesky_stub` dispatch. `linalg_cholesky` is implemented using calls to `linalg_cholesky_ex` now.

Resolves https://github.com/pytorch/pytorch/issues/57032.

Ref. https://github.com/pytorch/pytorch/issues/34272, https://github.com/pytorch/pytorch/issues/47608, https://github.com/pytorch/pytorch/issues/47953

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56724

Reviewed By: ngimel

Differential Revision: D27960176

Pulled By: mruberry

fbshipit-source-id: f05f3d5d9b4aa444e41c4eec48ad9a9b6fd5dfa5

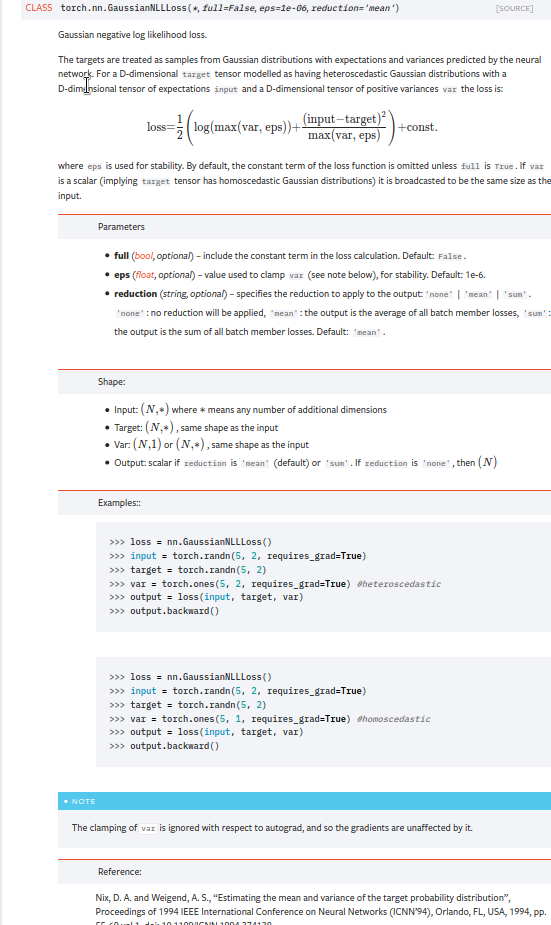

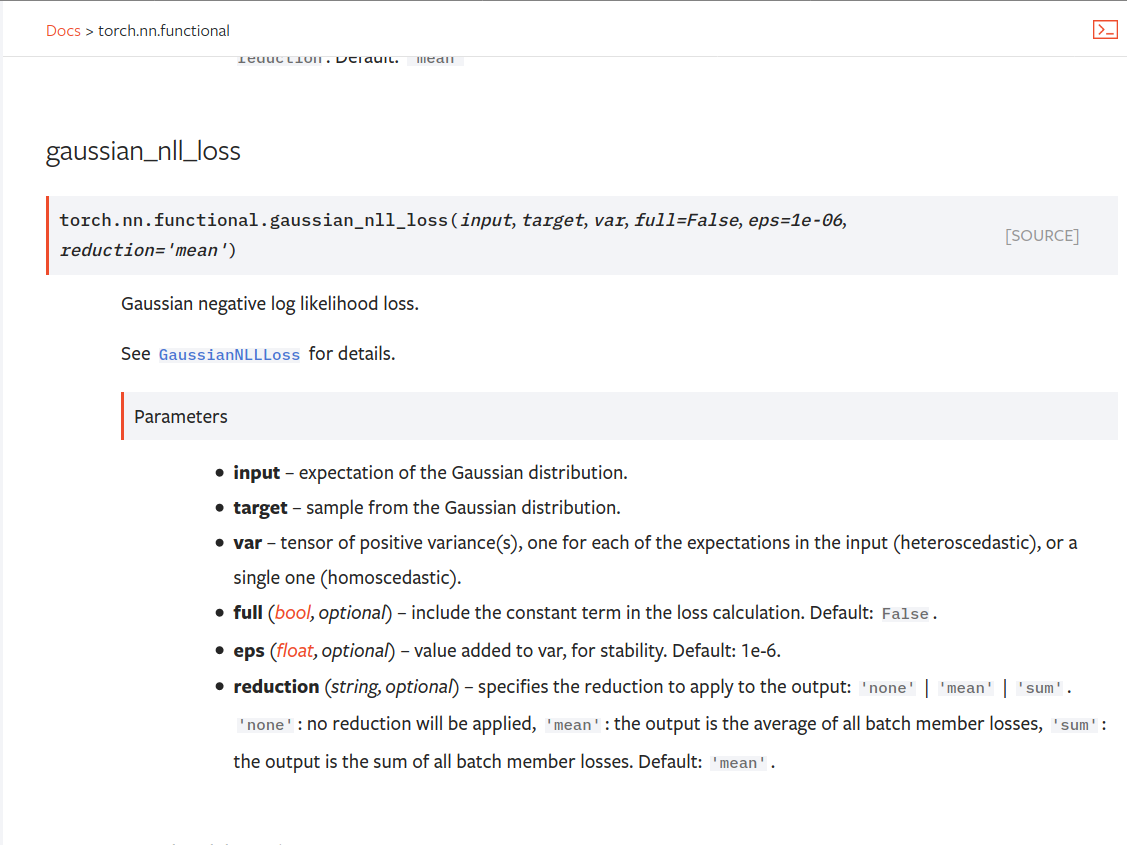

Summary:

Fixes https://github.com/pytorch/pytorch/issues/53964. cc albanD almson

## Major changes:

- Overhauled the actual loss calculation so that the shapes are now correct (in functional.py)

- added the missing doc in nn.functional.rst

## Minor changes (in functional.py):

- I removed the previous check on whether input and target were the same shape. This is to allow for broadcasting, say when you have 10 predictions that all have the same target.

- I added some comments to explain each shape check in detail. Let me know if these should be shortened/cut.

Screenshots of updated docs attached.

Let me know what you think, thanks!

## Edit: Description of change of behaviour (affecting BC):

The backwards-compatibility is only affected for the `reduction='none'` mode. This was the source of the bug. For tensors with size (N, D), the old returned loss had size (N), as incorrect summation was happening. It will now have size (N, D) as expected.

### Example

Define input tensors, all with size (2, 3).

`input = torch.tensor([[0., 1., 3.], [2., 4., 0.]], requires_grad=True)`

`target = torch.tensor([[1., 4., 2.], [-1., 2., 3.]])`

`var = 2*torch.ones(size=(2, 3), requires_grad=True)`

Initialise loss with reduction mode 'none'. We expect the returned loss to have the same size as the input tensors, (2, 3).

`loss = torch.nn.GaussianNLLLoss(reduction='none')`

Old behaviour:

`print(loss(input, target, var)) `

`# Gives tensor([3.7897, 6.5397], grad_fn=<MulBackward0>. This has size (2).`

New behaviour:

`print(loss(input, target, var)) `

`# Gives tensor([[0.5966, 2.5966, 0.5966], [2.5966, 1.3466, 2.5966]], grad_fn=<MulBackward0>)`

`# This has the expected size, (2, 3).`

To recover the old behaviour, sum along all dimensions except for the 0th:

`print(loss(input, target, var).sum(dim=1))`

`# Gives tensor([3.7897, 6.5397], grad_fn=<SumBackward1>.`

Pull Request resolved: https://github.com/pytorch/pytorch/pull/56469

Reviewed By: jbschlosser, agolynski

Differential Revision: D27894170

Pulled By: albanD

fbshipit-source-id: 197890189c97c22109491c47f469336b5b03a23f

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53238

There is a tension for the Vitals design: (1) we want a macro based logging API for C++ and (2) we want a clean python API. Furthermore, we want to this to work with "print on destruction" semantics.

The unfortunate resolution is that there are (2) ways to define vitals:

(1) Use the macros for local use only within C++ - this keeps the semantics people enjoy

(2) For vitals to be used through either C++ or Python, we use a global VitalsAPI object.

Both these go to the same place for the user: printing to stdout as the globals are destructed.

The long history on this diff shows many different ways to try to avoid having 2 different paths... we tried weak pointers & shared pointers, verbose switch cases, etc. Ultimately each ran into an ugly trade-off and this cuts the difference better the alternatives.

Test Plan:

buck test mode/dev caffe2/test:torch -- --regex vital

buck test //caffe2/aten:vitals

Reviewed By: orionr

Differential Revision: D26736443

fbshipit-source-id: ccab464224913edd07c1e8532093f673cdcb789f

Summary:

Reference: https://github.com/pytorch/pytorch/issues/50345

Changes:

* Add `i0e`

* Move some kernels from `UnaryOpsKernel.cu` to `UnarySpecialOpsKernel.cu` to decrease compilation time per file.

Time taken by i0e_vs_scipy tests: around 6.33.s

<details>

<summary>Test Run Log</summary>

```

(pytorch-cuda-dev) kshiteej@qgpu1:~/Pytorch/pytorch_module_special$ pytest test/test_unary_ufuncs.py -k _i0e_vs

======================================================================= test session starts ========================================================================

platform linux -- Python 3.8.6, pytest-6.1.2, py-1.9.0, pluggy-0.13.1

rootdir: /home/kshiteej/Pytorch/pytorch_module_special, configfile: pytest.ini

plugins: hypothesis-5.38.1

collected 8843 items / 8833 deselected / 10 selected

test/test_unary_ufuncs.py ...sss.... [100%]

========================================================================= warnings summary =========================================================================

../../.conda/envs/pytorch-cuda-dev/lib/python3.8/site-packages/torch/backends/cudnn/__init__.py:73

test/test_unary_ufuncs.py::TestUnaryUfuncsCUDA::test_special_i0e_vs_scipy_cuda_bfloat16

/home/kshiteej/.conda/envs/pytorch-cuda-dev/lib/python3.8/site-packages/torch/backends/cudnn/__init__.py:73: UserWarning: PyTorch was compiled without cuDNN/MIOpen support. To use cuDNN/MIOpen, rebuild PyTorch making sure the library is visible to the build system.

warnings.warn(

-- Docs: https://docs.pytest.org/en/stable/warnings.html

===================================================================== short test summary info ======================================================================

SKIPPED [3] test/test_unary_ufuncs.py:1182: not implemented: Could not run 'aten::_copy_from' with arguments from the 'Meta' backend. This could be because the operator doesn't exist for this backend, or was omitted during the selective/custom build process (if using custom build). If you are a Facebook employee using PyTorch on mobile, please visit https://fburl.com/ptmfixes for possible resolutions. 'aten::_copy_from' is only available for these backends: [BackendSelect, Named, InplaceOrView, AutogradOther, AutogradCPU, AutogradCUDA, AutogradXLA, UNKNOWN_TENSOR_TYPE_ID, AutogradMLC, AutogradNestedTensor, AutogradPrivateUse1, AutogradPrivateUse2, AutogradPrivateUse3, Tracer, Autocast, Batched, VmapMode].

BackendSelect: fallthrough registered at ../aten/src/ATen/core/BackendSelectFallbackKernel.cpp:3 [backend fallback]

Named: registered at ../aten/src/ATen/core/NamedRegistrations.cpp:7 [backend fallback]

InplaceOrView: fallthrough registered at ../aten/src/ATen/core/VariableFallbackKernel.cpp:56 [backend fallback]

AutogradOther: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradCPU: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradCUDA: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradXLA: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

UNKNOWN_TENSOR_TYPE_ID: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradMLC: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradNestedTensor: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradPrivateUse1: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradPrivateUse2: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

AutogradPrivateUse3: registered at ../torch/csrc/autograd/generated/VariableType_4.cpp:8761 [autograd kernel]

Tracer: registered at ../torch/csrc/autograd/generated/TraceType_4.cpp:9348 [kernel]

Autocast: fallthrough registered at ../aten/src/ATen/autocast_mode.cpp:250 [backend fallback]

Batched: registered at ../aten/src/ATen/BatchingRegistrations.cpp:1016 [backend fallback]

VmapMode: fallthrough registered at ../aten/src/ATen/VmapModeRegistrations.cpp:33 [backend fallback]

==================================================== 7 passed, 3 skipped, 8833 deselected, 2 warnings in 6.33s =====================================================

```

</details>

TODO:

* [x] Check rendered docs (https://11743402-65600975-gh.circle-artifacts.com/0/docs/special.html)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54409

Reviewed By: jbschlosser

Differential Revision: D27760472

Pulled By: mruberry

fbshipit-source-id: bdfbcaa798b00c51dc9513c34626246c8fc10548

Summary:

This PR adds a `padding_idx` parameter to `nn.EmbeddingBag` and `nn.functional.embedding_bag`. As with `nn.Embedding`'s `padding_idx` argument, if an embedding's index is equal to `padding_idx` it is ignored, so it is not included in the reduction.

This PR does not add support for `padding_idx` for quantized or ONNX `EmbeddingBag` for opset10/11 (opset9 is supported). In these cases, an error is thrown if `padding_idx` is provided.

Fixes https://github.com/pytorch/pytorch/issues/3194

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49237

Reviewed By: walterddr, VitalyFedyunin

Differential Revision: D26948258

Pulled By: jbschlosser

fbshipit-source-id: 3ca672f7e768941f3261ab405fc7597c97ce3dfc

Summary:

Reference: https://github.com/pytorch/pytorch/issues/50345

Chages:

* Alias for sigmoid and logit

* Adds out variant for C++ API

* Updates docs to link back to `special` documentation

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54759

Reviewed By: mrshenli

Differential Revision: D27615208

Pulled By: mruberry

fbshipit-source-id: 8bba908d1bea246e4aa9dbadb6951339af353556

Summary:

This PR adds `torch.linalg.eig`, and `torch.linalg.eigvals` for NumPy compatibility.

MAGMA uses a hybrid CPU-GPU algorithm and doesn't have a GPU interface for the non-symmetric eigendecomposition. It means that it forces us to transfer inputs living in GPU memory to CPU first before calling MAGMA, and then transfer results from MAGMA to CPU. That is rather slow for smaller matrices and MAGMA is faster than CPU path only for matrices larger than 3000x3000.

Unfortunately, there is no cuSOLVER function for this operation.

Autograd support for `torch.linalg.eig` will be added in a follow-up PR.

Ref https://github.com/pytorch/pytorch/issues/42666

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52491

Reviewed By: anjali411

Differential Revision: D27563616

Pulled By: mruberry

fbshipit-source-id: b42bb98afcd2ed7625d30bdd71cfc74a7ea57bb5

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52859

This reverts commit 92a4ee1cf6.

Added support for bfloat16 for CUDA 11 and removed fast-path for empty input tensors that was affecting autograd graph.

Test Plan: Imported from OSS

Reviewed By: H-Huang

Differential Revision: D27402390

Pulled By: heitorschueroff

fbshipit-source-id: 73c5ccf54f3da3d29eb63c9ed3601e2fe6951034

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/54702

This fixes subclassing for __iter__ so that it returns an iterator over

subclasses properly instead of Tensor.

Test Plan: Imported from OSS

Reviewed By: H-Huang

Differential Revision: D27352563

Pulled By: ezyang

fbshipit-source-id: 4c195a86c8f2931a6276dc07b1e74ee72002107c

Summary:

Reference: https://github.com/pytorch/pytorch/issues/38349

Wrapper around the existing `torch.gather` with broadcasting logic.

TODO:

* [x] Add Doc entry (see if phrasing can be improved)

* [x] Add OpInfo

* [x] Add test against numpy

* [x] Handle broadcasting behaviour and when dim is not given.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52833

Reviewed By: malfet

Differential Revision: D27319038

Pulled By: mruberry

fbshipit-source-id: 00f307825f92c679d96e264997aa5509172f5ed1

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53727

This is first diff to add native support for segment reduction in PyTorch. It provides similar functionality like torch.scatter or "numpy.ufunc.reduceat".

This diff mainly focuses on API layer to make sure future improvements will not cause backward compatibility issues. Once API is settled, here are next steps I am planning:

- Add support for other major reduction types (e.g. min, sum) for 1D tensor

- Add Cuda support

- Backward support

- Documentation for the op

- Perf optimizations and benchmark util

- Support for multi dimensional tensors (on data and lengths) (not high priority)

- Support for 'indices' (not high priority)

Test Plan: Added unit test

Reviewed By: ngimel

Differential Revision: D26952075

fbshipit-source-id: 8040ec96def3013e7240cf675d499ee424437560

Summary:

This PR adds autograd support for `torch.orgqr`.

Since `torch.orgqr` is one of few functions that expose LAPACK's naming and all other linear algebra routines were renamed a long time ago, I also added a new function with a new name and `torch.orgqr` now is an alias for it.

The new proposed name is `householder_product`. For a matrix `input` and a vector `tau` LAPACK's orgqr operation takes columns of `input` (called Householder vectors or elementary reflectors) scalars of `tau` that together represent Householder matrices and then the product of these matrices is computed. See https://www.netlib.org/lapack/lug/node128.html.

Other linear algebra libraries that I'm aware of do not expose this LAPACK function, so there is some freedom in naming it. It is usually used internally only for QR decomposition, but can be useful for deep learning tasks now when it supports differentiation.

Resolves https://github.com/pytorch/pytorch/issues/50104

Pull Request resolved: https://github.com/pytorch/pytorch/pull/52637

Reviewed By: agolynski

Differential Revision: D27114246

Pulled By: mruberry

fbshipit-source-id: 9ab51efe52aec7c137aa018c7bd486297e4111ce

Summary:

Close https://github.com/pytorch/pytorch/issues/51108

Related https://github.com/pytorch/pytorch/issues/38349

This PR implements the `cpu_kernel_multiple_outputs` to support returning multiple values in a CPU kernel.

```c++

auto iter = at::TensorIteratorConfig()

.add_output(out1)

.add_output(out2)

.add_input(in1)

.add_input(in2)

.build();

at::native::cpu_kernel_multiple_outputs(iter,

[=](float a, float b) -> std::tuple<float, float> {

float add = a + b;

float mul = a * b;

return std::tuple<float, float>(add, mul);

}

);

```

The `out1` will equal to `torch.add(in1, in2)`, while the result of `out2` will be `torch.mul(in1, in2)`.

It helps developers implement new torch functions that return two tensors more conveniently, such as NumPy-like functions [divmod](https://numpy.org/doc/1.18/reference/generated/numpy.divmod.html?highlight=divmod#numpy.divmod) and [frexp](https://numpy.org/doc/stable/reference/generated/numpy.frexp.html#numpy.frexp).

This PR adds `torch.frexp` function to exercise the new functionality provided by `cpu_kernel_multiple_outputs`.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51097

Reviewed By: albanD

Differential Revision: D26982619

Pulled By: heitorschueroff

fbshipit-source-id: cb61c7f2c79873ab72ab5a61cbdb9203531ad469

Summary:

Fixes https://github.com/pytorch/pytorch/issues/44378 by providing a wider range of drivers similar to what SciPy is doing.

The supported CPU drivers are `gels, gelsy, gelsd, gelss`.

The CUDA interface has only `gels` implemented but only for overdetermined systems.

The current state of this PR:

- [x] CPU interface

- [x] CUDA interface

- [x] CPU tests

- [x] CUDA tests

- [x] Memory-efficient batch-wise iteration with broadcasting which fixes https://github.com/pytorch/pytorch/issues/49252

- [x] docs

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49093

Reviewed By: albanD

Differential Revision: D26991788

Pulled By: mruberry

fbshipit-source-id: 8af9ada979240b255402f55210c0af1cba6a0a3c

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53276

- One of the tests had a syntax error (but the test

wasn't fine grained enough to catch this; any error

was a pass)

- Doesn't work on ROCm

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Differential Revision: D26820048

Test Plan: Imported from OSS

Reviewed By: mruberry

Pulled By: ezyang

fbshipit-source-id: b02c4252d10191c3b1b78f141d008084dc860c45

Summary:

per title

This PR did

- Migrate `apex.parallel.SyncBatchNorm` channels_last to pytorch `torch.nn.SyncBatchNorm`

- Fix a TODO here by fusing `sum`, `div` kernels into backward elementwise kernel

b167402e2e/torch/nn/modules/_functions.py (L76-L95)

Todo

- [x] Discuss a regression introduced in https://github.com/pytorch/pytorch/pull/37133#discussion_r512530389, which is the synchronized copy here

b167402e2e/torch/nn/modules/_functions.py (L32-L34)

**Comment**: This PR uses apex version for the size check. Test passed and I haven't seen anything wrong so far.

- [x] The restriction to use channels_last kernel will be like this

```

inline bool batch_norm_use_channels_last_kernels(const at::Tensor& self) {

return self.is_contiguous(at::MemoryFormat::ChannelsLast) || self.ndimension() == 2;

}

```

I think we can relax that for channels_last_3d as well?

**Comment**: we don't have benchmark for this now, will check this and add functionality later when needed.

- [x] Add test

- [x] Add benchmark

Detailed benchmark is at https://github.com/xwang233/code-snippet/tree/master/syncbn-channels-last

Close https://github.com/pytorch/pytorch/issues/50781

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46906

Reviewed By: albanD

Differential Revision: D26771437

Pulled By: malfet

fbshipit-source-id: d00387044e9d43ac7e6c0e32a2db22c63d1504de

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/53143

Meta is now an honest to goodness device type, like cpu, so you can use

device='meta' to trigger allocation of meta tensors. This way better

than empty_meta since we now have working API for most factory functions

(they don't necessarily work yet, though, because need to register Meta

versions of those functions.)

Some subtleties:

- I decided to drop the concept of CPU versus CUDA meta tensors; meta

tensors are device agnostic. It's hard to say exactly what the

correct level of abstraction here is, but in this particular case

implementation considerations trump semantic considerations: it

is way easier to have just a meta device, than to have a meta device

AND a cpu device AND a cuda device. This may limit the applicability

of meta tensors for tracing models that do explicit cpu()/cuda()

conversions (unless, perhaps, we make those operations no-ops on meta

tensors).

- I noticed that the DeviceType uppercase strings are kind of weird.

Are they really supposed to be all caps? That's weird.

- I moved the Meta dispatch key to live with the rest of the "device"

dispatch keys.

- I intentionally did NOT add a Backend for Meta. For now, I'm going to

hope meta tensors never exercise any of the Backend conversion code;

even if it does, better to fix the code to just stop converting to and

from Backend.

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Test Plan: Imported from OSS

Reviewed By: samestep

Differential Revision: D26763552

Pulled By: ezyang

fbshipit-source-id: 14633b6ca738e60b921db66a763155d01795480d

Summary:

Fixes https://github.com/pytorch/pytorch/issues/44378 by providing a wider range of drivers similar to what SciPy is doing.

The supported CPU drivers are `gels, gelsy, gelsd, gelss`.

The CUDA interface has only `gels` implemented but only for overdetermined systems.

The current state of this PR:

- [x] CPU interface

- [x] CUDA interface

- [x] CPU tests

- [x] CUDA tests

- [x] Memory-efficient batch-wise iteration with broadcasting which fixes https://github.com/pytorch/pytorch/issues/49252

- [x] docs

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49093

Reviewed By: H-Huang

Differential Revision: D26723384

Pulled By: mruberry

fbshipit-source-id: c9866a95f14091955cf42de22f4ac9e2da009713

Summary:

Apple recently announced ML Compute, a new framework available in macOS Big Sur, which enables users to accelerate the training of neural networks on Mac hardware. This PR is the first on a series of PRs that will enable the integration with ML Compute. Most of the integration code will live on a separate subrepo named `mlc`.

The integration with `mlc` (ML Compute) will be very similar to that of xla. We rely on registering our ops through:

TORCH_LIBRARY_IMPL(aten, PrivateUse1, m) {

m.impl_UNBOXED(<op_schema_name>, &customized_op_kernel)

...

}

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50634

Reviewed By: malfet

Differential Revision: D26614213

Pulled By: smessmer

fbshipit-source-id: 3b492b346c61cc3950ac880ac01a82fbdddbc07b

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51807

Implemented torch.linalg.multi_dot similar to [numpy.linalg.multi_dot](https://numpy.org/doc/stable/reference/generated/numpy.linalg.multi_dot.html).

This function does not support broadcasting or batched inputs at the moment.

**NOTE**

numpy.linalg.multi_dot allows the first and last tensors to have more than 2 dimensions despite their docs stating these must be either 1D or 2D. This PR diverges from NumPy in that it enforces this restriction.

**TODO**

- [ ] Benchmark against NumPy

- [x] Add OpInfo testing

- [x] Remove unnecessary copy for out= argument

Test Plan: Imported from OSS

Reviewed By: nikithamalgifb

Differential Revision: D26375734

Pulled By: heitorschueroff

fbshipit-source-id: 839642692424c4b1783606c76dd5b34455368f0b

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51878

`fake_quantize_per_tensor_affine_cachemask` and

`fake_quantize_per_channel_affine_cachemask` are implementation

details of `fake_quantize_per_tensor_affine` and

`fake_quantize_per_channel_affine`, removing the

Python bindings for them since there is no need to

expose them.

Test Plan:

```

python test/test_quantization.py TestFakeQuantize

```

Imported from OSS

Reviewed By: albanD, bugra

Differential Revision: D26314173

fbshipit-source-id: 733c93a3951453e739b6ed46b72fbad2244f6e97

Summary:

Toward fixing https://github.com/pytorch/pytorch/issues/47624

~Step 1: add `TORCH_WARN_MAYBE` which can either warn once or every time in c++, and add a c++ function to toggle the value.

Step 2 will be to expose this to python for tests. Should I continue in this PR or should we take a different approach: add the python level exposure without changing any c++ code and then over a series of PRs change each call site to use the new macro and change the tests to make sure it is being checked?~

Step 1: add a python and c++ toggle to convert TORCH_WARN_ONCE into TORCH_WARN so the warnings can be caught in tests

Step 2: add a python-level decorator to use this toggle in tests

Step 3: (in future PRs): use the decorator to catch the warnings instead of `maybeWarnsRegex`

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48560

Reviewed By: ngimel

Differential Revision: D26171175

Pulled By: mruberry

fbshipit-source-id: d83c18f131d282474a24c50f70a6eee82687158f

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51706

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50280

As mentioned in gh-43874, this adds a `rounding_mode={'true', 'trunc', 'floor'}`

argument so `torch.div` can be used as a replacement for `floor_divide` during

the transitional period.

I've included dedicated kernels for truncated and floor division which

aren't strictly necessary for float, but do perform significantly better (~2x) than

doing true division followed by a separate rounding kernel.

Note: I introduce new overloads for `aten::div` instead of just adding a default

`rounding_mode` because various JIT passes rely on the exact operator schema.

Test Plan: Imported from OSS

Reviewed By: ngimel

Differential Revision: D26123271

Pulled By: mruberry

fbshipit-source-id: 51a83717602114597ec9c4d946e35a392eb01d46

Summary:

Implements `np.diff` for single order differences only:

- method and function variants for `diff` and function variant for `diff_out`

- supports out variant, but not in-place since shape changes

- adds OpInfo entry, and test in `test_torch`

- automatic autograd because we are using the `Math` dispatch

_Update: we only support Tensors for prepend and append in this PR. See discussion below and comments for more details._

Currently there is a quirk in the c++ API based on how this is implemented: it is not possible to specify scalar prepend and appends without also specifying all 4 arguments.

That is because the goal is to match NumPy's diff signature of `diff(int n=1, int dim=-1, Union[Scalar, Tensor] prepend=None, Union[Scalar, Tensor] append)=None` where all arguments are optional, positional and in the correct order.

There are a couple blockers. One is c++ ambiguity. This prevents us from simply doing `diff(int n=1, int dim=-1, Scalar? prepend=None, Tensor? append=None)` etc for all combinations of {Tensor, Scalar} x {Tensor, Scalar}.

Why not have append, prepend not have default args and then write out the whole power set of {Tensor, Scalar, omitted} x {Tensor, Scalar, omitted} you might ask. Aside from having to write 18 overloads, this is actually illegal because arguments with defaults must come after arguments without defaults. This would mean having to write `diff(prepend, append, n, dim)` which is not desired. Finally writing out the entire power set of all arguments n, dim, prepend, append is out of the question because that would actually involve 2 * 2 * 3 * 3 = 36 combinations. And if we include the out variant, that would be 72 overloads!

With this in mind, the current way this is implemented is actually to still do `diff(int n=1, int dim=-1, Scalar? prepend=None, Tensor? append=None)`. But also make use of `cpp_no_default_args`. The idea is to only have one of the 4 {Tensor, Scalar} x {Tensor, Scalar} provide default arguments for the c++ api, and add `cpp_no_default_args` for the remaining 3 overloads. With this, Python api works as expected, but some calls such as `diff(prepend=1)` won't work on c++ api.

We can optionally add 18 more overloads that cover the {dim, n, no-args} x {scalar-tensor, tensor-scalar, scalar-scalar} x {out, non-out} cases for c++ api. _[edit: counting is hard - just realized this number is still wrong. We should try to count the cases we do cover instead and subtract that from the total: (2 * 2 * 3 * 3) - (3 + 2^4) = 17. 3 comes from the 3 of 4 combinations of {tensor, scalar}^2 that we declare to be `cpp_no_default_args`, and the one remaining case that has default arguments has covers 2^4 cases. So actual count is 34 additional overloads to support all possible calls]_

_[edit: thanks to https://github.com/pytorch/pytorch/issues/50767 hacky_wrapper is no longer necessary; it is removed in the latest commit]_

hacky_wrapper was also necessary here because `Tensor?` will cause dispatch to look for the `const optional<Tensor>&` schema but also generate a `const Tensor&` declaration in Functions.h. hacky_wrapper allows us to define our function as `const Tensor&` but wraps it in optional for us, so this avoids both the errors while linking and loading.

_[edit: rewrote the above to improve clarity and correct the fact that we actually need 18 more overloads (26 total), not 18 in total to complete the c++ api]_

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50569

Reviewed By: H-Huang

Differential Revision: D26176105

Pulled By: soulitzer

fbshipit-source-id: cd8e77cc2de1117c876cd71c29b312887daca33f

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/51255

This is the same as #50561, but for per-channel fake_quant.

TODO before land write up better

Memory and performance impact (MobileNetV2): TODO

Performance impact (microbenchmarks): https://gist.github.com/vkuzo/fbe1968d2bbb79b3f6dd776309fbcffc

* forward pass on cpu: 512ms -> 750ms (+46%)

* forward pass on cuda: 99ms -> 128ms (+30%)

* note: the overall performance impact to training jobs should be minimal, because this is used for weights, and relative importance of fq is dominated by fq'ing the activations

* note: we can optimize the perf in a future PR by reading once and writing twice

Test Plan:

```

python test/test_quantization.py TestFakeQuantize.test_forward_per_channel_cachemask_cpu

python test/test_quantization.py TestFakeQuantize.test_forward_per_channel_cachemask_cuda

python test/test_quantization.py TestFakeQuantize.test_backward_per_channel_cachemask_cpu

python test/test_quantization.py TestFakeQuantize.test_backward_per_channel_cachemask_cuda

```

Imported from OSS

Reviewed By: jerryzh168

Differential Revision: D26117721

fbshipit-source-id: 798b59316dff8188a1d0948e69adf9e5509e414c

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/50561

Not for review yet, a bunch of TODOs need finalizing.

tl;dr; add an alternative implementation of `fake_quantize` which saves

a ask during the forward pass and uses it to calculate the backward.

There are two benefits:

1. the backward function no longer needs the input Tensor, and it can be

gc'ed earlier by autograd. On MobileNetV2, this reduces QAT overhead

by ~15% (TODO: link, and absolute numbers). We add an additional mask Tensor

to pass around, but its size is 4x smaller than the input tensor. A

future optimization would be to pack the mask bitwise and unpack in the

backward.

2. the computation of `qval` can be done only once in the forward and

reused in the backward. No perf change observed, TODO verify with better

matrics.

TODO: describe in more detail

Test Plan:

OSS / torchvision / MobileNetV2

```

python references/classification/train_quantization.py

--print-freq 1

--data-path /data/local/packages/ai-group.imagenet-256-smallest-side/prod/

--output-dir ~/nfs/pytorch_vision_tests/

--backend qnnpack

--epochs 5

TODO paste results here

```

TODO more

Imported from OSS

Reviewed By: ngimel

Differential Revision: D25918519

fbshipit-source-id: ec544ca063f984de0f765bf833f205c99d6c18b6

Summary:

Add a new device type 'XPU' ('xpu' for lower case) to PyTorch. Changes are needed for code related to device model and kernel dispatch, e.g. DeviceType, Backend and DispatchKey etc.

https://github.com/pytorch/pytorch/issues/48246

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49786

Reviewed By: mrshenli

Differential Revision: D25893962

Pulled By: ezyang

fbshipit-source-id: 7ff0a316ee34cf0ed6fc7ead08ecdeb7df4b0052

Summary:

This PR adds `torch.linalg.slogdet`.

Changes compared to the original torch.slogdet:

- Complex input now works as in NumPy

- Added out= variant (allocates temporary and makes a copy for now)

- Updated `slogdet_backward` to work with complex input

Ref. https://github.com/pytorch/pytorch/issues/42666

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49194

Reviewed By: VitalyFedyunin

Differential Revision: D25916959

Pulled By: mruberry

fbshipit-source-id: cf9be8c5c044870200dcce38be48cd0d10e61a48

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49502

It broke the OSS CI the last time I landed it, mostly cuda tests and python bindings.

Similar to permute_out, add the out variant of `aten::narrow` (slice in c2) which does an actual copy. `aten::narrow` creates a view, however, an copy is incurred when we call `input.contiguous` in the ops that follow `aten::narrow`, in `concat_add_mul_replacenan_clip`, `casted_batch_one_hot_lengths`, and `batch_box_cox`.

{F351263599}

Test Plan:

Unit test:

```

buck test //caffe2/aten:math_kernel_test

buck test //caffe2/test:sparse -- test_narrow

```

Benchmark with the adindexer model:

```

bs = 1 is neutral

Before:

I1214 21:32:51.919239 3285258 PyTorchPredictorBenchLib.cpp:209] PyTorch run finished. Milliseconds per iter: 0.0886948. Iters per second: 11274.6

After:

I1214 21:32:52.492352 3285277 PyTorchPredictorBenchLib.cpp:209] PyTorch run finished. Milliseconds per iter: 0.0888019. Iters per second: 11261

bs = 20 shows more gains probably because the tensors are bigger and therefore the cost of copying is higher

Before:

I1214 21:20:19.702445 3227229 PyTorchPredictorBenchLib.cpp:209] PyTorch run finished. Milliseconds per iter: 0.527563. Iters per second: 1895.51

After:

I1214 21:20:20.370173 3227307 PyTorchPredictorBenchLib.cpp:209] PyTorch run finished. Milliseconds per iter: 0.508734. Iters per second: 1965.67

```

Reviewed By: ajyu

Differential Revision: D25596290

fbshipit-source-id: da2f5a78a763895f2518c6298778ccc4d569462c

Summary:

This PR adds `torch.linalg.pinv`.

Changes compared to the original `torch.pinverse`:

* New kwarg "hermitian": with `hermitian=True` eigendecomposition is used instead of singular value decomposition.

* `rcond` argument can now be a `Tensor` of appropriate shape to apply matrix-wise clipping of singular values.

* Added `out=` variant (allocates temporary and makes a copy for now)

Ref. https://github.com/pytorch/pytorch/issues/42666

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48399

Reviewed By: zhangguanheng66

Differential Revision: D25869572

Pulled By: mruberry

fbshipit-source-id: 0f330a91d24ba4e4375f648a448b27594e00dead

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48965

This PR pulls `__torch_function__` checking entirely into C++, and adds a special `object_has_torch_function` method for ops which only have one arg as this lets us skip tuple construction and unpacking. We can now also do away with the Python side fast bailout for `Tensor` (e.g. `if any(type(t) is not Tensor for t in tensors) and has_torch_function(tensors)`) because they're actually slower than checking with the Python C API.

Test Plan: Existing unit tests. Benchmarks are in #48966

Reviewed By: ezyang

Differential Revision: D25590732

Pulled By: robieta

fbshipit-source-id: 6bd74788f06cdd673f3a2db898143d18c577eb42

Summary:

This PR adds `torch.linalg.inv` for NumPy compatibility.

`linalg_inv_out` uses in-place operations on provided `result` tensor.

I modified `apply_inverse` to accept tensor of Int instead of std::vector, that way we can write a function similar to `linalg_inv_out` but removing the error checks and device memory synchronization.

I fixed `lda` (leading dimension parameter which is max(1, n)) in many places to handle 0x0 matrices correctly.

Zero batch dimensions are also working and tested.

Ref https://github.com/pytorch/pytorch/issues/42666

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48261

Reviewed By: gchanan

Differential Revision: D25849590

Pulled By: mruberry

fbshipit-source-id: cfee6f1daf7daccbe4612ec68f94db328f327651

Summary:

This is related to https://github.com/pytorch/pytorch/issues/42666 .

I am opening this PR to have the opportunity to discuss things.

First, we need to consider the differences between `torch.svd` and `numpy.linalg.svd`:

1. `torch.svd` takes `some=True`, while `numpy.linalg.svd` takes `full_matrices=True`, which is effectively the opposite (and with the opposite default, too!)

2. `torch.svd` returns `(U, S, V)`, while `numpy.linalg.svd` returns `(U, S, VT)` (i.e., V transposed).

3. `torch.svd` always returns a 3-tuple; `numpy.linalg.svd` returns only `S` in case `compute_uv==False`

4. `numpy.linalg.svd` also takes an optional `hermitian=False` argument.

I think that the plan is to eventually deprecate `torch.svd` in favor of `torch.linalg.svd`, so this PR does the following:

1. Rename/adapt the old `svd` C++ functions into `linalg_svd`: in particular, now `linalg_svd` takes `full_matrices` and returns `VT`

2. Re-implement the old C++ interface on top of the new (by negating `full_matrices` and transposing `VT`).

3. The C++ version of `linalg_svd` *always* returns a 3-tuple (we can't do anything else). So, there is a python wrapper which manually calls `torch._C._linalg.linalg_svd` to tweak the return value in case `compute_uv==False`.

Currently, `linalg_svd_backward` is broken because it has not been adapted yet after the `V ==> VT` change, but before continuing and spending more time on it I wanted to make sure that the general approach is fine.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45562

Reviewed By: H-Huang

Differential Revision: D25803557

Pulled By: mruberry

fbshipit-source-id: 4966f314a0ba2ee391bab5cda4563e16275ce91f

Summary:

I am opening this PR early to have a place to discuss design issues.

The biggest difference between `torch.qr` and `numpy.linalg.qr` is that the former `torch.qr` takes a boolean parameter `some=True`, while the latter takes a string parameter `mode='reduced'` which can be one of the following:

`reduced`

this is completely equivalent to `some=True`, and both are the default.

`complete`

this is completely equivalent to `some=False`.

`r`

this returns only `r` instead of a tuple `(r, q)`. We have already decided that we don't want different return types depending on the parameters, so I propose to return `(r, empty_tensor)` instead. I **think** that in this mode it will be impossible to implement the backward pass, so we should raise an appropriate error in that case.

`raw`

in this mode, it returns `(h, tau)` instead of `(q, r)`. Internally, `h` and `tau` are obtained by calling lapack's `dgeqrf` and are later used to compute the actual values of `(q, r)`. The numpy docs suggest that these might be useful to call other lapack functions, but at the moment none of them is exposed by numpy and I don't know how often it is used in the real world.

I suppose the implementing the backward pass need attention to: the most straightforward solution is to use `(h, tau)` to compute `(q, r)` and then use the normal logic for `qr_backward`, but there might be faster alternatives.

`full`, `f`

alias for `reduced`, deprecated since numpy 1.8.0

`economic`, `e`

similar to `raw but it returns only `h` instead of `(h, tau). Deprecated since numpy 1.8.0

To summarize:

* `reduce`, `complete` and `r` are straightforward to implement.

* `raw` needs a bit of extra care, but I don't know how much high priority it is: since it is used rarely, we might want to not support it right now and maybe implement it in the future?

* I think we should just leave `full` and `economic` out, and possibly add a note to the docs explaining what you need to use instead

/cc mruberry

Pull Request resolved: https://github.com/pytorch/pytorch/pull/47764

Reviewed By: ngimel

Differential Revision: D25708870

Pulled By: mruberry

fbshipit-source-id: c25c70a23a02ec4322430d636542041e766ebe1b

Summary:

This PR adds `torch.linalg.inv` for NumPy compatibility.

`linalg_inv_out` uses in-place operations on provided `result` tensor.

I modified `apply_inverse` to accept tensor of Int instead of std::vector, that way we can write a function similar to `linalg_inv_out` but removing the error checks and device memory synchronization.

I fixed `lda` (leading dimension parameter which is max(1, n)) in many places to handle 0x0 matrices correctly.

Zero batch dimensions are also working and tested.

Ref https://github.com/pytorch/pytorch/issues/42666

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48261

Reviewed By: ngimel

Differential Revision: D25690129

Pulled By: mruberry

fbshipit-source-id: edb2d03721f22168c42ded8458513cb23dfdc712

Summary:

Fixes https://github.com/pytorch/pytorch/issues/49214

**BC-Breaking**

Before this PR, `%=` didn't actually do the operation inplace and returned a new tensor.

After this PR, `%=` operation is actually inplace and the modified input tensor is returned.

Before PR,

```python

>>> import torch

>>> a = torch.tensor([11,12,13])

>>> id(a)

139627966219328

>>> a %= 10

>>> id(a)

139627966219264

```

After PR,

```python

>>> import torch

>>> a = torch.tensor([11,12,13])

>>> id(a)

139804702425280

>>> a %= 10

>>> id(a)

139804702425280

```

Pull Request resolved: https://github.com/pytorch/pytorch/pull/49390

Reviewed By: izdeby

Differential Revision: D25560423

Pulled By: zou3519

fbshipit-source-id: 2b92bfda260582aa4ac22c4025376295e51f854e

Summary:

Related https://github.com/pytorch/pytorch/issues/38349

Implement NumPy-like function `torch.broadcast_to` to broadcast the input tensor to a new shape.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48997

Reviewed By: anjali411, ngimel

Differential Revision: D25663937

Pulled By: mruberry

fbshipit-source-id: 0415c03f92f02684983f412666d0a44515b99373

Summary:

This PR adds `torch.linalg.solve`.

`linalg_solve_out` uses in-place operations on the provided result tensor.

I modified `apply_solve` to accept tensor of Int instead of std::vector, that way we can write a function similar to `linalg_solve_out` but removing the error checks and device memory synchronization.

In comparison to `torch.solve` this routine accepts 1-dimensional tensors and batches of 1-dim tensors for the right-hand-side term. `torch.solve` requires it to be at least 2-dimensional.

Ref. https://github.com/pytorch/pytorch/issues/42666

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48456

Reviewed By: izdeby

Differential Revision: D25562222

Pulled By: mruberry

fbshipit-source-id: a9355c029e2442c2e448b6309511919631f9e43b

Summary:

This PR is to change the `aten::native_layer_norm` and `aten::native_layer_norm_backward` signature to match `torch.layer_norm` definition. The current definition doesn't provide enough information to the PyTorch JIT to fuse layer_norm during training.

`native_layer_norm(X, gamma, beta, M, N, eps)` =>

`native_layer_norm(input, normalized_shape, weight, bias, eps)`

`native_layer_norm_backward(dY, X, mean, rstd, gamma, M, N, grad_input_mask)` =>

`native_layer_norm_backward(dY, input, normalized_shape, mean, rstd, weight, bias, grad_input_mask)`

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48971

Reviewed By: izdeby

Differential Revision: D25574070

Pulled By: ngimel

fbshipit-source-id: 23e2804295a95bda3f1ca6b41a1e4c5a3d4d31b4

Summary:

Ref https://github.com/pytorch/pytorch/issues/42175

This removes the 4 deprecated spectral functions: `torch.{fft,rfft,ifft,irfft}`. `torch.fft` is also now imported by by default.

The actual `at::native` functions are still used in `torch.stft` so can't be full removed yet. But will once https://github.com/pytorch/pytorch/issues/47601 has been merged.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48594

Reviewed By: heitorschueroff

Differential Revision: D25298929

Pulled By: mruberry

fbshipit-source-id: e36737fe8192fcd16f7e6310f8b49de478e63bf0

Summary:

Fixes https://github.com/pytorch/pytorch/issues/43837

This adds a `torch.broadcast_shapes()` function similar to Pyro's [broadcast_shape()](7c2c22c10d/pyro/distributions/util.py (L151)) and JAX's [lax.broadcast_shapes()](https://jax.readthedocs.io/en/test-docs/_modules/jax/lax/lax.html). This helper is useful e.g. in multivariate distributions that are parameterized by multiple tensors and we want to `torch.broadcast_tensors()` but the parameter tensors have different "event shape" (e.g. mean vectors and covariance matrices). This helper is already heavily used in Pyro's distribution codebase, and we would like to start using it in `torch.distributions`.

- [x] refactor `MultivariateNormal`'s expansion logic to use `torch.broadcast_shapes()`

- [x] add unit tests for `torch.broadcast_shapes()`

- [x] add docs

cc neerajprad

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43935

Reviewed By: bdhirsh

Differential Revision: D25275213

Pulled By: neerajprad

fbshipit-source-id: 1011fdd597d0a7a4ef744ebc359bbb3c3be2aadc

Summary:

This PR adds `torch.linalg.matrix_rank`.

Changes compared to the original `torch.matrix_rank`:

- input with the complex dtype is supported

- batched input is supported

- "symmetric" kwarg renamed to "hermitian"

Should I update the documentation for `torch.matrix_rank`?

For the input with no elements (for example 0×0 matrix), the current implementation is divergent from NumPy. NumPy stumbles on not defined max for such input, here I chose to return appropriately sized tensor of zeros. I think that's mathematically a correct thing to do.

Ref https://github.com/pytorch/pytorch/issues/42666.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/48206

Reviewed By: albanD

Differential Revision: D25211965

Pulled By: mruberry

fbshipit-source-id: ae87227150ab2cffa07f37b4a3ab228788701837

Summary:

The approach is to simply reuse `torch.repeat` but adding one more functionality to tile, which is to prepend 1's to reps arrays if there are more dimensions to the tensors than the reps given in input. Thus for a tensor of shape (64, 3, 24, 24) and reps of (2, 2) will become (1, 1, 2, 2), which is what NumPy does.

I've encountered some instability with the test on my end, where I could get a random failure of the test (due to, sometimes, random value of `self.dim()`, and sometimes, segfaults). I'd appreciate any feedback on the test or an explanation for this instability so I can this.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/47974

Reviewed By: ngimel

Differential Revision: D25148963

Pulled By: mruberry

fbshipit-source-id: bf63b72c6fe3d3998a682822e669666f7cc97c58

Summary:

This PR adds `torch.linalg.eigh`, and `torch.linalg.eigvalsh` for NumPy compatibility.

The current `torch.symeig` uses (on CPU) a different LAPACK routine than NumPy (`syev` vs `syevd`). Even though it shouldn't matter in practice, `torch.linalg.eigh` uses `syevd` (as NumPy does).

Ref https://github.com/pytorch/pytorch/issues/42666

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45526

Reviewed By: gchanan

Differential Revision: D25022659

Pulled By: mruberry

fbshipit-source-id: 3676b77a121c4b5abdb712ad06702ac4944e900a

Summary:

Adds ldexp operator for https://github.com/pytorch/pytorch/issues/38349

I'm not entirely sure the changes to `NamedRegistrations.cpp` were needed but I saw other operators in there so I added it.

Normally the ldexp operator is used along with the frexp to construct and deconstruct floating point values. This is useful for performing operations on either the mantissa and exponent portions of floating point values.

Sleef, std math.h, and cuda support both ldexp and frexp but not for all data types. I wasn't able to figure out how to get the iterators to play nicely with a vectorized kernel so I have left this with just the normal CPU kernel for now.

This is the first operator I'm adding so please review with an eye for errors.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45370

Reviewed By: mruberry

Differential Revision: D24333516

Pulled By: ranman

fbshipit-source-id: 2df78088f00aa9789aae1124eda399771e120d3f

Summary:

Reference https://github.com/pytorch/pytorch/issues/38349

Delegates to `torch.transpose` (not sure what is the best way to alias)

TODO:

* [x] Add test

* [x] Add documentation

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46041

Reviewed By: gchanan

Differential Revision: D25022816

Pulled By: mruberry

fbshipit-source-id: c80223d081cef84f523ef9b23fbedeb2f8c1efc5

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/47225

Summary

-------

This PR implements Tensor.new_empty_strided. Many of our torch.* factory

functions have a corresponding new_* method (e.g., torch.empty and

torch.new_empty), but there is no corresponding method to

torch.empty_strided. This PR adds one.

Motivation

----------

The real motivation behind this is for vmap to be able to work through

CopySlices. CopySlices shows up a lot in double backwards because a lot

of view functions have backward formulas that perform view+inplace.

e0fd590ec9/torch/csrc/autograd/functions/tensor.cpp (L78-L106)

To support vmap through CopySlices, the approach in this stack is to:

- add `Tensor.new_empty_strided` and replace `empty_strided` in

CopySlices with that so that we can propagate batch information.

- Make some slight modifications to AsStridedBackward (and add

as_strided batching rule)

Please let me know if it would be better if I squashed everything related to

supporting vmap over CopySlices together into a single big PR.

Test Plan

---------

- New tests.

Test Plan: Imported from OSS

Reviewed By: ejguan

Differential Revision: D24741688

Pulled By: zou3519

fbshipit-source-id: b688047d2eb3f92998896373b2e9d87caf2c4c39

Summary:

This PR adds a function for calculating the Kronecker product of tensors.

The implementation is based on `at::tensordot` with permutations and reshape.

Tests pass.

TODO:

- [x] Add more test cases

- [x] Write documentation

- [x] Add entry `common_methods_invokations.py`

Ref. https://github.com/pytorch/pytorch/issues/42666

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45358

Reviewed By: mrshenli

Differential Revision: D24680755

Pulled By: mruberry

fbshipit-source-id: b1f8694589349986c3abfda3dc1971584932b3fa

Summary:

Fixes https://github.com/pytorch/pytorch/issues/46373

As noted in https://github.com/pytorch/pytorch/issues/46373, there needs to be a flag passed into the engine that indicates whether it was executed through the backward api or grad api. Tentatively named the flag `accumulate_grad` since functionally, backward api accumulates grad into .grad while grad api captures the grad and returns it.

Moving changes not necessary to the python api (cpp, torchscript) to a new PR.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46855

Reviewed By: ngimel

Differential Revision: D24649054

Pulled By: soulitzer

fbshipit-source-id: 6925d5a67d583eeb781fc7cfaec807c410e1fc65

Summary:

Related https://github.com/pytorch/pytorch/issues/38349

This PR implements `column_stack` as the composite ops of `torch.reshape` and `torch.hstack`, and makes `row_stack` as the alias of `torch.vstack`.

Todo

- [x] docs

- [x] alias pattern for `row_stack`

Pull Request resolved: https://github.com/pytorch/pytorch/pull/46313

Reviewed By: ngimel

Differential Revision: D24585471

Pulled By: mruberry

fbshipit-source-id: 62fc0ffd43d051dc3ecf386a3e9c0b89086c1d1c

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45847

Original PR here https://github.com/pytorch/pytorch/pull/45084. Created this one because I was having problems with ghstack.

Test Plan: Imported from OSS

Reviewed By: mruberry

Differential Revision: D24136629

Pulled By: heitorschueroff

fbshipit-source-id: dd7c7540a33f6a19e1ad70ba2479d5de44abbdf9

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/45586

Test Plan: The unit test has been softened to be less platform sensitive.

Reviewed By: mruberry

Differential Revision: D24025415

Pulled By: robieta

fbshipit-source-id: ee986933b984e736cf1525e1297de6b21ac1f0cf

Summary:

This PR allows Timer to collect deterministic instruction counts for (some) snippets. Because of the intrusive nature of Valgrind (effectively replacing the CPU with an emulated one) we have to perform our measurements in a separate process. This PR writes a `.py` file containing the Timer's `setup` and `stmt`, and executes it within a `valgrind` subprocess along with a plethora of checks and error handling. There is still a bit of jitter around the edges due to the Python glue that I'm using, but the PyTorch signal is quite good and thus this provides a low friction way of getting signal. I considered using JIT as an alternative, but:

A) Python specific overheads (e.g. parsing) are important

B) JIT might do rewrites which would complicate measurement.

Consider the following bit of code, related to https://github.com/pytorch/pytorch/issues/44484:

```

from torch.utils._benchmark import Timer

counts = Timer(

"x.backward()",

setup="x = torch.ones((1,)) + torch.ones((1,), requires_grad=True)"

).collect_callgrind()

for c, fn in counts[:20]:

print(f"{c:>12} {fn}")

```

```

812800 ???:_dl_update_slotinfo

355600 ???:update_get_addr

308300 work/Python/ceval.c:_PyEval_EvalFrameDefault'2

304800 ???:__tls_get_addr

196059 ???:_int_free

152400 ???:__tls_get_addr_slow

138400 build/../c10/core/ScalarType.h:c10::typeMetaToScalarType(caffe2::TypeMeta)

126526 work/Objects/dictobject.c:_PyDict_LoadGlobal

114268 ???:malloc

101400 work/Objects/unicodeobject.c:PyUnicode_FromFormatV

85900 work/Python/ceval.c:_PyEval_EvalFrameDefault

79946 work/Objects/typeobject.c:_PyType_Lookup

72000 build/../c10/core/Device.h:c10::Device::validate()

70000 /usr/include/c++/8/bits/stl_vector.h:std::vector<at::Tensor, std::allocator<at::Tensor> >::~vector()

66400 work/Objects/object.c:_PyObject_GenericGetAttrWithDict

63000 ???:pthread_mutex_lock

61200 work/Objects/dictobject.c:PyDict_GetItem

59800 ???:free

58400 work/Objects/tupleobject.c:tupledealloc

56707 work/Objects/dictobject.c:lookdict_unicode_nodummy

```

Moreover, if we backport this PR to 1.6 (just copy the `_benchmarks` folder) and load those counts as `counts_1_6`, then we can easily diff them:

```

print(f"Head instructions: {sum(c for c, _ in counts)}")

print(f"1.6 instructions: {sum(c for c, _ in counts_1_6)}")

count_dict = {fn: c for c, fn in counts}

for c, fn in counts_1_6:

_ = count_dict.setdefault(fn, 0)

count_dict[fn] -= c

count_diffs = sorted([(c, fn) for fn, c in count_dict.items()], reverse=True)

for c, fn in count_diffs[:15] + [["", "..."]] + count_diffs[-15:]:

print(f"{c:>8} {fn}")

```

```

Head instructions: 7609547

1.6 instructions: 6059648

169600 ???:_dl_update_slotinfo

101400 work/Objects/unicodeobject.c:PyUnicode_FromFormatV

74200 ???:update_get_addr

63600 ???:__tls_get_addr

46800 work/Python/ceval.c:_PyEval_EvalFrameDefault

33512 work/Objects/dictobject.c:_PyDict_LoadGlobal

31800 ???:__tls_get_addr_slow

31700 build/../aten/src/ATen/record_function.cpp:at::RecordFunction::RecordFunction(at::RecordScope)

28300 build/../torch/csrc/utils/python_arg_parser.cpp:torch::FunctionSignature::parse(_object*, _object*, _object*, _object**, bool)

27800 work/Objects/object.c:_PyObject_GenericGetAttrWithDict

27401 work/Objects/dictobject.c:lookdict_unicode_nodummy

24115 work/Objects/typeobject.c:_PyType_Lookup

24080 ???:_int_free

21700 work/Objects/dictobject.c:PyDict_GetItemWithError

20700 work/Objects/dictobject.c:PyDict_GetItem

...

-3200 build/../c10/util/SmallVector.h:at::TensorIterator::binary_op(at::Tensor&, at::Tensor const&, at::Tensor const&, bool)

-3400 build/../aten/src/ATen/native/TensorIterator.cpp:at::TensorIterator::resize_outputs(at::TensorIteratorConfig const&)

-3500 /usr/include/c++/8/x86_64-redhat-linux/bits/gthr-default.h:std::unique_lock<std::mutex>::unlock()

-3700 build/../torch/csrc/utils/python_arg_parser.cpp:torch::PythonArgParser::raw_parse(_object*, _object*, _object**)

-4207 work/Objects/obmalloc.c:PyMem_Calloc

-4500 /usr/include/c++/8/bits/stl_vector.h:std::vector<at::Tensor, std::allocator<at::Tensor> >::~vector()

-4800 build/../torch/csrc/autograd/generated/VariableType_2.cpp:torch::autograd::VariableType::add__Tensor(at::Tensor&, at::Tensor const&, c10::Scalar)

-5000 build/../c10/core/impl/LocalDispatchKeySet.cpp:c10::impl::ExcludeDispatchKeyGuard::ExcludeDispatchKeyGuard(c10::DispatchKey)

-5300 work/Objects/listobject.c:PyList_New

-5400 build/../torch/csrc/utils/python_arg_parser.cpp:torch::FunctionParameter::check(_object*, std::vector<pybind11::handle, std::allocator<pybind11::handle> >&)

-5600 /usr/include/c++/8/bits/std_mutex.h:std::unique_lock<std::mutex>::unlock()

-6231 work/Objects/obmalloc.c:PyMem_Free

-6300 work/Objects/listobject.c:list_repeat

-11200 work/Objects/listobject.c:list_dealloc

-28900 build/../torch/csrc/utils/python_arg_parser.cpp:torch::FunctionSignature::parse(_object*, _object*, _object**, bool)

```

Remaining TODOs:

* Include a timer in the generated script for cuda sync.

* Add valgrind to CircleCI machines and add a unit test.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44717

Reviewed By: soumith

Differential Revision: D24010742

Pulled By: robieta

fbshipit-source-id: df6bc765f8efce7193893edba186cd62b4b23623

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44433

Not entirely sure why, but changing the type of beta from `float` to `double in autocast_mode.cpp and FunctionsManual.h fixes my compiler errors, failing instead at link time

fixing some type errors, updated fn signature in a few more files

removing my usage of Scalar, making beta a double everywhere instead

Test Plan: Imported from OSS

Reviewed By: mrshenli

Differential Revision: D23636720

Pulled By: bdhirsh

fbshipit-source-id: caea2a1f8dd72b3b5fd1d72dd886b2fcd690af6d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45149

The choose_qparams_optimized calculates the the optimized qparams.

It uses a greedy approach to nudge the min and max and calculate the l2 norm

and tries to minimize the quant error by doing `torch.norm(x-fake_quant(x,s,z))`

Test Plan: Imported from OSS

Reviewed By: raghuramank100

Differential Revision: D23848060

fbshipit-source-id: c6c57c9bb07664c3f1c87dd7664543e09f634aee

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43680

As discussed [here](https://github.com/pytorch/pytorch/issues/43342),

adding in a Python-only implementation of the triplet-margin loss that takes a

custom distance function. Still discussing whether this is necessary to add to

PyTorch Core.

Test Plan:

python test/run_tests.py

Imported from OSS

Reviewed By: albanD

Differential Revision: D23363898

fbshipit-source-id: 1cafc05abecdbe7812b41deaa1e50ea11239d0cb

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/39955

resolves https://github.com/pytorch/pytorch/issues/36323 by adding `torch.sgn` for complex tensors.

`torch.sgn` returns `x/abs(x)` for `x != 0` and returns `0 + 0j` for `x==0`

This PR doesn't test the correctness of the gradients. It will be done as a part of auditing all the ops in future once we decide the autograd behavior (JAX vs TF) and add gradchek.

Test Plan: Imported from OSS

Reviewed By: mruberry

Differential Revision: D23460526

Pulled By: anjali411

fbshipit-source-id: 70fc4e14e4d66196e27cf188e0422a335fc42f92

Summary:

These alias are consistent with NumPy. Note that C++'s naming would be different (std::multiplies and std::divides), and that PyTorch's existing names (mul and div) are consistent with Python's dunders.

This also improves the instructions for adding an alias to clarify that dispatch keys should be removed when copying native_function.yaml entries to create the alias entries.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44463

Reviewed By: ngimel

Differential Revision: D23670782

Pulled By: mruberry

fbshipit-source-id: 9f1bdf8ff447abc624ff9e9be7ac600f98340ac4

Summary:

Ref https://github.com/pytorch/pytorch/issues/42175, fixes https://github.com/pytorch/pytorch/issues/34797

This adds complex support to `torch.stft` and `torch.istft`. Note that there are really two issues with complex here: complex signals, and returning complex tensors.

## Complex signals and windows

`stft` currently assumes all signals are real and uses `rfft` with `onesided=True` by default. Similarly, `istft` always takes a complex fourier series and uses `irfft` to return real signals.

For `stft`, I now allow complex inputs and windows by calling the full `fft` if either are complex. If the user gives `onesided=True` and the signal is complex, then this doesn't work and raises an error instead. For `istft`, there's no way to automatically know what to do when `onesided=False` because that could either be a redundant representation of a real signal or a complex signal. So there, the user needs to pass the argument `return_complex=True` in order to use `ifft` and get a complex result back.

## stft returning complex tensors

The other issue is that `stft` returns a complex result, represented as a `(... X 2)` real tensor. I think ideally we want this to return proper complex tensors but to preserver BC I've had to add a `return_complex` argument to manage this transition. `return_complex` defaults to false for real inputs to preserve BC but defaults to True for complex inputs where there is no BC to consider.

In order to `return_complex` by default everywhere without a sudden BC-breaking change, a simple transition plan could be:

1. introduce `return_complex`, defaulted to false when BC is an issue but giving a warning. (this PR)

2. raise an error in cases where `return_complex` defaults to false, making it a required argument.

3. change `return_complex` default to true in all cases.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43886

Reviewed By: glaringlee

Differential Revision: D23760174

Pulled By: mruberry

fbshipit-source-id: 2fec4404f5d980ddd6bdd941a63852a555eb9147

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44393

torch.quantile now correctly propagates nan and implemented torch.nanquantile similar to numpy.nanquantile.

Test Plan: Imported from OSS

Reviewed By: albanD

Differential Revision: D23649613

Pulled By: heitorschueroff

fbshipit-source-id: 5201d076745ae1237cedc7631c28cf446be99936

Summary:

This PR adds the following aliaes:

- not_equal for torch.ne

- greater for torch.gt

- greater_equal for torch.ge

- less for torch.lt

- less_equal for torch.le

This aliases are consistent with NumPy's naming for these functions.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43870

Reviewed By: zou3519

Differential Revision: D23498975

Pulled By: mruberry

fbshipit-source-id: 78560df98c9f7747e804a420c1e53fd1dd225002

Summary:

Adds two more "missing" NumPy aliases: arctanh and arcsinh, and simplifies the dispatch of other arc* aliases.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43762

Reviewed By: ngimel

Differential Revision: D23396370

Pulled By: mruberry

fbshipit-source-id: 43eb0c62536615fed221d460c1dec289526fb23c

Summary:

Add a max/min operator that only return values.

## Some important decision to discuss

| **Question** | **Current State** |

|---------------------------------------|-------------------|

| Expose torch.max_values to python? | No |

| Remove max_values and only keep amax? | Yes |

| Should amax support named tensors? | Not in this PR |

## Numpy compatibility

Reference: https://numpy.org/doc/stable/reference/generated/numpy.amax.html

| Parameter | PyTorch Behavior |

|--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|-----------------------------------------------------------------------------------|

| `axis`: None or int or tuple of ints, optional. Axis or axes along which to operate. By default, flattened input is used. If this is a tuple of ints, the maximum is selected over multiple axes, instead of a single axis or all the axes as before. | Named `dim`, behavior same as `torch.sum` (https://github.com/pytorch/pytorch/issues/29137) |

| `out`: ndarray, optional. Alternative output array in which to place the result. Must be of the same shape and buffer length as the expected output. | Same |

| `keepdims`: bool, optional. If this is set to True, the axes which are reduced are left in the result as dimensions with size one. With this option, the result will broadcast correctly against the input array. | implemented as `keepdim` |

| `initial`: scalar, optional. The minimum value of an output element. Must be present to allow computation on empty slice. | Not implemented in this PR. Better to implement for all reductions in the future. |

| `where`: array_like of bool, optional. Elements to compare for the maximum. | Not implemented in this PR. Better to implement for all reductions in the future. |

**Note from numpy:**

> NaN values are propagated, that is if at least one item is NaN, the corresponding max value will be NaN as well. To ignore NaN values (MATLAB behavior), please use nanmax.

PyTorch has the same behavior

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43092

Reviewed By: ngimel

Differential Revision: D23360705

Pulled By: mruberry

fbshipit-source-id: 5bdeb08a2465836764a5a6fc1a6cc370ae1ec09d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/42708

Add rowwise prune pytorch op.

This operator introduces sparsity to the 'weights' matrix with the help

of the importance indicator 'mask'.

A row is considered important and not pruned if the mask value for that

particular row is 1(True) and not important otherwise.

Test Plan:

buck test caffe2/torch/fb/sparsenn:test -- rowwise_prune

buck test caffe2/test:pruning

Reviewed By: supriyar

Differential Revision: D22849432

fbshipit-source-id: 456f4f77c04158cdc3830b2e69de541c7272a46d

Summary:

Related to https://github.com/pytorch/pytorch/issues/38349

Implement NumPy-like functions `maximum` and `minimum`.

The `maximum` and `minimum` functions compute input tensors element-wise, returning a new array with the element-wise maxima/minima.

If one of the elements being compared is a NaN, then that element is returned, both `maximum` and `minimum` functions do not support complex inputs.

This PR also promotes the overloaded versions of torch.max and torch.min, by re-dispatching binary `torch.max` and `torch.min` to `torch.maximum` and `torch.minimum`.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/42579

Reviewed By: mrshenli

Differential Revision: D23153081

Pulled By: mruberry

fbshipit-source-id: 803506c912440326d06faa1b71964ec06775eac1

Summary:

This adds the torch.arccosh alias and updates alias testing to validate the consistency of the aliased and original operations. The alias testing is also updated to run on CPU and CUDA, which revealed a memory leak when tracing (see https://github.com/pytorch/pytorch/issues/43119).

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43107

Reviewed By: ngimel

Differential Revision: D23156472

Pulled By: mruberry

fbshipit-source-id: 6155fac7954fcc49b95e7c72ed917c85e0eabfcd

Summary:

This PR:

- updates test_op_normalization.py, which verifies that aliases are correctly translated in the JIT