Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45946

Also, make these functions static - they are not using anything from

`LoopNest` and can be applied to any `Stmt`.

Test Plan: Imported from OSS

Reviewed By: bertmaher

Differential Revision: D24156002

Pulled By: ZolotukhinM

fbshipit-source-id: 1c7d205f85a2a1684e07eb836af662f10d0a50fc

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45936

`Tensor` has been a view into a `Function` that was supposed to be used

for a more general case when we have multiple computations over the same

domain (aka multiple output functions). We have never got to a point

where we need this and now have other ideas in mind on how to support

this case if need be. For now, let's just nuke `Function` to reduce the

overall system complexity.

The change should not affect any existing behavior.

Test Plan: Imported from OSS

Reviewed By: bertmaher

Differential Revision: D24153214

Pulled By: ZolotukhinM

fbshipit-source-id: 26d5f11db5d661ff5e1135f4a49eff1c6d4c1bd5

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45900

Use `torch::cuda::nccl::all2all` from `ProcessGroupNCCL.cpp`

Fixes https://github.com/pytorch/pytorch/issues/42517

Here is a NCCL dependency graph:

```

libnccl.a --> libtorch_cuda.so ---> libtorch_python.so
    |                                    ^
    |                                    |
    --------> libc10d.a -----------------

```

When a static library is linked into a dynamic library or an executable, the linker removes all unused/duplicate symbols from that library unless the `-whole-archive` option is used. Before https://github.com/pytorch/pytorch/pull/42514, all NCCL calls made from `ProcessGroupNCCL.cpp` were also made from `torch/csrc/cuda/nccl.cpp`, which is compiled as part of `libtorch_cuda.so`.

But adding `ncclSend`|`ncclRecv` to `ProcessGroupNCCL.cpp` forced the linker to embed those into `libtorch_python.so`, which also resulted in linking other dependent symbols into the library.

This PR adds the `nccl[Send|Recv]` calls to `torch_cuda.so` by implementing `all2all` in `torch_cuda`, and thus avoids double linking the static library.

A more involved, but less error-prone, solution would be to use the wrappers exported in the `torch::cuda::nccl` namespace instead of making direct NCCL API calls.

Test Plan: Imported from OSS

Reviewed By: mingzhe09088

Differential Revision: D24138011

Pulled By: malfet

fbshipit-source-id: 33305197fc7d8707b7fd3a66b543f7733b9241a1

Summary:

This is a rewrite of the Registerizer, supporting scalar replacement in *vastly* more situations. As a refresher, the registerizer does this:

Before:

```

A[0] = 0;

for (int x = 0; x < 10; x++) {

A[0] = (A[0]) + x;

}

```

After:

```

int A_ = 0;

for (int x = 0; x < 10; x++) {

A_ = x + A_;

}

A[0] = A_;

```

This can greatly reduce the number of accesses to main memory in a kernel. There are cases where doing this gets complicated, and the existing implementation bails out whenever it encounters multiple partial overlaps of the same buffer, or conditional accesses under any circumstances. This makes it much less useful for complex (i.e. real-world rather than example) kernels. This new version should work optimally in almost all cases (I have a few minor follow-ups).
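
To make the memory-access saving concrete, here is a minimal Python sketch that mirrors the Before/After kernels above. It is not the registerizer itself: `CountingBuffer` and the two functions are hypothetical helpers that only count loads and stores on a buffer.

```python
class CountingBuffer:
    """A list-like buffer that counts element loads and stores (illustrative only)."""

    def __init__(self, size):
        self.data = [0] * size
        self.loads = 0
        self.stores = 0

    def __getitem__(self, i):
        self.loads += 1
        return self.data[i]

    def __setitem__(self, i, value):
        self.stores += 1
        self.data[i] = value


def accumulate_in_memory(buf):
    # "Before": every iteration loads and stores A[0].
    buf[0] = 0
    for x in range(10):
        buf[0] = buf[0] + x


def accumulate_in_scalar(buf):
    # "After": the running value lives in a local scalar and is written back once.
    a_ = 0
    for x in range(10):
        a_ = x + a_
    buf[0] = a_


before, after = CountingBuffer(1), CountingBuffer(1)
accumulate_in_memory(before)
accumulate_in_scalar(after)
print("before:", before.data[0], before.loads, before.stores)
print("after: ", after.data[0], after.loads, after.stores)
```

Both variants compute the same value; the scalar version touches the buffer once instead of on every iteration.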

I tested this version extensively, and found quite a few bugs in the original implementation I'd prefer not to back port fixes for - so I'm in favor of landing this even if we don't immediately see a perf win. I believe the killer app for this kind of optimization is fused reductions and we haven't enabled many examples of that yet.

It is safe to move two accesses of the same Tensor element to a local scalar Var if between all usages of the element there are no other Loads or Stores that may refer to it. In the comments I refer to this as overlapping the access, or "cutting" the existing AccessInfo. In the case where a candidate for registerization is cut, it may be possible to finalize the access early by writing it back to the Tensor and then create a new scalar variable after the overlapping access is complete. We will attempt to do this when it saves memory accesses.

There are a few cases that make this more challenging:

- For: Loops change the number of real usages of a buffer by the loop extent, but only if we can pull the definition and finalization of the scalar variable out of the loop block. For loops often create accesses which are conditional on a loop var and will overlap large ranges of elements.

E.g. Before:

```

A[0] = 2;

for (int x1 = 0; x1 < 10; x1++) {

A[0] = (A[0]) + x1;

}

for (int x2 = 1; x2 < 10; x2++) {

A[x2] = A[x2 - 1];

}

for (int x3 = 0; x3 < 10; x3++) {

A[0] = (A[0]) + x3;

}

```

After:

```

int A_1 = 2;

for (int x1 = 0; x1 < 10; x1++) {

A_1 = A_1 + x1;

}

A[0] = A_1;

for (int x2 = 1; x2 < 10; x2++) {

A[x2] = A[x2 - 1];

}

int A_2 = A[0];

for (int x3 = 0; x3 < 10; x3++) {

A_2 = A_2 + x3;

}

A[0] = A_2;

```

- Cond: Conditions complicate lifting scalars out of internal scopes. Generally we cannot lift an access outside of a conditional scope unless there is already a reference to that same access at the higher scope, since we don't know if the condition was guarding an array access not safe at the higher scope. In the comments I refer to this as the condition "hiding" the access, and the outer access "unhiding" it.

E.g. this example:

```

if (x<5 ? 1 : 0) {

A[x] = (A[x]) + 1;

}

A[x] = (A[x]) + 1;

if (x>5 ? 1 : 0) {

A[x] = (A[x]) + 1;

}

```

The A[x] access can be registerized due to the unconditional access between the two conditions:

```

int A_1 = A[x];

if (x<5 ? 1 : 0) {

A_1 = A_1 + 1;

}

A_1 = A_1 + 1;

if (x>5 ? 1 : 0) {

A_1 = A_1 + 1;

}

A[x] = A_1;

```

But this example has no accesses that can be registerized:

```

if (x<5 ? 1 : 0) {

A[x] = (A[x]) + 1;

}

if (x>5 ? 1 : 0) {

A[x] = (A[x]) + 1;

}

```

- IfThenElse: Same situation as Cond, except since IfThenElse is an Expr rather than a Stmt we cannot insert the scalar definition or finalizer within the conditional scope. Accesses inside an IfThenElse can be safely combined with external accesses but cannot exist completely within.

E.g. in this example `B[x]` cannot be registerized as there is no safe place to define it.

```

A[x] = IfThenElse(x<3 ? 1 : 0, (B[x]) + (B[x]), B[x]);

```

But the equivalent kernel using Cond can be registerized:

```

if (x<3 ? 1 : 0) {

float B_1 = B[x];

A[x] = B_1 + B_1;

} else {

A[x] = B[x];

}

```

- Let: Accesses dependent on local variables via Let Stmts, or loop vars, cannot be raised outside of the scope of the dependent var.

E.g. no accesses in this example can be registerized:

```

for (int x = 0; x < 10; x++) {

int y = 30;

A[y] = x + (A[y]);

}

```

But they can in this example:

```

int y = 30;

for (int x = 0; x < 10; x++) {

A[y] = x + (A[y]);

}

```

**Testing**

The majority of this PR is tests, over 3k lines of them, because there are many different rules to consider and they can interact together more or less arbitrarily. I'd greatly appreciate any ideas for situations we could encounter that are not covered by the tests.

**Performance**

Still working on it, will update. In many fastrnns sub-kernels this diff reduces the total number of calls to Store or Load by 4x, but since those kernels use Concat very heavily (meaning a lot of branches), the actual number encountered by any particular thread on GPU is reduced only slightly. Overall perf improved by a very small amount.

Reductions are where this optimization should really shine, and in particular the more complex the kernel gets (with extra fusions, etc.) the better this version of the registerizer should do compared to the existing version.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45574

Reviewed By: albanD

Differential Revision: D24151517

Pulled By: nickgg

fbshipit-source-id: 9f0b2d98cc213eeea3fda16fee3d144d49fd79ae

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45929

We were checking `and` when we should have been checking `or`.

Test Plan: Imported from OSS

Reviewed By: bertmaher

Differential Revision: D24148804

Pulled By: eellison

fbshipit-source-id: 9c394ea10ac91a588169d934b1e3208512c71b9d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45857

Fix for https://github.com/pytorch/pytorch/issues/45627

Op was calling `insert` instead of `insert_or_assign`, so it wouldn't overwrite an existing key.
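
The difference between the two calls can be sketched with a Python analogy. This is only an illustration of the semantics, not the op's code: the helper names below are hypothetical stand-ins for the C++ `insert` and `insert_or_assign` behavior.

```python
def insert(d, key, value):
    """Analogy for C++ `insert`: leaves an existing entry untouched."""
    d.setdefault(key, value)


def insert_or_assign(d, key, value):
    """Analogy for C++ `insert_or_assign`: overwrites an existing entry."""
    d[key] = value


d_insert = {"a": 1}
insert(d_insert, "a", 2)

d_assign = {"a": 1}
insert_or_assign(d_assign, "a", 2)

print(d_insert, d_assign)
```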

Test Plan: Imported from OSS

Reviewed By: bertmaher

Differential Revision: D24148805

Pulled By: eellison

fbshipit-source-id: bf39c71d5d928890b82cff1a9a0985dc47c1ffac

Summary:

This PR fixes a bug when torch is used with pyspark, by converting namedtuples in `torch.utils.data._utils.worker` into classes.

Before this PR, creating an IterableDataset and then running `list(torch.utils.data.DataLoader(MyIterableDataset(...), num_workers=2))` does not terminate if pyspark is also being used. This is because pyspark hijacks namedtuples to make them pickleable ([see here](https://github.com/apache/spark/blob/master/python/pyspark/serializers.py#L370)). As a result, `_IterableDatasetStopIteration` is modified, and the check at [this line in dataloader.py](5472426b9f/torch/utils/data/dataloader.py (L1072)) is never true.

Converting the namedtuples to classes avoids this hijack and allows the iteration to correctly stop when signaled.
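
A rough sketch of the pattern, assuming a stop sentinel that is recognised with `isinstance`. `StopSignal` and `collect` are hypothetical names for illustration and are not the actual dataloader code; the point is only that a plain class is not a namedtuple, so namedtuple patching does not affect it.

```python
class StopSignal:
    """Plain class used as a per-worker stop sentinel (illustrative name)."""

    def __init__(self, worker_id):
        self.worker_id = worker_id


def collect(messages, num_workers):
    """Gather data items until every worker has sent its stop sentinel."""
    results, stopped = [], 0
    for msg in messages:
        if isinstance(msg, StopSignal):
            stopped += 1
            if stopped == num_workers:
                break
        else:
            results.append(msg)
    return results


stream = [1, StopSignal(0), 2, StopSignal(1), 3]
print(collect(stream, num_workers=2))
```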

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45870

Reviewed By: ngimel

Differential Revision: D24162748

Pulled By: albanD

fbshipit-source-id: 52f009784500fa594b2bbd15a8b2e486e00c37fb

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45780

When training jobs running with NCCL fail, it is sometimes hard to

debug the cause of the failure, and our logging doesn't always provide enough

information to narrow down the issue.

To improve the debugging experience, I've enhanced our logging to add a lot

more information about what the ProcessGroup is doing under the hood.

Closes: https://github.com/pytorch/pytorch/issues/45310

Sample output:

```

> I1002 15:18:48.539551 1822062 ProcessGroupNCCL.cpp:528] [Rank 2] NCCL watchdog thread started!

> I1002 15:18:48.539533 1821946 ProcessGroupNCCL.cpp:492] [Rank 2] ProcessGroupNCCL initialized with following options:

> NCCL_ASYNC_ERROR_HANDLING: 0

> NCCL_BLOCKING_WAIT: 1

> TIMEOUT(ms): 1000

> USE_HIGH_PRIORITY_STREAM: 0

> I1002 15:18:51.080338 1822035 ProcessGroupNCCL.cpp:530] [Rank 1] NCCL watchdog thread terminated normally

> I1002 15:18:52.161218 1821930 ProcessGroupNCCL.cpp:385] [Rank 0] Wrote aborted communicator id to store: NCCLABORTEDCOMM:a0e17500002836080c8384c50000000100000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000

> I1002 15:18:52.161238 1821930 ProcessGroupNCCL.cpp:388] [Rank 0] Caught collective operation timeout for work: WorkNCCL(OpType=ALLREDUCE, TensorShape=[10], Timeout(ms)=1000)

> I1002 15:18:52.162120 1821957 ProcessGroupNCCL.cpp:530] [Rank 0] NCCL watchdog thread terminated normally

> I1002 15:18:58.539937 1822062 ProcessGroupNCCL.cpp:649] [Rank 2] Found key in store: NCCLABORTEDCOMM:a0e17500002836080c8384c50000000100000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000, from rank: 0, aborting appropriate communicators

> I1002 15:19:34.740937 1822062 ProcessGroupNCCL.cpp:662] [Rank 2] Aborted communicators for key in store: NCCLABORTEDCOMM:a0e17500002836080c8384c50000000100000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000

> I1002 15:19:34.741678 1822062 ProcessGroupNCCL.cpp:530] [Rank 2] NCCL watchdog thread terminated normally

```

ghstack-source-id: 113731163

Test Plan: waitforbuildbot

Reviewed By: osalpekar

Differential Revision: D24093032

fbshipit-source-id: 240b03562f8ccccc3d872538f5e331df598ceca7

Summary:

Currently, a GraphRoot instance doesn't have an associated stream. Streaming backward synchronization logic assumes the instance ran on the default stream, and tells consumer ops to sync with the default stream. If the gradient the GraphRoot instance passes to consumer backward ops was populated on a non-default stream, we have a race condition.

The race condition can exist even if the user doesn't give a manually populated gradient:

```python

with torch.cuda.stream(side_stream):

# loss.backward() implicitly synthesizes a one-element 1.0 tensor on side_stream

# GraphRoot passes it to consumers, but consumers first sync on default stream, not side_stream.

loss.backward()

# Internally to backward(), streaming-backward logic takes over, stuff executes on the same stream it ran on in forward,

# and the side_stream context is irrelevant. GraphRoot's interaction with its first consumer(s) is the spot where

# the side_stream context causes a problem.

```

This PR fixes the race condition by associating a GraphRoot instance, at construction time, with the current stream(s) on the device(s) of the grads it will pass to consumers. (I think this relies on GraphRoot executing in the main thread, before backward thread(s) fork, because the grads were populated on the main thread.)

The test demonstrates the race condition. It fails reliably without the PR's GraphRoot diffs and passes with the GraphRoot diffs.

With the GraphRoot diffs, manually populating an incoming-gradient arg for `backward` (or `torch.autograd.grad`) and the actual call to `autograd.backward` will have the same stream-semantics relationship as any other pair of ops:

```python

# implicit population is safe

with torch.cuda.stream(side_stream):

loss.backward()

# explicit population in side stream then backward in side stream is safe

with torch.cuda.stream(side_stream):

kickoff_grad = torch.ones_like(loss)

loss.backward(gradient=kickoff_grad)

# explicit population in one stream then backward kickoff in another stream

# is NOT safe, even with this PR's diffs, but that unsafety is consistent with

# stream-semantics relationship of any pair of ops

kickoff_grad = torch.ones_like(loss)

with torch.cuda.stream(side_stream):

loss.backward(gradient=kickoff_grad)

# Safe, as you'd expect for any pair of ops

kickoff_grad = torch.ones_like(loss)

side_stream.wait_stream(torch.cuda.current_stream())

with torch.cuda.stream(side_stream):

loss.backward(gradient=kickoff_grad)

```

This PR also adds the last three examples above to cuda docs and references them from autograd docstrings.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45787

Reviewed By: nairbv

Differential Revision: D24138376

Pulled By: albanD

fbshipit-source-id: bc4cd9390f9f0358633db530b1b09f9c1080d2a3

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45686

This uses an online graphviz viewer. The code is simpler, and

since it embeds all the data in the url you can just click the url

from your terminal.

Test Plan: Imported from OSS

Reviewed By: ZolotukhinM

Differential Revision: D24059157

Pulled By: zdevito

fbshipit-source-id: 94d755cc2986c4226180b09ba36f8d040dda47cc

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45665

Fixes #43944

Note that the codegen doesn't use a proper parser so, in the same way as with lists, the string `, ` cannot appear in defaults or it will be interpreted as a splitting point between arguments.
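
The limitation can be illustrated with a small sketch, assuming a splitter that simply splits on `", "`. The function and the signature strings below are made up for illustration and are not the codegen's real input format.

```python
def naive_split_arguments(signature):
    """Split an argument list on ', ' without any real parsing."""
    return signature.split(", ")


# Works when no default value contains ', '.
ok = naive_split_arguments("Tensor self, int dim=0")

# Breaks when a default contains ', ': the string default is cut in two.
broken = naive_split_arguments("Tensor self, str sep=', '")

print(ok)
print(broken)
```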

Test Plan: Imported from OSS

Reviewed By: albanD

Differential Revision: D24141835

Pulled By: ezyang

fbshipit-source-id: 578127861fd2504917f4486c44100491a2c40343

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45752

Use the torch.quint4x2 dtype introduced in the previous PR to create 4-bit packed tensors.

These packed tensors can be directly consumed by the operator.

Serialization of the packed tensors is supported using torchbind custom class.

Module support will follow in a later PR.

Test Plan:

python test/test_quantization.py TestEmbeddingBagOps

Imported from OSS

Reviewed By: jerryzh168

Differential Revision: D24120996

fbshipit-source-id: 2639353b3343ebc69e058b5ba237d3fc56728e1c

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45639

`StaticRuntime::run_individual` mimics the caffe2 operator benchmark `SimpleNet::TEST_Benchmark`, so we can get accurate information on the operator breakdown. We found that the PyTorch AutogradProfiler adds a lot of overhead to small models, such as the adindexer precomputation_merge net: 100% for batch_size 1, 33% for batch_size 20. This implementation adds very little overhead, as shown in the test plan.

Test Plan: Test results are fb internal only.

Reviewed By: yinghai, dzhulgakov

Differential Revision: D24012088

fbshipit-source-id: f32eb420aace93e2de421a15e4209fce6a3d90f0

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45262

**Summary**

This commit adds an API for ignoring arbitrary module attributes during

scripting. A class attribute named `ignored_attributes` containing names

of attributes to ignore can be added to the class of the instance being

scripted. Attributes ignored in this fashion cannot be used in

`forward`, methods used by `forward` or by `exported` methods. They

are, however, copied to the `RecursiveScriptModule` wrapper and can be

used by `ignored` methods and regular Python code.

**Test Plan**

This commit adds unit tests to `TestScriptPy3` to test this new API.

Test Plan: Imported from OSS

Reviewed By: eellison

Differential Revision: D23971882

Pulled By: SplitInfinity

fbshipit-source-id: 8c81fb415fde7b78aa2f87e5d83a477e876a7cc3

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45714

Hit the problem when writing a test like the following:

```

class M(...):

def forward(self, x):

x = x.some_op()

return x

```

we need to know the scope of `x` to figure out the qconfig for `x`

Test Plan: Imported from OSS

Reviewed By: z-a-f

Differential Revision: D24069959

fbshipit-source-id: 95ac8963c802ebce5d0e54d55f5ebb42085ca8a6

Summary:

**BC-breaking note**

For ease of exposition let a_min be the value of the "min" argument to clamp, and a_max be the value of the "max" argument to clamp.

This PR changes the behavior of torch.clamp to always compute min(max(a, a_min), a_max). torch.clamp currently computes this in its vectorized CPU specializations:

78b95b6204/aten/src/ATen/cpu/vec256/vec256_double.h (L304)

but in other places it clamps differently:

78b95b6204/aten/src/ATen/cpu/vec256/vec256_base.h (L624)

78b95b6204/aten/src/ATen/native/cuda/UnaryOpsKernel.cu (L160)

These implementations are the same when a_min < a_max, but divergent when a_min > a_max. This divergence is easily triggered:

```

t = torch.arange(200).to(torch.float)

torch.clamp(t, 4, 2)[0]

: tensor(2.)

torch.clamp(t.cuda(), 4, 2)[0]

: tensor(4., device='cuda:0')

torch.clamp(torch.tensor(0), 4, 2)

: tensor(4)

```

This PR makes the behavior consistent with NumPy's clip. C++'s std::clamp's behavior is undefined when a_min > a_max, but Clang's std::clamp will return 10 in this case (although the program, per the above comment, is in error). Python has no standard clamp implementation.
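
A scalar sketch of why the order of `min` and `max` matters. `clamp_min_of_max` is the formula this PR standardizes on; `clamp_max_of_min` is an assumed alternative ordering used only to show how two implementations can diverge, and is not a claim about the exact code at the linked lines.

```python
def clamp_min_of_max(a, a_min, a_max):
    """min(max(a, a_min), a_max): the formula described above."""
    return min(max(a, a_min), a_max)


def clamp_max_of_min(a, a_min, a_max):
    """max(min(a, a_max), a_min): an alternative ordering (assumption)."""
    return max(min(a, a_max), a_min)


# Same result whenever a_min <= a_max.
agree = all(
    clamp_min_of_max(a, 2, 4) == clamp_max_of_min(a, 2, 4) for a in range(-3, 8)
)

# Different results once a_min > a_max.
print(agree, clamp_min_of_max(0, 4, 2), clamp_max_of_min(0, 4, 2))
```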

**PR Summary**

Fixes the discrepancy between the AVX, CUDA, and base vector implementations of clamp, so that all implementations are consistent and use the `min(max_vec, max(min_vec, x))` formula, making clamp equivalent to numpy.clip in all implementations.

The same fix as in https://github.com/pytorch/pytorch/issues/32587 but isolated to the kernel change only, so that the internal team can benchmark.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43288

Reviewed By: colesbury

Differential Revision: D24079453

Pulled By: mruberry

fbshipit-source-id: 67f30d2f2c86bbd3e87080b32f00e8fb131a53f7

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45712

Eager mode will still be able to use functional leaky relu, but it will be less accurate than

LeakyReLU module.

FX graph mode will support both leaky relu functional and module

Test Plan: Imported from OSS

Reviewed By: z-a-f

Differential Revision: D24069961

fbshipit-source-id: 8d91c3c50c0bcd068ba3072378ebb4da9549be3b

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45899

Use function polymorphism to avoid repeated casts

I.e. instead of using `NCCL_CHECK(from_nccl_result(` add variant of the function that takes `ncclResult_t` as input argument

Add non-pointer variant of `to_nccl_comm` to avoid `*to_nccl_comm(&comm)` pattern

Test Plan: Imported from OSS

Reviewed By: walterddr

Differential Revision: D24138012

Pulled By: malfet

fbshipit-source-id: 7f62a03e108cbe455910e86e894afdd1c27e8ff1

Summary:

Clarified that the `Categorical` distribution will actually accept input of any arbitrary tensor shape, not just 1D and 2D tensors.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45804

Reviewed By: dzhulgakov

Differential Revision: D24125415

Pulled By: VitalyFedyunin

fbshipit-source-id: 5fa1f07911bd85e172199b28d79763428db3a0f4

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45867

In most cases the lock ordering was to acquire a lock in local autograd and

then acquire a lock in DistAutogradContext.

In case of `set_exception_without_signal` the lock order was in reverse and as

a result we saw potential deadlock issues in our TSAN tests. To fix this, I

removed the lock and instead just used std::atomic exchange.

In addition to this, I fixed TestE2E to ensure that we use the appropriate

timeout.

TestE2EProcessGroup was flaky for these two reasons and now is fixed.

ghstack-source-id: 113592709

Test Plan: waitforbuildbot.

Reviewed By: albanD

Differential Revision: D24120962

fbshipit-source-id: 12447b84ceae772b91e9a183c90d1e6340f44e66

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44922

For NCCL send/recv operations, we will create NCCL communicator on demand following the same design as how it's currently done for collective operations.

ghstack-source-id: 113592757

Test Plan: to add

Reviewed By: pritamdamania87

Differential Revision: D23773726

fbshipit-source-id: 0d47c29d670ddc07f7181e8485af0e02e2c9cfaf

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44921

This diff adds support for Process Group point-to-point operations on NCCL backend based on ncclSend/ncclRecv. See https://github.com/pytorch/pytorch/issues/43995 for more context.

ghstack-source-id: 113592785

Test Plan: unittest

Reviewed By: jiayisuse

Differential Revision: D23709848

fbshipit-source-id: cdf38050379ecbb10450f3394631317b41163258

Summary:

Instead of dynamically loading `caffe2_nvrtc`, lazyNVRTC provides the same functionality by binding all the hooks to lazy bind implementation, very similar to the shared library jump tables:

On the first call, each function from the list tries to get a global handle to the respective shared library and replace itself with the dynamically resolved symbol, using the following template:

```

auto fn = reinterpret_cast<decltype(&NAME)>(getCUDALibrary().sym(C10_SYMBOLIZE(NAME)));

if (!fn)

throw std::runtime_error("Can't get" ## NAME);

lazyNVRTC.NAME = fn;

return fn(...)

```
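
The same self-replacing stub idea can be sketched in Python. This is only an analogy for the template above: `LazyTable` and its resolver are hypothetical, and `math` stands in for the shared library being resolved.

```python
import math


class LazyTable:
    """Table of hooks; each hook resolves the real function on first call."""

    def __init__(self, resolver, names):
        self.resolve_count = 0
        self._resolver = resolver
        for name in names:
            setattr(self, name, self._make_stub(name))

    def _make_stub(self, name):
        def stub(*args, **kwargs):
            fn = self._resolver(name)
            if fn is None:
                raise RuntimeError("Can't get " + name)
            self.resolve_count += 1
            # Replace the stub with the resolved symbol so later calls go direct.
            setattr(self, name, fn)
            return fn(*args, **kwargs)

        return stub


table = LazyTable(lambda name: getattr(math, name, None), ["sqrt", "missing"])
first = table.sqrt(9.0)   # resolves, rebinds, then calls math.sqrt
second = table.sqrt(16.0)  # calls math.sqrt directly
print(first, second, table.resolve_count)
```

After the first call the table entry is the resolved function itself, so the lookup cost is paid once per symbol.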

Fixes https://github.com/pytorch/pytorch/issues/31985

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45674

Reviewed By: ezyang

Differential Revision: D24073946

Pulled By: malfet

fbshipit-source-id: 1479a75e5200e14df003144625a859d312885874

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45763

**Summary**

This commit updates the documentation for `EmbeddingBag` to say that for

bags of constant length with no per-sample weights, the class is

equivalent to `Embedding` followed by `torch.sum(dim=1)`. The current

docs say `dim=0` and this is readily falsifiable.

**Test Plan**

1) Tried `Embedding` + `sum` with `dim`=0,1 in interpreter and compared

to `EmbeddingBag`

```

>>> import torch

>>> weights = torch.nn.Parameter(torch.randn(10, 3))

>>> e = torch.nn.Embedding(10, 3)

>>> eb = torch.nn.EmbeddingBag(10, 3, mode="sum")

>>> e.weight = weights

>>> eb.weight = weights

# Use 2D inputs because we are trying to test the case in which bags have constant length

>>> inputs = torch.LongTensor([[4,1,2,7],[5,6,0,3]])

>>> eb(inputs)

tensor([[-2.5497, -0.1556, -0.5166],

[ 2.2528, -0.3627, 2.5822]], grad_fn=<EmbeddingBagBackward>)

>>> torch.sum(e(inputs), dim=0)

tensor([[ 1.6181, -0.8739, 0.8168],

[ 0.0295, 2.3274, 1.2558],

[-0.7958, -0.4228, 0.5961],

[-1.1487, -1.5490, -0.6031]], grad_fn=<SumBackward1>)

>>> torch.sum(e(inputs), dim=1)

tensor([[-2.5497, -0.1556, -0.5166],

[ 2.2528, -0.3627, 2.5822]], grad_fn=<SumBackward1>)

```

So clearly `torch.sum` with `dim=0` is not correct here.
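
The shape argument can also be checked without torch. The sketch below uses nested Python lists and made-up weights (not the values from the session above) to show that summing the looked-up embeddings over dim 1 gives one vector per bag, while dim 0 gives one vector per position.

```python
# 10 x 3 embedding table with made-up values.
weights = [[float(row * 3 + col) for col in range(3)] for row in range(10)]
inputs = [[4, 1, 2, 7], [5, 6, 0, 3]]  # 2 bags, each of length 4


def embed(indices):
    """Look up each index: result has shape (bags, bag_length, 3)."""
    return [[weights[i] for i in bag] for bag in indices]


def sum_dim1(t):
    """Sum over the bag dimension: shape (bags, 3)."""
    return [[sum(vec[c] for vec in bag) for c in range(3)] for bag in t]


def sum_dim0(t):
    """Sum over the batch dimension: shape (bag_length, 3)."""
    return [
        [sum(t[b][p][c] for b in range(len(t))) for c in range(3)]
        for p in range(len(t[0]))
    ]


def embedding_bag_sum(indices):
    """Reference: per-bag sum of embedding rows."""
    return [[sum(weights[i][c] for i in bag) for c in range(3)] for bag in indices]


embedded = embed(inputs)
print(sum_dim1(embedded) == embedding_bag_sum(inputs))
print(len(sum_dim0(embedded)), len(sum_dim1(embedded)))
```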

2) Built docs and viewed in browser.

*Before*

<img width="882" alt="Captura de Pantalla 2020-10-02 a la(s) 12 26 20 p m" src="https://user-images.githubusercontent.com/4392003/94963035-557be100-04ac-11eb-986c-088965ac3050.png">

*After*

<img width="901" alt="Captura de Pantalla 2020-10-05 a la(s) 11 26 51 a m" src="https://user-images.githubusercontent.com/4392003/95117732-ea294d80-06fd-11eb-9d6b-9b4e6c805cd0.png">

**Fixes**

This commit closes #43197.

Test Plan: Imported from OSS

Reviewed By: ansley

Differential Revision: D24118206

Pulled By: SplitInfinity

fbshipit-source-id: cd0d6b5db33e415d8e04ba04f2c7074dcecf3eee

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45471

Instead of assuming that 'torch' is the only module used by generated code,

use the qualified names of builtin functions to generate import statements

for all builtins. This allows user-captured functions to also get code generated correctly.
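
A minimal sketch of the idea, assuming each function's `__module__` attribute is used as its qualified module name. `generate_imports` is a hypothetical helper and not the actual code generator.

```python
import json
import math


def generate_imports(functions):
    """Emit one import statement per module that the given functions come from."""
    modules = sorted({fn.__module__ for fn in functions})
    # Names in `builtins` are always available, so they need no import.
    return [f"import {module}" for module in modules if module != "builtins"]


imports = generate_imports([math.sqrt, json.dumps, len])
print("\n".join(imports))
```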

Test Plan: Imported from OSS

Reviewed By: jamesr66a

Differential Revision: D23978696

Pulled By: zdevito

fbshipit-source-id: ecbff150e3de38532531cdadbfe4965468f29a38

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45766

As per subj, making KeyError message more verbose.

Test Plan:

Verified that breakage can be successfully investigated with verbose error message

unit tests

Reviewed By: esqu1

Differential Revision: D24080362

fbshipit-source-id: f4e22a78809e5cff65a69780d5cbbc1e8b11b2e5

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45642

Prior to https://github.com/pytorch/pytorch/pull/45181, initializing a

NCCL process group would work even if no GPUs were present. Now that

init_process_group calls `barrier()`, this fails.

In general the problem was that we could initialize ProcessGroupNCCL without

GPUs and then if we called a method like `barrier()` the process would crash

since we do % numGPUs resulting in division by zero.
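
The failure mode is easy to reproduce in isolation. The sketch below is a Python analogy with hypothetical function names and a hypothetical error message, showing the unguarded modulo next to a version that fails early with a clear error.

```python
def pick_device_unguarded(rank, num_gpus):
    """Unguarded modulo: fails with a division error when num_gpus is 0."""
    return rank % num_gpus


def pick_device_guarded(rank, num_gpus):
    """Fail early with a descriptive error instead (message is illustrative)."""
    if num_gpus == 0:
        raise RuntimeError("NCCL process group requires at least one GPU")
    return rank % num_gpus


try:
    pick_device_unguarded(3, 0)
    unguarded_error = None
except ZeroDivisionError as exc:
    unguarded_error = type(exc).__name__

print(unguarded_error, pick_device_guarded(3, 2))
```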

ghstack-source-id: 113490343

Test Plan: waitforbuildbot

Reviewed By: osalpekar

Differential Revision: D24038839

fbshipit-source-id: a1f1db52cabcfb83e06c1a11ae9744afbf03f8dc

Summary:

Rename jobs for testing GraphExecutor configurations to something a little bit more sensical.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45715

Reviewed By: ezyang, anjali411

Differential Revision: D24114344

Pulled By: Krovatkin

fbshipit-source-id: 89e5f54aaebd88f8c5878e060e983c6f1f41b9bb

Summary:

The torchbind tests didn't work because somehow we missed the rename of caffe2_gpu to torch_... (hip for us) in https://github.com/pytorch/pytorch/issues/20774 (merged 2019-06-13, oops) and still tried to link against it.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45426

Reviewed By: VitalyFedyunin

Differential Revision: D24112439

Pulled By: walterddr

fbshipit-source-id: a66a574e63714728183399c543d2dafbd6c028f7

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45776

Splitting out backend and custom class registration into their own library is

not currently implemented in fbcode, so detect that we are running tests in

fbcode and disable those tests.

Test Plan: buck test mode/no-gpu mode/dev caffe2/test:jit

Reviewed By: smessmer

Differential Revision: D24085871

fbshipit-source-id: 1fcc0547880bc4be59428e2810b6a7f6e50ef798

Summary:

* Add a pass at end of runCleanupPasses to annotate `aten::warn` so that each has its unique id

* Enhanced interpreter so that it tracks which `aten::warn` calls have already been executed and skips them

* Improved insertInstruction so that it correctly checks for overflow
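
The first two points can be sketched as follows, assuming each warn site carries a unique integer id. `TinyInterpreter` is a hypothetical stand-in and not the JIT interpreter.

```python
class TinyInterpreter:
    """Emits each annotated warning at most once (illustrative only)."""

    def __init__(self):
        self.seen_warn_ids = set()
        self.emitted = []

    def run_warn(self, warn_id, message):
        # Skip warn sites that have already fired once.
        if warn_id in self.seen_warn_ids:
            return
        self.seen_warn_ids.add(warn_id)
        self.emitted.append(message)


interp = TinyInterpreter()
for _ in range(3):
    interp.run_warn(0, "first warning site")
    interp.run_warn(1, "second warning site")

print(interp.emitted)
```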

Fixes https://github.com/pytorch/pytorch/issues/45108

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45382

Reviewed By: mrshenli

Differential Revision: D24060677

Pulled By: gmagogsfm

fbshipit-source-id: 9221bc55b9ce36b374bdf614da3fe47496b481c1

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45726

FB has an old internal platform that uses some random llvm version

that looks sort of like llvm 7. I've guarded that with the appropriate

LLVM_VERSION_PATCH.

I've also swapped out some of our uses of ThreadSafeModule/ThreadSafeContext

for the variants without ThreadSafe in the name. As far as I can tell we

weren't using the bundled locks anyways, but I'm like 85% sure this is OK since

we compile under the Torch JIT lock anyways.

Test Plan: unit tests

Reviewed By: ZolotukhinM, asuhan

Differential Revision: D24072697

fbshipit-source-id: 7f56b9f3cbe5e6d54416acdf73876338df69ddb2

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44220

Closes https://github.com/pytorch/pytorch/issues/44009

Currently if a dataloader returns objects created with a

collections.namedtuple, this will incorrectly be cast to a tuple. As a result, if we have data of these types, there can be runtime errors during the forward pass if the module is expecting a named tuple.

Fix this in

`scatter_gather.py` to resolve the issue reported in

https://github.com/pytorch/pytorch/issues/44009
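
A small sketch of the general technique, assuming a namedtuple is detected through its `_fields` attribute and rebuilt with its own type. The helper names are hypothetical and this is not the code in `scatter_gather.py`.

```python
from collections import namedtuple


def is_namedtuple(obj):
    """Heuristic check: a tuple subclass that exposes `_fields`."""
    return isinstance(obj, tuple) and hasattr(obj, "_fields")


def map_naive(fn, obj):
    """Loses the namedtuple type: always returns a plain tuple."""
    return tuple(fn(x) for x in obj)


def map_preserving(fn, obj):
    """Rebuilds namedtuples with their original type."""
    if is_namedtuple(obj):
        return type(obj)(*(fn(x) for x in obj))
    return tuple(fn(x) for x in obj)


Point = namedtuple("Point", ["x", "y"])
naive = map_naive(lambda v: v * 2, Point(1, 2))
preserved = map_preserving(lambda v: v * 2, Point(1, 2))
print(type(naive).__name__, type(preserved).__name__, preserved.x)
```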

ghstack-source-id: 113423287

Test Plan: CI

Reviewed By: colesbury

Differential Revision: D23536752

fbshipit-source-id: 3838e60162f29ebe424e83e474c4350ae838180b

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45543

This PR adds documentation for the c10d Store to the public docs. Previously these docs were missing although we exposed a lightly-used (but potentially useful) Python API for our distributed key-value store.

ghstack-source-id: 113409195

Test Plan: Will verify screenshots by building the docs.

Reviewed By: pritamdamania87

Differential Revision: D24005598

fbshipit-source-id: 45c3600e7c3f220710e99a0483a9ce921d75d044

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45464

Using Symbols to find arguments requires one to generate a nonsense symbol for inputs which don't already have one. The intention of symbols appears to be something like an interned string, but the namespace component doesn't apply to an argument. In order to access the arguments by name without adding new symbols, versions of those functions taking std::string input were added. These can be shown to be valid based on the existing codepath. Additionally, a hasNamedInput convenience function was added to remove the need for a try/catch block in user code.

The primary motivation is to be able to easily handle the variable number of arguments in glow, so that the arange op may be implemented.

Reviewed By: eellison

Differential Revision: D23972315

fbshipit-source-id: 3e0b41910cf07e916186f1506281fb221725a91b

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44678

This is a prototype PR that introduces 4 bit qtensors. The new dtype added for this is c10::quint4x2

The underlying storage for this is still uint8_t, so we pack 2 4-bit values in a byte while quantizing it.

This change uses most of the existing scaffolding for qtensor storage. We allocate storage

based on the dtype before creating a new qtensor.

It also adds a dispatch mechanism for this dtype so we can use this to get the bitwidth, qmin and qmax info

while quantizing and packing the qtensor (when we add 2-bit qtensor)

Kernels that use this dtype should be aware of the packing format.
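To make the packing idea concrete, here is a minimal pure-Python sketch of storing two 4-bit values in one byte. The helper names `pack_4bit` and `unpack_4bit` are hypothetical, and the nibble order (first value in the low nibble) is an assumption for illustration; the actual layout used by `c10::quint4x2` kernels may differ.

```python
def pack_4bit(values):
    """Pack ints in [0, 15] into bytes, two values per byte."""
    if any(not 0 <= v <= 15 for v in values):
        raise ValueError("4-bit values must be in [0, 15]")
    # Pad odd-length input with a zero nibble so values pair up.
    padded = list(values) + [0] * (len(values) % 2)
    # Assumption: the first value of each pair occupies the low nibble.
    return bytes(lo | (hi << 4) for lo, hi in zip(padded[0::2], padded[1::2]))


def unpack_4bit(packed, count):
    """Recover `count` 4-bit values from bytes produced by pack_4bit."""
    out = []
    for byte in packed:
        out.append(byte & 0x0F)  # low nibble
        out.append(byte >> 4)    # high nibble
    return out[:count]


vals = [1, 2, 15, 0, 7]
packed = pack_4bit(vals)
print(len(packed), unpack_4bit(packed, len(vals)))
```

Five values fit in three bytes, which is why a kernel reading this storage has to know the packing convention before it can index individual elements.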

Test Plan:

Locally tested

```

import os

import torch

x = torch.ones((100, 100), dtype=torch.float)
qx_8bit = torch.quantize_per_tensor(x, scale=1.0, zero_point=2, dtype=torch.quint8)
qx = torch.quantize_per_tensor(x, scale=1.0, zero_point=2, dtype=torch.quint4x2)

# Save each tensor, report the serialized file size, then clean up.
torch.save(x, "temp.p")
print('Size float (B):', os.path.getsize("temp.p"))
os.remove('temp.p')

torch.save(qx_8bit, "temp.p")
print('Size quantized 8bit(B):', os.path.getsize("temp.p"))
os.remove('temp.p')

torch.save(qx, "temp.p")
print('Size quantized 4bit(B):', os.path.getsize("temp.p"))
os.remove('temp.p')

```

Size float (B): 40760

Size quantized 8bit(B): 10808

Size quantized 4bit(B): 5816
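As a sanity check on these numbers, the raw payload for a 100x100 tensor can be computed by hand and compared with the reported file sizes. The sketch below only does that arithmetic; attributing the remainder to serialization overhead (container metadata, quantization parameters) is an assumption, not something measured here.

```python
numel = 100 * 100

# Raw element storage in bytes for each dtype.
payload = {
    "float32": numel * 4,    # 4 bytes per element
    "quint8": numel * 1,     # 1 byte per element
    "quint4x2": numel // 2,  # 2 elements per byte
}

# File sizes reported in the test plan above.
reported = {"float32": 40760, "quint8": 10808, "quint4x2": 5816}

overhead = {name: reported[name] - payload[name] for name in payload}
for name in payload:
    print(f"{name:>9}: payload={payload[name]:>6} B, overhead={overhead[name]} B")
```

The overhead stays within a few hundred bytes in all three cases, so the file size tracks the payload closely and the 4-bit file is roughly half the 8-bit one.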

Imported from OSS

Reviewed By: raghuramank100

Differential Revision: D23993134

fbshipit-source-id: 073bf262f9680416150ba78ed2d932032275946d

Summary:

This modifies the default bailout depth to 20, which gives reasonable performance in the benchmarks we considered (fastrnns, maskrcnn, hub/benchmark, etc.)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45710

Reviewed By: robieta

Differential Revision: D24071861

Pulled By: Krovatkin

fbshipit-source-id: 472aacc136f37297b21f577750c1d60683a6c81e

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45693

**Summary**

This commit updates the docstring for

`torch.distributions.NegativeBinomial` to better match actual behaviour.

In particular, the parameter currently documented as probability of

success is actually probability of failure.

**Test Plan**

1) Ran the code from the issue to make sure this is still an issue (it

is)

2) `make html` and viewed the docs in a browser.

*Before*

<img width="879" alt="Captura de Pantalla 2020-10-01 a la(s) 1 35 28 p m" src="https://user-images.githubusercontent.com/4392003/94864456-db3a5680-03f0-11eb-977e-3bab0fb9c206.png">

*After*

<img width="877" alt="Captura de Pantalla 2020-10-01 a la(s) 2 12 24 p m" src="https://user-images.githubusercontent.com/4392003/94864478-e42b2800-03f0-11eb-965a-51493ca27c80.png">

**Fixes**

This commit closes #42449.

Test Plan: Imported from OSS

Reviewed By: robieta

Differential Revision: D24071048

Pulled By: SplitInfinity

fbshipit-source-id: d345b4de721475dbe26233e368af62eb57a47970

Summary:

We are trying to build libtorch statically (BUILD_SHARED_LIBS=OFF) then link it into a DLL. Our setup hits the infinite loop mentioned [here](54c05fa34e/torch/csrc/autograd/engine.cpp (L228)) because we build with `BUILD_SHARED_LIBS=OFF` but still link it all into a DLL at the end of the day.

This PR fixes the issue by changing the condition to guard on which windows runtime the build links against using the `CAFFE2_USE_MSVC_STATIC_RUNTIME` flag. `CAFFE2_USE_MSVC_STATIC_RUNTIME` defaults to ON when `BUILD_SHARED_LIBS=OFF`, so backwards compatibility is maintained.

I'm not entirely confident I understand the subtleties of the windows runtime versus linking setup, but this setup works for us and should not affect the existing builds.

Fixes https://github.com/pytorch/pytorch/issues/44470

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43532

Reviewed By: mrshenli

Differential Revision: D24053767

Pulled By: albanD

fbshipit-source-id: 1127fefe5104d302a4fc083106d4e9f48e50add8

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45672

This PR merges all quantization modes and will only expose the following top level functions:

```

prepare_fx

prepare_qat_fx

convert_fx

```

Test Plan:

Imported from OSS

Reviewed By: z-a-f

Differential Revision: D24053439

fbshipit-source-id: 03d545e26a36bc22a73349061b751eeb35171e64

Summary:

WIP: This PR is a work in progress on partitioning an fx graph module. _class partitioner_ generates partitions for the graph module. _class partition_ is a partition node in the partitions.

_Partitioner()_ : create a partitioner

_partition_graph(self, fx_module: GraphModule, devices: List[str]) -> None_:

use fx graph module and devices as the input and create partition_ids for each node inside the graph module

_dump_partition_DAG(self) -> None_:

print out the information about each partition, including its id, its backend type (what type of device this partition uses), all the nodes included in this partition, its parent partitions, children partitions, input nodes, and output nodes.

So far, only a single partition is considered, which means there is only one device with unlimited memory.

A unit test called _test_find_single_partition()_ is added to test if all nodes in the graph are marked for the only partition.
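The single-partition behavior can be sketched with a toy stand-in that works on plain node names rather than a real `GraphModule`. `ToyPartitioner` and its attributes are hypothetical and only mirror the method names described above; this is not the PR's implementation.

```python
from typing import Dict, List


class ToyPartitioner:
    """Hypothetical stand-in: assigns every node to one partition."""

    def __init__(self) -> None:
        self.node_to_partition: Dict[str, int] = {}
        self.partitions: Dict[int, List[str]] = {}
        self.backend: Dict[int, str] = {}

    def partition_graph(self, node_names: List[str], devices: List[str]) -> None:
        # Only the single-device case is handled, matching the WIP scope.
        if len(devices) != 1:
            raise NotImplementedError("sketch handles exactly one device")
        self.partitions = {0: list(node_names)}
        self.backend = {0: devices[0]}
        self.node_to_partition = {name: 0 for name in node_names}

    def dump_partition_dag(self) -> str:
        lines = []
        for pid, nodes in self.partitions.items():
            lines.append(f"partition {pid} on {self.backend[pid]}: {nodes}")
        return "\n".join(lines)


p = ToyPartitioner()
p.partition_graph(["x", "linear", "relu", "output"], ["dev_0"])
print(p.dump_partition_dag())
```

A check in the spirit of `test_find_single_partition()` would then assert that every node maps to partition 0.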

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45429

Reviewed By: izdeby

Differential Revision: D24026268

Pulled By: scottxu0730

fbshipit-source-id: 119d506f33049a59b54ad993670f4ba5d8e15b0b

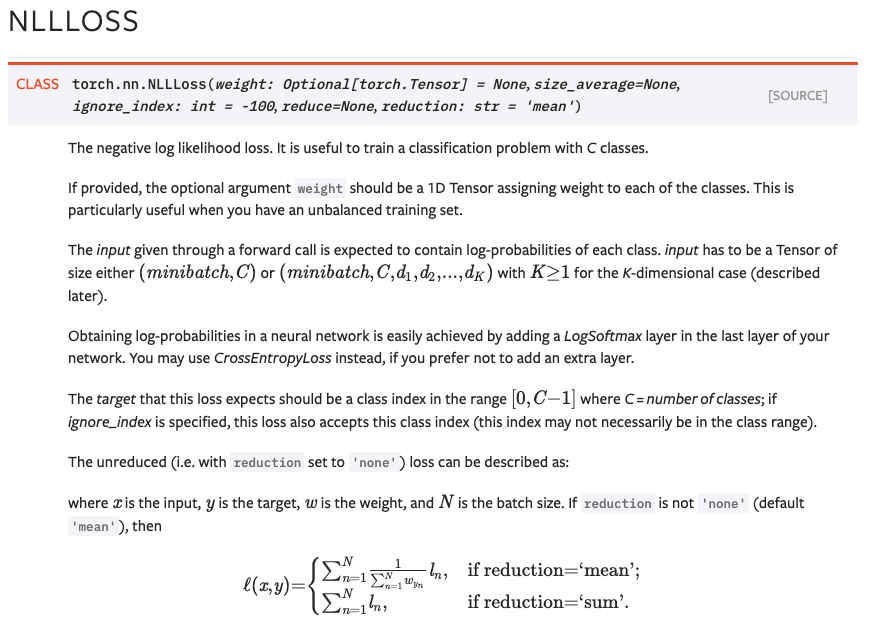

Summary:

Fixes https://github.com/pytorch/pytorch/issues/42855. Previously, back quotes weren't rendering correctly in

equations. This is because we were quoting things like `'mean'`. In

order to backquote properly in latex in text-mode, the back-quote needs

to be written as a back-tick.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45662

Test Plan:

- built docs locally and viewed the changes.

For NLLLoss (which is not the original module mentioned in the issue, but it has the same problem), we can see how the back quotes now render properly:

Reviewed By: glaringlee

Differential Revision: D24049880

Pulled By: zou3519

fbshipit-source-id: 61a1257994144549eb8f29f19d639aea962dfec0

Summary:

This PR adds support for complex-valued input for `torch.symeig`.

TODO:

- [ ] complex cuda tests raise `RuntimeError: _th_bmm_out not supported on CUDAType for ComplexFloat`

Update: Added xfailing tests for complex dtypes on CUDA. Once support for complex `bmm` is added these tests will work.

Fixes https://github.com/pytorch/pytorch/issues/45061.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45121

Reviewed By: mrshenli

Differential Revision: D24049649

Pulled By: anjali411

fbshipit-source-id: 2cd11f0e47d37c6ad96ec786762f2da57f25dac5

Summary:

Amp gradient unscaling is a great use case for multi tensor apply (in fact it's the first case I wrote it for). This PR adds an MTA unscale+infcheck functor. Really excited to have it for `torch.cuda.amp`. izdeby your interface was clean and straightforward to use, great work!

Labeled as bc-breaking because the native_functions.yaml exposure of unscale+infcheck changes from [`_amp_non_finite_check_and_unscale_` to `_amp_foreach_non_finite_check_and_unscale_`]( https://github.com/pytorch/pytorch/pull/44778/files#diff-f1e4b2c15de770d978d0eb77b53a4077L6289-L6293).

The PR also modifies Unary/Binary/Pointwise Functors to

- do ops' internal math in FP32 for FP16 or bfloat16 inputs, which improves precision ([and throughput, on some architectures!](https://docs.nvidia.com/cuda/cuda-c-programming-guide/index.html#arithmetic-instructions)) and has no downside for the ops we care about.

- accept an instantiated op functor rather than an op functor template (`template<class> class Op`). This allows calling code to pass lambdas.

Open question: As written now, the PR has MTA Functors take care of pre- and post-casting FP16/bfloat16 inputs to FP32 before running the ops. However, alternatively, the pre- and post-math casting could be deferred/written into the ops themselves, which gives them a bit more control. I can easily rewrite it that way if you prefer.
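For readers unfamiliar with the operation, the unscale+infcheck semantics can be sketched in plain Python over nested lists standing in for a list of gradient tensors. The function name `unscale_and_check` is hypothetical, and Python floats are used throughout, so the FP16-to-FP32 casting discussed above is not modeled; the real functor runs as a fused CUDA kernel.

```python
import math
from typing import List


def unscale_and_check(grads: List[List[float]], inv_scale: float) -> bool:
    """Multiply every gradient in place by inv_scale.

    Returns True if any input value was inf or NaN.
    """
    found_inf = False
    for tensor in grads:  # one pass over the whole tensor list
        for i, g in enumerate(tensor):
            if not math.isfinite(g):
                found_inf = True
            tensor[i] = g * inv_scale
    return found_inf


grads = [[2.0, 4.0], [8.0]]
print(unscale_and_check(grads, 0.5), grads)

bad = [[1.0, float("inf")]]
print(unscale_and_check(bad, 0.5))
```

The point of the multi tensor apply version is that this whole loop nest becomes a small number of kernel launches instead of one launch per tensor.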

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44778

Reviewed By: gchanan

Differential Revision: D23944102

Pulled By: izdeby

fbshipit-source-id: 22b25ccad5f69b413c77afe8733fa9cacc8e766d

Summary:

* Support propagating `dim_param` in ONNX by encoding as `ShapeSymbol` in `SymbolicShape` of outputs. If export is called with `dynamic_axes` provided, shape inference will start with these axes set as dynamic.

* Add new test file `test_pytorch_onnx_shape_inference.py`, reusing all test cases from `test_pytorch_onnx_onnxruntime.py`, but focusing on validating shapes for all nodes in the graph. This is not yet enabled in CI, since there are still a number of existing issues and corner cases to fix. By default the test runs only at opset 12.

* Bug fixes, such as div, _len, and peephole.cpp passes for PackPadded, and LogSoftmaxCrossEntropy.

* This PR depends on existing PRs such as #44332.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44920

Reviewed By: eellison

Differential Revision: D23958398

Pulled By: bzinodev

fbshipit-source-id: 00479d9bd19c867d526769a15ba97ec16d56e51d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45292

This PR merges all quantization modes and will only expose the following top level functions:

```

prepare_fx

prepare_qat_fx

convert_fx

```

Test Plan: Imported from OSS

Reviewed By: vkuzo

Differential Revision: D23913105

fbshipit-source-id: 4e335286d6de225839daf51d1df54322d52d68e5

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45343

Current default dynamic quant observer is not correct since we don't accumulate

min/max and we don't need to calculate qparams.

Test Plan: Imported from OSS

Reviewed By: supriyar

Differential Revision: D23933995

fbshipit-source-id: 3ff497c9f5f74c687e8e343ab9948d05ccbba09b

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/45586

Test Plan: The unit test has been softened to be less platform sensitive.

Reviewed By: mruberry

Differential Revision: D24025415

Pulled By: robieta

fbshipit-source-id: ee986933b984e736cf1525e1297de6b21ac1f0cf

Summary:

This is an attempt at refactoring `torch.distributed` implementation. Goal is to push Python layer's global states (like _default_pg) to C++ layer such that `torch.distributed` becomes more TorchScript friendly.

This PR adds the skeleton of C++ implementation, at the moment it is not included in any build (and won't be until method implementations are filled in). If you see any test failures related, feel free to revert.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45547

Reviewed By: izdeby

Differential Revision: D24024213

Pulled By: gmagogsfm

fbshipit-source-id: 2762767f63ebef43bf58e17f9447d53cf119f05f

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45585

I discovered this bug when I was trying to print the graph to a file. Turns out I had to close the file, but flushing should be a good safeguard in case other users forget.
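The same pitfall exists with Python file objects, which makes it easy to demonstrate. The sketch below is only an analogy using standard-library I/O (the helper name `write_and_measure` is made up); it compares how many bytes are visible on disk before the file is closed, with and without an explicit flush.

```python
import os
import tempfile


def write_and_measure(path, text, flush):
    """Write text, optionally flush, and return the on-disk size before close."""
    f = open(path, "w")
    try:
        f.write(text)
        if flush:
            f.flush()  # push Python's buffer to the OS
        return os.path.getsize(path)
    finally:
        f.close()


with tempfile.TemporaryDirectory() as tmp:
    text = "graph(%x : Tensor)"
    unflushed = write_and_measure(os.path.join(tmp, "a.txt"), text, flush=False)
    flushed = write_and_measure(os.path.join(tmp, "b.txt"), text, flush=True)
    print("bytes visible without flush:", unflushed)
    print("bytes visible with flush:", flushed)
```

With the flush, the full text is on disk even if the caller never closes the file, which is the safeguard described above.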

Test Plan:

Tested with and without flushing.

with P144064292

without P144064767

Reviewed By: mortzur

Differential Revision: D24023819

fbshipit-source-id: 39574b3615feb28e5b5939664c04ddfb1257706a

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45474

When batchnorm affine is set to false, weight and bias are set to None, which is not supported in this case. Added a fix to set weights to 1 and bias to 0 if they are not set.
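The fix can be illustrated with the standard conv + batchnorm folding formula written in plain Python. This is a sketch, not the fusion code from the PR: `fuse_conv_bn_params` is a hypothetical name, and each output channel's conv weights are represented as a flat list.

```python
import math
from typing import List, Optional, Tuple


def fuse_conv_bn_params(
    conv_w: List[List[float]],
    conv_b: Optional[List[float]],
    bn_mean: List[float],
    bn_var: List[float],
    bn_eps: float,
    bn_weight: Optional[List[float]] = None,
    bn_bias: Optional[List[float]] = None,
) -> Tuple[List[List[float]], List[float]]:
    """Fold batchnorm statistics into per-output-channel conv parameters."""
    n = len(conv_w)
    if conv_b is None:
        conv_b = [0.0] * n
    # affine=False leaves weight/bias as None: treat them as 1 and 0.
    if bn_weight is None:
        bn_weight = [1.0] * n
    if bn_bias is None:
        bn_bias = [0.0] * n
    fused_w, fused_b = [], []
    for c in range(n):
        scale = bn_weight[c] / math.sqrt(bn_var[c] + bn_eps)
        fused_w.append([w * scale for w in conv_w[c]])
        fused_b.append((conv_b[c] - bn_mean[c]) * scale + bn_bias[c])
    return fused_w, fused_b


w, b = fuse_conv_bn_params([[2.0, 4.0]], None, [1.0], [4.0], 0.0)
print(w, b)
```

Defaulting to 1 and 0 leaves the normalization `(x - mean) / sqrt(var + eps)` unchanged, which is exactly what `affine=False` means.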

Test Plan: Add unit test for testing fusing conv, batchnorm where batchnorm is in affine=False mode.

Reviewed By: z-a-f

Differential Revision: D23977080

fbshipit-source-id: 2782be626dc67553f3d27d8f8b1ddc7dea022c2a

Summary:

Export of embedding bag with dynamic list of offsets.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44693

Reviewed By: malfet

Differential Revision: D23831980

Pulled By: bzinodev

fbshipit-source-id: 3eaff1a0f20d1bcfb8039e518d78c491be381e1a

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45377

This PR adds a C++ implementation of the TripletMarginWithDistanceLoss, for which the Python implementation was introduced in PR #43680. It's based on PR #44072, but I'm resubmitting this to unlink it from Phabricator.

Test Plan: Imported from OSS

Reviewed By: izdeby

Differential Revision: D24003973

fbshipit-source-id: 2d9ada7260a6f27425ff2fdbbf623dad0fb79405

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44826

As described in https://github.com/pytorch/pytorch/issues/43690, there

is a need for DDP to be able to ignore certain parameters in the module (not

install allreduce hooks) for certain use cases. `find_unused_parameters` is

sufficient from a correctness perspective, but we can get better performance

with this upfront list if users know which params are unused, since we won't

have to traverse the autograd graph every iteration.

To enable this, we add a field `parameters_to_ignore` to DDP init and don't

pass in that parameter to reducer if that parameter is in the given list.
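The filtering step amounts to dropping named parameters that appear in the ignore list before they are handed to the reducer. A minimal sketch, with a hypothetical helper name and strings standing in for parameter tensors:

```python
from typing import Iterable, List, Tuple


def params_for_reducer(
    named_params: Iterable[Tuple[str, object]],
    parameters_to_ignore: Iterable[str],
) -> List[Tuple[str, object]]:
    """Keep only the parameters that should get allreduce hooks."""
    ignore = set(parameters_to_ignore)
    return [(name, p) for name, p in named_params if name not in ignore]


named = [("fc1.weight", "w1"), ("fc1.bias", "b1"), ("aux.weight", "w_aux")]
kept = params_for_reducer(named, ["aux.weight"])
print([name for name, _ in kept])
```

Because the decision is made once at construction time, no per-iteration traversal of the autograd graph is needed for the ignored parameters.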

ghstack-source-id: 113210109

Test Plan: Added unittest

Reviewed By: xw285cornell, mrshenli

Differential Revision: D23740639

fbshipit-source-id: a0411712a8b0b809b9c9e6da04bef2b955ba5314

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45461

This PR disables autograd for all C -> C, R -> C functions which are not included in the whitelist `GRADIENT_IMPLEMENTED_FOR_COMPLEX`. In practice, there will be a RuntimeError during forward computation when the outputs are differentiable:

```

>>> x=torch.randn(4, 4, requires_grad=True, dtype=torch.cdouble)

>>> x.pow(3)

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

RuntimeError: pow does not support automatic differentiation for outputs with complex dtype.

```

The implicit assumption here is that all the C -> R functions have correct backward definitions. So before merging this PR, the following functions must be tested and verified to have correct backward definitions:

`torch.abs` (updated in #39955 ), `torch.angle`, `torch.norm`, `torch.irfft`, `torch.istft`.
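The gating rule can be sketched as a simple membership check. Only the name `GRADIENT_IMPLEMENTED_FOR_COMPLEX` and the error message come from the description above; the whitelist entry and the helper `check_complex_autograd` are hypothetical placeholders, and the real check lives in generated autograd code.

```python
# Placeholder content: the real whitelist entries are not listed here.
GRADIENT_IMPLEMENTED_FOR_COMPLEX = {"example_supported_op"}


def check_complex_autograd(op_name: str, output_is_complex: bool,
                           output_requires_grad: bool) -> None:
    """Raise if a differentiable complex output comes from a non-whitelisted op."""
    if (output_is_complex and output_requires_grad
            and op_name not in GRADIENT_IMPLEMENTED_FOR_COMPLEX):
        raise RuntimeError(
            f"{op_name} does not support automatic differentiation "
            "for outputs with complex dtype."
        )


check_complex_autograd("example_supported_op", True, True)  # passes
check_complex_autograd("abs", False, True)  # C -> R output: not gated
try:
    check_complex_autograd("pow", True, True)
except RuntimeError as exc:
    print(exc)
```

Functions with real outputs pass through the check untouched, which is why their backward definitions need to be verified separately.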

Test Plan: Imported from OSS

Reviewed By: malfet

Differential Revision: D23998156

Pulled By: anjali411

fbshipit-source-id: 370eb07fe56ac84dd8e2233ef7bf3a3eb8aeb179

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45532

- updated documentation

- explicitly not supporting negative values for beta (previously the

result was incorrect)

- Removing default value for beta in the backwards function, since it's

only used internally by autograd (as per convention)

Test Plan: Imported from OSS

Reviewed By: gchanan

Differential Revision: D24002415

Pulled By: bdhirsh

fbshipit-source-id: 980c141019ec2d437b771ee11fc1cec4b1fcfb48

Summary:

This PR allows Timer to collect deterministic instruction counts for (some) snippets. Because of the intrusive nature of Valgrind (effectively replacing the CPU with an emulated one) we have to perform our measurements in a separate process. This PR writes a `.py` file containing the Timer's `setup` and `stmt`, and executes it within a `valgrind` subprocess along with a plethora of checks and error handling. There is still a bit of jitter around the edges due to the Python glue that I'm using, but the PyTorch signal is quite good and thus this provides a low friction way of getting signal. I considered using JIT as an alternative, but:

A) Python specific overheads (e.g. parsing) are important

B) JIT might do rewrites which would complicate measurement.

Consider the following bit of code, related to https://github.com/pytorch/pytorch/issues/44484:

```

from torch.utils._benchmark import Timer

counts = Timer(

"x.backward()",

setup="x = torch.ones((1,)) + torch.ones((1,), requires_grad=True)"

).collect_callgrind()

for c, fn in counts[:20]:

print(f"{c:>12} {fn}")

```

```

812800 ???:_dl_update_slotinfo

355600 ???:update_get_addr

308300 work/Python/ceval.c:_PyEval_EvalFrameDefault'2

304800 ???:__tls_get_addr

196059 ???:_int_free

152400 ???:__tls_get_addr_slow

138400 build/../c10/core/ScalarType.h:c10::typeMetaToScalarType(caffe2::TypeMeta)

126526 work/Objects/dictobject.c:_PyDict_LoadGlobal

114268 ???:malloc

101400 work/Objects/unicodeobject.c:PyUnicode_FromFormatV

85900 work/Python/ceval.c:_PyEval_EvalFrameDefault

79946 work/Objects/typeobject.c:_PyType_Lookup

72000 build/../c10/core/Device.h:c10::Device::validate()

70000 /usr/include/c++/8/bits/stl_vector.h:std::vector<at::Tensor, std::allocator<at::Tensor> >::~vector()

66400 work/Objects/object.c:_PyObject_GenericGetAttrWithDict

63000 ???:pthread_mutex_lock

61200 work/Objects/dictobject.c:PyDict_GetItem

59800 ???:free

58400 work/Objects/tupleobject.c:tupledealloc

56707 work/Objects/dictobject.c:lookdict_unicode_nodummy

```

Moreover, if we backport this PR to 1.6 (just copy the `_benchmark` folder) and load those counts as `counts_1_6`, then we can easily diff them:

```

print(f"Head instructions: {sum(c for c, _ in counts)}")

print(f"1.6 instructions: {sum(c for c, _ in counts_1_6)}")

count_dict = {fn: c for c, fn in counts}

for c, fn in counts_1_6:

_ = count_dict.setdefault(fn, 0)

count_dict[fn] -= c

count_diffs = sorted([(c, fn) for fn, c in count_dict.items()], reverse=True)

for c, fn in count_diffs[:15] + [["", "..."]] + count_diffs[-15:]:

print(f"{c:>8} {fn}")

```

```

Head instructions: 7609547

1.6 instructions: 6059648

169600 ???:_dl_update_slotinfo

101400 work/Objects/unicodeobject.c:PyUnicode_FromFormatV

74200 ???:update_get_addr

63600 ???:__tls_get_addr

46800 work/Python/ceval.c:_PyEval_EvalFrameDefault

33512 work/Objects/dictobject.c:_PyDict_LoadGlobal

31800 ???:__tls_get_addr_slow

31700 build/../aten/src/ATen/record_function.cpp:at::RecordFunction::RecordFunction(at::RecordScope)

28300 build/../torch/csrc/utils/python_arg_parser.cpp:torch::FunctionSignature::parse(_object*, _object*, _object*, _object**, bool)

27800 work/Objects/object.c:_PyObject_GenericGetAttrWithDict

27401 work/Objects/dictobject.c:lookdict_unicode_nodummy

24115 work/Objects/typeobject.c:_PyType_Lookup

24080 ???:_int_free

21700 work/Objects/dictobject.c:PyDict_GetItemWithError

20700 work/Objects/dictobject.c:PyDict_GetItem

...

-3200 build/../c10/util/SmallVector.h:at::TensorIterator::binary_op(at::Tensor&, at::Tensor const&, at::Tensor const&, bool)

-3400 build/../aten/src/ATen/native/TensorIterator.cpp:at::TensorIterator::resize_outputs(at::TensorIteratorConfig const&)

-3500 /usr/include/c++/8/x86_64-redhat-linux/bits/gthr-default.h:std::unique_lock<std::mutex>::unlock()

-3700 build/../torch/csrc/utils/python_arg_parser.cpp:torch::PythonArgParser::raw_parse(_object*, _object*, _object**)

-4207 work/Objects/obmalloc.c:PyMem_Calloc

-4500 /usr/include/c++/8/bits/stl_vector.h:std::vector<at::Tensor, std::allocator<at::Tensor> >::~vector()

-4800 build/../torch/csrc/autograd/generated/VariableType_2.cpp:torch::autograd::VariableType::add__Tensor(at::Tensor&, at::Tensor const&, c10::Scalar)

-5000 build/../c10/core/impl/LocalDispatchKeySet.cpp:c10::impl::ExcludeDispatchKeyGuard::ExcludeDispatchKeyGuard(c10::DispatchKey)

-5300 work/Objects/listobject.c:PyList_New

-5400 build/../torch/csrc/utils/python_arg_parser.cpp:torch::FunctionParameter::check(_object*, std::vector<pybind11::handle, std::allocator<pybind11::handle> >&)

-5600 /usr/include/c++/8/bits/std_mutex.h:std::unique_lock<std::mutex>::unlock()

-6231 work/Objects/obmalloc.c:PyMem_Free

-6300 work/Objects/listobject.c:list_repeat

-11200 work/Objects/listobject.c:list_dealloc

-28900 build/../torch/csrc/utils/python_arg_parser.cpp:torch::FunctionSignature::parse(_object*, _object*, _object**, bool)

```
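The diffing logic can be tried without Valgrind by feeding it toy data. The sketch below wraps the same idea in a hypothetical `diff_counts` helper; the `(count, function_name)` tuple layout follows the snippets above, and the numbers are made up.

```python
from typing import Dict, List, Tuple

Counts = List[Tuple[int, str]]


def diff_counts(new: Counts, old: Counts) -> Counts:
    """Return (new - old) instruction deltas per function, largest first."""
    delta: Dict[str, int] = {fn: c for c, fn in new}
    for c, fn in old:
        # Functions missing from `new` start at 0 and go negative.
        delta[fn] = delta.get(fn, 0) - c
    return sorted(((c, fn) for fn, c in delta.items()), reverse=True)


toy_head = [(300, "a"), (100, "b")]
toy_old = [(250, "a"), (120, "c")]
for c, fn in diff_counts(toy_head, toy_old):
    print(f"{c:>8} {fn}")
```

Positive entries are functions that cost more instructions at head, negative entries are functions that got cheaper or disappeared.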

Remaining TODOs:

* Include a timer in the generated script for cuda sync.

* Add valgrind to CircleCI machines and add a unit test.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44717

Reviewed By: soumith

Differential Revision: D24010742

Pulled By: robieta

fbshipit-source-id: df6bc765f8efce7193893edba186cd62b4b23623

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45482

Working on some models that need these ops on lite interpreter.

Test Plan: locally build and load/run the TS model without problem.

Reviewed By: iseeyuan

Differential Revision: D23906581

fbshipit-source-id: 01b9de2af2046296165892b837bc14a7e5d59b4e

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45520

With this change `Load`s and `Store`s no longer accept `Placeholder`s in

their constructor and `::make` functions and can only be built with

`Buf`.

`Placeholder` gets its own `store`, `load`, `storeWithMask`, and

`loadWithMask` method for more convenient construction.

Test Plan: Imported from OSS

Reviewed By: glaringlee

Differential Revision: D23998789

Pulled By: ZolotukhinM

fbshipit-source-id: 3fe018e00c1529a563553b2b215f403b34aea912

Summary:

This might be an alternative to reverting https://github.com/pytorch/pytorch/issues/45396 .

The obvious rough edge is that I'm not really seeing the work group limits that TensorExpr produces.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45506

Reviewed By: zhangguanheng66

Differential Revision: D23991410

Pulled By: Krovatkin

fbshipit-source-id: 11d3fc4600e4bffb1d1192c6b8dd2fe22c1e064e

Summary:

This PR adds a new GraphManipulation library for operating on the GraphModule nodes.

It also adds an implementation of replace_target_nodes_with, which replaces all nodes in the GraphModule or a specific op/target with a new specified op/target. An example use of this function would be replacing a generic operator with an optimized operator for specific sizes and shapes.
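As a rough illustration of the replacement pass, here is a toy version over a list of simplified nodes. `ToyNode` and the argument list of this `replace_target_nodes_with` are assumptions made for the sketch; the real function operates on a `GraphModule` and its signature may differ.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ToyNode:
    name: str
    op: str      # e.g. "call_function"
    target: str  # e.g. "generic_matmul"


def replace_target_nodes_with(nodes: List[ToyNode], old_op: str, old_target: str,
                              new_op: str, new_target: str) -> int:
    """Rewrite every node matching (old_op, old_target); return how many changed."""
    changed = 0
    for node in nodes:
        if node.op == old_op and node.target == old_target:
            node.op, node.target = new_op, new_target
            changed += 1
    return changed


graph = [
    ToyNode("x", "placeholder", "x"),
    ToyNode("mm_1", "call_function", "generic_matmul"),
    ToyNode("mm_2", "call_function", "generic_matmul"),
]
n = replace_target_nodes_with(graph, "call_function", "generic_matmul",
                              "call_function", "optimized_matmul")
print(n, [node.target for node in graph])
```

Nodes that do not match both the op and the target are left untouched, so the pass is safe to run on graphs that contain no candidates.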

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44775

Reviewed By: jamesr66a

Differential Revision: D23874561

Pulled By: gcatron

fbshipit-source-id: e1497cd11e0bbbf1fabdf137d65c746248998e0b

Summary:

Per feedback in the recent design review. Also tweaks the documentation to clarify what "deterministic" means and adds a test for the behavior.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45410

Reviewed By: ngimel

Differential Revision: D23974988

Pulled By: mruberry

fbshipit-source-id: e48307da9c90418fc6834fbd67b963ba2fe0ba9d

Summary:

Updated `cholesky_backward` to work correctly for complex input.

Note that the current implementation gives the conjugate of what JAX would return. anjali411 is that the correct thing to do?

Ref. https://github.com/pytorch/pytorch/issues/44895

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45267

Reviewed By: bwasti

Differential Revision: D23975269

Pulled By: anjali411

fbshipit-source-id: 9908b0bb53c411e5ad24027ff570c4f0abd451e6

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45488

model_name logging was broken by the recent change that assigns the method name to the module name. This diff fixes it.

ghstack-source-id: 113103942

Test Plan:

made sure that now the model_name is logged from module_->name().

verified with one model which does not contain the model metadata, and the model_name field is logged as below:

09-28 21:59:30.065 11530 12034 W module.cpp: TESTINGTESTING run() module = __torch__.Model

09-28 21:59:30.065 11530 12034 W module.cpp: TESTINGTESTING metadata does not have model_name assigning to __torch__.Model

09-28 21:59:30.066 11530 12034 W MobileModuleQPLObserver.cpp: TESTINGTESTING onEnterRunMethod log model_name = __torch__.Model

09-28 21:59:30.066 11530 12034 W MobileModuleQPLObserver.cpp: TESTINGTESTING onEnterRunMethod log method_name = labels

09-28 21:59:30.068 11530 12034 W MobileModuleQPLObserver.cpp: TESTINGTESTING onExitRunMethod()

Reviewed By: linbinyu

Differential Revision: D23984165

fbshipit-source-id: 5b00f50ea82106b695c2cee14029cb3b2e02e2c8

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45261

**Summary**

This commit enables `unused` syntax for ignoring

properties. Ignoring properties is more intuitive with this feature enabled.

`ignore` is not supported because class type properties cannot be

executed in Python (because they exist only as TorchScript types) like

an `ignored` function and module properties that cannot be scripted

are not added to the `ScriptModule` wrapper so that they

may execute in Python.

**Test Plan**

This commit updates the existing unit tests for class type and module

properties to test properties ignored using `unused`.

Test Plan: Imported from OSS

Reviewed By: navahgar, Krovatkin, mannatsingh

Differential Revision: D23971881

Pulled By: SplitInfinity

fbshipit-source-id: 8d3cc1bbede7753d6b6f416619e4660c56311d33

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45479

Add a top level boolean attribute to the model called mobile_optimized that is set to true if it is optimized.

Test Plan: buck test //caffe2/test:mobile passes

Reviewed By: kimishpatel

Differential Revision: D23956728

fbshipit-source-id: 79c5931702208b871454319ca2ab8633596b1eb8

Summary:

Fix `torch._C._autocast_*_nesting` declarations in __init__.pyi

Fix iterable constructor logic: not every iterable can be constructed using `type(val)(val)` trick, for example it would not work for `val=range(10)` although `isinstance(val, Iterable)` is True

Change optional resolution logic to meet mypy expectations
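The `range` case from the iterable-constructor fix is easy to reproduce in plain Python. The fallback in `rebuild_iterable` is a hypothetical illustration of one way to handle it, not necessarily what the PR does.

```python
from collections.abc import Iterable


def rebuild_iterable(val):
    """Try to rebuild val with its own type; fall back to a list."""
    try:
        return type(val)(val)
    except TypeError:
        # e.g. range(range(10)) is not a valid constructor call
        return list(val)


val = range(10)
print(isinstance(val, Iterable))
try:
    type(val)(val)
except TypeError as exc:
    print("type(val)(val) failed:", exc)

print(rebuild_iterable((1, 2)), rebuild_iterable(range(3)))
```

Lists and tuples round-trip through their own constructors, while `range` needs a different path even though it passes the `Iterable` check.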

Fixes https://github.com/pytorch/pytorch/issues/45436

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45480

Reviewed By: walterddr

Differential Revision: D23982822

Pulled By: malfet

fbshipit-source-id: 6418a28d04ece1b2427dcde4b71effb67856a872

Summary:

This PR makes the deprecation warnings for existing fft functions more prominent and makes the torch.stft deprecation warning consistent with our current deprecation planning.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45409

Reviewed By: ngimel

Differential Revision: D23974975

Pulled By: mruberry

fbshipit-source-id: b90d8276095122ac3542ab625cb49b991379c1f8

Summary:

PR opened just to run the CI tests

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44465

Reviewed By: ngimel

Differential Revision: D23907565

Pulled By: mruberry

fbshipit-source-id: 620661667877f1e9a2bab17d19988e2dc986fc0f

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44846

The save function traverses the model state dict to pick out the observer stats.

The load function traverses the module hierarchy to load the state dict into module attributes depending on observer type.

Test Plan:

python test/test_quantization.py TestQuantizeFx.test_save_observer_state_dict

Imported from OSS

Reviewed By: raghuramank100

Differential Revision: D23746821

fbshipit-source-id: 05c571b62949a2833602d736a81924d77e7ade55

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45390

Tensor objects should always refer to their Function's bufs. Currently

we never create a Tensor with a buffer different from that of its function,

but having it in two places seems incorrect and dangerous.

Differential Revision: D23952865

Test Plan: Imported from OSS

Reviewed By: nickgg

Pulled By: ZolotukhinM

fbshipit-source-id: e63fc26d7078427514649d9ce973b74ea635a94a

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45388

Classes defined in these files are closely related, so it is reasonable

to have them all in one file. The change is purely a code move.

Differential Revision: D23952867

Test Plan: Imported from OSS

Reviewed By: nickgg

Pulled By: ZolotukhinM

fbshipit-source-id: 12cfaa968bdfc4dff00509e34310a497c7b59155

Summary:

In profiler, cuda did not report self time, so for composite functions there was no way to determine which function is really taking time. In addition, "total cuda time" reported was frequently more than total wallclock time. This PR adds "self CUDA time" in profiler, and computes total cuda time based on self cuda time, similar to how it's done for CPU. Also, slight formatting changes to make table more compact. Before:

```

--------------------  ----------------  ----------------  ----------------  ----------------  ----------------  ----------------  ----------------  ----------------  ----------------
Name                  Self CPU total %    Self CPU total       CPU total %         CPU total      CPU time avg      CUDA total %        CUDA total     CUDA time avg   Number of Calls
--------------------  ----------------  ----------------  ----------------  ----------------  ----------------  ----------------  ----------------  ----------------  ----------------
aten::matmul                     0.17%         890.805us            99.05%         523.401ms           5.234ms            49.91%         791.184ms           7.912ms               100
aten::mm                        98.09%         518.336ms            98.88%         522.511ms           5.225ms            49.89%         790.885ms           7.909ms               100
aten::t                          0.29%           1.530ms             0.49%           2.588ms          25.882us             0.07%           1.058ms          10.576us               100
aten::view                       0.46%           2.448ms             0.46%           2.448ms          12.238us             0.06%         918.936us           4.595us               200
aten::transpose                  0.13%         707.204us             0.20%           1.058ms          10.581us             0.03%         457.802us           4.578us               100
aten::empty                      0.14%         716.056us             0.14%         716.056us           7.161us             0.01%         185.694us           1.857us               100
aten::as_strided                 0.07%         350.935us             0.07%         350.935us           3.509us             0.01%         156.380us           1.564us               100
aten::stride                     0.65%           3.458ms             0.65%           3.458ms          11.527us             0.03%         441.258us           1.471us               300
--------------------  ----------------  ----------------  ----------------  ----------------  ----------------  ----------------  ----------------  ----------------  ----------------

Self CPU time total: 528.437ms

CUDA time total: 1.585s

Recorded timeit time: 789.0814 ms

```

Note recorded timeit time (with proper cuda syncs) is 2 times smaller than "CUDA time total" reported by profiler

After

```

--------------------  -------------  -------------  -------------  -------------  -------------  -------------  -------------  -------------  -------------  -------------
Name                     Self CPU %       Self CPU    CPU total %      CPU total   CPU time avg      Self CUDA    Self CUDA %     CUDA total  CUDA time avg     # of Calls
--------------------  -------------  -------------  -------------  -------------  -------------  -------------  -------------  -------------  -------------  -------------
aten::matmul                  0.15%      802.716us         99.06%      523.548ms        5.235ms      302.451us          0.04%      791.151ms        7.912ms            100
aten::mm                     98.20%      519.007ms         98.91%      522.745ms        5.227ms      790.225ms         99.63%      790.848ms        7.908ms            100
aten::t                       0.27%        1.406ms          0.49%        2.578ms       25.783us      604.964us          0.08%        1.066ms       10.662us            100
aten::view                    0.45%        2.371ms          0.45%        2.371ms       11.856us      926.281us          0.12%      926.281us        4.631us            200
aten::transpose               0.15%      783.462us          0.22%        1.173ms       11.727us      310.016us          0.04%      461.282us        4.613us            100
aten::empty                   0.11%      591.603us          0.11%      591.603us        5.916us      176.566us          0.02%      176.566us        1.766us            100
aten::as_strided              0.07%      389.270us          0.07%      389.270us        3.893us      151.266us          0.02%      151.266us        1.513us            100
aten::stride                  0.60%        3.147ms          0.60%        3.147ms       10.489us      446.451us          0.06%      446.451us        1.488us            300
--------------------  -------------  -------------  -------------  -------------  -------------  -------------  -------------  -------------  -------------  -------------

Self CPU time total: 528.498ms

CUDA time total: 793.143ms

Recorded timeit time: 788.9832 ms

```
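The relationship between self time and total time can be sketched with a small event tree. The `Event` class and helper names are hypothetical and the timings are made up; the sketch only shows the bookkeeping (self time = own total minus the children's totals), and the double counting it demonstrates is one plausible reading of why the old "CUDA time total" exceeded wallclock time.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Event:
    name: str
    cuda_time_total: float  # includes time spent in children
    children: List["Event"] = field(default_factory=list)


def self_cuda_time(event: Event) -> float:
    """Time attributable to this event alone."""
    return event.cuda_time_total - sum(c.cuda_time_total for c in event.children)


def walk(event: Event):
    yield event
    for child in event.children:
        yield from walk(child)


mm = Event("aten::mm", 9.5)
view = Event("aten::view", 0.25)
matmul = Event("aten::matmul", 10.0, [mm, view])

naive_total = sum(e.cuda_time_total for e in walk(matmul))  # double counts children
self_total = sum(self_cuda_time(e) for e in walk(matmul))
print(self_cuda_time(matmul), naive_total, self_total)
```

Summing self times gives back the root's total exactly once, whereas summing totals counts nested work again at every level of the call tree.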

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45209

Reviewed By: zou3519

Differential Revision: D23925491

Pulled By: ngimel

fbshipit-source-id: 7f9c49238d116bfd2db9db3e8943355c953a77d0

Summary:

Inline pytorch into wrapper, which is especially helpful in combination

with dead code elimination to reduce IR size and compilation times when

a lot of parameters are unused.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45445

Test Plan: CI

Reviewed By: ZolotukhinM

Differential Revision: D23969009

Pulled By: asuhan

fbshipit-source-id: a21509d07e4c130b6aa6eae5236bb64db2748a3d

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43612

**Summary**

This commit modifies the `torch._C._jit_to_backend` function so that it

accepts `ScriptModules` as inputs. It already returns `ScriptModules`

(as opposed to C++ modules), so this makes sense and makes the API more

intuitive.

**Test Plan**

Continuous integration, which includes unit tests and out-of-tree tests

for custom backends.

**Fixes**

This commit fixes #41432.

Test Plan: Imported from OSS

Reviewed By: suo, jamesr66a

Differential Revision: D23339854

Pulled By: SplitInfinity

fbshipit-source-id: 08ecef729c4e1e6bddf3f483276947fc3559ea88

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45280

Performance is unchanged on CPU, and on CUDA it is only 1-1.05x slower. This change is necessary for the future nan ops, including nan(min|max|median).

Test Plan: Imported from OSS

Reviewed By: gchanan

Differential Revision: D23908796

Pulled By: heitorschueroff

fbshipit-source-id: c2b57acbe924cfa59fbd85216811f29f4af05088

Summary:

Stumbled upon a little gem in the audio conversion for `SummaryWriter.add_audio()`: two Python `for` loops to convert a float array to little-endian int16 samples. On my machine, this took 35 seconds for a 30-second 22.05 kHz excerpt. The same can be done directly in numpy in 1.65 milliseconds. (No offense, I'm glad that the functionality was there!)

Would also be ready to extend this to support stereo waveforms, or should this become a separate PR?
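
The vectorized conversion described above can be sketched as follows. This is a minimal illustration, not the code from the PR: the function names, the clipping step, and the 32767 scale factor are assumptions chosen for the example. A loop-based reference is included only to show that both produce the same bytes.

```python
import struct

import numpy as np


def float_to_int16_bytes(samples):
    """Convert float samples in [-1, 1] to little-endian int16 bytes."""
    arr = np.asarray(samples, dtype=np.float64)
    # Clip first so out-of-range values cannot wrap around in int16.
    clipped = np.clip(arr, -1.0, 1.0)
    # "<i2" is NumPy's dtype string for little-endian 16-bit signed integers.
    return (clipped * 32767.0).astype("<i2").tobytes()


def float_to_int16_bytes_loop(samples):
    """Reference version with a Python loop, for comparison only."""
    out = bytearray()
    for s in samples:
        s = max(-1.0, min(1.0, float(s)))
        out += struct.pack("<h", int(s * 32767.0))
    return bytes(out)
```

Both versions truncate toward zero when converting to integers, so they agree byte for byte. The NumPy version does the work in a handful of array operations instead of one Python iteration per sample.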

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44201

Reviewed By: J0Nreynolds

Differential Revision: D23831002

Pulled By: edward-io

fbshipit-source-id: 5c8f1ac7823d1ed41b53c4f97ab9a7bac33ea94b

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45214

In verbose mode, the package exporter will produce an HTML visualization

of dependencies of a module to make it easier to trim out unneeded code,

or debug inclusion of things that cannot be exported.

Test Plan: Imported from OSS

Reviewed By: suo

Differential Revision: D23873525

Pulled By: zdevito

fbshipit-source-id: 6801991573d8dd5ab8c284e09572b36a35e1e5a4

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45401

Added a DeleteKey API for the TCP Store

ghstack-source-id: 112997162

Test Plan:

Modified the existing get/set test to use delete. Verified that the

correct keys were deleted and that the numKeys API returned the right values
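
The shape of the test described above can be sketched with a toy in-memory store. This is not the TCPStore API: the class `InMemoryStore` and its method names `delete_key` and `num_keys` are hypothetical stand-ins, and the real store works over TCP rather than a local dictionary.

```python
class InMemoryStore:
    """Hypothetical key-value store used only to illustrate the test flow."""

    def __init__(self):
        self._data = {}

    def set(self, key, value):
        self._data[key] = value

    def get(self, key):
        return self._data[key]

    def delete_key(self, key):
        """Remove key and report whether it was present."""
        if key in self._data:
            del self._data[key]
            return True
        return False

    def num_keys(self):
        return len(self._data)


store = InMemoryStore()
store.set("key0", b"value0")
store.set("key1", b"value1")
# Deleting an existing key succeeds and shrinks the key count.
deleted = store.delete_key("key0")
# Deleting an absent key is reported as a no-op in this sketch.
deleted_again = store.delete_key("key0")
```

Returning a boolean from the delete call is a design choice of this sketch. It lets a test assert both that the right key went away and that the key count changed by exactly one.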

Reviewed By: mrshenli

Differential Revision: D23955730

fbshipit-source-id: 5c9f82be34ff4521c59f56f8d9c1abf775c67f9f

Summary:

Recent changes to the seq_num correlation behavior in profiler (PR https://github.com/pytorch/pytorch/issues/42565) have changed the behavior of emit_nvtx(record_shapes=True), which no longer prints the name of the operator properly.

This PR dumps out the name in roctx traces irrespective of the assigned sequence number, for ROCm only.

cc: jeffdaily sunway513

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45229

Reviewed By: zou3519

Differential Revision: D23932902

Pulled By: albanD

fbshipit-source-id: c782667ff002b70b51f1cc921afd1b1ac533b39d

Summary:

This PR cleans up some of the rough edges around `Timer` and `Compare`:

* Moves `Measurement` to be dataclass based

* Adds a bunch of type annotations. MyPy is now happy.

* Allows missing entries in `Compare`. This is one of the biggest usability issues with `Compare` right now, both from an API perspective and because the current failure mode is really unpleasant.

* Greatly expands the testing of `Compare`
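
To illustrate what "dataclass based" and "allows missing entries" can look like in practice, here is a toy sketch. It is not the benchmark utility's implementation: `ToyMeasurement`, `build_table`, and the field names are hypothetical and exist only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToyMeasurement:
    """Hypothetical stand-in for a single timing result."""
    row: str
    column: str
    median_us: float


def build_table(measurements, missing=""):
    """Arrange results into rows and columns, leaving gaps where data is absent."""
    rows = sorted({m.row for m in measurements})
    cols = sorted({m.column for m in measurements})
    lookup = {(m.row, m.column): m.median_us for m in measurements}
    table = []
    for r in rows:
        cells = [
            f"{lookup[(r, c)]:.1f}" if (r, c) in lookup else missing
            for c in cols
        ]
        table.append([r] + cells)
    return cols, table


results = [
    ToyMeasurement("add", "cpu", 1.5),
    ToyMeasurement("add", "cuda", 0.5),
    ToyMeasurement("mul", "cpu", 2.0),  # no "cuda" entry for "mul"
]
columns, table = build_table(results)
```

A missing (row, column) pair becomes an empty cell instead of raising an error, which is the usability point the list above makes.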

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45361

Test Plan: Changes to Timer are covered under existing tests, changes to `Compare` are covered by the expanded `test_compare` method.

Reviewed By: bwasti

Differential Revision: D23966816

Pulled By: robieta

fbshipit-source-id: 826969f73b42f72fa35f4de3c64d0988b61474cd

Summary:

Export of view op with dynamic input shape is broken when using tensors with a 0-dim.

This fix removes symbolic use of static input size to fix this issue.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43558

Reviewed By: ailzhang

Differential Revision: D23965090

Pulled By: bzinodev

fbshipit-source-id: 628e9d7ee5d53375f25052340ca6feabf7ba7c53

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45291

It's not necessary; you can just check whether the dtype is integral.

Test Plan: Imported from OSS

Reviewed By: albanD

Differential Revision: D23911963

Pulled By: gchanan

fbshipit-source-id: 230139e1651eb76226f4095e31068dded30e03e8

Summary:

As per title. Fixes [#38948](https://github.com/pytorch/pytorch/issues/38948). Therein you can find some blueprints for the algorithm being used in this PR.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43002

Reviewed By: zou3519

Differential Revision: D23931326

Pulled By: albanD

fbshipit-source-id: e6994af70d94145f974ef87aa5cea166d6deff1e

Summary:

Changes the deprecation of norm to a docs deprecation, since PyTorch components still rely on norm and some behavior, like automatically flattening tensors, may need to be ported to torch.linalg.norm. The documentation is also updated to clarify that torch.norm and torch.linalg.norm are distinct.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45415

Reviewed By: ngimel

Differential Revision: D23958252

Pulled By: mruberry

fbshipit-source-id: fd54e807c59a2655453a6bcd9f4073cb2c12e8ac

Summary:

Fix a couple of issues with scripting inplace indexing in prepare_inplace_ops_for_onnx pass.

1- Tracing index copy (such as cases like x[1:3] = data) already applies broadcasting on rhs if needed. The broadcasting node (aten::expand) is missing in scripting cases.

2- Inplace indexing with ellipsis (aten::copy_) is replaced with aten::index_put and then handled with slice+select in this pass.

Support for negative indices for this op added.
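
The two ideas above, expanding the right-hand side explicitly before the copy and normalizing negative indices, can be illustrated with a NumPy analogue. This is only a sketch of the semantics, not the ONNX pass itself. The function name is hypothetical, and `np.broadcast_to` plays the role that `aten::expand` plays in the graph.

```python
import numpy as np


def index_copy_with_explicit_expand(x, start, stop, data):
    """Return a copy of x with x[start:stop] set to data, expanded explicitly."""
    dim = x.shape[0]
    # Normalize negative indices to their non-negative equivalents.
    if start < 0:
        start += dim
    if stop < 0:
        stop += dim
    target_shape = x[start:stop].shape
    # Make the broadcast an explicit step instead of relying on the assignment.
    expanded = np.broadcast_to(np.asarray(data, dtype=x.dtype), target_shape)
    out = x.copy()
    out[start:stop] = expanded
    return out


x = np.zeros((4, 3))
out = index_copy_with_explicit_expand(x, 1, 3, [1.0, 2.0, 3.0])
out_negative = index_copy_with_explicit_expand(x, -3, -1, [1.0, 2.0, 3.0])
```

Here `-3` and `-1` on a dimension of size 4 normalize to `1` and `3`, so both calls write the same two rows.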

Shape inference is also enabled for scripting tests using new JIT API.

A few more tests are enabled for scripting.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44351

Reviewed By: ezyang

Differential Revision: D23880267

Pulled By: bzinodev

fbshipit-source-id: 78b33444633eb7ae0fbabc7415e3b16001f5207f

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45143

This PR prevents freezing from cleaning up a submodule when the user requests to

preserve a submodule.

Test Plan: Imported from OSS

Reviewed By: eellison

Differential Revision: D23844969

Pulled By: bzinodev

fbshipit-source-id: 80e6db3fc12460d62e634ea0336ae2a3551c2151

Summary:

In the ONNX NegativeLogLikelihoodLoss specification, ignore_index is optional and has no default value.

Therefore, when converting the nll op to ONNX, we need to set the ignore_index attribute even if it is not specified (e.g. ignore_index=-100).
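
To see why the attribute must always be set, the sketch below shows how a mean-reduced NLL loss skips targets equal to `ignore_index`. It is a plain-Python illustration under stated assumptions, not the exporter code: the function name is hypothetical, and returning 0.0 when every target is ignored is a choice of this sketch only. The default of -100 mirrors the example value in the text above.

```python
def nll_loss(log_probs, targets, ignore_index=-100):
    """Mean negative log-likelihood over targets that are not ignored."""
    total = 0.0
    count = 0
    for row, target in zip(log_probs, targets):
        if target == ignore_index:
            # Ignored targets contribute neither to the sum nor to the count.
            continue
        total -= row[target]
        count += 1
    return total / count if count else 0.0


log_probs = [[-1.0, -2.0], [-3.0, -0.5]]
loss_all = nll_loss(log_probs, [0, 1])
loss_ignored = nll_loss(log_probs, [1, -100])
```

If the exported graph omitted the attribute, a target of -100 would be treated as an ordinary class index rather than skipped, so the exported model could diverge from the PyTorch result.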

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44816

Reviewed By: ezyang

Differential Revision: D23880354

Pulled By: bzinodev

fbshipit-source-id: d0bdd58d0a4507ed9ce37133e68533fe6d1bdf2b

Summary:

Optimize the export_onnx API to reduce string and model proto exchange in export.cpp.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44332

Reviewed By: bwasti, eellison

Differential Revision: D23880129

Pulled By: bzinodev

fbshipit-source-id: 1d216d8f710f356cbba2334fb21ea15a89dd16fa

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44419

Closes https://github.com/pytorch/pytorch/issues/39969

This PR adds support for propagation of input shapes over the wire when the profiler is invoked with `record_shapes=True` over RPC. Previously, we did not respect this argument.

This is done by saving the shapes as an ivalue list and recovering it as the type expected (`std::vector<std::vector<int>>` on the client). Test is added to ensure that remote ops have the same `input_shapes` as if the op were run locally.

ghstack-source-id: 112977899

Reviewed By: pritamdamania87

Differential Revision: D23591274

fbshipit-source-id: 7cf3b2e8df26935ead9d70e534fc2c872ccd6958

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/44967

When enabling the profiler on the server, if it is a different machine it may

not have CUDA while the caller does. Previously we would crash in this case; now we

fall back to CPU and log a warning.
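
The fallback logic can be sketched as below. This is a hypothetical illustration, not the RPC profiler code: the function name, the string mode values, and the warning text are all assumptions.

```python
import logging

logger = logging.getLogger(__name__)


def resolve_profiler_mode(requested_mode, cuda_available):
    """Pick the profiler mode to use on this machine.

    Falls back to "cpu" with a warning when "cuda" was requested by the
    caller but CUDA is not available locally.
    """
    if requested_mode == "cuda" and not cuda_available:
        logger.warning(
            "CUDA profiling was requested but CUDA is not available here; "
            "falling back to CPU profiling."
        )
        return "cpu"
    return requested_mode
```

Degrading to CPU keeps the remote call alive and still returns CPU-side timings, while the warning leaves a record of why CUDA data is missing.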

ghstack-source-id: 112977906

Test Plan: CI

Reviewed By: pritamdamania87

Differential Revision: D23790729

fbshipit-source-id: dc6eba172b7e666842d54553f52a6b9d5f0a5362

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43963

Added a DeleteKey API for the TCP Store

ghstack-source-id: 112939762

Test Plan:

Modified the existing get/set test to use delete. Verified that the

correct keys were deleted and that the numKeys API returned the right values

Reviewed By: jiayisuse

Differential Revision: D23009117

fbshipit-source-id: 1a0d95b43d79e665a69b2befbaa059b2b50a1f66

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/43962

TCPStore needs a getNumKeys API for our logging needs.

ghstack-source-id: 112939761

Test Plan: Adding tests to C++ Store Tests

Reviewed By: pritamdamania87

Differential Revision: D22985085

fbshipit-source-id: 8a0d286fbd6fd314dcc997bae3aad0e62b51af83

Summary:

This PR adds the get_all_users_of function. The function returns all the users of a specific node. A unit test is also added.
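
As a rough illustration of the idea, the users of a node are the nodes that take it as an input. The sketch below uses a toy graph representation (a dict from node name to its input names) and a hypothetical helper `get_users_of`. It is not the function added by this PR, whose signature is not shown here, and it returns direct users only.

```python
def get_users_of(graph_inputs, target):
    """Return the names of nodes that consume `target` as an input.

    `graph_inputs` maps each node name to the list of its input node names.
    Results follow the insertion order of the mapping.
    """
    return [name for name, inputs in graph_inputs.items() if target in inputs]


# Toy graph: a = f(x), b = g(x, a), c = h(a)
graph = {
    "x": [],
    "a": ["x"],
    "b": ["x", "a"],
    "c": ["a"],
}
users_of_x = get_users_of(graph, "x")
users_of_c = get_users_of(graph, "c")
```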

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45216

Reviewed By: ezyang

Differential Revision: D23883572

Pulled By: scottxu0730

fbshipit-source-id: 3eb68a411c3c6db39ed2506c9cb7bb7337520ee4

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/45221